Adaptive and Balanced Re-initialization for Long-timescale Continual Test-time Domain Adaptation

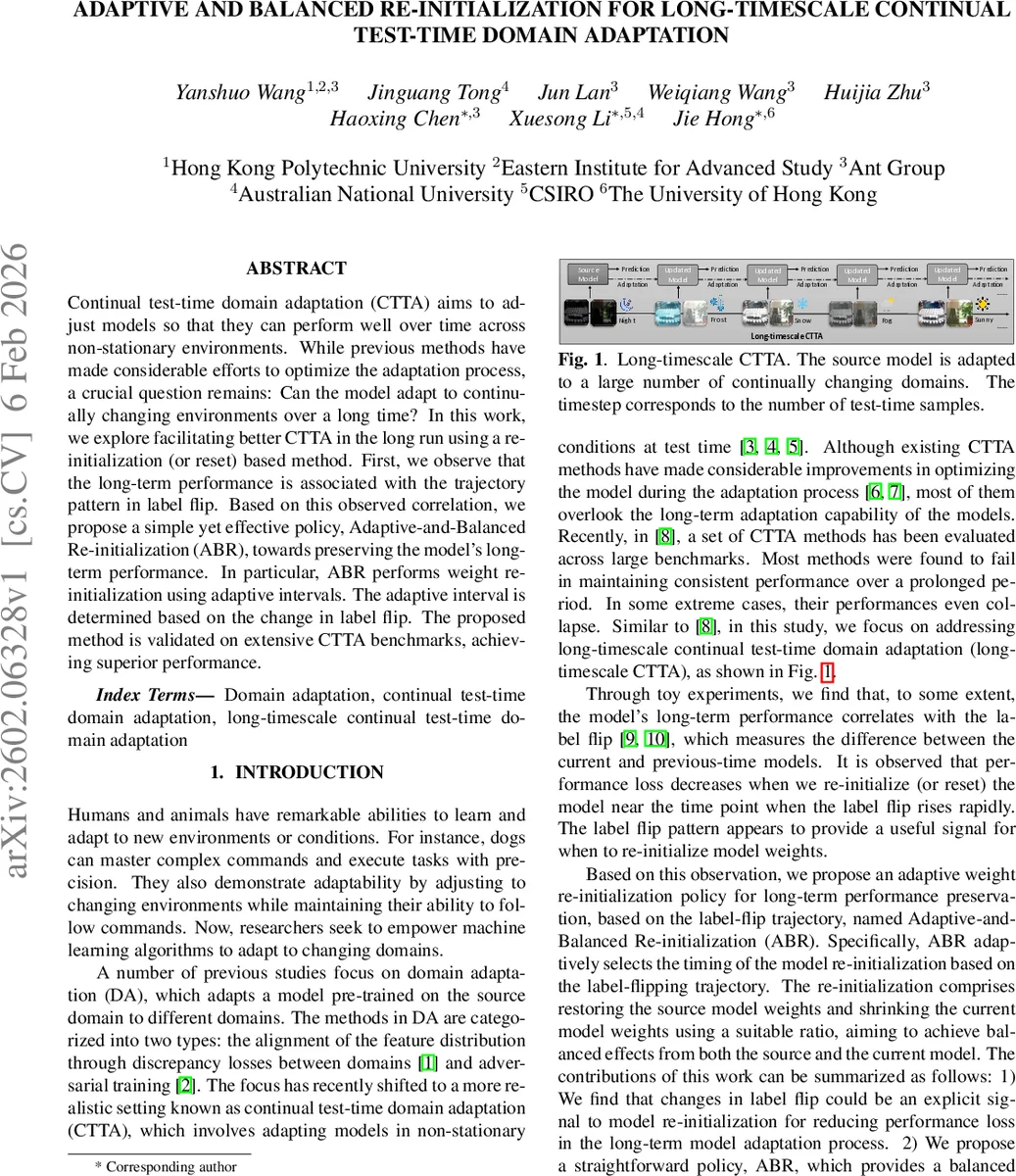

Continual test-time domain adaptation (CTTA) aims to adjust models so that they can perform well over time across non-stationary environments. While previous methods have made considerable efforts to optimize the adaptation process, a crucial question remains: Can the model adapt to continually changing environments over a long time? In this work, we explore facilitating better CTTA in the long run using a re-initialization (or reset) based method. First, we observe that the long-term performance is associated with the trajectory pattern in label flip. Based on this observed correlation, we propose a simple yet effective policy, Adaptive-and-Balanced Re-initialization (ABR), towards preserving the model’s long-term performance. In particular, ABR performs weight re-initialization using adaptive intervals. The adaptive interval is determined based on the change in label flip. The proposed method is validated on extensive CTTA benchmarks, achieving superior performance.

💡 Research Summary

The paper addresses a critical gap in continual test‑time domain adaptation (CTTA): the degradation of model performance over long adaptation horizons when the test distribution changes continuously. While prior CTTA works have focused on improving short‑term adaptation using entropy minimization, pseudo‑labeling, or adversarial alignment, they often suffer from error accumulation that eventually collapses accuracy. The authors discover that the trajectory of “label flip” – the proportion of samples whose predicted class changes between consecutive adaptation steps, weighted by confidence differences – serves as a reliable early‑warning signal of impending performance loss.

Motivated by this observation, they propose Adaptive‑and‑Balanced Re‑initialization (ABR). ABR first smooths the raw label‑flip signal with an exponential moving average (α = 0.5) to obtain a stable trajectory LFₜ. The minimum value of this trajectory (LF_min) is used as a reference point. At each time step the algorithm computes the slope Sₜ = ΔLF / Δt, where ΔLF = LFₜ − LF_min and Δt is the elapsed time since LF_min occurred. When Sₜ exceeds a dynamically scaled threshold β √(t − t_min) (β = 2 × 10⁻⁶), ABR triggers a re‑initialization.

Instead of a naïve reset to the source model, ABR performs a “shrink‑restore” weight update:

θₜ = λₜ θ_source + (1 − λₜ) θ_{t‑1},

where λₜ = LFₜ / (LFₜ + LF_min). When label flip rises sharply, λₜ grows, pulling the model closer to the source weights; when the rise is modest, λₜ stays small, preserving more of the adapted knowledge. This adaptive blending mitigates error accumulation while still allowing the model to learn new domain characteristics.

The method is evaluated on three large‑scale CTTA benchmarks: CIN‑C, CIN‑3DCC, and the CCC suite (Easy, Medium, Hard). Using a ResNet‑50 backbone within the EA‑TTA framework, ABR is compared against a wide range of baselines, including BN, TENT, RPL, SLR, CPL, CoTTA, EA‑TTA, ET‑A, RDumb, and a recent TCA method. Results show that most baselines either degrade to below the pre‑trained accuracy or completely collapse on the hardest settings. ABR consistently achieves the highest mean accuracy (40.2 % overall), improving by 2.3 % over the strongest baseline (RDumb) and delivering especially large gains on the challenging CCC‑Hard scenario (+12.7 % absolute). Ablation studies confirm that random or fixed‑interval resets do not match the performance of the label‑flip‑driven adaptive trigger, and that the shrink‑restore blending is essential for retaining previously learned knowledge.

Key contributions are: (1) identifying label‑flip dynamics as an explicit indicator of long‑term performance loss; (2) designing a simple yet effective adaptive re‑initialization policy that balances source and adapted knowledge; (3) demonstrating superior long‑timescale CTTA performance across diverse, realistic benchmarks.

Limitations include the need to compute label‑flip using predictions from two consecutive models, which adds overhead in streaming settings, and the use of fixed hyper‑parameters (α, β) that may require tuning for extremely rapid domain shifts. Future work could explore lightweight approximations of label flip or meta‑learning strategies to automatically adjust the trigger thresholds.

Comments & Academic Discussion

Loading comments...

Leave a Comment