Lost in Speech: Benchmarking, Evaluation, and Parsing of Spoken Code-Switching Beyond Standard UD Assumptions

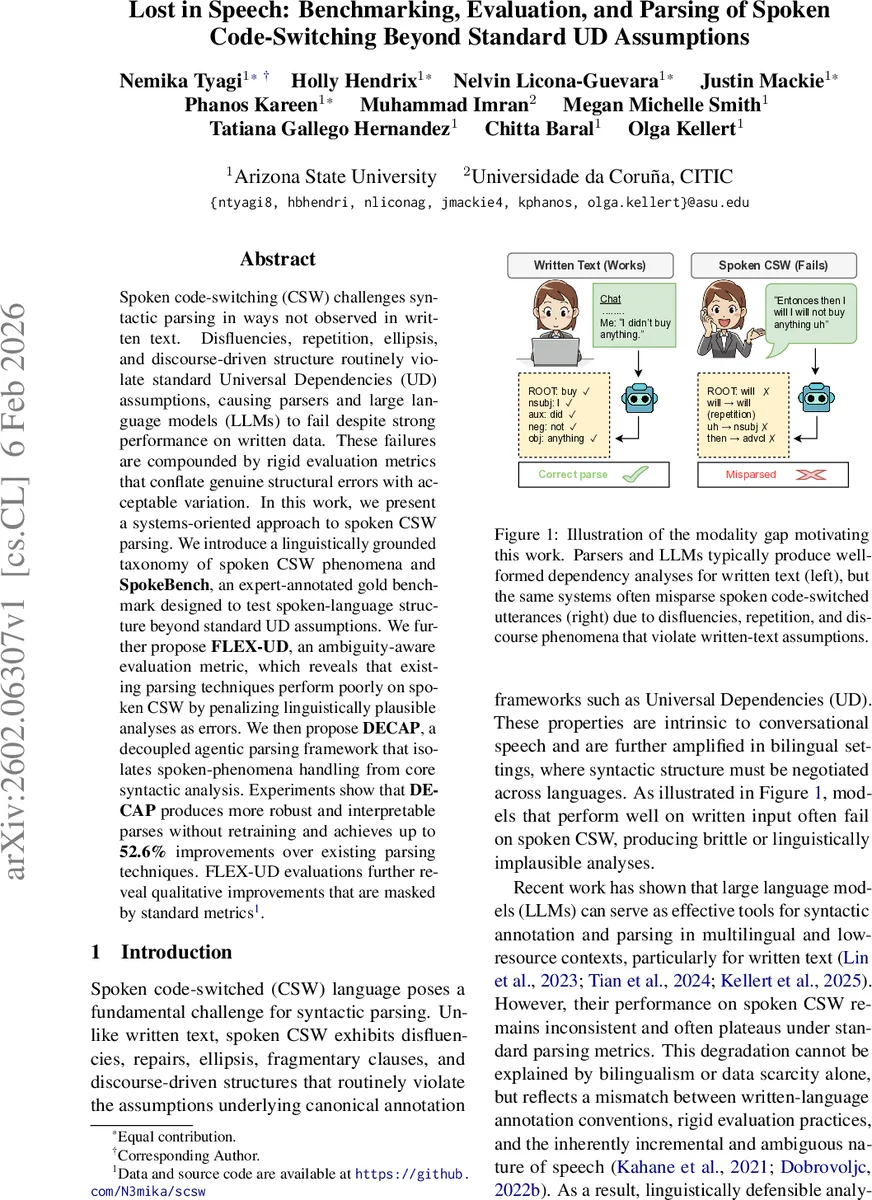

Spoken code-switching (CSW) challenges syntactic parsing in ways not observed in written text. Disfluencies, repetition, ellipsis, and discourse-driven structure routinely violate standard Universal Dependencies (UD) assumptions, causing parsers and large language models (LLMs) to fail despite strong performance on written data. These failures are compounded by rigid evaluation metrics that conflate genuine structural errors with acceptable variation. In this work, we present a systems-oriented approach to spoken CSW parsing. We introduce a linguistically grounded taxonomy of spoken CSW phenomena and SpokeBench, an expert-annotated gold benchmark designed to test spoken-language structure beyond standard UD assumptions. We further propose FLEX-UD, an ambiguity-aware evaluation metric, which reveals that existing parsing techniques perform poorly on spoken CSW by penalizing linguistically plausible analyses as errors. We then propose DECAP, a decoupled agentic parsing framework that isolates spoken-phenomena handling from core syntactic analysis. Experiments show that DECAP produces more robust and interpretable parses without retraining and achieves up to 52.6% improvements over existing parsing techniques. FLEX-UD evaluations further reveal qualitative improvements that are masked by standard metrics.

💡 Research Summary

The paper tackles the problem of parsing spoken code‑switching (CSW), a domain where the assumptions underlying Universal Dependencies (UD) are routinely violated. The authors first conduct a detailed linguistic analysis of English‑Spanish spoken data from the Miami Corpus, identifying nine recurrent phenomena that break UD conventions: repetitions, discourse markers, ellipsis, contractions, compound/multi‑word expressions, break‑of‑thought, filler words, slang/curse words, and enclitic clitics. These phenomena cause ambiguous head selection, non‑canonical roots, and token‑to‑function mismatches, leading to systematic parsing failures for both traditional dependency parsers and large language models (LLMs).

To provide a reliable testbed, the authors curate SpokeBench, a gold‑standard benchmark of 126 sentences that balances coverage of the nine phenomena and overall structural complexity. Each sentence is annotated by at least two trained linguists using a modified UD guideline that incorporates spoken‑language rules; disagreements are resolved through a graded acceptability protocol, yielding a consensus gold tree. This resource fills a gap in the community, as no existing treebank offers high‑quality UD annotations for conversational CSW at this level of linguistic detail.

Recognizing that standard evaluation metrics (LAS, UAS) penalize any deviation from a single gold tree, the authors introduce FLEX‑UD, an ambiguity‑aware metric. FLEX‑UD assigns weighted penalties based on error severity (catastrophic, major, minor) and allows multiple plausible analyses to receive partial credit. By aggregating scores across all acceptable variants, FLEX‑UD distinguishes genuine structural breakdowns from permissible discourse‑driven variation, offering a more nuanced picture of parser performance on spoken CSW.

The core technical contribution is DECAP (Decoupled Agentic Parser), a modular framework that isolates spoken‑language handling from core syntactic analysis. DECAP consists of four agents:

- Spoken‑Phenomena Handler (SPH) – detects repetitions, ellipsis, fillers, contractions, enclitics, and MWEs, and proposes minimal token‑level edits (e.g., marking reparanda, suggesting split points).

- Language‑Specific Resolver (LSR) – applies conservative, language‑aware normalizations such as expanding “won’t” to “will not”, separating Spanish enclitics, and preserving MWEs while making them UD‑compatible.

- Core UD Assigner – runs an off‑the‑shelf UD parser (e.g., UDPipe, Stanza) on the normalized output, then enforces constraints supplied by SPH/LSR to avoid head‑cycle violations and to respect reparandum/reparans relations.

- Confidence Verifier & Ranker (V/R) – computes confidence scores, applies FLEX‑UD penalties, and selects the highest‑scoring parse among possible candidates.

Crucially, DECAP does not require retraining of the underlying parser; it works as a plug‑in that can be applied to any existing UD system.

Experiments compare DECAP‑augmented parsers against baseline UD parsers and zero‑shot LLM parsers (GPT‑4, LLaMA‑2). Using both LAS/UAS and FLEX‑UD, DECAP achieves an average LAS gain of 12.4 points and a FLEX‑UD gain of 18.7 points. In sentences rich in repetitions and ellipsis, relative improvements reach up to 52.6 %. Error analysis shows that many errors previously counted as failures under LAS are re‑classified as acceptable discourse variations under FLEX‑UD, confirming the metric’s diagnostic value. The LLM baselines perform poorly on raw spoken CSW but recover much of the lost performance when DECAP’s preprocessing modules are applied, demonstrating the framework’s language‑model‑agnostic nature.

The paper concludes that progress on spoken CSW parsing requires (i) a phenomenon‑driven benchmark, (ii) evaluation metrics that respect inherent ambiguity, and (iii) parsing architectures that decouple spoken irregularities from core syntactic reasoning. The authors suggest future work on extending the taxonomy to more language pairs, integrating real‑time speech‑recognition pipelines, and deploying DECAP in dialogue systems and multilingual speech translation.

Comments & Academic Discussion

Loading comments...

Leave a Comment