Git for Sketches: An Intelligent Tracking System for Capturing Design Evolution

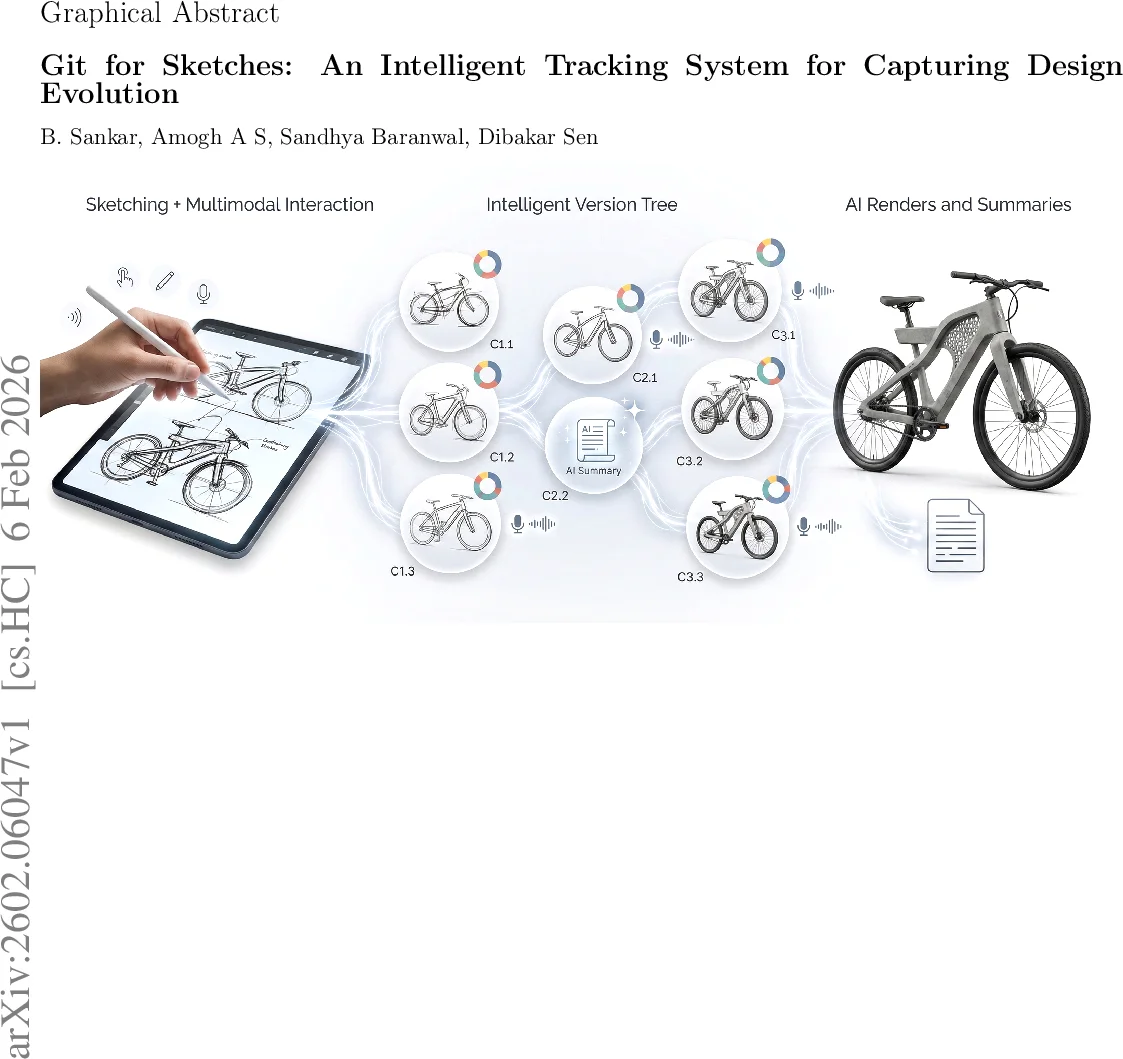

During product conceptualization, capturing the non-linear history of a design and the cognitive intent behind it is crucial, yet traditional sketching tools often lose this context. We introduce DIMES (Design Idea Management and Evolution capture System), a web-based environment that combines sGIT (SketchGit), a custom visual version-control architecture, with Generative AI. sGIT includes AEGIS, a module that uses hybrid Deep Learning and Machine Learning models to classify six stroke types. The system maps Git primitives to design actions, enabling implicit branching and multimodal commits (stroke data + voice intent). In a comparative study, experts using DIMES demonstrated a 160% increase in breadth of concept exploration. Generative AI modules produced narrative summaries that enhanced knowledge transfer: novices working from them achieved higher replication fidelity than novices working from manual summaries (Neural Transparency-based Cosine Similarity: 0.97 vs. 0.73). AI-generated renderings also received higher user acceptance (Purchase Likelihood: 4.2 vs. 3.1). This work demonstrates that intelligent version control bridges creative action and cognitive documentation, offering a new paradigm for design education.
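
The abstract's mapping of Git primitives onto sketching actions can be illustrated with a minimal Python (3.9+) sketch. It is not the authors' sGIT implementation: the data model (`Commit`, `SketchRepo`, strokes as lists of `(x, y, t)` samples, branch names like `idea-1`) is hypothetical, and "implicit branching" is read here as one plausible behaviour, namely that committing after checking out an older state opens a new branch automatically, while each commit pairs stroke data with a transcribed voice note.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical stroke representation: a sequence of (x, y, timestamp) samples.
Stroke = list[tuple[float, float, float]]


@dataclass
class Commit:
    id: int
    parent: Optional[int]  # None for the first commit
    strokes: list[Stroke]  # stroke data added since the parent commit
    intent: str            # transcribed voice note explaining the change
    branch: str


@dataclass
class SketchRepo:
    commits: dict[int, Commit] = field(default_factory=dict)
    branch_heads: dict[str, int] = field(default_factory=dict)  # branch name -> tip commit id
    head: Optional[int] = None
    current_branch: str = "main"

    def commit(self, strokes: list[Stroke], intent: str) -> Commit:
        # Implicit branching: if HEAD is not the tip of its branch (the designer
        # went back to an earlier idea and kept drawing), start a new branch
        # automatically instead of asking the user to create one.
        tip = self.branch_heads.get(self.current_branch)
        if self.head is not None and tip != self.head:
            self.current_branch = f"idea-{len(self.branch_heads)}"
        new = Commit(len(self.commits), self.head, strokes, intent, self.current_branch)
        self.commits[new.id] = new
        self.branch_heads[self.current_branch] = new.id
        self.head = new.id
        return new

    def checkout(self, commit_id: int) -> None:
        # Revisit an earlier state; whether a new branch is needed is decided on the next commit.
        self.head = commit_id
        self.current_branch = self.commits[commit_id].branch
```

For example, committing twice, checking out the first commit, and committing again leaves `main` untouched and records the new strokes on an automatically created branch whose parent is the first commit, so the abandoned direction and the new one both survive in the history.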

💡 Research Summary

The paper introduces DIMES (Design Idea Management and Evolution capture System), a web‑based sketching platform built around a custom version‑control architecture called sGIT (SketchGit), which includes an AI module named AEGIS. sGIT maps classic Git primitives (commit, branch, checkout, merge, diff) to concrete design actions, enabling implicit branching and multimodal commits that combine stroke data with voice‑captured intent. AEGIS automatically classifies six stroke types (Constraining, Defining, Detailing, Shading, Shadow, Annotation) using a hybrid pipeline that fuses five image‑based deep‑learning models with ten feature‑based machine‑learning classifiers, drawing on both the visual morphology and the kinematic dynamics of each stroke.
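
The summary does not say how AEGIS combines its fifteen models. A common choice for this kind of hybrid is soft-voting late fusion, sketched below in Python as an assumption rather than the paper's method: the model outputs are stubbed as per-class probability vectors, and the function names and the `w_image` weight are hypothetical.

```python
# The six stroke classes named in the paper summary.
STROKE_TYPES = ["Constraining", "Defining", "Detailing", "Shading", "Shadow", "Annotation"]


def mean_probs(prob_vectors: list[list[float]]) -> list[float]:
    """Average the per-class probabilities produced by a group of models."""
    if not prob_vectors:
        raise ValueError("need at least one model output")
    n = len(prob_vectors)
    return [sum(p[k] for p in prob_vectors) / n for k in range(len(STROKE_TYPES))]


def fuse_stroke_predictions(
    image_probs: list[list[float]],    # one vector per image-based deep model (e.g. 5)
    feature_probs: list[list[float]],  # one vector per feature-based ML classifier (e.g. 10)
    w_image: float = 0.5,              # hypothetical weight given to the image-model family
) -> tuple[str, list[float]]:
    """Soft-voting late fusion: average within each model family, then blend the families."""
    img = mean_probs(image_probs)
    feat = mean_probs(feature_probs)
    fused = [w_image * a + (1.0 - w_image) * b for a, b in zip(img, feat)]
    best = max(range(len(fused)), key=lambda k: fused[k])
    return STROKE_TYPES[best], fused
```

Averaging within each family before blending keeps the ten feature-based classifiers from outvoting the five image models simply by outnumbering them; `w_image` then makes the balance between morphology and kinematics an explicit, tunable choice.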

The system also incorporates generative AI: speech‑to‑text captures design intent during commits, large language models generate narrative summaries of design evolution, and text‑to‑image models transform monochrome concept sketches into photorealistic renderings. To evaluate knowledge transfer, the authors propose a Neural Transparency‑based cosine similarity metric that extracts internal activation maps from a vision‑language model (LLaVA‑NeXT) to quantify semantic similarity between sketches, which they present as an improvement over traditional pixel‑level measures.
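
The comparison step of such a metric can be sketched in Python. The summary does not specify which LLaVA‑NeXT layer is read or how activations are pooled, so this sketch assumes the activation maps for the original sketch and the replica have already been extracted as equal-sized 2-D arrays; `flatten`, `cosine_similarity`, and `sketch_similarity` are hypothetical helpers, and no model is called here.

```python
import math


def flatten(activation_map: list[list[float]]) -> list[float]:
    """Turn a 2-D activation map into a single feature vector."""
    return [v for row in activation_map for v in row]


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(a, b) = (a . b) / (|a| |b|); 1.0 means the same activation pattern."""
    if len(a) != len(b):
        raise ValueError("activation vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    if norm == 0.0:
        raise ValueError("cosine similarity is undefined for an all-zero vector")
    return dot / norm


def sketch_similarity(map_original: list[list[float]], map_replica: list[list[float]]) -> float:
    # Assumption: both maps come from the same layer of the vision-language model,
    # extracted once for the original sketch and once for the novice's replica.
    return cosine_similarity(flatten(map_original), flatten(map_replica))
```

Because cosine similarity ignores overall magnitude, a replica that activates the same internal features more weakly still scores close to 1.0, which is what makes it a measure of shared semantic pattern rather than of pixel-level agreement.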

In a user study, expert designers using DIMES explored 160 % more concepts and achieved an 800 % increase in commit granularity compared with conventional sketching tools. Novice replicators who relied on AI‑generated summaries attained a cosine similarity of 0.97 (versus 0.73 for manual summaries), indicating far higher fidelity in reproducing designs. AI‑generated product concept renderings received a purchase‑likelihood score of 4.2, significantly above the 3.1 score for manually created renders.
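
The percentage gains reported above are easier to read as ratios. Assuming the study uses the standard relative-increase definition (the summary does not state the formula), with $x$ denoting the measured quantity for DIMES and for the baseline tool:

$$
\Delta = \frac{x_{\text{DIMES}} - x_{\text{baseline}}}{x_{\text{baseline}}} \times 100\%
\quad\Longrightarrow\quad
\frac{x_{\text{DIMES}}}{x_{\text{baseline}}} = 1 + \frac{\Delta}{100\%}
$$

Under that definition, a 160% increase in breadth of exploration means the DIMES value is 2.6 times the baseline, and an 800% increase in commit granularity means 9 times the baseline, not 1.6 and 8 times.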

The authors acknowledge limitations: the stroke‑type dataset is modest, AI summaries may miss nuanced cultural or linguistic cues, and the current web implementation lacks robust scalability for large‑team, real‑time collaboration. Future work will explore cloud‑based distributed storage, user‑defined stroke vocabularies, and graph‑based knowledge representations to enrich intent capture and support collaborative design workflows.

Overall, DIMES demonstrates that a purpose‑built version‑control system combined with hybrid AI can preserve both the visual evolution and cognitive intent of product concept sketches, offering a promising new paradigm for design education and industrial design process management.
