DimABSA: Building Multilingual and Multidomain Datasets for Dimensional Aspect-Based Sentiment Analysis

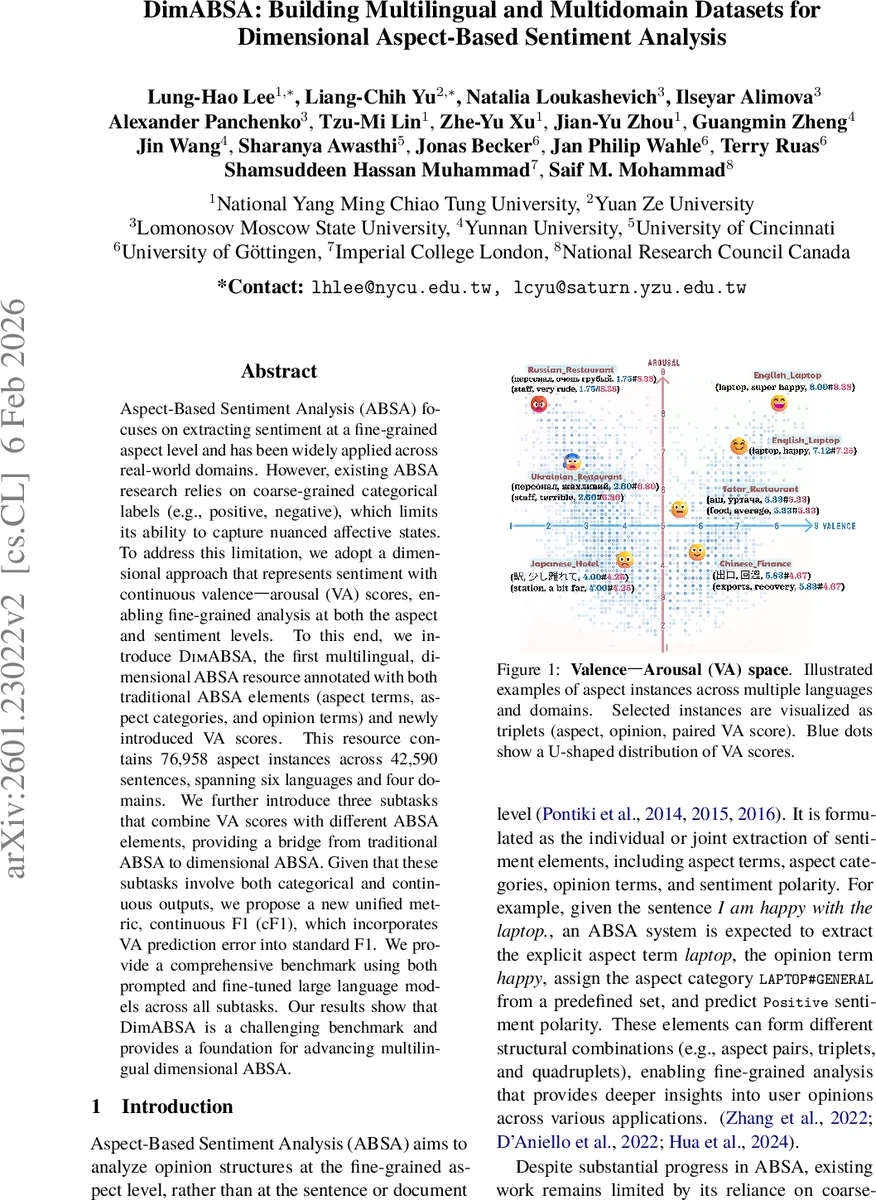

Aspect-Based Sentiment Analysis (ABSA) focuses on extracting sentiment at a fine-grained aspect level and has been widely applied across real-world domains. However, existing ABSA research relies on coarse-grained categorical labels (e.g., positive, negative), which limits its ability to capture nuanced affective states. To address this limitation, we adopt a dimensional approach that represents sentiment with continuous valence-arousal (VA) scores, enabling fine-grained analysis at both the aspect and sentiment levels. To this end, we introduce DimABSA, the first multilingual, dimensional ABSA resource annotated with both traditional ABSA elements (aspect terms, aspect categories, and opinion terms) and newly introduced VA scores. This resource contains 76,958 aspect instances across 42,590 sentences, spanning six languages and four domains. We further introduce three subtasks that combine VA scores with different ABSA elements, providing a bridge from traditional ABSA to dimensional ABSA. Given that these subtasks involve both categorical and continuous outputs, we propose a new unified metric, continuous F1 (cF1), which incorporates VA prediction error into standard F1. We provide a comprehensive benchmark using both prompted and fine-tuned large language models across all subtasks. Our results show that DimABSA is a challenging benchmark and provides a foundation for advancing multilingual dimensional ABSA.

💡 Research Summary

The paper introduces DimABSA, the first large‑scale multilingual and multidomain dataset for dimensional aspect‑based sentiment analysis (ABSA) that augments traditional ABSA annotations (aspect terms, aspect categories, opinion terms) with continuous valence‑arousal (VA) scores. Existing ABSA research has been limited to coarse categorical sentiment labels (e.g., positive, negative), which cannot capture the nuanced affective states that humans experience. By adopting the psychological VA model, the authors enable fine‑grained sentiment representation at both the aspect and sentiment levels.

DimABSA comprises 42,590 sentences across six languages—English, Chinese, Japanese, Russian, Ukrainian, and a sixth language (unspecified in the excerpt)—and four domains (restaurant, laptop/electronics, hotel, and service). In total, 76,958 aspect instances are annotated. For each sentence, annotators first identify aspect terms, aspect categories, and opinion terms. Then, native speakers assign a valence score (degree of pleasantness) and an arousal score (degree of activation) on a 0‑1 continuous scale to each aspect. The annotation pipeline includes three stages: (1) expert extraction of aspect and opinion elements, (2) native‑speaker rating of VA values, and (3) cross‑validation and quality control using inter‑annotator agreement and RMSE metrics. The resulting VA distribution is U‑shaped, indicating a concentration of extreme positive and negative affect, while aspect‑category frequencies follow a long‑tailed pattern, ensuring coverage of both frequent and rare categories.

To bridge traditional categorical ABSA and the new dimensional setting, the authors define three subtasks:

- Dimensional Aspect Sentiment Regression (DimASR) – given an aspect, predict its continuous VA pair.

- Dimensional Aspect Sentiment Triplet Extraction (DimASTE) – extract (aspect, category, opinion) triples together with the associated VA scores.

- Dimensional Aspect Sentiment Quadruplet Extraction (DimASQP) – simultaneously extract (aspect, category, opinion, VA) quadruplets.

Because these tasks involve both discrete and continuous outputs, standard F1 is insufficient. The authors therefore propose continuous F1 (cF1), which integrates the standard F1 (precision/recall for discrete elements) with a penalty based on VA prediction error, yielding a single metric that reflects both extraction quality and regression accuracy.

The benchmark experiments evaluate both prompted (zero‑shot and few‑shot) and fine‑tuned large language models (LLMs), including GPT‑3.5‑Turbo, GPT‑4, LLaMA‑2, and Alpaca variants. Across all languages and domains, the models achieve cF1 scores ranging from 0.45 to 0.58, substantially lower than human performance and roughly 15 % below results on traditional categorical ABSA datasets. Performance degrades notably in low‑resource languages and less‑represented domains, highlighting the difficulty of modeling continuous affective dimensions.

Key contributions of the work are:

- Dataset: a multilingual, multidomain resource with over 76 k aspect instances annotated with both traditional ABSA elements and continuous VA scores.

- Tasks: three novel subtasks that integrate aspect extraction with dimensional sentiment prediction.

- Metric: the continuous F1 (cF1) measure that jointly evaluates discrete extraction and continuous regression.

- Benchmark: a comprehensive evaluation of state‑of‑the‑art LLMs, establishing a baseline and demonstrating the challenging nature of dimensional ABSA.

DimABSA opens new research avenues for sentiment analysis that move beyond binary or categorical sentiment labels toward richer, psychologically grounded affect representations. Future work may explore multimodal extensions (e.g., incorporating visual cues), dynamic sentiment modeling over time, and generation of sentiment‑aware text using the VA framework.

Comments & Academic Discussion

Loading comments...

Leave a Comment