inversedMixup: Data Augmentation via Inverting Mixed Embeddings

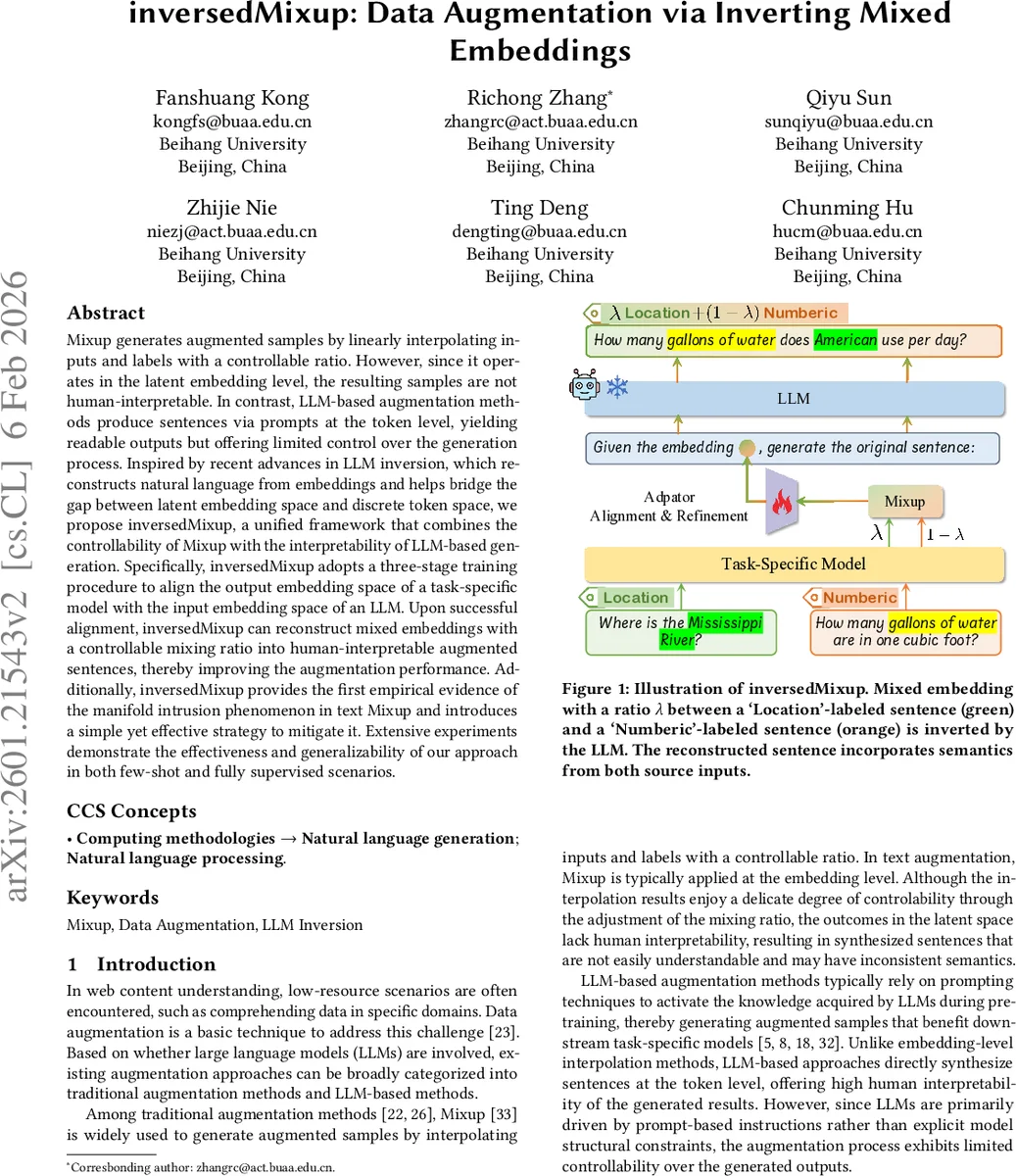

Mixup generates augmented samples by linearly interpolating inputs and labels with a controllable ratio. However, since it operates at the latent embedding level, the resulting samples are not human-interpretable. In contrast, LLM-based augmentation methods produce sentences via prompts at the token level, yielding readable outputs but offering limited control over the generation process. Inspired by recent advances in LLM inversion, which reconstructs natural language from embeddings and helps bridge the gap between the latent embedding space and the discrete token space, we propose inversedMixup, a unified framework that combines the controllability of Mixup with the interpretability of LLM-based generation. Specifically, inversedMixup adopts a three-stage training procedure to align the output embedding space of a task-specific model with the input embedding space of an LLM. Once the two spaces are aligned, inversedMixup can reconstruct embeddings mixed at a controllable ratio into human-interpretable augmented sentences, thereby improving augmentation performance. Additionally, inversedMixup provides the first empirical evidence of the manifold intrusion phenomenon in text Mixup and introduces a simple yet effective strategy to mitigate it. Extensive experiments demonstrate the effectiveness and generalizability of our approach in both few-shot and fully supervised scenarios.

💡 Research Summary

The paper introduces inversedMixup, a novel text data‑augmentation framework that unifies the controllability of traditional Mixup with the interpretability of large‑language‑model (LLM) generation. Conventional Mixup creates virtual training examples by linearly interpolating input embeddings and their one‑hot labels with a mixing coefficient λ. While this provides fine‑grained control over the semantic blend, the resulting mixed embeddings live in a continuous latent space and cannot be directly read by humans. Conversely, LLM‑based augmentation generates readable sentences via prompt engineering, but offers limited control over the exact semantic composition or label proportion of the generated text.
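The interpolation step described above can be illustrated with a minimal sketch. It uses plain Python lists to stay dependency-free; the function name `mixup` and the toy vectors are assumptions for illustration and are not taken from the paper.

```python
def mixup(h_a, h_b, y_a, y_b, lam):
    """Linearly interpolate two embeddings and their one-hot labels.

    lam is the mixing coefficient (lambda); lam=1.0 returns the first
    sample unchanged and lam=0.0 returns the second.
    """
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    if len(h_a) != len(h_b) or len(y_a) != len(y_b):
        raise ValueError("embeddings and labels must have matching sizes")
    h_mix = [lam * a + (1.0 - lam) * b for a, b in zip(h_a, h_b)]
    y_mix = [lam * a + (1.0 - lam) * b for a, b in zip(y_a, y_b)]
    return h_mix, y_mix


# Toy example: two 3-dimensional embeddings from different classes.
h_a, y_a = [1.0, 0.0, 2.0], [1.0, 0.0]
h_b, y_b = [0.0, 4.0, 2.0], [0.0, 1.0]

h_mix, y_mix = mixup(h_a, h_b, y_a, y_b, lam=0.75)
print(h_mix)  # [0.75, 1.0, 2.0]
print(y_mix)  # [0.75, 0.25]
```

The mixed label is a soft label: with λ = 0.75 the virtual example counts as 75% of the first class and 25% of the second, while the mixed embedding itself has no corresponding readable sentence.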

To bridge this gap, the authors leverage recent advances in LLM inversion, which treat an embedding as a “soft token” that can be fed to a generative LLM to reconstruct the original sentence. The core technical contribution is a learnable adaptor (denoted Aφ) that maps the output embedding space of a task‑specific model Mθ (used for Mixup) into the input embedding space of a generative LLM Mψ. By aligning these spaces, mixed embeddings can be directly fed to the LLM, which then decodes them into human‑readable sentences that preserve the mixing ratio λ.
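As a rough sketch of the adaptor idea, the code below maps a task-model embedding into a space of a different dimensionality and splices the result into a sequence of prompt embeddings as a single soft token. The summary does not specify the adaptor's architecture, so the affine projection, the helper names `make_linear_adaptor` and `splice_soft_token`, the dimensions, and the zero-valued prompt embeddings are all assumptions made purely for illustration.

```python
import random


def make_linear_adaptor(d_task, d_llm, seed=0):
    """Build a hypothetical affine adaptor from R^d_task to R^d_llm.

    The weights are random here; in a real system they would be learned.
    """
    rng = random.Random(seed)
    weight = [[rng.gauss(0.0, 0.02) for _ in range(d_task)]
              for _ in range(d_llm)]
    bias = [0.0] * d_llm

    def adaptor(h):
        if len(h) != d_task:
            raise ValueError(f"expected an embedding of size {d_task}")
        return [sum(w * x for w, x in zip(row, h)) + b
                for row, b in zip(weight, bias)]

    return adaptor


def splice_soft_token(prefix_embeds, soft_token, suffix_embeds):
    """Place the adapted embedding between the prompt's prefix and suffix."""
    return prefix_embeds + [soft_token] + suffix_embeds


d_task, d_llm = 3, 4
adaptor = make_linear_adaptor(d_task, d_llm)

h = [0.75, 1.0, 2.0]               # a (possibly mixed) task-model embedding
soft = adaptor(h)                  # now lives in the LLM input space

# Placeholder embeddings standing in for the tokens of a fixed prompt.
prefix = [[0.0] * d_llm for _ in range(2)]
suffix = [[0.0] * d_llm for _ in range(1)]
sequence = splice_soft_token(prefix, soft, suffix)
print(len(sequence), len(sequence[2]))  # 4 4
```

One property of this particular assumption is worth noting: an affine map commutes with convex combinations, so adapting a mixed embedding gives the same vector as mixing the two adapted embeddings with the same λ. A nonlinear adaptor would not guarantee this.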

The training pipeline consists of three stages:

- Adaptor Alignment with Unlabeled Data – Using a large open‑domain corpus XU, the adaptor is trained in a self‑supervised manner. For each sentence x, the task model produces an embedding h = Mθ(x). The adaptor transforms h to Aφ(h), which is inserted into a fixed prompt (e.g., “Given the embedding
