MOOT: a Repository of Many Multi-Objective Optimization Tasks

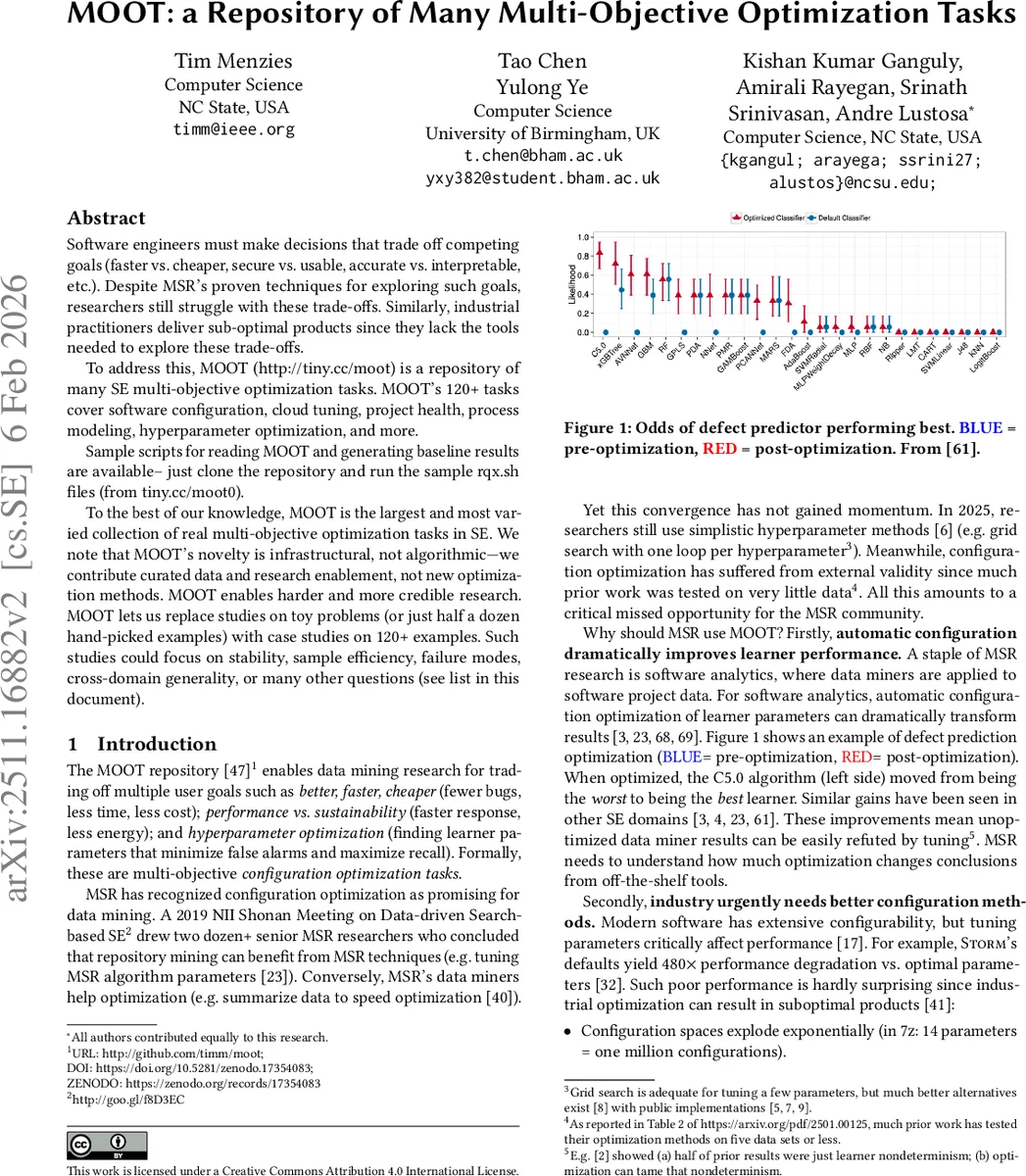

Software engineers must make decisions that trade off competing goals (faster vs. cheaper, secure vs. usable, accurate vs. interpretable, etc.). Despite MSR’s proven techniques for exploring such goals, researchers still struggle with these trade-offs. Similarly, industrial practitioners deliver sub-optimal products since they lack the tools needed to explore these trade-offs. To address this, MOOT (http://tiny.cc/moot) is a repository of many SE multi-objective optimization tasks. MOOT’s 120+ tasks cover software configuration, cloud tuning, project health, process modeling, hyperparameter optimization, and more. Sample scripts for reading MOOT and generating baseline results are available – just clone the repository and run the sample rqx.sh files (from tiny.cc/moot0). To the best of our knowledge, MOOT is the largest and most varied collection of real multi-objective optimization tasks in SE. We note that MOOT’s novelty is infrastructural, not algorithmic-we contribute curated data and research enablement, not new optimization methods. MOOT enables harder and more credible research. MOOT lets us replace studies on toy problems (or just half a dozen hand-picked examples) with case studies on 120+ examples. Such studies could focus on stability, sample efficiency, failure modes, cross-domain generality, or many other questions (see list in this document).

💡 Research Summary

The paper introduces MOOT, an open‑source repository that aggregates more than 120 real‑world multi‑objective optimization (MOO) tasks drawn from software engineering (SE) research and practice. Each task is provided as a CSV file with a standardized schema: independent variables (x) and dependent objectives (y), where the objective columns are annotated with “+” for maximization and “‑” for minimization. The repository covers a wide spectrum of domains, including software configuration tuning (e.g., database systems, compilers, streaming frameworks), cloud service optimization (Apache, SQL, video encoding), project health prediction, feature‑model configuration, process‑model simulation (NASA93, COC, POM3, XOMO), as well as non‑SE datasets such as financial churn, health statistics, reinforcement‑learning benchmarks, and sales forecasting.

The authors argue that SE research has suffered from a reliance on a handful of toy problems or tiny data collections, which limits external validity and hampers the development of robust optimization techniques. By curating a large, diverse, and peer‑reviewed set of tasks, MOOT aims to enable more credible, reproducible, and large‑scale empirical studies. Table 1 in the paper lists each dataset’s dimensionality (number of input variables and objectives), size (rows), and citation count, providing a quick overview for researchers to select appropriate tasks.

To demonstrate MOOT’s utility, the paper presents a simple baseline experiment focused on “label‑scarce” optimization. Using the provided rq2.sh script, the authors cluster the data, label a small number of points per cluster, and then predict labels for the remaining points based on Euclidean distance to the centroids of “best” and “rest” clusters. The experiment runs on 127 datasets with 20 repetitions each, varying the number of labeled instances (n₁ = 30 for training, n₂ = 10 for selection). Results (Figure 3) show that performance quickly saturates after roughly 40 labeled instances, suggesting that, contrary to the prevailing AI narrative, more data does not always translate into better optimization outcomes in SE contexts. This insight has practical implications for cost‑sensitive environments such as edge computing, cloud cost reduction, or human‑in‑the‑loop labeling pipelines.

The discussion section outlines a comprehensive research agenda organized into three thematic areas:

-

Optimization Strategies & Performance – investigating minimal sample budgets, algorithm selection (e.g., evolutionary, surrogate‑based, pool‑vs‑query‑based search), appropriate performance metrics (hypervolume, IGD, distance‑to‑heaven), ensemble methods, and causality‑aware optimization.

-

Human Factors & Interpretability – developing visual and textual explanations for multi‑objective solutions, facilitating stakeholder trade‑off analysis, assessing fairness and bias when optimizing toward specific goals, integrating domain knowledge, and studying human acceptance of algorithmic recommendations.

-

Industrial Deployment & Adoption – identifying high‑impact industrial problems, integrating MOOT‑driven optimization into CI/CD pipelines, establishing reproducible benchmarking practices, and fostering collaborations between academia and industry through annual ICSE workshops.

Each research question is marked as either near‑term (

Comments & Academic Discussion

Loading comments...

Leave a Comment