Concepts in Motion: Temporal Bottlenecks for Interpretable Video Classification

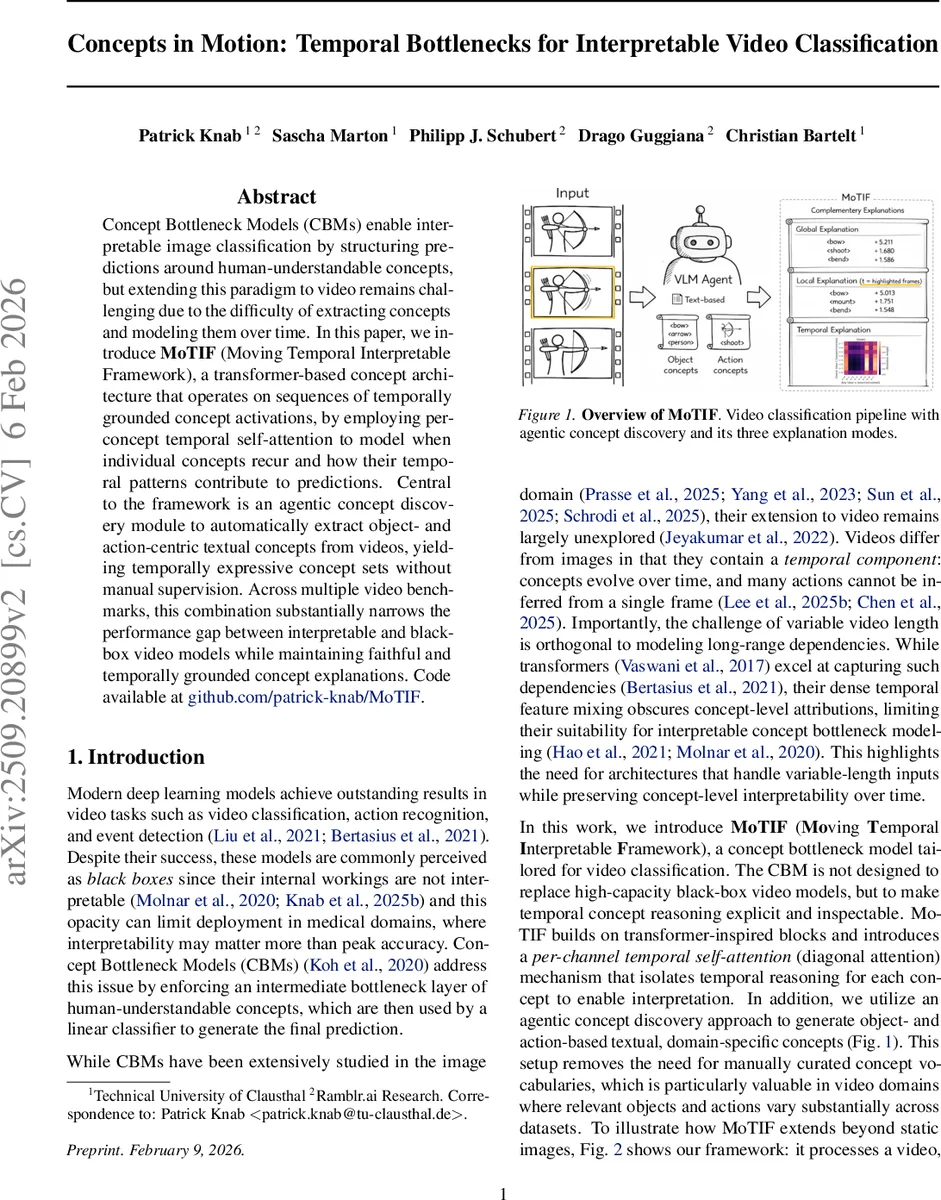

Concept Bottleneck Models (CBMs) enable interpretable image classification by structuring predictions around human-understandable concepts, but extending this paradigm to video remains challenging due to the difficulty of extracting concepts and modeling them over time. In this paper, we introduce $\textbf{MoTIF}$ (Moving Temporal Interpretable Framework), a transformer-based concept architecture that operates on sequences of temporally grounded concept activations, by employing per-concept temporal self-attention to model when individual concepts recur and how their temporal patterns contribute to predictions. Central to the framework is an agentic concept discovery module to automatically extract object- and action-centric textual concepts from videos, yielding temporally expressive concept sets without manual supervision. Across multiple video benchmarks, this combination substantially narrows the performance gap between interpretable and black-box video models while maintaining faithful and temporally grounded concept explanations. Code available at $\href{https://github.com/patrick-knab/MoTIF}{github.com/patrick-knab/MoTIF}$.

💡 Research Summary

The paper tackles the longstanding gap between high‑performing video classifiers and the need for human‑interpretable reasoning. While Concept Bottleneck Models (CBMs) have shown promise for images by forcing predictions to pass through a layer of semantically meaningful concepts, extending this paradigm to video is non‑trivial because concepts evolve over time and actions often require temporal context. To address these challenges, the authors introduce MoTIF (Moving Temporal Interpretable Framework), a novel architecture that combines (1) an agentic concept discovery pipeline and (2) a per‑channel temporal self‑attention mechanism.

Agentic concept discovery works without any manually curated vocabulary. Each video is split into overlapping temporal windows; a large multimodal language model (Qwen‑3 30B) processes each window and emits textual candidates describing objects, attributes, and actions. These candidates are embedded using the same CLIP‑style image‑text encoder as the downstream model, de‑duplicated by cosine similarity, and assembled into a global concept bank. For every window, visual embeddings (from CLIP‑ViT, SigLIP, or a video‑adapted Perception Encoder) are compared with the concept embeddings, producing a matrix of concept activations X∈ℝ^{T×C}, where T is the number of windows and C the number of discovered concepts. This step yields a fully automatic, dataset‑specific set of human‑readable concepts, eliminating the need for hand‑crafted lists.

The per‑channel temporal self‑attention (also called diagonal attention) preserves concept independence while still modeling temporal dynamics. Unlike standard transformers that mix channels in the Q‑K‑V projections, MoTIF applies depthwise 1×1 convolutions so that each concept c receives its own scalar Q, K, and V parameters, which are broadcast across the entire temporal dimension. Consequently, attention scores are computed separately for each concept as W_{c,t,u}=Q_{t,c}·K_{u,c}, followed by a softmax over u. The resulting T×T attention map for each concept acts as a learned, data‑dependent temporal filter, highlighting when a concept repeats, when it precedes another, or when it is most informative. After attention, a lightweight depthwise feed‑forward block refines the activations, which are then passed through a learnable scale γ_c, bias δ_c, and a Softplus nonlinearity to ensure non‑negative, differentiable concept signals.

A linear classifier W_k maps the refined concept vectors Z_{t}∈ℝ^{C} to per‑frame logits ℓ_t. Because videos have variable length, MoTIF aggregates across time using log‑sum‑exp (LSE) pooling, a smooth interpolation between mean and max pooling controlled by a temperature τ. This pooling also yields a time‑importance distribution π_t(k) that can be used for explanation. The training objective combines cross‑entropy on the pooled logits with two regularizers: an L1 penalty on the attention weights to encourage sparsity, and an L1 sparsity penalty on the concept activations to discourage unnecessary concepts.

MoTIF provides three complementary explanation modes: (1) global concept relevance (the τ‑weighted average of concept contributions over the whole video), (2) local concept relevance (highlighting windows with high π_t(k) and showing which concepts dominate there), and (3) temporal dependency maps (the per‑concept attention matrices that reveal how occurrences of a concept attend to earlier or later frames). These explanations make it possible to answer “what concepts mattered” and “when they mattered” in a single, coherent view.

The authors evaluate MoTIF on four widely used video benchmarks—Breakfast Actions, HMDB51, UCF101, and Something‑Something V2—using a variety of CLIP‑based backbones (RN‑50, ViT‑B/32, ViT‑L/14, SigLIP‑ViT‑L/14, and video‑adapted Perception Encoder variants). Compared to zero‑shot CLIP baselines, MoTIF improves top‑1 accuracy by roughly 10–30 percentage points, and it narrows the gap to black‑box models by 5–15 points relative to a global CBM that lacks temporal modeling. Ablation studies show that (i) allowing cross‑concept attention improves raw accuracy but degrades interpretability, (ii) the size and overlap of temporal windows directly affect the granularity of extracted concepts, and (iii) the number of concepts C trades off computational cost (O(C·T²) for diagonal attention) against performance gains.

In summary, MoTIF demonstrates that automatic, language‑driven concept extraction combined with per‑concept temporal self‑attention can deliver video classifiers that are both competitive with state‑of‑the‑art black‑box systems and fully transparent about which human‑understandable concepts drive predictions and at which moments. The work opens avenues for deploying interpretable video models in high‑stakes domains such as healthcare, surveillance, and autonomous driving, while also highlighting future challenges like richer modeling of inter‑concept interactions and more expressive concept embeddings.

Comments & Academic Discussion

Loading comments...

Leave a Comment