RMT-KD: Random Matrix Theoretic Causal Knowledge Distillation

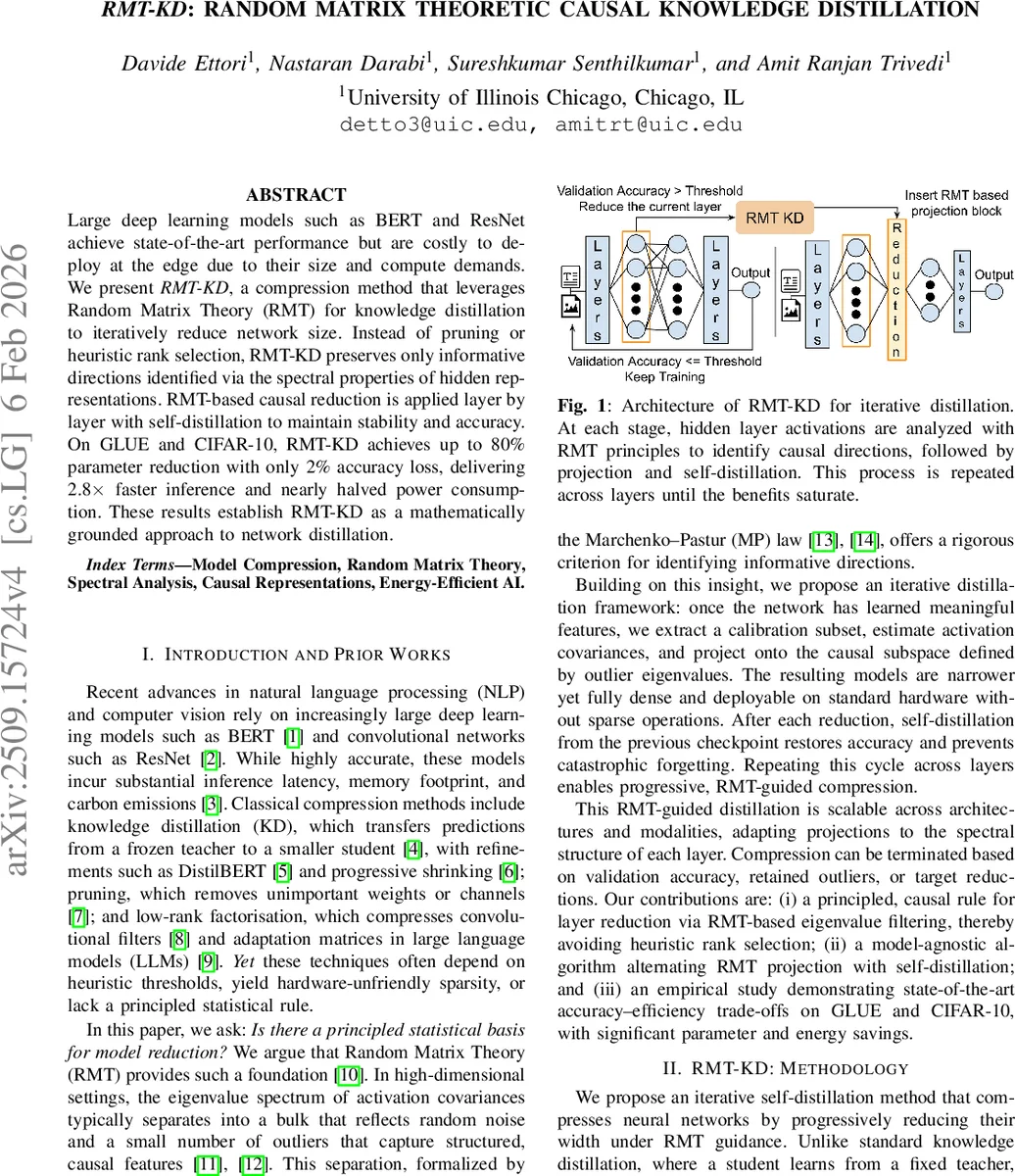

Large deep learning models such as BERT and ResNet achieve state-of-the-art performance but are costly to deploy at the edge due to their size and compute demands. We present RMT-KD, a compression method that leverages Random Matrix Theory (RMT) for knowledge distillation to iteratively reduce network size. Instead of pruning or heuristic rank selection, RMT-KD preserves only informative directions identified via the spectral properties of hidden representations. RMT-based causal reduction is applied layer by layer with self-distillation to maintain stability and accuracy. On GLUE and CIFAR-10, RMT-KD achieves up to 80% parameter reduction with only 2% accuracy loss, delivering 2.8x faster inference and nearly halved power consumption. These results establish RMT-KD as a mathematically grounded approach to network distillation.

💡 Research Summary

The paper introduces RMT‑KD, a novel model compression framework that leverages Random Matrix Theory (RMT) to guide knowledge distillation. The authors observe that in high‑dimensional hidden representations, the eigenvalue spectrum of the activation covariance matrix typically separates into a bulk that follows the Marchenko‑Pastur (MP) law (representing random noise) and a few outlier eigenvalues (spikes) that capture structured, causal information. By estimating the covariance on a small calibration subset (10 % of the training data) after each training phase, they compute the empirical spectrum, fit the MP distribution, and identify eigenvalues exceeding the MP upper edge λ₊ as informative directions.

These informative eigenvectors form a projection matrix P that reduces the layer width from d to k (k < d). After projection, the model undergoes self‑distillation: the current (larger) model acts as a teacher for its reduced counterpart, using a combined loss L = α·CE + (1‑α)·KL(p_teacher‖p_student). This regularizes the student, mitigates catastrophic forgetting, and allows the network to adapt to the lower‑dimensional subspace. The process is repeated layer‑by‑layer until a target compression ratio or validation‑accuracy threshold is reached. Compression aggressiveness is controlled by the quantile used to initialize the noise variance σ²; higher quantiles raise λ₊ and prune more dimensions, while lower quantiles preserve more capacity.

Complexity analysis shows that the dominant cost is the O(nd²) covariance construction and O(d³) eigen‑decomposition per reduced layer, which is negligible compared to full training because n is a small fraction of the dataset and d is bounded by layer width. The projection adds a dense linear layer, avoiding sparse kernels and preserving hardware efficiency on GPUs.

Experiments were conducted on BERT‑base (139 M parameters), TinyBERT (44 M), and ResNet‑50 (23 M) across GLUE (SST‑2, QQP, QNLI) and CIFAR‑10. RMT‑KD achieved up to 80 % parameter reduction for BERT‑base with only a 2 % drop in average GLUE accuracy (80.9 % retained), 58 % reduction for TinyBERT, and 48 % reduction for ResNet‑50. Inference speedup reached 2.8× for BERT‑base, with power consumption reduced by more than 50 %. Compared against state‑of‑the‑art distillation and compression methods (DistilBERT, Theseus, PKD, FitNet, CRD), RMT‑KD consistently delivered higher compression ratios while maintaining or slightly improving accuracy, thanks to its principled selection of causal dimensions.

Ablation studies varying the σ² quantile demonstrated the expected trade‑off: low quantiles preserve accuracy but limit compression; high quantiles increase compression at the cost of accuracy. The optimal operating point was around the 40‑50 % quantile, aligning with the median eigenvalue. Spectral analyses across layers revealed that early transformer layers exhibit spectra close to the MP bulk, allowing aggressive pruning, whereas deeper layers contain more spikes and thus require conservative reduction.

The authors acknowledge limitations: reliance on a representative calibration subset, the need for stronger validation that identified spikes truly correspond to causal features, and reduced effectiveness on already compact models such as ResNet‑50. Future work includes extending the method to massive language models via block‑wise decomposition or randomized eigensolvers, dynamic quantile scheduling, and incorporating statistical tests to validate spike significance.

Overall, RMT‑KD offers a mathematically grounded, data‑driven alternative to heuristic pruning and rank‑selection, delivering dense, hardware‑friendly compressed models with substantial gains in latency, memory, and energy consumption, particularly for over‑parameterized transformer architectures.

Comments & Academic Discussion

Loading comments...

Leave a Comment