Probabilistic Aggregation and Targeted Embedding Optimization for Collective Moral Reasoning in Large Language Models

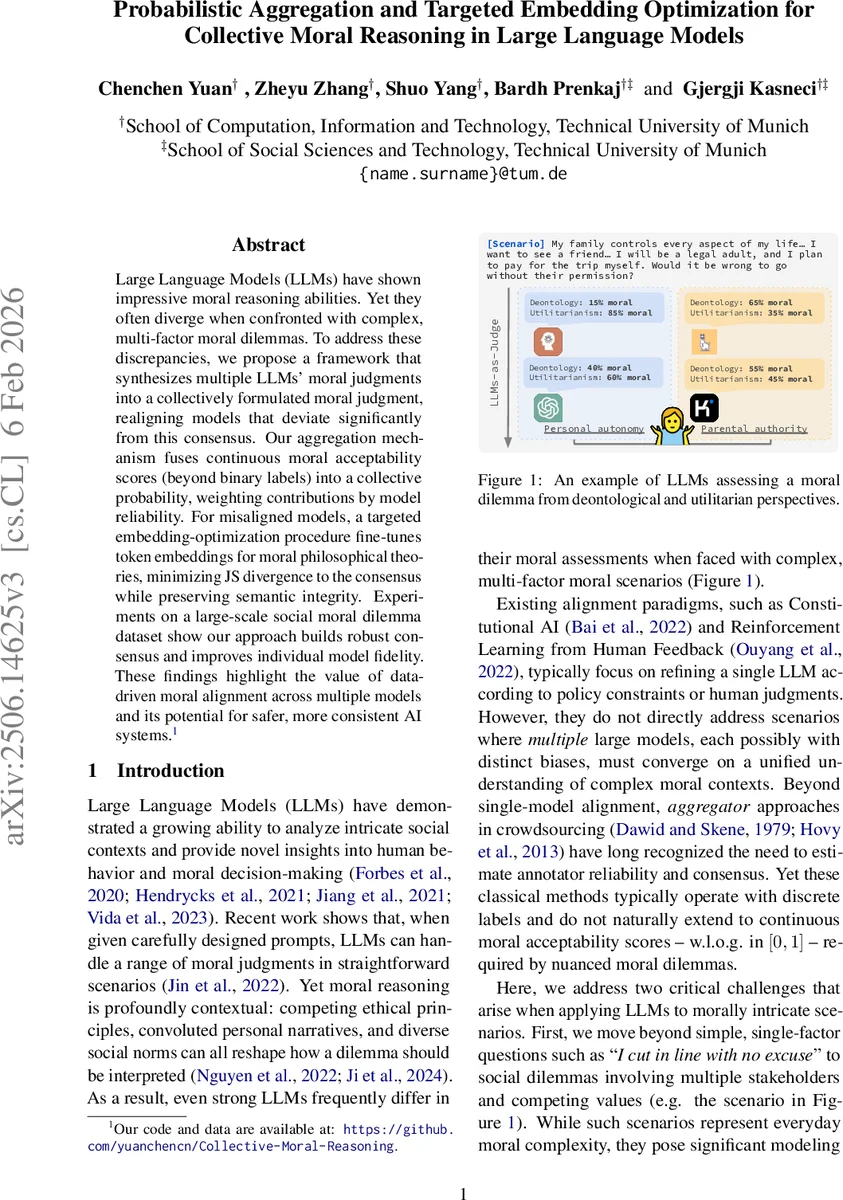

Large Language Models (LLMs) have shown impressive moral reasoning abilities. Yet they often diverge when confronted with complex, multi-factor moral dilemmas. To address these discrepancies, we propose a framework that synthesizes multiple LLMs’ moral judgments into a collectively formulated moral judgment, realigning models that deviate significantly from this consensus. Our aggregation mechanism fuses continuous moral acceptability scores (beyond binary labels) into a collective probability, weighting contributions by model reliability. For misaligned models, a targeted embedding-optimization procedure fine-tunes token embeddings for moral philosophical theories, minimizing JS divergence to the consensus while preserving semantic integrity. Experiments on a large-scale social moral dilemma dataset show our approach builds robust consensus and improves individual model fidelity. These findings highlight the value of data-driven moral alignment across multiple models and its potential for safer, more consistent AI systems.

💡 Research Summary

The paper tackles the problem of divergent moral judgments among multiple large language models (LLMs) when faced with complex, multi‑factor social dilemmas. It proposes a two‑stage framework: (1) probabilistic aggregation of continuous moral acceptability scores into a collective probability, and (2) targeted embedding optimization for models that deviate significantly from this consensus.

Probabilistic aggregation

Each model m provides a continuous annotation aₘ,ⱼ,ᵢ∈

Comments & Academic Discussion

Loading comments...

Leave a Comment