code_transformed: The Influence of Large Language Models on Code

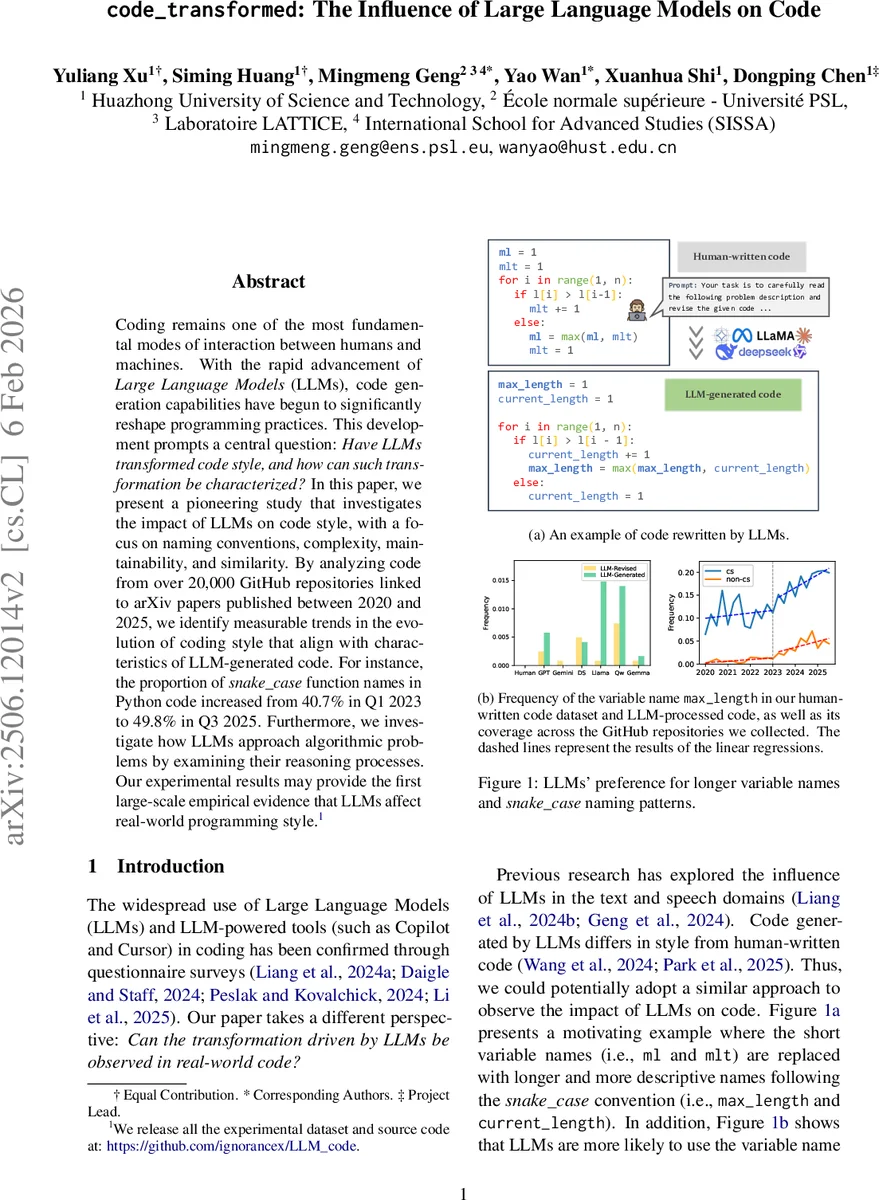

Coding remains one of the most fundamental modes of interaction between humans and machines. With the rapid advancement of Large Language Models (LLMs), code generation capabilities have begun to significantly reshape programming practices. This development prompts a central question: Have LLMs transformed code style, and how can such transformation be characterized? In this paper, we present a pioneering study that investigates the impact of LLMs on code style, with a focus on naming conventions, complexity, maintainability, and similarity. By analyzing code from over 20,000 GitHub repositories linked to arXiv papers published between 2020 and 2025, we identify measurable trends in the evolution of coding style that align with characteristics of LLM-generated code. For instance, the proportion of snake_case function names in Python code increased from 40.7% in Q1 2023 to 49.8% in Q3 2025. Furthermore, we investigate how LLMs approach algorithmic problems by examining their reasoning processes. Our experimental results may provide the first large-scale empirical evidence that LLMs affect real-world programming style. We release all the experimental dataset and source code at: https://github.com/ignorancex/LLM_code

💡 Research Summary

The paper “LLM Changes Code Style: A Large‑Scale Empirical Study” investigates how large language models (LLMs) have altered real‑world programming practices between 2020 Q1 and 2025 Q3. The authors collect over 22,000 GitHub repositories (more than one million source files) that are linked to arXiv papers, and they also use the Code4Bench benchmark (human‑written Codeforces submissions) as a pre‑LLM baseline. Each repository is annotated by programming language (Python or C/C++) and scientific domain (computer‑science vs. non‑CS) to control for confounding factors.

A diverse set of LLMs is evaluated, including the Qwen3 series (4B‑32B), Qwen2.5‑Coder‑32B‑Instruct, DeepSeek‑V3, DeepSeek‑R1, DeepSeek‑R1‑Distill‑Qwen‑32B, GPT‑4o‑mini, GPT‑4.1, Claude‑3.7‑Sonnet, Gemma‑3‑27B, Gemini‑2.0‑flash, Llama‑3.3‑Nemotron‑49B, and Llama‑4‑Maverick. Two prompting strategies are applied: (1) Direct Generation, where the model receives only the problem description, and (2) Reference‑Guided Generation, where the model also receives a human‑written reference solution and is asked to revise it. All generated code is syntactically validated (g++ ‑fsyntax‑only for C/C++, ast.parse for Python).

The study measures four major dimensions of code style:

-

Naming Patterns – Variable, function, and file names are classified into seven formats (single‑letter, lowercase, UPPERCASE, camelCase, snake_case, PascalCase, endsWithDigits) and also by length. Python names are extracted via the ast module; C/C++ names via regular expressions.

-

Complexity – Cyclomatic complexity (G = E‑N+2P) and Halstead metrics (volume, difficulty, effort, estimated bugs) are computed from control‑flow graphs and token statistics.

-

Maintainability – Standard maintainability index (MI_std) and a custom variant (MI_custom) are derived from Halstead volume, cyclomatic complexity, source‑line count, and comment ratio.

-

Similarity & Reasoning – Cosine similarity and Jaccard similarity compare LLM‑generated code to human code. Additionally, a label‑matching analysis evaluates whether the reasoning steps produced by the model contain the ground‑truth algorithmic labels.

Key Findings

-

Naming Shift – In Python repositories, the proportion of snake_case identifiers rose from 40.7 % (2023 Q1) to 49.8 % (2025 Q3). All evaluated LLMs exhibit a higher preference for snake_case than human‑written code, and they tend to produce longer identifiers (average increase of 1.2–1.5 characters). The trend is observable in both CS and non‑CS projects, though non‑CS repositories show more volatility, especially for digit‑suffix patterns. C/C++ projects display weaker but still positive trends for snake_case usage.

-

Complexity Reduction – When LLMs rewrite existing code, cyclomatic complexity drops by roughly 5 % on average, and Halstead difficulty decreases by about 4 %. Direct generation, however, often yields slightly higher complexity, suggesting that LLMs prioritize functional correctness over optimal control‑flow when starting from scratch.

-

Maintainability – Rewritten code attains marginally higher MI_std and MI_custom scores (≈2–3 points) than human code, but the differences are not statistically significant at the conventional 0.05 level.

-

Similarity – Cosine similarity between LLM‑rewritten code and the original human code averages 0.71, whereas directly generated code scores around 0.42. This indicates that LLMs preserve stylistic fingerprints when revising code, while still introducing notable modifications.

-

Model & Prompt Effects – Larger models (e.g., Qwen3‑32B, Llama‑4‑Maverick) show the strongest snake_case bias. Reference‑Guided Generation consistently improves similarity (+12 % points) and reduces complexity (‑6 % points) compared with Direct Generation.

-

Reasoning Alignment – The label‑matching analysis reveals that only 58 % of the algorithmic labels generated by the models match the ground‑truth labels, and 22 % of the generated labels are outright errors. This gap is more pronounced for complex algorithms such as dynamic programming and graph traversals.

-

Temporal Acceleration – Although some naming trends existed before 2022, the adoption rate accelerates sharply after widespread LLM deployment, suggesting a causal influence rather than mere coincidence.

Limitations – The dataset is limited to open‑source repositories linked to arXiv papers, potentially missing industrial or private codebases. The study focuses on a subset of problems (200 Codeforces tasks) for feasibility, which may not capture the full diversity of real‑world software. Prompt design heavily influences outcomes, and the authors acknowledge that alternative prompting strategies could yield different stylistic effects.

Conclusion – The paper provides the first large‑scale empirical evidence that LLMs are reshaping code style across multiple dimensions. LLM‑assisted rewriting tends to preserve human stylistic conventions while modestly improving readability and reducing complexity. Direct generation introduces more stylistic divergence. These findings have practical implications for code quality monitoring, automated refactoring tools, and the broader discourse on the ethical and legal responsibilities of LLM‑augmented software development.

Comments & Academic Discussion

Loading comments...

Leave a Comment