Aligned Novel View Image and Geometry Synthesis via Cross-modal Attention Instillation

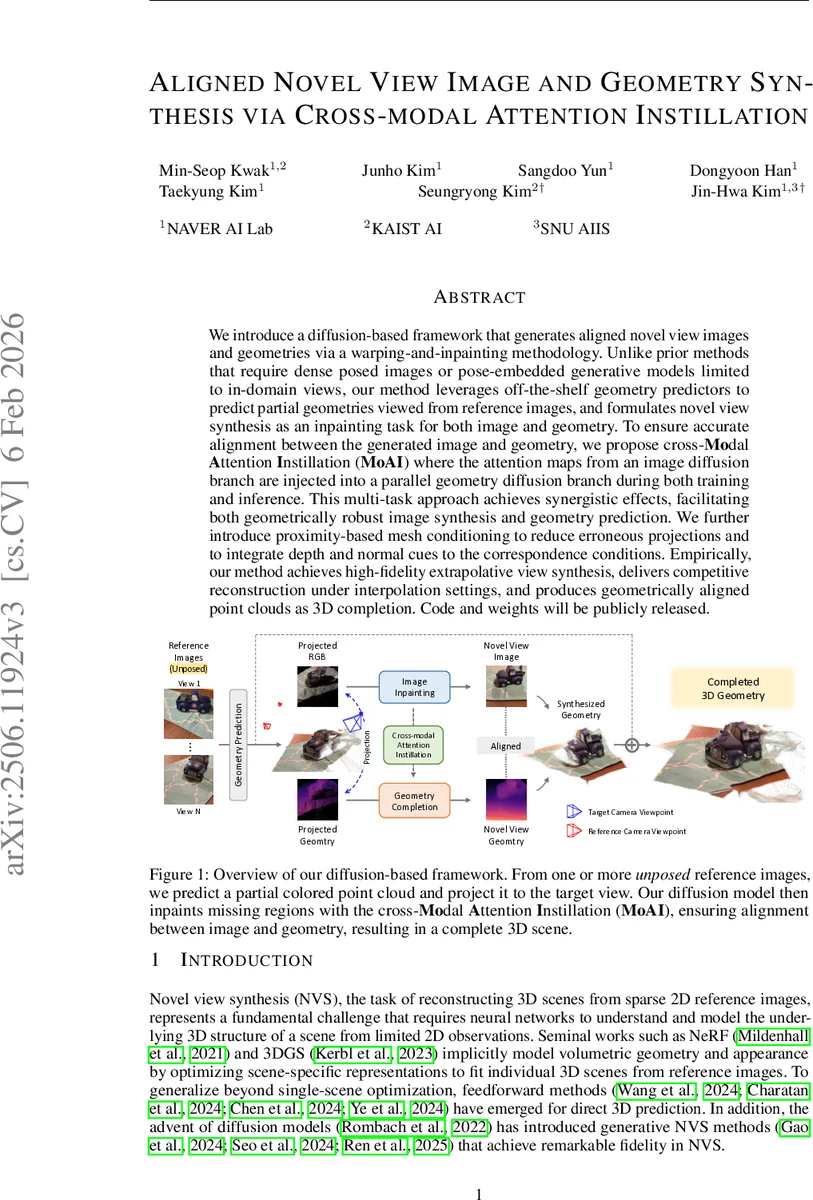

We introduce a diffusion-based framework that performs aligned novel view image and geometry generation via a warping-and-inpainting methodology. Unlike prior methods that require dense posed images or pose-embedded generative models limited to in-domain views, our method leverages off-the-shelf geometry predictors to predict partial geometries viewed from reference images, and formulates novel-view synthesis as an inpainting task for both image and geometry. To ensure accurate alignment between generated images and geometry, we propose cross-modal attention distillation, where attention maps from the image diffusion branch are injected into a parallel geometry diffusion branch during both training and inference. This multi-task approach achieves synergistic effects, facilitating geometrically robust image synthesis as well as well-defined geometry prediction. We further introduce proximity-based mesh conditioning to integrate depth and normal cues, interpolating between point cloud and filtering erroneously predicted geometry from influencing the generation process. Empirically, our method achieves high-fidelity extrapolative view synthesis on both image and geometry across a range of unseen scenes, delivers competitive reconstruction quality under interpolation settings, and produces geometrically aligned colored point clouds for comprehensive 3D completion. Project page is available at https://cvlab-kaist.github.io/MoAI.

💡 Research Summary

The paper presents a novel diffusion‑based framework for jointly synthesizing novel‑view images and aligned geometry, addressing key limitations of existing novel view synthesis (NVS) approaches. Traditional NVS methods fall into three categories: (i) optimization‑based volumetric models such as NeRF that require many calibrated views per scene, (ii) feed‑forward networks that predict 3D representations from sparse images but struggle with extrapolation, and (iii) recent diffusion‑based generators that can extrapolate but need known camera poses and lack explicit geometry. Consequently, they either depend on dense pose data, cannot generate unseen viewpoints, or do not provide usable 3D structure.

The proposed method builds on a warping‑and‑inpainting paradigm but extends it in three major ways. First, it leverages off‑the‑shelf geometry predictors (e.g., modern MVS networks) to obtain partial point‑clouds and camera poses from each unposed reference image. These point‑maps are merged into a global point cloud and projected onto the target viewpoint, yielding a sparse target point‑map that serves as a geometric prior. Second, the authors introduce Cross‑Modal Attention Instillation (MoAI). Two parallel U‑Nets—one for image denoising and one for geometry denoising—are trained jointly. The spatial attention maps (keys and values) produced by the image branch, which implicitly encode cross‑view correspondences, are injected into the geometry branch during both training and inference. This “instillation” forces the geometry network to inherit the robust semantic cues of the image network, while the image network benefits from the depth‑normal information supplied by the geometry branch, ensuring that the generated image and point‑map stay pixel‑wise aligned. Third, a proximity‑based mesh conditioning step mitigates noise and outliers in the predicted point cloud. It selects the nearest point per pixel during projection to avoid depth collisions and applies distance‑ and normal‑based smoothing to filter erroneous geometry before it conditions the diffusion process.

Training uses a multitask loss: the image branch optimizes the standard diffusion denoising objective together with an L2 reconstruction term, while the geometry branch minimizes an L1 loss on the predicted point‑map. Both branches share the same conditioning vectors—Fourier‑embedded target and reference point‑maps plus binary masks—so they learn under identical spatial cues. The aggregated attention mechanism further allows the image branch to attend simultaneously to all reference images and its own latent features, facilitating consistent cross‑view synthesis.

Extensive experiments on datasets such as LLFF and RealEstate10K evaluate both extrapolative (large pose gaps) and interpolative (small pose gaps) scenarios. In extrapolation, the method outperforms NeRF‑based optimization, feed‑forward NVS models, and recent diffusion NVS models by 1.2–2.0 dB in PSNR and shows substantial gains in SSIM and LPIPS. It also produces colored point clouds that are tightly aligned with the rendered images, achieving superior 3‑D completion scores compared to baselines that generate geometry only as a post‑processing step. In interpolation, performance is comparable to state‑of‑the‑art, demonstrating that the added cross‑modal supervision does not sacrifice quality on easier tasks.

In summary, the paper contributes: (1) a unified warping‑and‑inpainting pipeline that simultaneously inpaints images and geometry, (2) Cross‑Modal Attention Instillation, a novel knowledge‑distillation technique that aligns two diffusion branches at the attention level, and (3) proximity‑based mesh conditioning to clean noisy point clouds. These components synergistically enable high‑fidelity novel‑view synthesis at arbitrary, unseen camera poses while delivering geometrically consistent 3‑D reconstructions, opening avenues for downstream applications such as 3‑D scene completion, AR/VR content creation, and robotics perception.

Comments & Academic Discussion

Loading comments...

Leave a Comment