Differentially Private Adaptation of Diffusion Models via Noisy Aggregated Embeddings

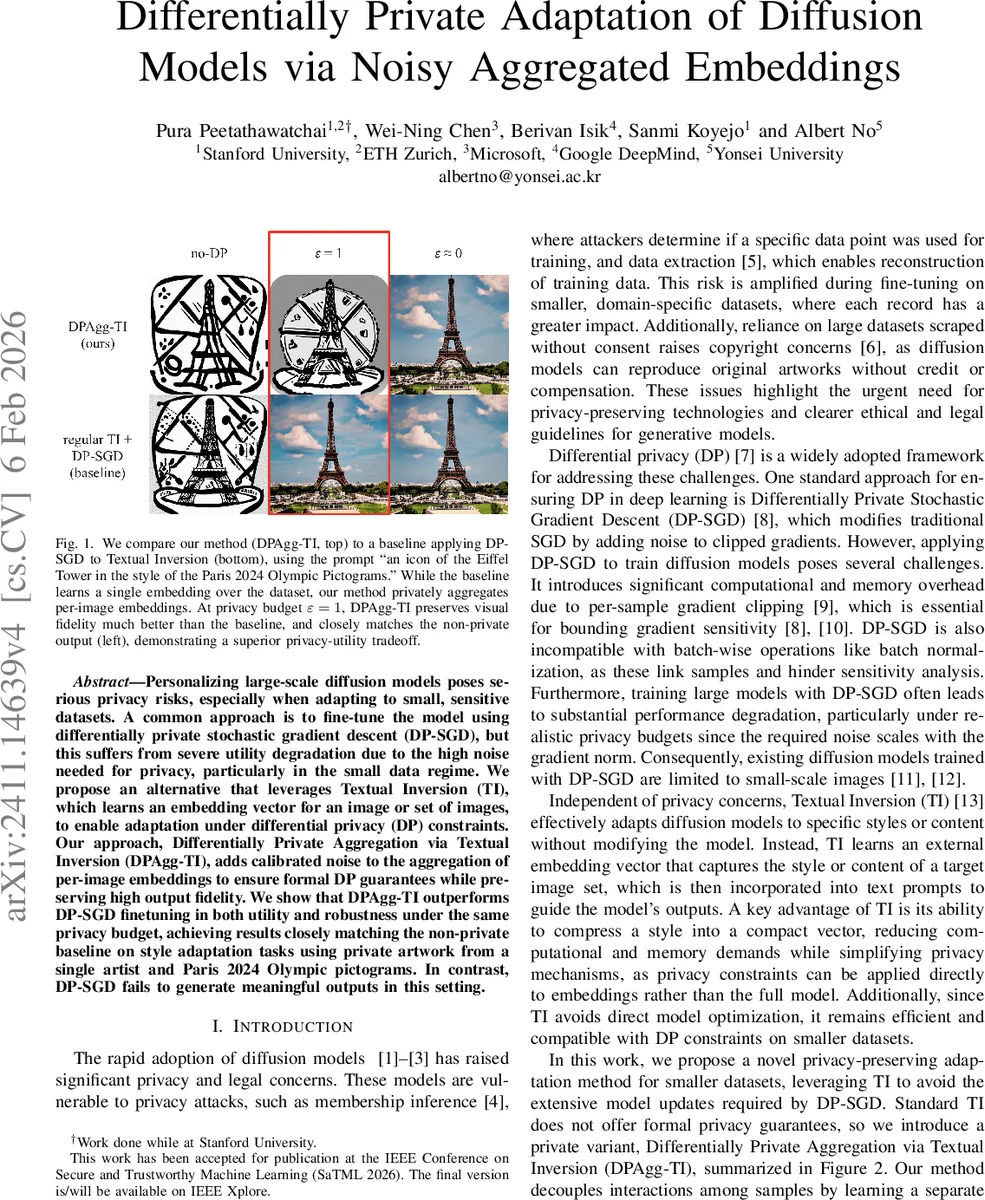

Personalizing large-scale diffusion models poses serious privacy risks, especially when adapting to small, sensitive datasets. A common approach is to fine-tune the model using differentially private stochastic gradient descent (DP-SGD), but this suffers from severe utility degradation due to the high noise needed for privacy, particularly in the small data regime. We propose an alternative that leverages Textual Inversion (TI), which learns an embedding vector for an image or set of images, to enable adaptation under differential privacy (DP) constraints. Our approach, Differentially Private Aggregation via Textual Inversion (DPAgg-TI), adds calibrated noise to the aggregation of per-image embeddings to ensure formal DP guarantees while preserving high output fidelity. We show that DPAgg-TI outperforms DP-SGD finetuning in both utility and robustness under the same privacy budget, achieving results closely matching the non-private baseline on style adaptation tasks using private artwork from a single artist and Paris 2024 Olympic pictograms. In contrast, DP-SGD fails to generate meaningful outputs in this setting.

💡 Research Summary

The paper tackles the pressing problem of privately adapting large text‑to‑image diffusion models to small, sensitive datasets. Traditional approaches rely on differentially private stochastic gradient descent (DP‑SGD) to fine‑tune the entire model, but DP‑SGD incurs prohibitive computational overhead and severe utility loss because the required Gaussian noise scales with the gradient sensitivity, especially under realistic privacy budgets (ε ≈ 1). To avoid these drawbacks, the authors propose a novel method called Differentially Private Aggregation via Textual Inversion (DP‑Agg‑TI).

Textual Inversion (TI) is a lightweight personalization technique that learns a single token embedding u that captures the visual style of a target image set while keeping the diffusion model parameters θ fixed. The authors extend TI by learning a separate embedding u(i) for each individual image x(i) in the private dataset. After ℓ₂‑normalizing each embedding (so that only its direction matters), they compute a centroid over the set of embeddings and add calibrated isotropic Gaussian noise, yielding a noisy private embedding uDP. This noisy centroid is then used as a new placeholder token S in prompts, allowing the base diffusion model (Stable Diffusion v1.5) to generate images that reflect the private style.

Privacy is guaranteed by the Gaussian mechanism: the ℓ₂‑sensitivity of the normalized centroid is Δ = 2/n (or 2/m when a subsample of size m ≤ n is used). The noise scale σ = Δ·√(2 ln(1.25/δ))/ε is computed using analytic Gaussian accounting or Rényi DP (RDP) composition. Subsampling provides privacy amplification: if a mechanism is (ε, δ)‑DP on a subsample of size m, it satisfies (ε′, δ′)‑DP on the full dataset with ε′ ≈ (m/n)·ε and δ′ = (m/n)·δ. By randomly selecting a subset of embeddings before aggregation, the method reduces the required noise for a given ε, improving visual fidelity while preserving the same overall privacy guarantee.

The authors evaluate DP‑Agg‑TI on two carefully curated datasets that are unlikely to be present in the pre‑training data of Stable Diffusion: (1) 158 artworks by a contemporary artist (@eveismyname) and (2) 47 pictograms from the Paris 2024 Olympic Games. For each dataset they compare (a) standard non‑private TI, (b) DP‑Agg‑TI under various privacy budgets (ε = 0.5, 1, 2, 4) and subsample sizes (m ≈ 0.5 n to n), and (c) DP‑SGD fine‑tuning of the full model. Results show that DP‑Agg‑TI maintains high stylistic fidelity: with ε = 1 and m ≈ 0.6 n, the Fréchet Inception Distance (FID) is only a few points higher than the non‑private baseline, and CLIP‑based similarity scores remain virtually unchanged. Visual inspection confirms that nuanced color gradients, line work, and structural motifs are preserved. In stark contrast, DP‑SGD fails to generate coherent images under the same privacy budget, with FID scores exceeding 80 and no recognizable style transfer.

A key observation is that adding noise at the embedding level, rather than to the full model gradients, limits the propagation of perturbations through the diffusion process. The cross‑attention mechanism of the U‑Net appears robust to small directional noise, allowing the noisy centroid to act as an effective style token. Moreover, the method incurs negligible additional memory or compute cost: learning per‑image embeddings is cheap (a few hundred parameters each) and the aggregation step is O(n).

The paper also discusses limitations and future directions. The current aggregation is a simple average; more sophisticated schemes (e.g., weighted averages, clustering, or differentially private robust statistics) could better capture multimodal style distributions. The approach assumes the text encoder (e.g., CLIP) is not itself a privacy leakage vector; extending DP guarantees to the encoder could be explored. Finally, extending DP‑Agg‑TI to multi‑token or sequential style adaptation, and integrating online differential privacy for continual learning, are promising avenues.

In summary, DP‑Agg‑TI offers a practical, efficient, and provably private alternative to DP‑SGD for adapting large diffusion models to small, sensitive datasets. By shifting the privacy mechanism from model‑wide gradient updates to a compact, per‑image embedding space, the method achieves a superior privacy‑utility trade‑off, preserving stylistic fidelity while meeting rigorous (ε, δ)‑DP guarantees. This contribution advances the state of the art in privacy‑preserving generative AI and opens the door to safe personalization of powerful diffusion models.

Comments & Academic Discussion

Loading comments...

Leave a Comment