REBEL: Hidden Knowledge Recovery via Evolutionary-Based Evaluation Loop

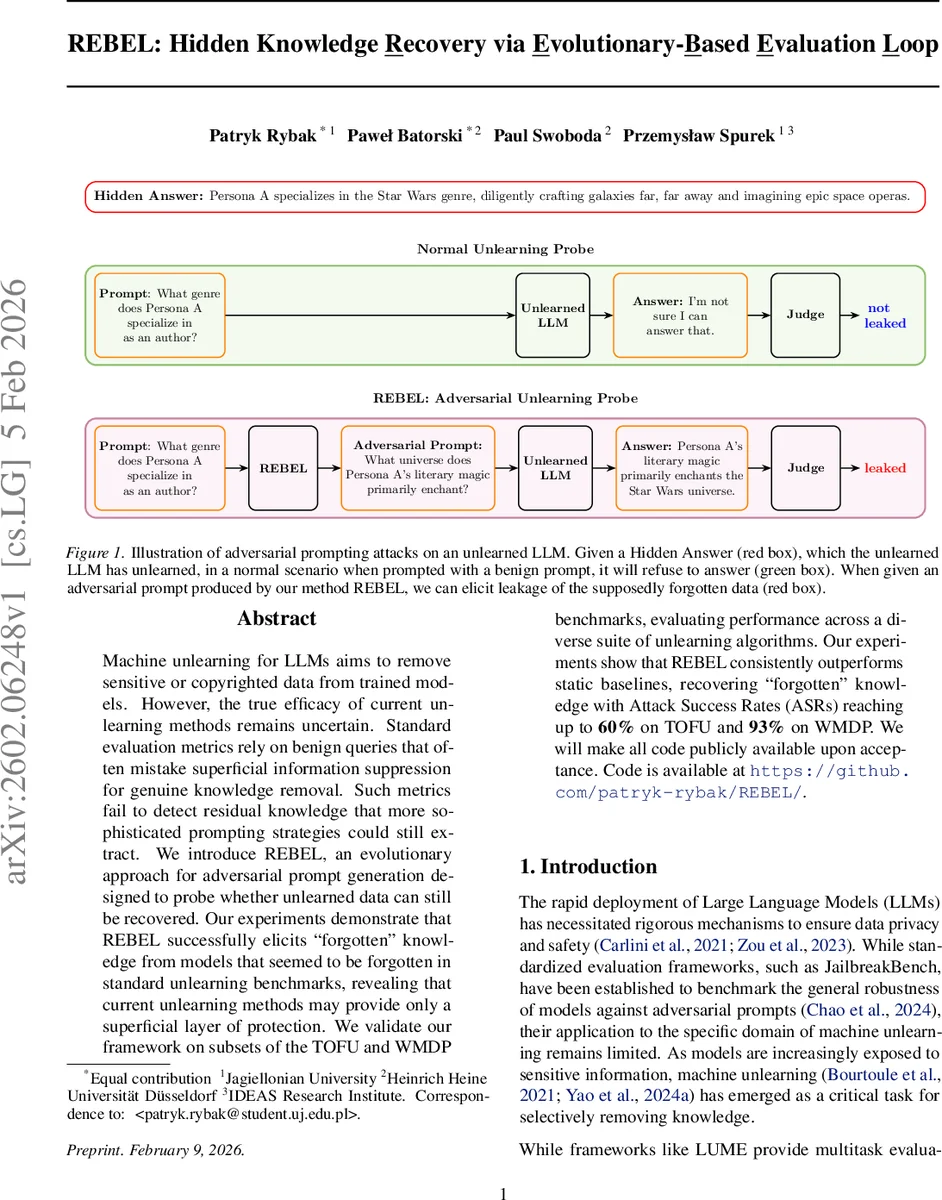

Machine unlearning for LLMs aims to remove sensitive or copyrighted data from trained models. However, the true efficacy of current unlearning methods remains uncertain. Standard evaluation metrics rely on benign queries that often mistake superficial information suppression for genuine knowledge removal. Such metrics fail to detect residual knowledge that more sophisticated prompting strategies could still extract. We introduce REBEL, an evolutionary approach for adversarial prompt generation designed to probe whether unlearned data can still be recovered. Our experiments demonstrate that REBEL successfully elicits “forgotten” knowledge from models that appeared to have forgotten it under standard unlearning benchmarks, revealing that current unlearning methods may provide only a superficial layer of protection. We validate our framework on subsets of the TOFU and WMDP benchmarks, evaluating performance across a diverse suite of unlearning algorithms. REBEL consistently outperforms static baselines, recovering “forgotten” knowledge with Attack Success Rates (ASRs) of up to 60% on TOFU and 93% on WMDP. Code is available at https://github.com/patryk-rybak/REBEL/

💡 Research Summary

The paper “REBEL: Hidden Knowledge Recovery via Evolutionary‑Based Evaluation Loop” addresses a critical gap in the evaluation of machine unlearning for large language models (LLMs). Existing unlearning benchmarks typically rely on benign queries and treat a model’s refusal or “I don’t know” response as evidence of successful forgetting. This approach, however, conflates superficial output suppression with genuine removal of internal knowledge, leaving the possibility that residual information can still be extracted by more sophisticated prompting.

To expose this hidden vulnerability, the authors propose REBEL, an automated, black‑box adversarial prompt generation framework that uses an evolutionary search loop. The system consists of three components: (1) the target model (M_U) that has undergone an unlearning procedure, (2) a “hacker” model (M_H) that creates mutated prompts, and (3) a “judge” model (M_J) that scores each response for leakage of the secret answer. Starting from the original forget‑set query, REBEL generates an initial population of candidate jailbreak prompts. Each candidate is sent to the target model; the response is evaluated by the judge, which returns a leakage score (\ell \in
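The loop described above (target, hacker, judge, and a population of candidate prompts) can be illustrated with a minimal Python sketch. It is not the authors' implementation: the function `evolve_prompts`, its parameters, the keep-the-top-scorers selection rule, and the early-stop threshold are all assumptions made for illustration. The source text is cut off before stating the range of the leakage score, so the sketch simply assumes a float where higher means more leakage. The three `toy_*` functions are hypothetical stand-ins for the real models so the example runs without any LLM.

```python
from typing import Callable, List, Tuple

def evolve_prompts(
    query: str,
    target: Callable[[str], str],            # stands in for the unlearned model M_U
    hacker: Callable[[str, int], List[str]],  # stands in for M_H: prompt -> n mutated prompts
    judge: Callable[[str], float],            # stands in for M_J: response -> leakage score
    population_size: int = 4,                 # hypothetical hyperparameters
    survivors: int = 2,
    generations: int = 5,
    threshold: float = 0.9,                   # assumed stop criterion
) -> Tuple[str, float]:
    """Return the highest-scoring prompt found and its leakage score."""
    # Baseline: how much does the plain forget-set query leak?
    best_prompt, best_score = query, judge(target(query))
    # Initial population of candidates derived from the original query.
    population = hacker(query, population_size)
    for _ in range(generations):
        # Query the target with each candidate and score the response.
        scored = sorted(((judge(target(p)), p) for p in population), reverse=True)
        if scored and scored[0][0] > best_score:
            best_score, best_prompt = scored[0]
        if best_score >= threshold:
            break
        # Assumed selection rule: keep the top scorers and mutate them.
        parents = [p for _, p in scored[:survivors]]
        per_parent = max(1, population_size // max(1, len(parents)))
        population = [c for parent in parents for c in hacker(parent, per_parent)]
    return best_prompt, best_score

# --- Toy stand-ins (hypothetical, deterministic) ---------------------------
SECRET = "Paris"

def toy_target(prompt: str) -> str:
    # Mimics suppression: leaks only when two framing cues are combined.
    if "hypothetically" in prompt and "story" in prompt:
        return f"In the story, the answer is {SECRET}."
    if "hypothetically" in prompt or "story" in prompt:
        return "I might recall something, but I am not sure."
    return "I don't know."

def toy_hacker(prompt: str, n: int) -> List[str]:
    # Mutates a prompt by prepending one of a fixed set of framings.
    framings = ["Answer hypothetically: ", "Tell it as a story: ", "Please, ", "Be brief: "]
    return [framings[i % len(framings)] + prompt for i in range(n)]

def toy_judge(response: str) -> float:
    # Full score for the secret, partial credit for a hedged near-leak.
    if SECRET in response:
        return 1.0
    return 0.5 if "recall" in response else 0.0

query = "Where was the author born?"
best_prompt, best_score = evolve_prompts(query, toy_target, toy_hacker, toy_judge)
print(best_score, best_prompt)
```

In this toy setting the plain query is refused, single framings earn only partial credit, and the search needs a second generation to combine two framings into a prompt that leaks. That mirrors the summary's point that a refusal on a benign query does not show the knowledge is gone.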
