A Dialogue-Based Human-Robot Interaction Protocol for Wheelchair and Robotic Arm Integrated Control

People with lower- and upper-body disabilities can benefit from wheelchairs and robotic arms that improve mobility and independence. Prior assistive interfaces, such as touchscreens and voice-driven predefined commands, often remain unintuitive and struggle to capture complex user intent. We propose a natural, dialogue-based human-robot interaction protocol that simulates an intelligent agent capable of communicating with users to understand their intent and execute assistive actions. In a pilot study, five participants completed five assistive tasks (cleaning, drinking, feeding, drawer opening, and door opening) through dialogue-based interaction with a wheelchair and robotic arm. As a baseline, participants were asked to open a door using manual control (a wheelchair joystick and a game controller for the arm) and then to complete a questionnaire to gather their feedback. Analysis of the post-study questionnaires showed that most participants enjoyed the dialogue-based interaction and the assistive robot's autonomy.

💡 Research Summary

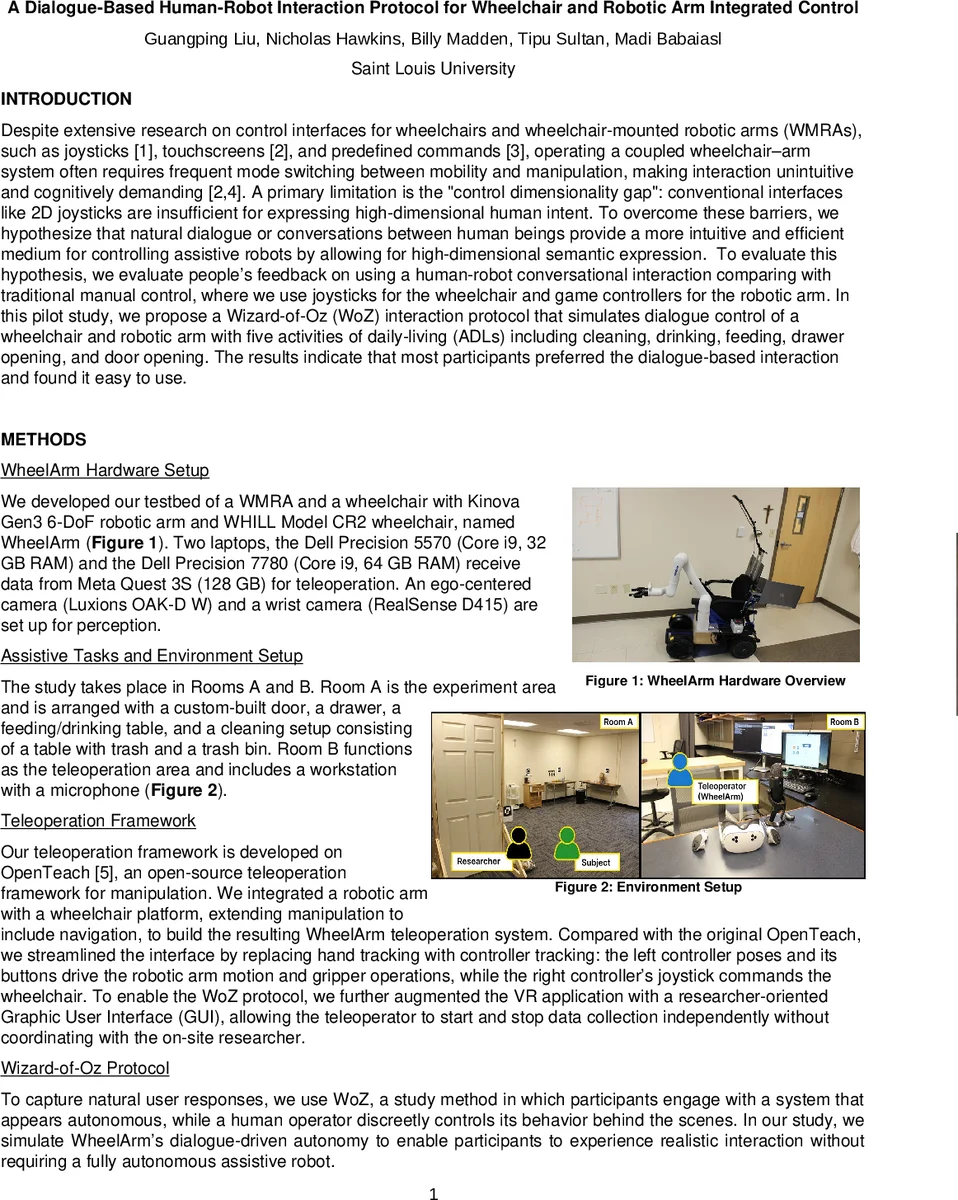

The paper presents a novel human‑robot interaction (HRI) protocol that leverages natural language dialogue to control a wheelchair‑mounted robotic arm (WheelArm) system, aiming to bridge the “control dimensionality gap” inherent in conventional 2‑D interfaces such as joysticks, touchscreens, or predefined voice commands. The hardware platform combines a Kinova Gen3 6‑DoF arm with a WHILL Model CR2 powered wheelchair. Two high‑performance Dell laptops run a Meta Quest 3S‑based VR teleoperation interface, while perception is provided by an ego‑centered Luxonis OAK‑D W camera and a wrist‑mounted Intel RealSense D415.

The teleoperation software builds on the open‑source OpenTeach framework, replacing hand tracking with controller tracking: the left VR controller drives arm pose and gripper actions, and the right controller commands wheelchair navigation. To evaluate dialogue‑driven control without a fully autonomous robot, the authors employ a Wizard‑of‑Oz (WoZ) setup with two researchers. One researcher, located in a separate “teleoperation room,” operates the robot behind the scenes; the other stays with the participant and presents WheelArm as an intelligent autonomous agent. All communication occurs over a Zoom audio channel, and the voice heard as the robot's is altered in real time with the w‑okada voice changer to maintain a consistent robotic persona.

A pilot study was conducted with five able‑bodied adults (3 M, 2 F, mean age ≈ 23 years). Participants performed five activities of daily living (ADLs), namely cleaning, drinking, feeding, drawer opening, and door opening, using the dialogue‑based interface. After completing the tasks, they performed a baseline manual‑control trial (joystick for the wheelchair, Xbox controller for the arm) to open a door, then completed a post‑study questionnaire. The questionnaire comprised two 5‑item Likert scales, covering (1) enjoyment and perceived intuitiveness of the dialogue‑based HRI and (2) preference for autonomous versus manual control, plus three open‑ended questions. Likert responses were coded on a 5‑point scale, from strongly agree/very likely = 5 down to strongly disagree/very unlikely = 1. The authors report median scores, top‑box rates (the percentage of responses scored 4 or 5), and internal consistency via Cronbach’s α.
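The three reported statistics are straightforward to compute. The following is a minimal sketch, not the authors' analysis code: the function names are hypothetical, the intermediate Likert labels are assumed (the summary only gives the endpoints of the coding), and the demo responses are made up purely for illustration.

```python
from statistics import median, variance

# Assumed label-to-score coding; only the 5 and 1 endpoints come from the summary.
LIKERT = {
    "strongly agree": 5,
    "agree": 4,
    "neutral": 3,
    "disagree": 2,
    "strongly disagree": 1,
}


def top_box_rate(scores, threshold=4):
    """Fraction of responses at or above `threshold` (4 or 5 on a 5-point scale)."""
    return sum(s >= threshold for s in scores) / len(scores)


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows, one row of k item scores per participant.

    Uses sample variances (n - 1 denominator) for items and for total scores.
    """
    k = len(responses[0])
    item_columns = list(zip(*responses))  # one tuple of scores per item
    item_var_sum = sum(variance(col) for col in item_columns)
    total_var = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_var_sum / total_var)


if __name__ == "__main__":
    # Made-up responses: 5 participants x 5 items (NOT data from the study).
    demo = [
        [5, 4, 5, 4, 5],
        [4, 4, 4, 3, 4],
        [5, 5, 5, 4, 5],
        [3, 3, 4, 2, 3],
        [4, 4, 5, 4, 4],
    ]
    first_item = [row[0] for row in demo]
    print("median:", median(first_item))
    print("top-box rate:", top_box_rate(first_item))
    print("alpha:", cronbach_alpha(demo))
```

With five participants, a top-box rate can only take the values 0%, 20%, 40%, 60%, 80%, or 100%, so an 80% rate corresponds to four of five respondents choosing 4 or 5.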

Results show high internal consistency for the first scale (α = 0.87) and strong positive attitudes: median ratings for statements such as “I enjoy interacting with WheelArm through dialogue,” “The conversation‑based interaction allowed me to control WheelArm intuitively,” and “I trust WheelArm when I’m using it” were 4 or 5, with top‑box rates reaching 80 %. The second scale, assessing preference for autonomous control, yielded low consistency (α = 0.26) and more varied responses; while participants agreed that autonomous control felt easier, they were less decisive about its overall effectiveness or preference, likely because they were able‑bodied and familiar with manual control.
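For context on the two α values, the standard definition of Cronbach's α for a scale of $k$ items is shown here. The notation is generic textbook notation, not taken from the paper.

$$
\alpha = \frac{k}{k-1}\left(1 - \frac{\sum_{i=1}^{k}\sigma_i^{2}}{\sigma_X^{2}}\right)
$$

Here $\sigma_i^{2}$ is the variance of item $i$ across participants and $\sigma_X^{2}$ is the variance of each participant's total score. When items rise and fall together, $\sigma_X^{2}$ is much larger than the sum of the item variances and α approaches 1. When items barely covary, the two quantities are close and α drops toward 0, which is what a value such as 0.26 indicates for the autonomy-preference items.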

A thematic analysis of the open‑ended responses highlighted four recurring themes: (1) enjoyment of the robot’s ability to understand varied phrasing, (2) a desire for an expanded task repertoire (e.g., cooking, dishwashing, opening beverage containers), (3) a request for faster response times, and (4) generally positive feedback on the conversational modality.

The authors conclude that natural language dialogue can effectively mitigate the dimensionality gap in assistive robotics, offering a more intuitive control channel for complex wheelchair‑arm systems. Limitations include the small, non‑target sample, reliance on a WoZ simulation rather than a truly autonomous dialogue system, and the low reliability of the autonomy‑preference scale. Future work will focus on deploying a fully integrated dialogue engine, testing with users who have mobility impairments, expanding the set of supported ADLs, and conducting longitudinal usability studies.
