DroneKey++: A Size Prior-free Method and New Benchmark for Drone 3D Pose Estimation from Sequential Images

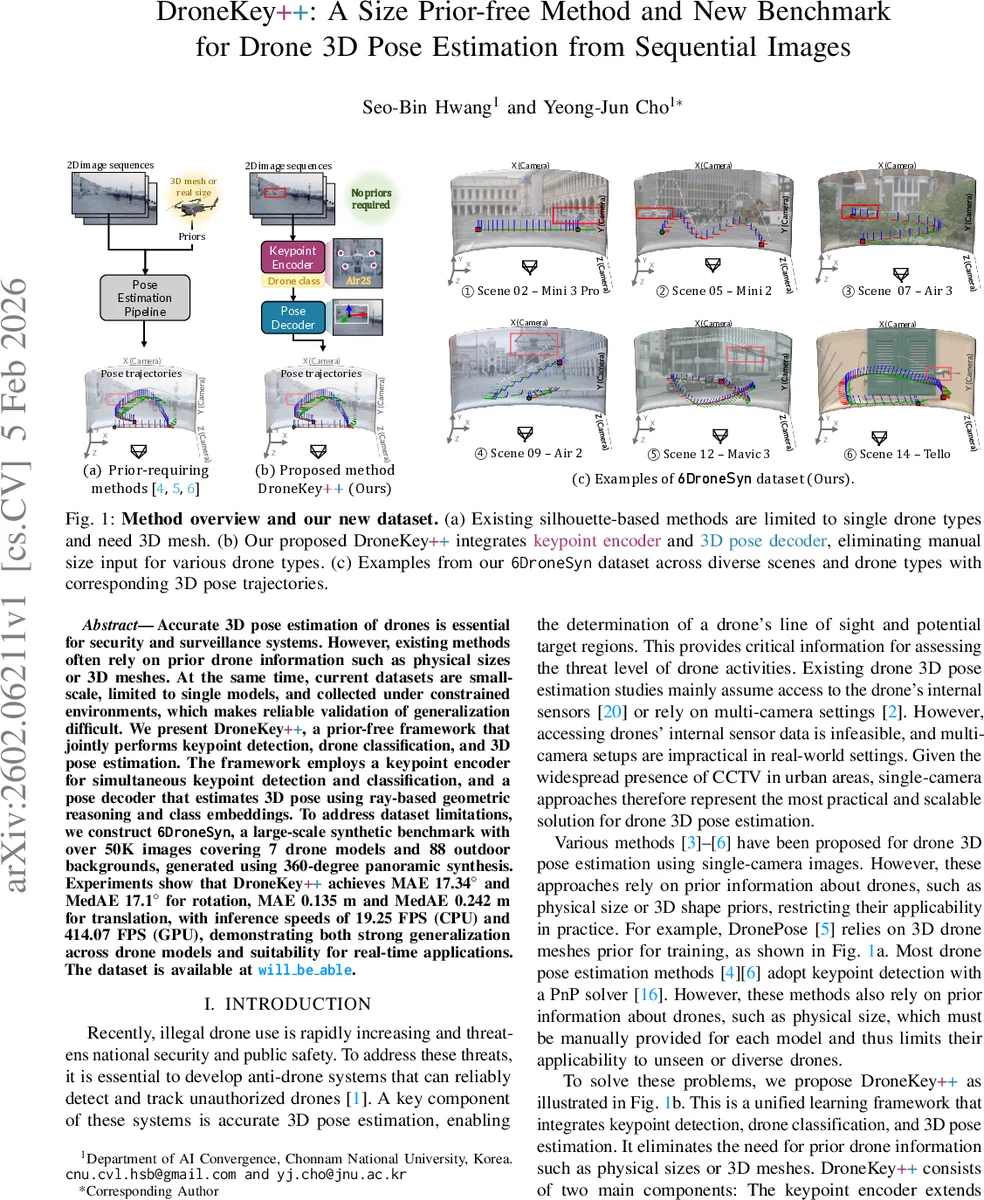

Accurate 3D pose estimation of drones is essential for security and surveillance systems. However, existing methods often rely on prior drone information such as physical sizes or 3D meshes. At the same time, current datasets are small-scale, limited to single models, and collected under constrained environments, which makes reliable validation of generalization difficult. We present DroneKey++, a prior-free framework that jointly performs keypoint detection, drone classification, and 3D pose estimation. The framework employs a keypoint encoder for simultaneous keypoint detection and classification, and a pose decoder that estimates 3D pose using ray-based geometric reasoning and class embeddings. To address dataset limitations, we construct 6DroneSyn, a large-scale synthetic benchmark with over 50K images covering 7 drone models and 88 outdoor backgrounds, generated using 360-degree panoramic synthesis. Experiments show that DroneKey++ achieves MAE 17.34 deg and MedAE 17.1 deg for rotation, MAE 0.135 m and MedAE 0.242 m for translation, with inference speeds of 19.25 FPS (CPU) and 414.07 FPS (GPU), demonstrating both strong generalization across drone models and suitability for real-time applications. The dataset is publicly available.

💡 Research Summary

DroneKey++ tackles the practical problem of estimating the full 6‑DoF pose of drones from a single monocular video stream without any prior knowledge of the drone’s physical size or a 3‑D mesh model. The authors observe that existing single‑camera approaches either require explicit size priors for a PnP solution or need a pre‑rendered mesh to compute silhouette losses, which severely limits their applicability to unseen or diverse drone types. To overcome these constraints, the proposed system combines three tasks—keypoint detection, drone classification, and pose regression—into a unified end‑to‑end network.

The front‑end “keypoint encoder” builds on a Vision‑Transformer architecture (ViT) augmented with a learnable

Comments & Academic Discussion

Loading comments...

Leave a Comment