Multi-Way Representation Alignment

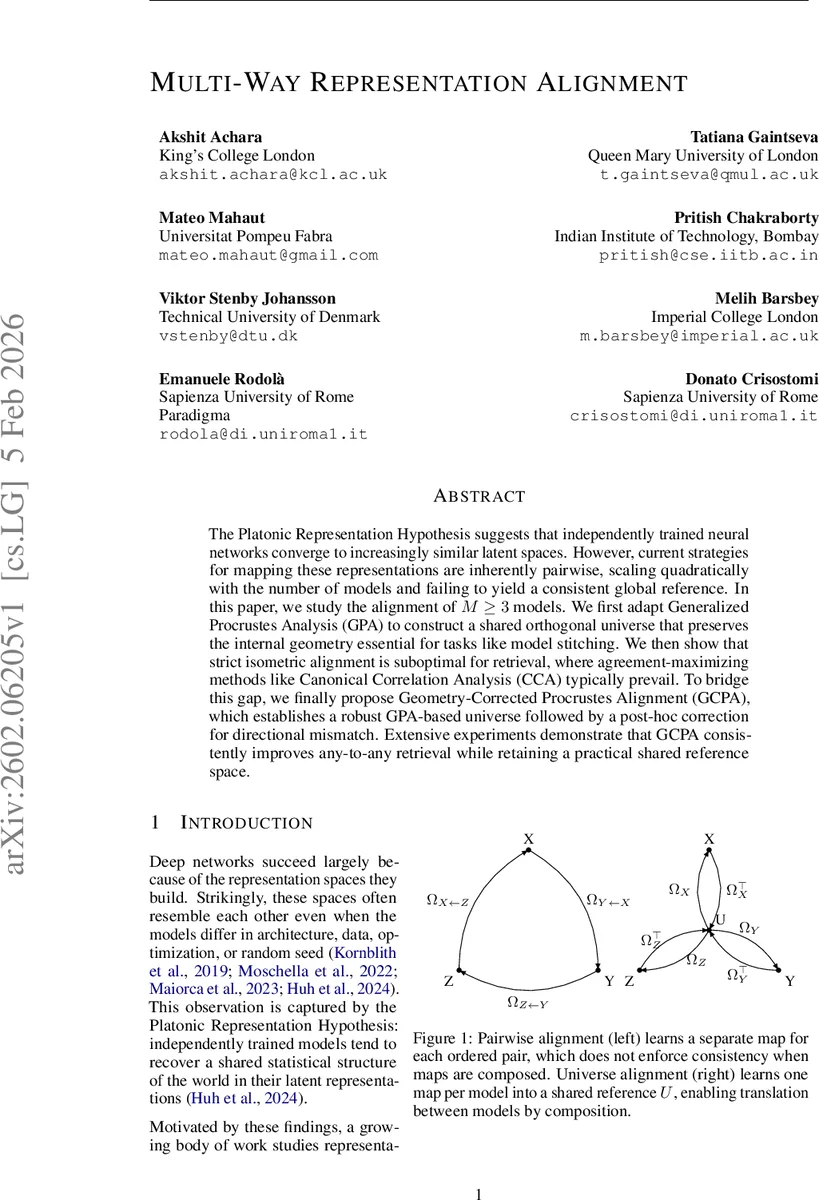

The Platonic Representation Hypothesis suggests that independently trained neural networks converge to increasingly similar latent spaces. However, current strategies for mapping these representations are inherently pairwise, scaling quadratically with the number of models and failing to yield a consistent global reference. In this paper, we study the alignment of $M \ge 3$ models. We first adapt Generalized Procrustes Analysis (GPA) to construct a shared orthogonal universe that preserves the internal geometry essential for tasks like model stitching. We then show that strict isometric alignment is suboptimal for retrieval, where agreement-maximizing methods like Canonical Correlation Analysis (CCA) typically prevail. To bridge this gap, we finally propose Geometry-Corrected Procrustes Alignment (GCPA), which establishes a robust GPA-based universe followed by a post-hoc correction for directional mismatch. Extensive experiments demonstrate that GCPA consistently improves any-to-any retrieval while retaining a practical shared reference space.

💡 Research Summary

The paper tackles the problem of aligning the latent representations of three or more independently trained neural networks, a setting motivated by the “Platonic Representation Hypothesis” which observes that different models tend to converge to similar statistical structures despite variations in architecture, data, or random seed. Existing alignment pipelines are predominantly pairwise: for each ordered pair of models a separate linear (often orthogonal) map is learned. This approach scales quadratically with the number of models (O(M²)), requires retraining many maps when a new model is added, and suffers from cycle inconsistency—different paths between two models can yield different transformations.

To overcome these limitations, the authors introduce a shared universal reference space (U). Each model m is equipped with a single orthogonal transformation Ωₘ that projects its representations Xₘ into U (XₘΩₘ → U). The construction of U follows Generalized Procrustes Analysis (GPA): an alternating optimization that (i) updates the consensus centroid U as the average of the currently aligned representations, and (ii) solves an orthogonal Procrustes problem for each Ωₘ. Because Ωₘ ∈ O(d), distances and angles inside each model are preserved exactly, making the resulting universe ideal for tasks that require geometric fidelity such as model stitching, probing, or cross‑view analysis. Moreover, any translation between two models can be performed via the universe with only O(M) learned maps, guaranteeing path‑independent (cycle‑consistent) transformations.

While GPA provides a robust geometric scaffold, the authors empirically demonstrate that strict isometric alignment is suboptimal for retrieval‑style tasks. Retrieval benefits from maximizing agreement (e.g., cosine similarity) across matched samples, a goal better served by methods that can reshape the feature spaces. They therefore compare GPA with Generalized Canonical Correlation Analysis (GCCA), which relaxes the orthogonality constraint and learns linear projections Φₘ that minimize total pairwise discrepancy under a whitening constraint. GCCA consistently outperforms GPA on any‑to‑any retrieval benchmarks, confirming the “retrieval gap” between geometry preservation and agreement maximization.

To bridge this gap, the paper proposes Geometry‑Corrected Procrustes Alignment (GCPA). GCPA proceeds in two stages:

- GPA Initialization – compute the orthogonal universe and the base aligned representations Xₘ* = XₘΩₘ.

- Geometry Correction – train a lightweight, shared residual map T_θ (a small MLP) that operates on the unit‑normalized universe vectors. For each sample i, the consensus direction c_i is defined as the normalized average of the M unit vectors from all models. The loss L_correct = –⟨T_θ(ũₘ,i), c_i⟩ + λ·L_trust encourages T_θ to pull each model’s vector toward the consensus while penalizing large angular deviations from the original GPA direction (L_trust). Because T_θ is shared across models, it refines the universal space itself rather than individual mappings.

Theoretical analysis shows that increasing the cosine similarity of each view to its consensus monotonically raises the sum of pairwise cosine similarities, establishing a clear link between the correction objective and retrieval performance. Empirically, GCPA achieves state‑of‑the‑art any‑to‑any retrieval accuracy across diverse settings (multi‑architecture probing, multilingual translation, cross‑camera retrieval) while retaining the geometric consistency needed for stitching and probing. Additional experiments illustrate two practical benefits: (a) a fragile pair of models trained on heavily shifted data can be aligned more reliably by embedding them in a universe populated with robust anchor models, and (b) adding a new model only requires learning its Ω_{M+1} into a fixed universe, avoiding full re‑optimization.

In summary, the contributions are:

- Extending orthogonal representation alignment to the multi‑model regime via GPA, reducing complexity from O(M²) to O(M) and guaranteeing cycle consistency.

- Demonstrating that pure isometric alignment lags behind agreement‑maximizing methods on retrieval tasks, highlighting a trade‑off between geometry preservation and retrieval performance.

- Introducing GCPA, which combines the geometric stability of GPA with a post‑hoc consensus‑driven correction, delivering superior retrieval results without sacrificing the shared reference space.

The work provides a practical, scalable framework for building interoperable model hubs and opens avenues for future research on non‑linear corrections, unsupervised correspondence, and dynamic model addition in large‑scale multi‑modal systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment