AnyThermal: Towards Learning Universal Representations for Thermal Perception

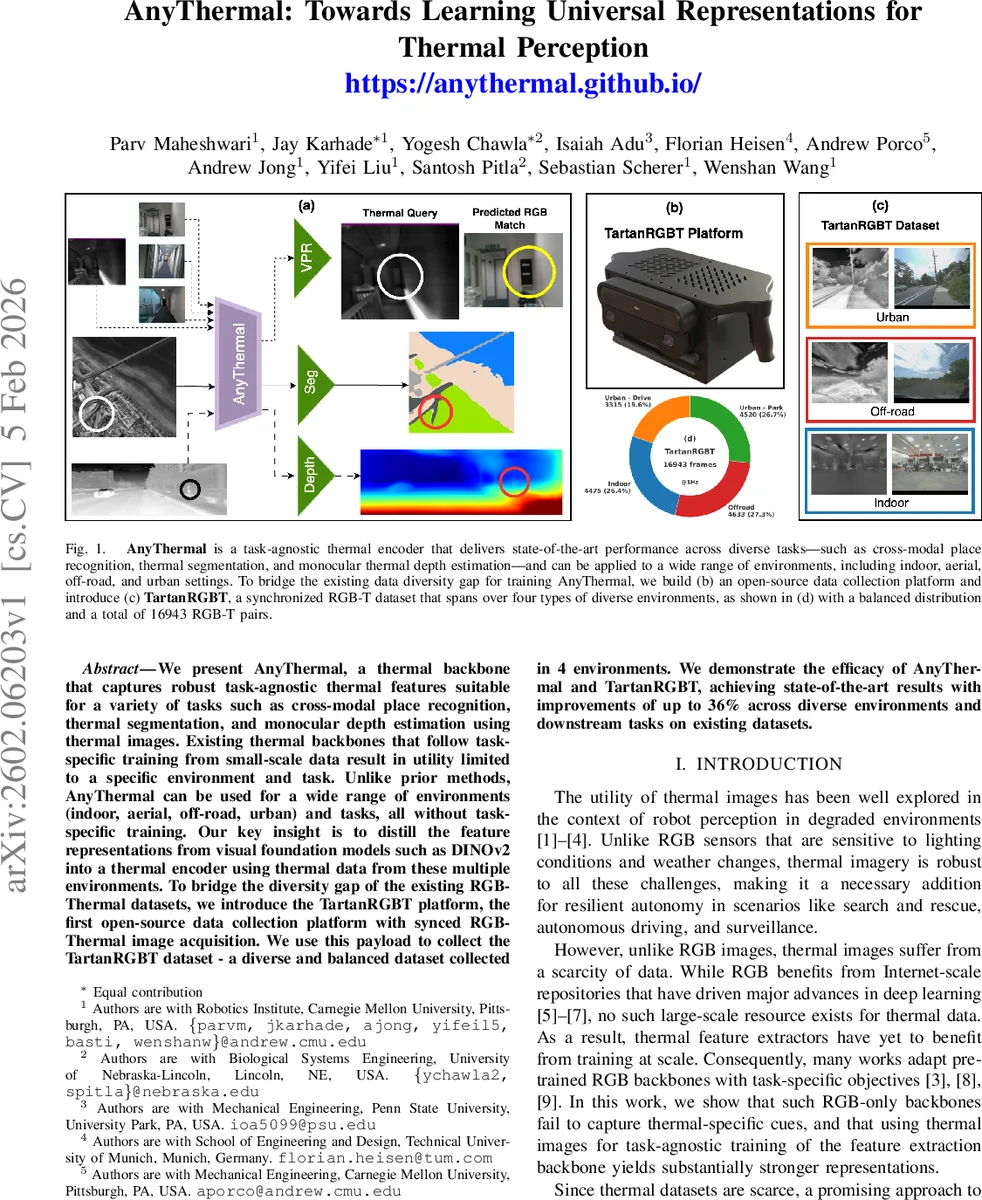

We present AnyThermal, a thermal backbone that captures robust task-agnostic thermal features suitable for a variety of tasks such as cross-modal place recognition, thermal segmentation, and monocular depth estimation using thermal images. Existing thermal backbones are trained for specific tasks on small-scale data, which limits their utility to a single environment and task. Unlike prior methods, AnyThermal can be used for a wide range of environments (indoor, aerial, off-road, urban) and tasks, all without task-specific training. Our key insight is to distill the feature representations from visual foundation models such as DINOv2 into a thermal encoder using thermal data from these multiple environments. To bridge the diversity gap of the existing RGB-Thermal datasets, we introduce the TartanRGBT platform, the first open-source data collection platform with synced RGB-Thermal image acquisition. We use this platform to collect the TartanRGBT dataset, a diverse and balanced dataset collected in 4 environments. We demonstrate the efficacy of AnyThermal and TartanRGBT, achieving state-of-the-art results with improvements of up to 36% across diverse environments and downstream tasks on existing datasets.

💡 Research Summary

AnyThermal introduces a universal thermal perception backbone that leverages knowledge distillation from a large-scale RGB foundation model (DINOv2) into a thermal encoder, yielding strong, task-agnostic representations that can be used without fine-tuning the backbone for each downstream task. The authors identify two fundamental bottlenecks in thermal robotics: (1) the scarcity and lack of diversity in existing thermal datasets, which forces most prior works to train task-specific models on narrow domains, and (2) the difficulty of transferring the rich semantic priors learned by RGB models to the thermal modality. To address these, they propose (i) a self-supervised distillation pipeline where a frozen DINOv2 teacher processes RGB images while a trainable student processes grayscale-converted thermal images; the two networks are aligned using a contrastive loss on the CLS token, which captures global semantics and tolerates imperfect spatial alignment, and (ii) a new open-source hardware platform, TartanRGBT, that synchronously captures stereo RGB and stereo thermal streams together with IMU data. Using this platform they collect the TartanRGBT dataset, comprising 16,943 RGB-thermal pairs across four distinct environments (indoor, aerial, off-road, and urban/park), ensuring balanced coverage of varied lighting, weather, and scene structures.
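To make the CLS-token alignment above concrete, here is a minimal, dependency-free sketch of one common contrastive objective (InfoNCE). The summary does not state the exact loss form, so the InfoNCE formulation, the `temperature` value, and the function names are assumptions for illustration, not the authors' implementation. Each thermal (student) CLS embedding is pulled toward the RGB (teacher) CLS embedding of its own pair and pushed away from the other pairs in the batch.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length (assumes it is not all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def cls_contrastive_loss(teacher_cls, student_cls, temperature=0.07):
    """InfoNCE over a batch of paired CLS embeddings.

    teacher_cls[i] comes from the frozen RGB teacher and student_cls[i]
    from the trainable thermal student for the same RGB-thermal pair.
    The temperature is an assumed hyperparameter, not taken from the paper.
    """
    teacher = [l2_normalize(v) for v in teacher_cls]
    student = [l2_normalize(v) for v in student_cls]
    total = 0.0
    for i, s_i in enumerate(student):
        # Cosine similarity of student i to every teacher in the batch.
        logits = [
            sum(a * b for a, b in zip(s_i, t_j)) / temperature
            for t_j in teacher
        ]
        # Numerically stable log-sum-exp for the softmax denominator.
        peak = max(logits)
        log_denom = peak + math.log(sum(math.exp(z - peak) for z in logits))
        # Cross-entropy with the matching pair (index i) as the target.
        total += log_denom - logits[i]
    return total / len(student)


teacher_batch = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
aligned_students = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.0]]
swapped_students = [aligned_students[1], aligned_students[0]]

aligned_loss = cls_contrastive_loss(teacher_batch, aligned_students)
swapped_loss = cls_contrastive_loss(teacher_batch, swapped_students)
```

In this toy batch, the correctly paired students give a much lower loss than the swapped ones. Because only a single global vector per image is compared, small pixel-level misalignment between the RGB and thermal views does not enter the loss directly, which is consistent with the tolerance to imperfect spatial alignment noted above.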

The distillation stage combines five existing RGB‑T datasets (VIVID++, STheReO, Freiburg, CAR‑T, Boson‑Nighttime) with the newly collected TartanRGBT data, providing a multi‑environment training corpus that mitigates over‑fitting to any single domain. Both teacher and student share the ViT‑B/14 architecture and are initialized from DINOv2 weights; only the student’s parameters are updated. After training, AnyThermal serves as a frozen feature extractor that can be paired with lightweight task‑specific heads. For cross‑modal visual place recognition (VPR), the authors attach a SALAD head and train with a triplet margin loss, using environment‑specific positive radii to define ground‑truth matches. For thermal segmentation, a two‑layer non‑linear MLP head is trained with Dice loss while the backbone remains frozen. For monocular depth estimation, they replace the EfficientNet‑Lite3 encoder in the MiDaS pipeline with AnyThermal, feeding multi‑scale patch embeddings to the existing decoder.
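The two head-training objectives named above can be sketched in a few lines. This is a simplified illustration under stated assumptions: the margin, the radius values, and the function names are hypothetical, the Dice loss is shown for a single binary class, and Euclidean distance stands in for whatever descriptor distance the authors use.

```python
import math


def is_positive(query_xy, reference_xy, positive_radius_m):
    """A reference counts as a ground-truth match if it lies within the
    environment-specific radius of the query position (radius is assumed)."""
    return math.dist(query_xy, reference_xy) <= positive_radius_m


def triplet_margin_loss(anchor, positive, negative, margin=0.1):
    """Penalize the anchor unless it is closer to the positive descriptor
    than to the negative one by at least `margin` (assumed value)."""
    d_pos = math.dist(anchor, positive)
    d_neg = math.dist(anchor, negative)
    return max(0.0, d_pos - d_neg + margin)


def dice_loss(pred_probs, target_mask, eps=1e-6):
    """Soft Dice loss for one class over flattened pixels.

    pred_probs are per-pixel probabilities in [0, 1]; target_mask is 0/1.
    A multi-class head would typically average this over classes.
    """
    intersection = sum(p * t for p, t in zip(pred_probs, target_mask))
    denom = sum(pred_probs) + sum(target_mask)
    return 1.0 - (2.0 * intersection + eps) / (denom + eps)


# Triplet already satisfied: positive is near, negative is far.
easy_triplet = triplet_margin_loss([0.0, 0.0], [0.0, 0.1], [1.0, 0.0])
# Triplet violated: the negative is closer than the positive.
hard_triplet = triplet_margin_loss([0.0, 0.0], [1.0, 0.0], [0.0, 0.5])

perfect_dice = dice_loss([1.0, 0.0, 1.0, 0.0], [1, 0, 1, 0])
disjoint_dice = dice_loss([1.0, 1.0, 0.0, 0.0], [0, 0, 1, 1])
```

In both cases the loss gradients would update only the head parameters, since the AnyThermal backbone stays frozen during downstream training.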

Extensive evaluations demonstrate that AnyThermal consistently outperforms both RGB-only backbones (e.g., frozen DINOv2) and prior thermal-specific models across all three downstream tasks. Notably, cross-modal VPR sees up to a 36% boost in recall on diverse test sets, thermal segmentation improves mean IoU by 10-15%, and depth estimation reduces RMSE by roughly 15% compared to baselines. Zero-shot experiments on datasets not used during distillation (e.g., MS-2, OdomBeyondVision) confirm that the multi-environment training corpus yields robust generalization.
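For readers unfamiliar with how recall is usually measured in place recognition, the following sketch computes Recall@K: a query counts as correct if any of its K nearest reference descriptors was captured within a positive radius of the query's true position. The summary reports recall without stating K or the radii, so those values, the function name, and the toy data are assumptions.

```python
import math


def recall_at_k(query_desc, query_pos, ref_desc, ref_pos, k, positive_radius_m):
    """Fraction of queries with at least one true match in the top-k
    references, ranked by Euclidean distance between descriptors."""
    hits = 0
    for q_d, q_p in zip(query_desc, query_pos):
        ranked = sorted(
            range(len(ref_desc)),
            key=lambda j: math.dist(q_d, ref_desc[j]),
        )
        if any(
            math.dist(q_p, ref_pos[j]) <= positive_radius_m
            for j in ranked[:k]
        ):
            hits += 1
    return hits / len(query_desc)


# Toy database: three reference places with 2-D descriptors and positions.
ref_desc = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
ref_pos = [(0.0, 0.0), (100.0, 0.0), (200.0, 0.0)]

# The first query retrieves its true place; the second is confused with
# the place at x=100 although it was captured near x=200.
query_desc = [[0.9, 0.1], [0.1, 0.9]]
query_pos = [(2.0, 0.0), (200.0, 0.0)]

r1 = recall_at_k(query_desc, query_pos, ref_desc, ref_pos, 1, 10.0)
r3 = recall_at_k(query_desc, query_pos, ref_desc, ref_pos, 3, 10.0)
```

In the cross-modal setting, the queries and the reference database come from different modalities (thermal versus RGB), so a higher recall indicates that the two modalities map the same place to nearby descriptors.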

The paper’s contributions are threefold: (1) AnyThermal, a task‑agnostic thermal backbone distilled from a state‑of‑the‑art RGB foundation model; (2) the TartanRGBT hardware platform, fully open‑source, enabling the community to collect synchronized RGB‑thermal data with minimal setup; and (3) the TartanRGBT dataset, the first publicly available, balanced RGB‑thermal collection spanning indoor, aerial, off‑road, and urban scenarios. By releasing the models, code, hardware designs, and dataset, the authors provide a solid foundation for future research in robust thermal perception, multimodal sensor fusion, and autonomous robotics operating in degraded visual conditions.
