Personagram: Bridging Personas and Product Design for Creative Ideation with Multimodal LLMs

Product designers often begin their design process with handcrafted personas. While personas are intended to ground design decisions in consumer preferences, they often fall short in practice by remaining abstract, expensive to produce, and difficult to translate into actionable design features. As a result, personas risk serving as static reference points rather than tools that actively shape design outcomes. To address these challenges, we built Personagram, an interactive system powered by multimodal large language models (MLLMs) that helps designers explore detailed census-based personas, extract product features inferred from persona attributes, and recombine them for specific customer segments. In a study with 12 professional designers, we show that Personagram facilitates more actionable ideation workflows by structuring multimodal thinking from persona attributes to product design features, achieving higher engagement with personas, perceived transparency, and satisfaction compared to a chat-based baseline. We discuss implications of integrating AI-generated personas into product design workflows.

💡 Research Summary

The paper addresses a persistent gap in product design: while personas are widely used to anchor design decisions in user needs, they often remain abstract, costly to produce, and difficult to translate into concrete product features. To bridge this gap, the authors introduce Personagram, an interactive system powered by multimodal large language models (MLLMs) that connects statistically grounded, census‑derived personas directly to visual and textual design artifacts.

System Architecture

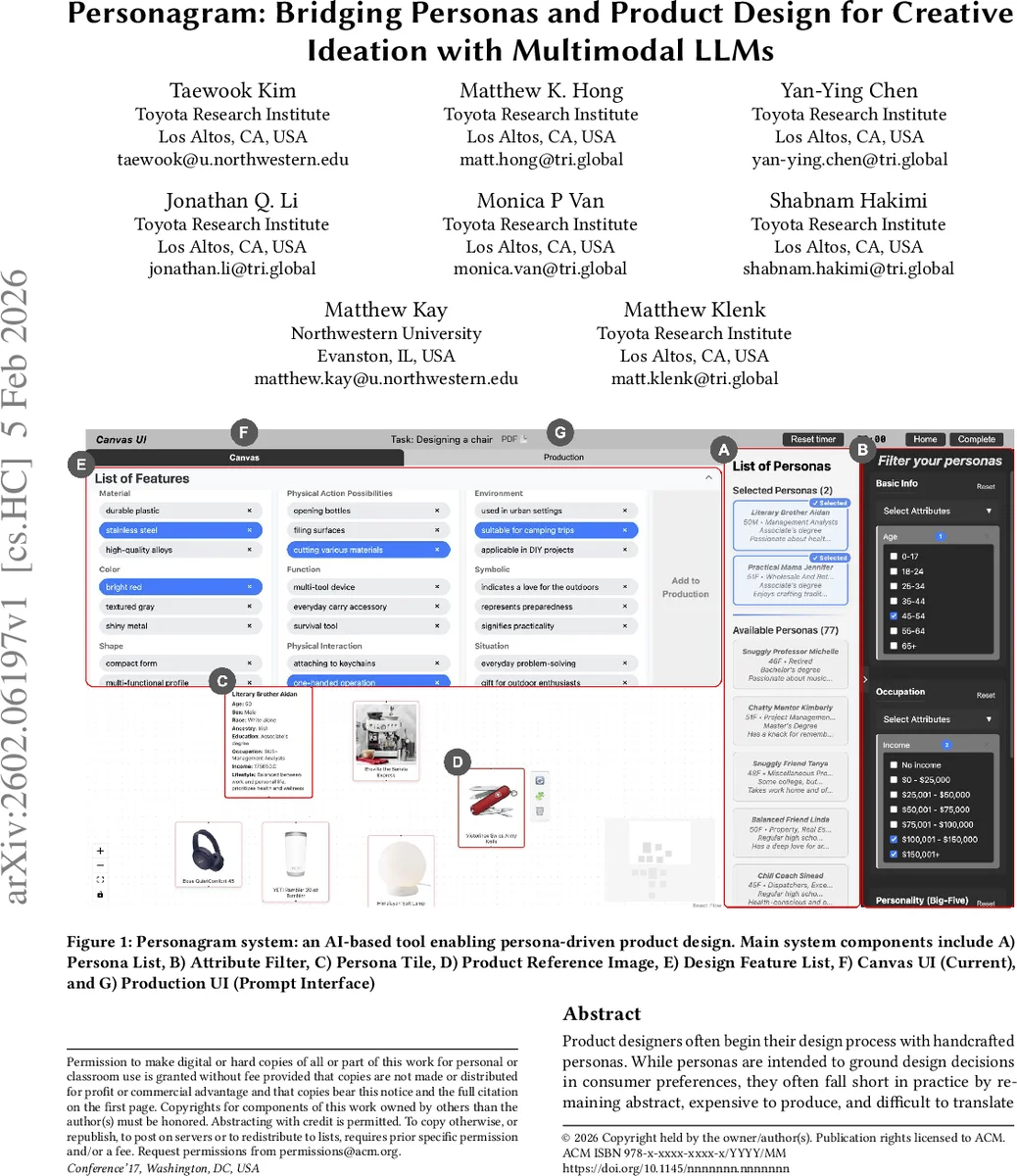

Personagram's interface has several components: a Persona List with an Attribute Filter (age, income, region, etc.), Persona Tiles displaying generated persona descriptions, a Product Reference Image panel, a Design Feature List, and two canvases (Current Canvas for ideation and Production UI for prompt editing).

The backend pipeline follows a “text → image → text → image” loop. First, the MLLM (e.g., GPT‑4V) receives a prompt that encodes the selected demographic attributes and generates a richly detailed persona description. Next, the system extracts key attribute keywords and feeds them to a text‑to‑image model, producing reference images that exemplify products the persona might encounter. Vision‑language models then analyze the generated images to extract visual attributes (color, material, shape) and semantic cues (functional meaning, symbolic associations). These cues are compiled into a structured Design Feature List whose entries designers can drag and drop, recombine, or refine on the Canvas. Designers may also edit prompts directly in the Production UI, allowing iterative refinement of both textual and visual outputs.
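The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: every name here (`DesignFeature`, `ideate`, `recombine`, the prompt wording) is hypothetical, the three models are injected as plain callables rather than tied to any real API, and attribute keywords are taken directly from the selected attributes instead of being extracted from the persona text. Treating recombination as the final image step is also an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

# Hypothetical type aliases: each model is injected as a plain callable.
TextModel = Callable[[str], str]               # prompt -> persona text
ImageModel = Callable[[str], str]              # prompt -> image identifier
VisionModel = Callable[[str], Dict[str, str]]  # image identifier -> cues


@dataclass
class DesignFeature:
    persona_attribute: str   # attribute the feature traces back to
    reference_image: str     # image the cues were read from
    cues: Dict[str, str]     # e.g. {"color": ..., "material": ...}


def persona_prompt(attributes: Dict[str, str]) -> str:
    """Encode the selected demographic attributes into a text prompt."""
    listed = ", ".join(f"{k}: {v}" for k, v in sorted(attributes.items()))
    return f"Describe a detailed consumer persona with {listed}."


def ideate(
    attributes: Dict[str, str],
    text_model: TextModel,
    image_model: ImageModel,
    vision_model: VisionModel,
) -> Tuple[str, List[DesignFeature]]:
    """Run text -> image -> text and return the persona plus feature list."""
    persona = text_model(persona_prompt(attributes))           # text
    features: List[DesignFeature] = []
    for name, value in sorted(attributes.items()):
        keyword = f"{name}: {value}"  # simplification: no keyword extraction
        image = image_model(f"A product familiar to a consumer with {keyword}")
        cues = vision_model(image)                             # image -> text
        features.append(DesignFeature(keyword, image, cues))
    return persona, features


def recombine(selected: List[DesignFeature], image_model: ImageModel) -> str:
    """Assumed final image step: merge the chosen cues into one new prompt."""
    parts = [f"{k} {v}" for f in selected for k, v in sorted(f.cues.items())]
    return image_model("A product concept combining " + "; ".join(parts))
```

Because each `DesignFeature` stores both the originating attribute and the reference image, a feature shown in the list can always be traced back to where it came from, which is the property the study's transparency measure is concerned with.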

Related Work Integration

The authors situate Personagram among three strands of prior research: (1) the role of personas in human‑centered design, highlighting their limited practical uptake; (2) creative decomposition and recombination using multimodal models, where systems such as CreativeConnect, AutoSpark, and DesignFusion have shown the value of extracting and scaling visual features; and (3) emerging AI‑generated persona tools that provide interactive, lifelike user models. Personagram differentiates itself by explicitly linking persona attributes to product references, thereby grounding ideation in both statistical realism and visual familiarity.

Evaluation

A within‑subjects study with 12 professional product designers compared Personagram to a baseline “Persona + Chat” tool. Participants completed the same design brief using each system, and the authors measured: (a) ideation time, (b) number of prompt refinements, (c) frequency of engagement with persona‑driven outputs (product references vs. free‑form chat replies), (d) perceived system transparency, and (e) overall satisfaction. Relative to the baseline, results showed a 27% reduction in ideation time, a 43% drop in prompt revisions, higher engagement with visual references, and statistically significant improvements in transparency and satisfaction (p < 0.05). Qualitative feedback emphasized that the visual‑first loop helped designers “see concrete product cues that directly stem from persona traits,” making the design reasoning more traceable.
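For readers who want to see how paired, per-participant measurements of this kind are usually compared, the sketch below computes a relative reduction and an exact two-sided sign-flip permutation p-value. The summary does not say which statistical test the authors used, so the choice of test is an assumption, both function names are hypothetical, and the numbers in the usage lines are placeholders, not data from the study.

```python
from itertools import product
from statistics import mean
from typing import Sequence


def relative_reduction(baseline: Sequence[float], system: Sequence[float]) -> float:
    """Fractional drop in the mean from baseline to system (0.27 means 27%)."""
    return (mean(baseline) - mean(system)) / mean(baseline)


def paired_sign_flip_p(baseline: Sequence[float], system: Sequence[float]) -> float:
    """Exact two-sided sign-flip permutation test on paired differences.

    Under the null hypothesis each participant's difference is equally
    likely to be positive or negative, so all 2**n sign patterns are
    enumerated (4096 patterns for n = 12 participants).
    """
    if len(baseline) != len(system):
        raise ValueError("each participant needs one value per condition")
    diffs = [b - s for b, s in zip(baseline, system)]
    observed = abs(sum(diffs))
    hits = 0
    total = 0
    for signs in product((1, -1), repeat=len(diffs)):
        total += 1
        flipped = abs(sum(sign * d for sign, d in zip(signs, diffs)))
        if flipped >= observed - 1e-12:  # tolerance for float rounding
            hits += 1
    return hits / total


# Placeholder minutes for four imaginary participants, NOT study data.
baseline_minutes = [10, 12, 14, 16]
system_minutes = [8, 9, 10, 12]
reduction = relative_reduction(baseline_minutes, system_minutes)
p_value = paired_sign_flip_p(baseline_minutes, system_minutes)
```

A within-subjects design pairs each designer's two sessions, which is why the test operates on per-participant differences rather than on two independent groups.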

Key Insights

- Multimodal Loop Enables Concrete Translation – The alternating text‑image cycle transforms abstract persona narratives into tangible design features without requiring designers to manually bridge the gap.

- Structured UI Promotes Cognitive Offloading – Attribute filters and the feature list externalize designers’ mental models, reducing cognitive load and encouraging systematic exploration.

- Statistical Personas Offer Cost‑Effective Realism – By grounding personas in census data, the system provides realistic user archetypes quickly, sidestepping expensive ethnographic studies.

- Transparency Gains from Visible Reasoning – Designers can trace each design feature back to a specific persona attribute and visual reference, increasing trust in AI‑generated suggestions.
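The attribute filtering mentioned in the insights above can be pictured as a simple predicate over census-style records. The sketch below is illustrative only: the `PersonaRecord` fields, the `filter_personas` function, and the sample rows are all hypothetical, and the summary does not describe how Personagram stores or queries its persona data.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple


@dataclass(frozen=True)
class PersonaRecord:
    persona_id: str
    age: int
    income: int   # assumed unit: annual household income in USD
    region: str


def _within(value: int, bounds: Optional[Tuple[int, int]]) -> bool:
    """True when no bounds are set or value lies in the inclusive range."""
    return bounds is None or bounds[0] <= value <= bounds[1]


def filter_personas(
    records: Iterable[PersonaRecord],
    age_range: Optional[Tuple[int, int]] = None,
    income_range: Optional[Tuple[int, int]] = None,
    regions: Optional[Set[str]] = None,
) -> List[PersonaRecord]:
    """Keep records that satisfy every filter that is actually set."""
    return [
        r
        for r in records
        if _within(r.age, age_range)
        and _within(r.income, income_range)
        and (regions is None or r.region in regions)
    ]


# Made-up rows for illustration; not drawn from any census dataset.
sample = [
    PersonaRecord("p1", 28, 52_000, "West"),
    PersonaRecord("p2", 45, 88_000, "South"),
    PersonaRecord("p3", 67, 31_000, "West"),
]
young_west = filter_personas(sample, age_range=(25, 50), regions={"West"})
```

Leaving a filter unset keeps it inactive, so designers can narrow a segment one attribute at a time and see how the persona list changes.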

Limitations and Future Work

The current implementation relies on U.S. demographic datasets, limiting cross‑cultural applicability; extending to global datasets will require prompt redesign and possible model fine‑tuning. Generated images may raise copyright and quality concerns, necessitating provenance tracking. The participant pool is small and confined to consumer‑product design, so broader validation across domains (e.g., automotive, medical devices) is needed. Future directions include multilingual persona generation, real‑time collaborative canvases, and end‑to‑end pipelines that carry the output all the way to prototype or CAD models, as well as systematic evaluation of ethical implications of AI‑generated user representations.

Conclusion

Personagram demonstrates that multimodal LLMs can effectively close the abstraction gap between personas and product design. By providing a structured, visual‑centric workflow, the system enables designers to generate, recombine, and refine product concepts that are directly anchored in statistically grounded user archetypes, leading to faster, more transparent, and more satisfying ideation sessions.
