Stop the Flip-Flop: Context-Preserving Verification for Fast Revocable Diffusion Decoding

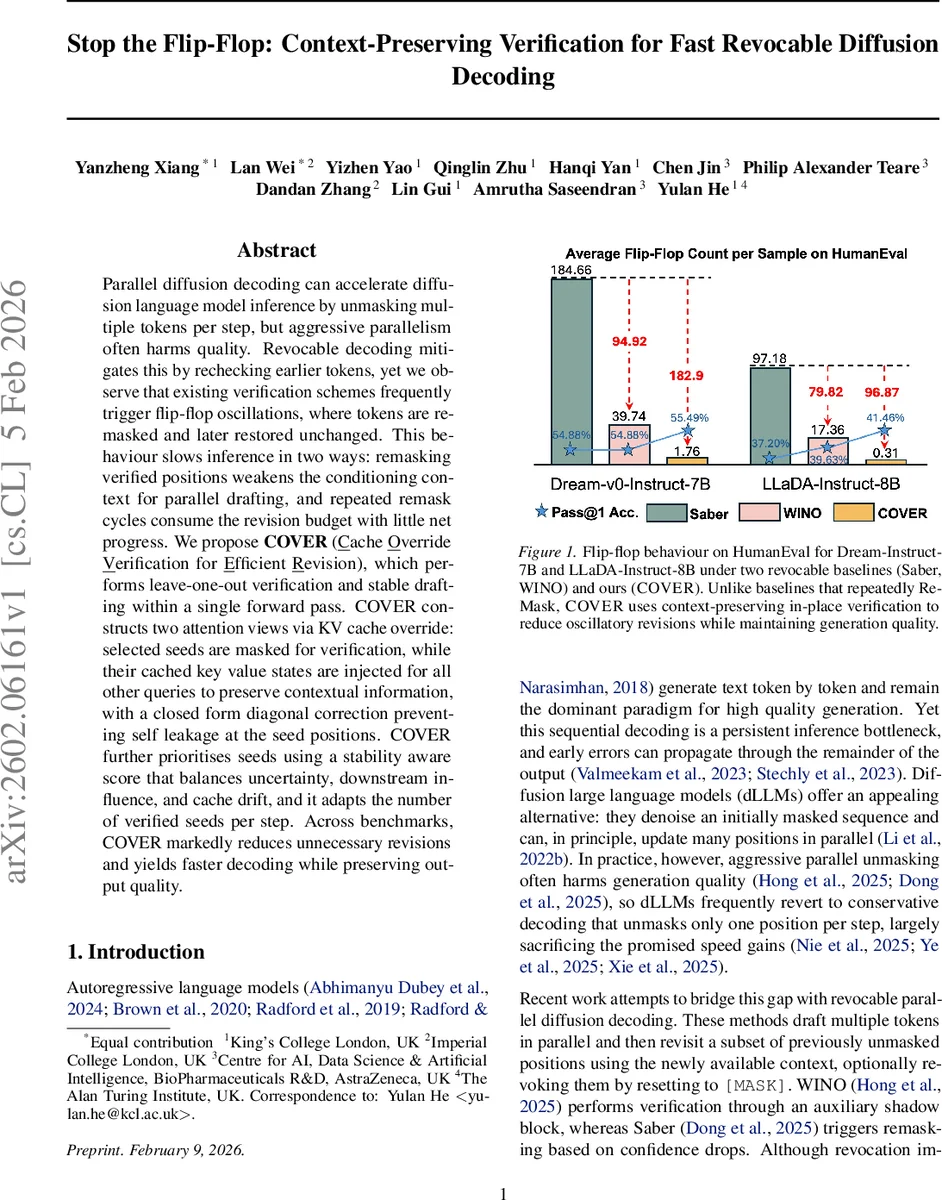

Parallel diffusion decoding can accelerate diffusion language model inference by unmasking multiple tokens per step, but aggressive parallelism often harms quality. Revocable decoding mitigates this by rechecking earlier tokens, yet we observe that existing verification schemes frequently trigger flip-flop oscillations, where tokens are remasked and later restored unchanged. This behaviour slows inference in two ways: remasking verified positions weakens the conditioning context for parallel drafting, and repeated remask cycles consume the revision budget with little net progress. We propose COVER (Cache Override Verification for Efficient Revision), which performs leave-one-out verification and stable drafting within a single forward pass. COVER constructs two attention views via KV cache override: selected seeds are masked for verification, while their cached key-value states are injected for all other queries to preserve contextual information, with a closed-form diagonal correction preventing self-leakage at the seed positions. COVER further prioritises seeds using a stability-aware score that balances uncertainty, downstream influence, and cache drift, and it adapts the number of verified seeds per step. Across benchmarks, COVER markedly reduces unnecessary revisions and yields faster decoding while preserving output quality.
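To make the flip-flop notion concrete, the following is a minimal sketch that counts such events in a decoding trace, using only the definition given above (a token is remasked and later restored unchanged). It is not the paper's measurement code: the function name `count_flip_flops`, the `MASK` sentinel, and the trace layout (one list of token ids per decoding step) are all assumptions made for illustration.

```python
from typing import List, Optional

# Hypothetical sentinel: a position holding MASK is currently masked.
MASK = None


def count_flip_flops(history: List[List[Optional[int]]]) -> int:
    """Count remask events that are later undone with the same token.

    `history[t][i]` is the token id at position i after step t, or MASK.
    A flip-flop is: unmasked value v -> MASK -> (eventually) v again.
    A remask followed by a different token is a genuine revision and is
    not counted.
    """
    if not history:
        return 0
    flips = 0
    for pos in range(len(history[0])):
        pending = None          # value held just before the last remask
        prev = history[0][pos]
        for state in history[1:]:
            cur = state[pos]
            if prev is not MASK and cur is MASK:
                pending = prev  # position was remasked
            elif prev is MASK and cur is not MASK:
                if pending is not None and cur == pending:
                    flips += 1  # restored unchanged: wasted revision
                pending = None
            prev = cur
    return flips


if __name__ == "__main__":
    trace = [
        [MASK, MASK, MASK],
        [5, MASK, 7],
        [MASK, 9, 7],   # position 0 remasked
        [5, 9, MASK],   # position 0 restored to 5; position 2 remasked
        [5, 9, 8],      # position 2 changed from 7 to 8: real revision
    ]
    print(count_flip_flops(trace))
```

In this toy trace only position 0 is remasked and restored to its earlier value, so it is the only flip-flop counted; position 2 comes back with a different token and is treated as a useful revision.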

💡 Research Summary

The paper addresses a critical inefficiency in revocable parallel decoding for diffusion large language models (dLLMs). While parallel unmasking can dramatically speed up inference, existing verification mechanisms such as WINO and Saber rely on explicit remasking of previously generated tokens. This remasking often triggers “flip-flop” oscillations: a token is unmasked, later remasked, and then unmasked again to the exact same value. These oscillations waste both the conditioning context, because the content-bearing embedding is temporarily replaced by a
