Tempora: Characterising the Time-Contingent Utility of Online Test-Time Adaptation

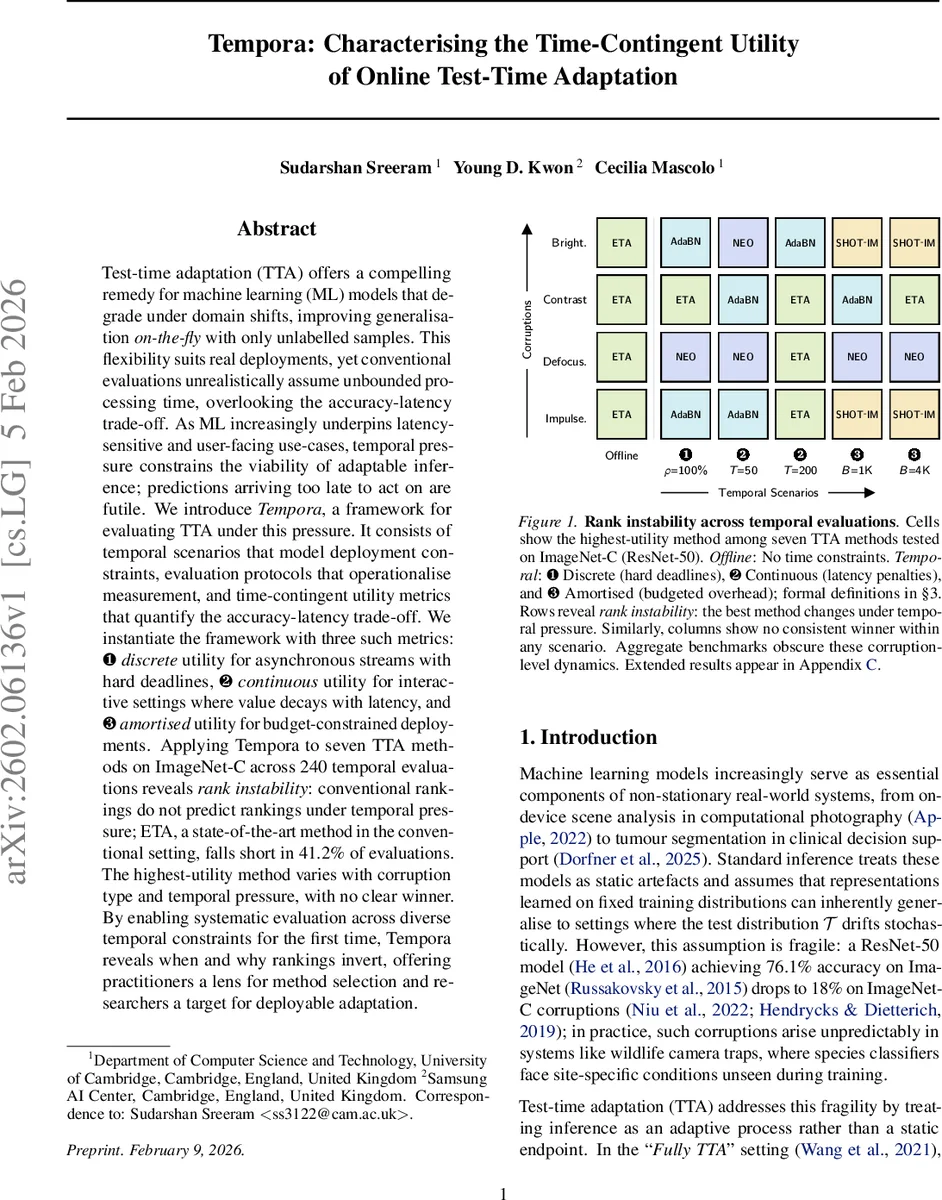

Test-time adaptation (TTA) offers a compelling remedy for machine learning (ML) models that degrade under domain shifts, improving generalisation on-the-fly with only unlabelled samples. This flexibility suits real deployments, yet conventional evaluations unrealistically assume unbounded processing time, overlooking the accuracy-latency trade-off. As ML increasingly underpins latency-sensitive and user-facing use-cases, temporal pressure constrains the viability of adaptable inference; predictions arriving too late to act on are futile. We introduce Tempora, a framework for evaluating TTA under this pressure. It consists of temporal scenarios that model deployment constraints, evaluation protocols that operationalise measurement, and time-contingent utility metrics that quantify the accuracy-latency trade-off. We instantiate the framework with three such metrics: (1) discrete utility for asynchronous streams with hard deadlines, (2) continuous utility for interactive settings where value decays with latency, and (3) amortised utility for budget-constrained deployments. Applying Tempora to seven TTA methods on ImageNet-C across 240 temporal evaluations reveals rank instability: conventional rankings do not predict rankings under temporal pressure; ETA, a state-of-the-art method in the conventional setting, falls short in 41.2% of evaluations. The highest-utility method varies with corruption type and temporal pressure, with no clear winner. By enabling systematic evaluation across diverse temporal constraints for the first time, Tempora reveals when and why rankings invert, offering practitioners a lens for method selection and researchers a target for deployable adaptation.

💡 Research Summary

**

The paper introduces Tempora, a systematic framework for evaluating test‑time adaptation (TTA) methods under realistic temporal constraints. Traditional TTA research evaluates methods solely on offline accuracy, implicitly assuming unlimited processing time. This overlooks the fact that many real‑world deployments—such as mobile photography, autonomous sensing, or interactive user interfaces—require predictions to be delivered within strict latency budgets; delayed predictions are either useless or degrade user experience.

Tempora addresses this gap by (1) formalising the data stream as a sequence of tuples ((x_i, t_i)) where (t_i) is the arrival time of batch (i), and (2) defining three archetypal temporal scenarios, each with its own evaluation protocol and utility metric that couples correctness with timeliness:

-

Discrete Utility (hard deadlines) – Batches arrive at fixed intervals (\gamma). If the inference pipeline is busy, intermediate batches are dropped. Utility is defined as the product of accuracy and availability (\alpha) (the fraction of batches actually processed). The protocol adds a buffer to avoid the “forced idling” problem of earlier work.

-

Continuous Utility (latency‑penalised) – Models user‑driven interactive settings where later answers are still valuable but less so. Utility is a hyperbolic function (\sum_i a_i \frac{\kappa}{\delta_i+\kappa}), where (a_i) is the binary correctness of prediction (i), (\delta_i) its latency, and (\kappa) controls the decay rate.

-

Amortised Utility (budget‑constrained) – The system has a total compute budget (B). TTA is applied until the budget is exhausted; thereafter the model falls back to a frozen baseline. Utility is a weighted average of the accuracy achieved during the adaptation phase and the baseline phase.

For each scenario, the authors provide a precise simulation of processing events ((s_j, f_j)) (start and finish times of each adaptation step), ensuring that batch starvation, idle time, and overlapping arrivals are measured accurately.

The experimental study evaluates seven representative Fully‑TTA methods (ETA, AdaBN, NEO, SHOT‑IM, etc.) on ImageNet‑C (15 corruption types) using a ResNet‑50 backbone. Sixteen temporal‑parameter configurations (different (\gamma), (\kappa), and (B) values) generate 240 distinct evaluations.

Key findings:

-

Rank Instability – The offline “state‑of‑the‑art” method ETA loses its top‑utility position in 41.2 % of the temporal evaluations (99 out of 240) and its average utility drops to 19.3 % of the offline winner’s utility. Thus, offline accuracy rankings are poor predictors of real‑time performance.

-

Corruption‑Specific Trade‑offs – In the continuous scenario with a 50 ms response window, the Spearman correlation between offline ranking and utility varies dramatically across corruptions (e.g., –0.74 for brightness, +0.21 for Gaussian noise). This reflects that different corruptions affect feature representations in distinct ways, while most TTA algorithms allocate a uniform amount of compute regardless of difficulty.

-

Pressure‑Specific Trade‑offs – Under tight budget constraints (B = 1 K), SHOT‑IM yields the highest amortised utility, but when the budget is relaxed (B = 4 K) ETA regains superiority. Correlation with offline ranking rises from 0.32 to 0.96 as the temporal pressure eases, indicating that the more relaxed the constraint, the more traditional rankings become relevant.

The authors also decompose utility loss into three components—missed batches, latency penalties, and wasted overhead—providing a diagnostic view of why each method fails under specific constraints.

Tempora is deliberately modular: the three scenarios and their metrics can be plugged into existing TTA pipelines, and the framework can be extended to other backbones, datasets, or even non‑covariate shifts.

In conclusion, Tempora offers the first comprehensive, time‑aware evaluation methodology for test‑time adaptation. By revealing systematic rank inversions and highlighting corruption‑ and pressure‑dependent trade‑offs, it equips practitioners with a practical tool for method selection and guides researchers toward designing adaptation algorithms that are not only accurate but also temporally efficient. The work underscores that future progress in TTA must jointly optimise for accuracy and latency to be truly deployable.

Comments & Academic Discussion

Loading comments...

Leave a Comment