Compressing LLMs with MoP: Mixture of Pruners

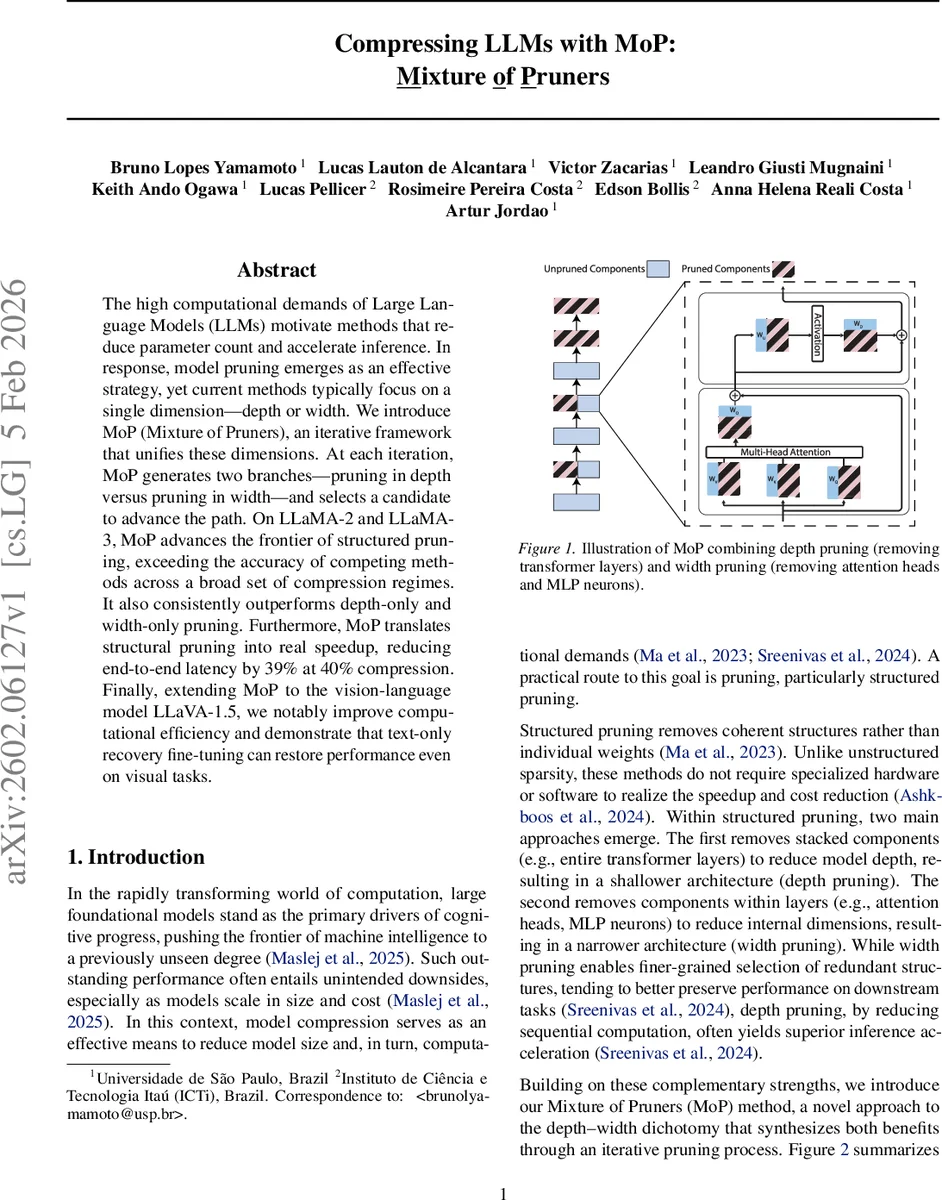

The high computational demands of Large Language Models (LLMs) motivate methods that reduce parameter count and accelerate inference. In response, model pruning emerges as an effective strategy, yet current methods typically focus on a single dimension-depth or width. We introduce MoP (Mixture of Pruners), an iterative framework that unifies these dimensions. At each iteration, MoP generates two branches-pruning in depth versus pruning in width-and selects a candidate to advance the path. On LLaMA-2 and LLaMA-3, MoP advances the frontier of structured pruning, exceeding the accuracy of competing methods across a broad set of compression regimes. It also consistently outperforms depth-only and width-only pruning. Furthermore, MoP translates structural pruning into real speedup, reducing end-to-end latency by 39% at 40% compression. Finally, extending MoP to the vision-language model LLaVA-1.5, we notably improve computational efficiency and demonstrate that text-only recovery fine-tuning can restore performance even on visual tasks.

💡 Research Summary

The paper introduces MoP (Mixture of Pruners), a novel iterative structured‑pruning framework that simultaneously explores depth (layer removal) and width (attention‑head and MLP‑neuron removal) for large language models. At each iteration MoP creates two candidate models: a depth‑pruned model that drops a single transformer layer, and a width‑pruned model that removes a set of internal units whose total parameter count matches that of the dropped layer. Both candidates undergo a short fine‑tuning step on a sampled subset of the training data, after which a path‑selection criterion P evaluates how closely each candidate’s outputs resemble those of the original unpruned model. Three concrete criteria are examined—cosine similarity of logits, KL‑divergence of output distributions, and perplexity on a calibration set—plus a random baseline. The candidate with the lower deviation score is kept for the next iteration, and the process repeats until a target compression ratio ρ is reached. Finally, the fully compressed model is fine‑tuned on the complete training set.

Key design choices include: (1) using the third‑to‑last layer as the default removal target, ensuring the final two layers remain intact; (2) adopting AMP as the width‑pruning scorer, which uniformly ranks attention heads and MLP neurons by activation magnitude, allowing fine‑grained control of the parameter budget; (3) matching the parameter reduction of the width candidate to that of the removed layer (cₜ), guaranteeing a fair comparison between depth and width actions. The modular nature of MoP permits swapping in newer depth or width criteria without altering the overall algorithm.

Empirical evaluation is performed on LLaMA‑2 7B, LLaMA‑3 8B, and the multimodal LLaVA‑1.5 model. Across compression levels from 20 % to 60 %, MoP consistently outperforms depth‑only baselines (e.g., LLM‑Streamline, LaCo) and width‑only baselines (e.g., AMP, PruneNet). At a 40 % compression ratio, MoP achieves a 39 % reduction in end‑to‑end latency while maintaining higher average accuracy on five commonsense benchmarks (WinoGrande, HellaSwag, ARC‑e, ARC‑c, PIQA) than any single‑dimension method. Table 1 shows that cosine similarity and perplexity as path criteria yield the best trade‑offs, though differences among the three metrics are modest.

When extending MoP to LLaVA‑1.5, the authors prune both the language and vision branches using the same depth‑width mixture. Remarkably, a text‑only recovery fine‑tuning stage restores most of the lost performance on visual tasks (VQA, image captioning), demonstrating that the pruned visual encoder retains sufficient representational capacity when the language side is re‑aligned.

The paper highlights several broader implications: (i) the depth‑width trade‑off can be dynamically navigated rather than statically chosen; (ii) fine‑grained width pruning can be calibrated to match the coarse granularity of layer removal, enabling fair head‑to‑head comparisons; (iii) the short‑fine‑tuning evaluation step provides a low‑cost proxy for full model quality, dramatically reducing the computational burden of searching pruning trajectories; (iv) MoP’s modularity opens the door for future integration of learned pruning policies, hardware‑aware cost models, or reinforcement‑learning‑based path selectors.

In summary, MoP advances the state of the art in structured pruning by unifying depth and width reductions within a single, iterative decision‑making loop. It delivers superior compression‑accuracy trade‑offs, measurable inference speedups, and demonstrates that even multimodal models can be efficiently compressed with text‑only recovery. The authors release code and pretrained checkpoints, facilitating reproducibility and further research on mixed‑dimension model compression.

Comments & Academic Discussion

Loading comments...

Leave a Comment