InterPrior: Scaling Generative Control for Physics-Based Human-Object Interactions

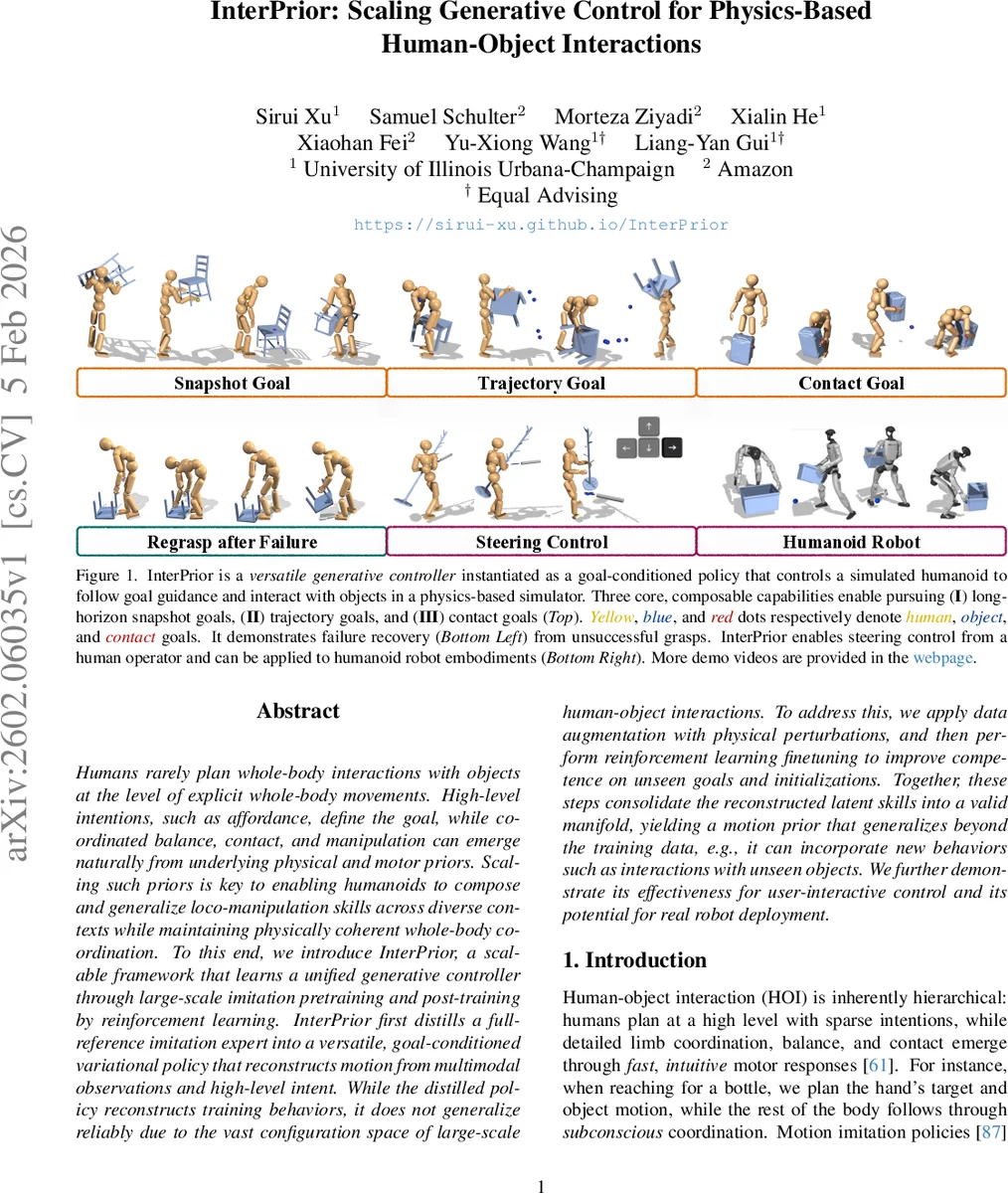

Humans rarely plan whole-body interactions with objects at the level of explicit whole-body movements. High-level intentions, such as affordance, define the goal, while coordinated balance, contact, and manipulation can emerge naturally from underlying physical and motor priors. Scaling such priors is key to enabling humanoids to compose and generalize loco-manipulation skills across diverse contexts while maintaining physically coherent whole-body coordination. To this end, we introduce InterPrior, a scalable framework that learns a unified generative controller through large-scale imitation pretraining and post-training by reinforcement learning. InterPrior first distills a full-reference imitation expert into a versatile, goal-conditioned variational policy that reconstructs motion from multimodal observations and high-level intent. While the distilled policy reconstructs training behaviors, it does not generalize reliably due to the vast configuration space of large-scale human-object interactions. To address this, we apply data augmentation with physical perturbations, and then perform reinforcement learning finetuning to improve competence on unseen goals and initializations. Together, these steps consolidate the reconstructed latent skills into a valid manifold, yielding a motion prior that generalizes beyond the training data, e.g., it can incorporate new behaviors such as interactions with unseen objects. We further demonstrate its effectiveness for user-interactive control and its potential for real robot deployment.

💡 Research Summary

**

InterPrior introduces a scalable, physics‑based generative controller for human‑object interaction (HOI) that can handle a wide variety of high‑level intents while producing physically plausible whole‑body motions. The framework consists of three stages. First, a full‑reference imitation expert (π_E) is trained on large‑scale HOI datasets using Proximal Policy Optimization (PPO). To reduce over‑reliance on exact reference trajectories, the authors augment the data with random physical perturbations (impulse disturbances to the pelvis and objects), randomize object properties (mass, inertia, friction), and add a termination penalty that discourages falls or large deviations. This “InterMimic+” expert learns to track references accurately while beginning to develop robustness to unseen dynamics.

Second, the expert is distilled into a masked conditional variational policy (π). The observation vector x_t contains normalized human kinematics (joint positions, velocities, signed distances to objects, binary contacts) and object state, amounting to roughly 90 dimensions for the SMPL avatar and 70 for the Unitree G1 robot. Goal conditioning is performed through a set of future targets G_t that combine short‑horizon preview windows (e.g., the next 0.5 s) with a long‑horizon snapshot (e.g., a desired pose 2 s ahead). Each goal component is optionally masked, allowing the policy to receive only sparse information such as “hand should be at this location” or “object should be upright”. The encoder maps the masked residual (Δy – x) into a latent skill embedding z; the decoder then generates a multimodal action distribution π(a_t|x_t, G_t, z). KL‑regularization keeps the latent space close to a unit Gaussian, ensuring smooth interpolation between demonstrated behaviors.

Third, the variational policy is fine‑tuned with reinforcement learning. The RL stage introduces failure‑state resets (e.g., after a dropped object) and rewards that explicitly encourage recovery actions such as re‑approach and re‑grasp. The total reward combines goal achievement, energy efficiency, and a recovery bonus, while a KL‑penalty preserves the knowledge acquired during distillation. This combination allows the policy to expand beyond the distribution of the original demonstrations without drifting into reward‑hacking behaviors.

Experiments are conducted on two embodiments: a simulated SMPL humanoid and the Unitree G1 robot. The authors evaluate three goal types—snapshot, trajectory, and contact goals—under a battery of conditions: mid‑trajectory goal switching, random physical property changes, unseen object geometries, and intentional perturbations. Compared to baseline methods (pure imitation trackers, adversarial imitation, and non‑distilled RL policies), InterPrior achieves higher success rates (15–30 % improvement) and more natural motion trajectories, especially when dealing with thin or irregular objects where pure imitation tends to fail. The system also supports real‑time user steering via a keyboard interface, demonstrating that high‑level commands can be injected on‑the‑fly without destabilizing the controller. A sim‑to‑sim transfer to a real‑world G1 robot shows that the learned policy remains stable under real dynamics, suggesting promising sim‑to‑real potential.

Key contributions are: (1) a unified generative controller that can handle multiple goal formulations with a single policy; (2) a novel training pipeline that couples large‑scale imitation, extensive physical data augmentation, and RL fine‑tuning to achieve both breadth (many skills) and depth (robustness to out‑of‑distribution situations); (3) demonstration of failure recovery and mid‑trajectory goal re‑specification, which are essential for interactive applications; and (4) evidence of embodiment flexibility, as the same framework works for both a virtual human model and a physical humanoid robot.

Limitations include the focus on rigid objects (no deformable or articulated items), the absence of sensor noise or latency modeling in the current experiments, and the black‑box nature of the latent skill embedding, which limits interpretability. Future work could extend the approach to soft‑object manipulation, incorporate realistic perception pipelines, and explore language‑based intent interfaces that map natural language commands to the masked goal representation.

In summary, InterPrior demonstrates that a carefully staged combination of imitation distillation and reinforcement learning can produce a scalable, generalizable motion prior for physics‑based human‑object interaction, paving the way for more agile, adaptable, and user‑controllable humanoid robots in complex real‑world environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment