GUARDIAN: Safety Filtering for Systems with Perception Models Subject to Adversarial Attacks

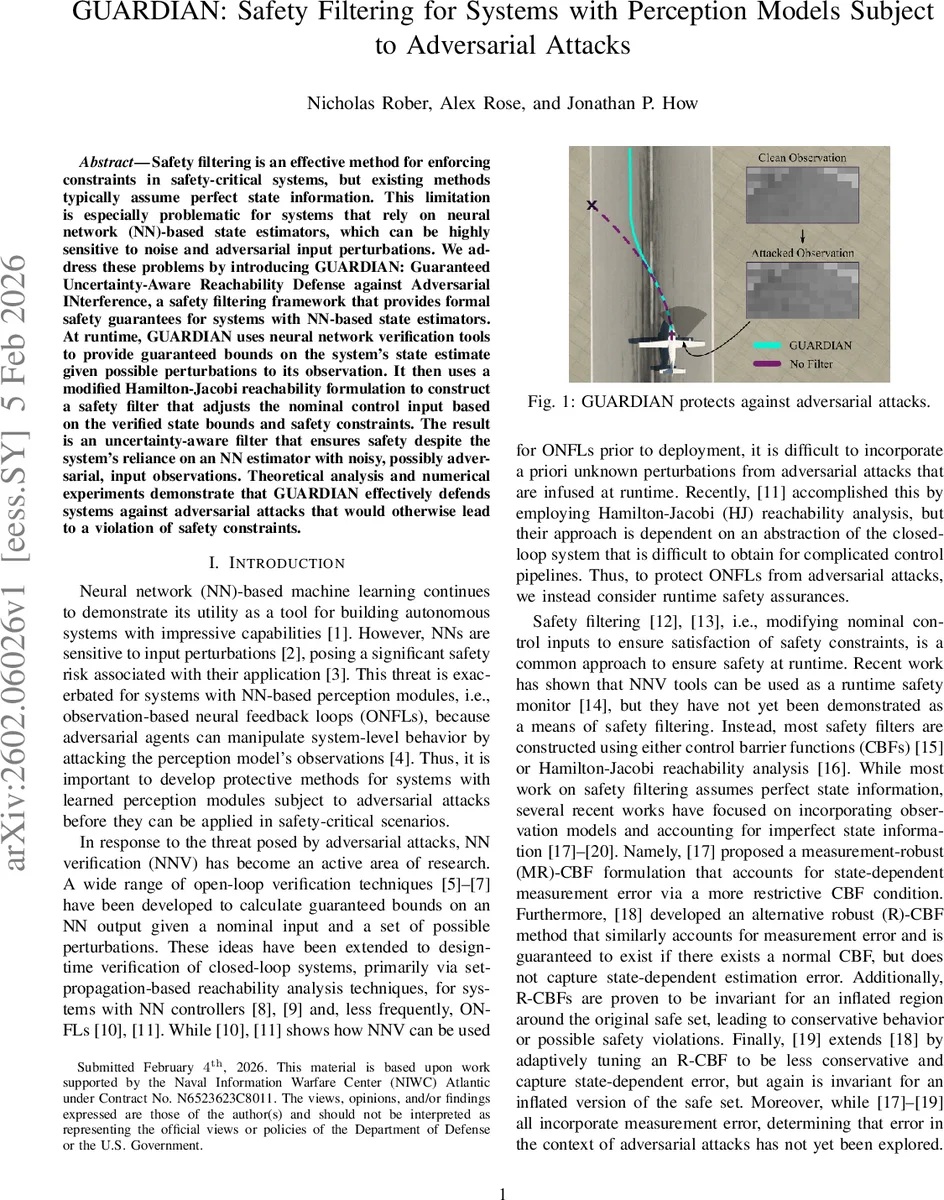

Safety filtering is an effective method for enforcing constraints in safety-critical systems, but existing methods typically assume perfect state information. This limitation is especially problematic for systems that rely on neural network (NN)-based state estimators, which can be highly sensitive to noise and adversarial input perturbations. We address these problems by introducing GUARDIAN: Guaranteed Uncertainty-Aware Reachability Defense against Adversarial INterference, a safety filtering framework that provides formal safety guarantees for systems with NN-based state estimators. At runtime, GUARDIAN uses neural network verification tools to provide guaranteed bounds on the system’s state estimate given possible perturbations to its observation. It then uses a modified Hamilton-Jacobi reachability formulation to construct a safety filter that adjusts the nominal control input based on the verified state bounds and safety constraints. The result is an uncertainty-aware filter that ensures safety despite the system’s reliance on an NN estimator with noisy, possibly adversarial, input observations. Theoretical analysis and numerical experiments demonstrate that GUARDIAN effectively defends systems against adversarial attacks that would otherwise lead to a violation of safety constraints.

💡 Research Summary

The paper tackles a critical gap in safety‑critical control: existing safety‑filtering methods assume perfect state information, yet many modern systems rely on neural‑network (NN) based state estimators that are highly vulnerable to sensor noise and adversarial perturbations. To bridge this gap, the authors introduce GUARDIAN (Guaranteed Uncertainty‑Aware Reachability Defense against Adversarial INterference), a runtime safety‑filtering framework that provides formal safety guarantees even when the perception module is under attack.

GUARDIAN operates in two stages. First, it employs state‑of‑the‑art neural network verification (NNV) tools (such as CROWN, auto_LiRPA, or jax_verify) to compute guaranteed output bounds for the NN estimator $L_{\theta}$ given a perturbed observation $\tilde y_t$. Assuming an $\ell_{\infty}$ attack budget $\epsilon$, the set of possible true observations is $\bar Y_t = \{y \mid \|y-\tilde y_t\|_{\infty}\le\epsilon\}$. NNV yields interval bounds (
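The bounding step in the first stage can be illustrated with plain interval bound propagation, a simpler and looser technique than the CROWN-style verifiers named in the summary. What follows is a minimal sketch under stated assumptions, not the authors' implementation: the estimator is assumed to be a small fully connected ReLU network, and the names `affine_bounds`, `estimator_bounds`, `estimator`, and the toy weights in `layers` are hypothetical.

```python
def affine_bounds(W, b, lo, hi):
    """Sound elementwise bounds on W @ x + b for any x with lo <= x <= hi."""
    out_lo, out_hi = [], []
    for row, bias in zip(W, b):
        l = h = bias
        for w, x_lo, x_hi in zip(row, lo, hi):
            # A non-negative weight keeps the bound order; a negative one swaps it.
            l += w * (x_lo if w >= 0 else x_hi)
            h += w * (x_hi if w >= 0 else x_lo)
        out_lo.append(l)
        out_hi.append(h)
    return out_lo, out_hi


def estimator_bounds(layers, y_tilde, eps):
    """Bound the network output over the l_inf ball of radius eps around y_tilde."""
    lo = [y - eps for y in y_tilde]
    hi = [y + eps for y in y_tilde]
    for i, (W, b) in enumerate(layers):
        lo, hi = affine_bounds(W, b, lo, hi)
        if i < len(layers) - 1:
            # ReLU is monotone, so it can be applied to each bound directly.
            lo = [max(v, 0.0) for v in lo]
            hi = [max(v, 0.0) for v in hi]
    return lo, hi


def estimator(layers, y):
    """Plain forward pass of the same ReLU network (no ReLU on the last layer)."""
    x = list(y)
    for i, (W, b) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + bias for row, bias in zip(W, b)]
        if i < len(layers) - 1:
            x = [max(v, 0.0) for v in x]
    return x


# Hypothetical 2-2-1 network, chosen only for illustration.
layers = [
    ([[1.0, -2.0], [0.5, 1.0]], [0.0, -1.0]),
    ([[1.0, 1.0]], [0.5]),
]
lo, hi = estimator_bounds(layers, y_tilde=[1.0, 0.5], eps=0.1)
print(lo, hi)  # every observation in the ball maps to an estimate inside [lo, hi]
```

Interval propagation is sound but conservative: the returned box always contains the true set of estimates and is usually larger than it. Verifiers based on linear relaxations typically tighten such boxes at extra computational cost.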
