Multi-Token Prediction via Self-Distillation

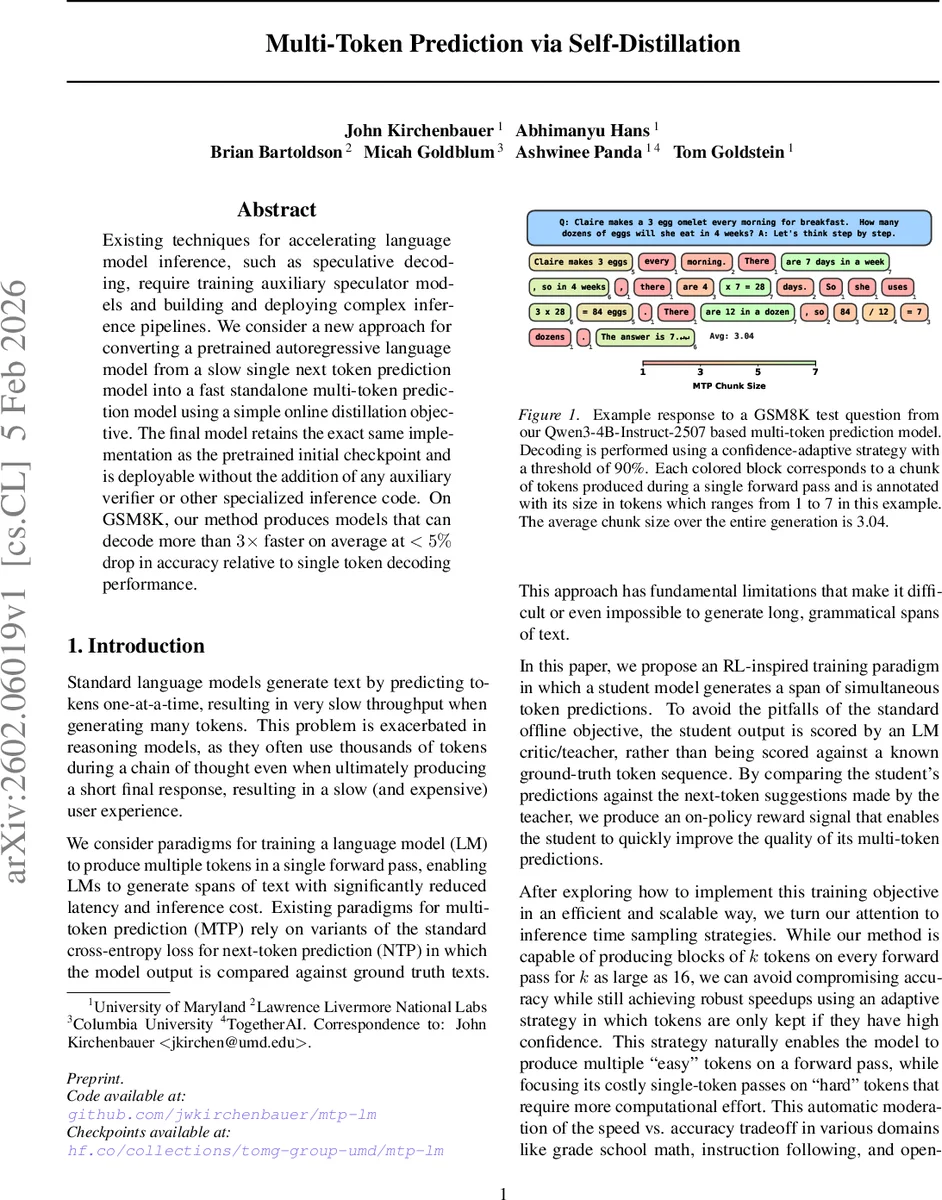

Existing techniques for accelerating language model inference, such as speculative decoding, require training auxiliary speculator models and building and deploying complex inference pipelines. We consider a new approach for converting a pretrained autoregressive language model from a slow single next token prediction model into a fast standalone multi-token prediction model using a simple online distillation objective. The final model retains the exact same implementation as the pretrained initial checkpoint and is deployable without the addition of any auxiliary verifier or other specialized inference code. On GSM8K, our method produces models that can decode more than $3\times$ faster on average at $<5%$ drop in accuracy relative to single token decoding performance.

💡 Research Summary

The paper introduces a simple yet effective method for converting a pretrained autoregressive language model into a fast multi‑token prediction (MTP) model without altering its architecture or adding auxiliary verifier components. The authors observe that conventional next‑token prediction (NTP) models are trained to minimize cross‑entropy on marginal token distributions, which does not guarantee coherent joint distributions when multiple tokens are sampled simultaneously. To address this, they propose an online self‑distillation objective that treats a strong NTP model as a teacher (critic) and the MTP model as a student. During training, the student generates a block of k tokens in a single forward pass using deterministic argmax decoding. This candidate sequence is then evaluated by the teacher, which computes the joint likelihood of the block via the chain rule. The loss is the KL‑divergence between the teacher‑assigned probability of the student’s block and the student’s own distribution over that block, effectively providing an on‑policy reward that encourages coherent multi‑token outputs.

A “hard teacher” variant further forces the teacher’s distribution to a delta function (argmax only), driving the student’s entropy down and sharpening its predictions. Both student and teacher are initialized from the same pretrained checkpoint, ensuring that early‑stage loss is near zero and training remains stable.

At inference time, the model can either greedily output k tokens (argmax) or sample from the softmax distribution. To balance speed and quality, the authors introduce an adaptive confidence‑threshold strategy: tokens whose confidence exceeds a preset threshold (e.g., 90 %) are accepted as a block, while lower‑confidence tokens trigger a fallback to standard single‑token decoding. This mechanism automatically allocates computational effort to “hard” tokens and batches “easy” tokens, achieving substantial latency reductions without a large drop in accuracy.

Experiments on the GSM8K math‑reasoning benchmark demonstrate that the method yields an average chunk size of 3.04 tokens, translating to 2×–5× faster decoding. Accuracy loss relative to single‑token decoding is under 5 %, comparable to or better than speculative decoding approaches that require separate draft models and verification pipelines. The paper also discusses related work on multi‑token architectures, speculative decoding, and knowledge distillation, positioning the proposed self‑distillation framework as a lightweight alternative that can be applied to any pretrained autoregressive model.

In summary, the authors provide a practical recipe for accelerating language‑model inference: use the same pretrained checkpoint as both teacher and student, train with the KL‑based online self‑distillation loss, and employ confidence‑aware adaptive decoding. This approach delivers multi‑token generation with minimal engineering overhead, making it attractive for real‑world deployments where inference speed and simplicity are paramount.

Comments & Academic Discussion

Loading comments...

Leave a Comment