Visuo-Tactile World Models

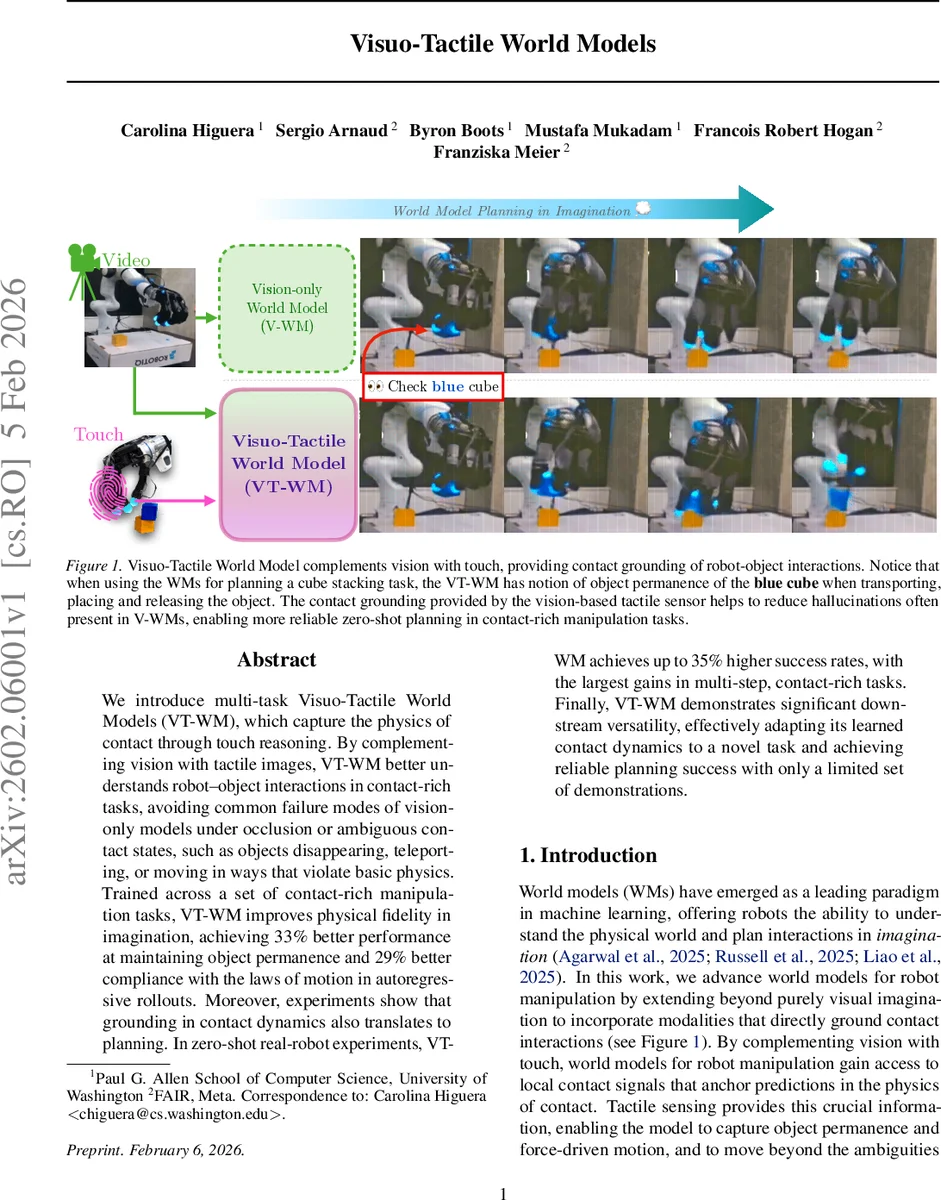

We introduce multi-task Visuo-Tactile World Models (VT-WM), which capture the physics of contact through touch reasoning. By complementing vision with tactile sensing, VT-WM better understands robot-object interactions in contact-rich tasks, avoiding common failure modes of vision-only models under occlusion or ambiguous contact states, such as objects disappearing, teleporting, or moving in ways that violate basic physics. Trained across a set of contact-rich manipulation tasks, VT-WM improves physical fidelity in imagination, achieving 33% better performance at maintaining object permanence and 29% better compliance with the laws of motion in autoregressive rollouts. Moreover, experiments show that grounding in contact dynamics also translates to planning. In zero-shot real-robot experiments, VT-WM achieves up to 35% higher success rates, with the largest gains in multi-step, contact-rich tasks. Finally, VT-WM demonstrates significant downstream versatility, effectively adapting its learned contact dynamics to a novel task and achieving reliable planning success with only a limited set of demonstrations.

💡 Research Summary

The paper introduces Visuo‑Tactile World Models (VT‑WM), a multi‑task, action‑conditioned world model that fuses exocentric visual observations with high‑frequency tactile feedback to capture contact dynamics in robot manipulation. The authors argue that vision‑only world models (V‑WM) suffer from hallucinations such as object disappearance, teleportation, or motion that violates Newtonian physics, especially under occlusions or visually ambiguous states. By integrating tactile sensing—specifically Digit 360 vision‑based tactile sensors processed through the Sparsh‑X encoder—the model gains a local, physics‑grounded signal that disambiguates visually identical frames based on whether contact is present, how strong it is, and whether slip occurs.

The architecture consists of three components: a vision encoder (Cosmos tokenizer) that produces latent visual tokens sₖ, a tactile encoder that yields latent tactile tokens tₖ, and a 12‑layer transformer predictor. The transformer employs factorized spatial‑temporal self‑attention with Rotary Position Embeddings, followed by cross‑attention to action tokens aₖ, enabling the model to predict the next‑step latent pair (sₖ₊₁, tₖ₊₁). Training combines a teacher‑forcing loss (dense next‑step supervision) with a sampling loss (autoregressive rollout supervision) to balance short‑term accuracy and long‑term coherence. Input sequences span 1.5 seconds of video (9 frames at 6 fps) and 0.16 seconds of tactile data (2 frames per sensor), reflecting the slower global context of vision and the faster, local nature of touch.

Evaluation is performed on seven contact‑rich manipulation tasks (fruit placement, pushing, cloth wiping, cube stacking, marker scribbling, etc.). In imagination tests, VT‑WM improves object permanence by 33 % and compliance with physical laws by 29 % relative to V‑WM, as measured by the frequency of hallucinated object loss or unforced motion. For planning, the model is used as a simulator inside a Cross‑Entropy Method (CEM) optimizer. The planner samples action sequences, rolls out latent futures with the transformer, and scores each trajectory by the ℓ₂ distance between the predicted final visual latent and a goal image latent. Although tactile information is not part of the cost function, its presence in the initial state leads to more accurate rollouts, better cost estimation, and consequently higher‑quality plans.

Zero‑shot real‑robot experiments show that VT‑WM achieves up to a 35 % increase in success rates on contact‑heavy tasks compared with V‑WM, with the largest gains observed in multi‑step tasks such as cube stacking. Moreover, the model demonstrates downstream versatility: when fine‑tuned with only a few demonstrations on a novel task, it still produces reliable plans, outperforming vision‑only baselines.

Key contributions are: (1) the first multi‑task visuo‑tactile world model that jointly learns global visual context and local contact dynamics; (2) a training regime that blends teacher‑forcing and autoregressive sampling to improve both short‑term prediction fidelity and long‑horizon consistency; (3) empirical evidence that tactile grounding substantially boosts both imagination fidelity and zero‑shot planning performance on real robots.

The authors suggest future work on incorporating tactile goals directly into the planning objective, scaling to higher‑resolution tactile sensors, and enabling online re‑planning with real‑time touch feedback. Overall, the paper convincingly demonstrates that grounding world models in contact physics via tactile sensing can overcome fundamental limitations of vision‑only approaches and open new avenues for robust, generalizable robot manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment