Learning to Share: Selective Memory for Efficient Parallel Agentic Systems

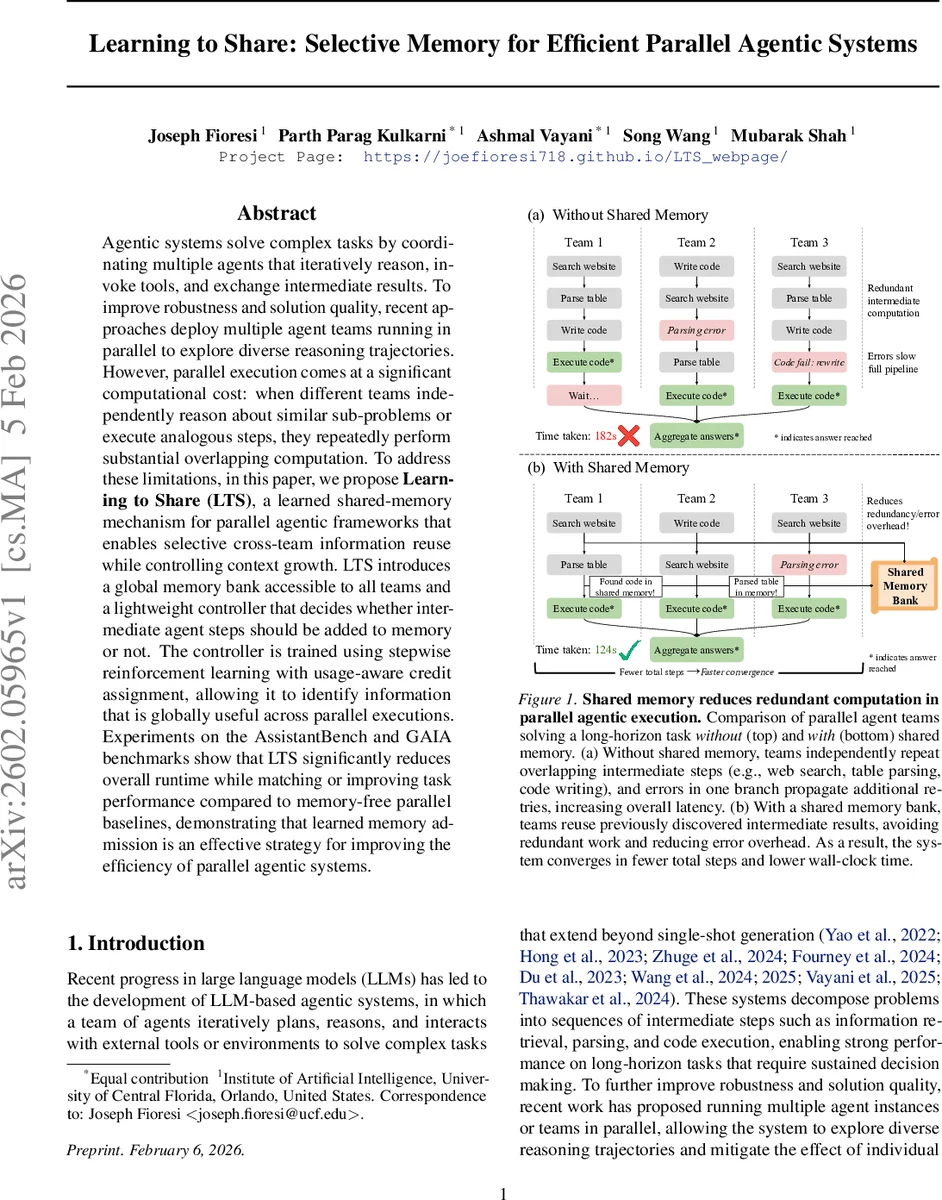

Agentic systems solve complex tasks by coordinating multiple agents that iteratively reason, invoke tools, and exchange intermediate results. To improve robustness and solution quality, recent approaches deploy multiple agent teams running in parallel to explore diverse reasoning trajectories. However, parallel execution comes at a significant computational cost: when different teams independently reason about similar sub-problems or execute analogous steps, they repeatedly perform substantial overlapping computation. To address these limitations, in this paper, we propose Learning to Share (LTS), a learned shared-memory mechanism for parallel agentic frameworks that enables selective cross-team information reuse while controlling context growth. LTS introduces a global memory bank accessible to all teams and a lightweight controller that decides whether intermediate agent steps should be added to memory or not. The controller is trained using stepwise reinforcement learning with usage-aware credit assignment, allowing it to identify information that is globally useful across parallel executions. Experiments on the AssistantBench and GAIA benchmarks show that LTS significantly reduces overall runtime while matching or improving task performance compared to memory-free parallel baselines, demonstrating that learned memory admission is an effective strategy for improving the efficiency of parallel agentic systems. Project page: https://joefioresi718.github.io/LTS_webpage/

💡 Research Summary

The paper addresses a critical inefficiency in modern large‑language‑model (LLM) based agentic systems that run multiple agent teams in parallel. While parallel execution improves robustness and final‑answer quality, it also leads to substantial duplicated work: different teams repeatedly perform the same sub‑tasks such as web searches, table parsing, or code generation, and errors in one branch cause extra retries, inflating wall‑clock time. To mitigate this, the authors propose Learning to Share (LTS), a framework that equips parallel agentic architectures with a global shared‑memory bank and a lightweight controller that decides, on a step‑by‑step basis, whether an intermediate agent output should be admitted to the memory.

The memory bank stores entries as (key, value) pairs, where the key is a concise natural‑language summary of the step and the value is the raw agent output. Teams are only exposed to the set of keys; they may retrieve the full value of a selected key when it is useful. This design enables cross‑team reuse without forcing full trajectory merging or unbounded context growth. However, indiscriminately storing every step would clutter the memory with failed tool calls, partial code attempts, or otherwise irrelevant information, degrading retrieval efficiency.

To learn a selective admission policy, the controller is modeled as a small language model that emits a single binary token (“YES” to store, “NO” to skip) after each step. Because there is no ground‑truth label for each decision, the authors formulate a stepwise reinforcement‑learning objective with usage‑aware reward shaping. Positive reward is given when a stored entry is later retrieved by another team and contributes to a successful final answer; negative reward is assigned when an entry is never used or when storing it leads to context bloat or error propagation. This credit‑assignment scheme allows the controller to discover globally useful intermediate results while explicitly controlling memory size.

Experiments are conducted on two demanding benchmarks: GAIA, which features long‑horizon, tool‑intensive tasks, and AssistantBench, a web‑interaction benchmark. The baseline is M1‑Parallel, a state‑of‑the‑art system that runs multiple independent MagneticOne‑style agent teams in parallel and aggregates their final outputs. LTS is integrated on top of the same underlying agents and aggregation mechanism. Results show that LTS reduces overall runtime by roughly 30 % on average, while matching or modestly improving task success rates (typically +1–2 percentage points). Detailed analysis reveals that redundant steps are cut by 20–25 %: teams reuse previously discovered web results, parsed tables, and correct code snippets instead of recomputing them.

Ablation studies compare LTS against a naïve “store everything” memory policy. The naïve approach suffers from increased context length, slower retrieval, and even performance degradation, confirming that selective sharing is essential. Sensitivity experiments on memory‑size caps and reward‑shaping coefficients demonstrate that reasonable hyper‑parameter choices yield stable gains.

In summary, Learning to Share introduces a principled, learned shared‑memory mechanism that preserves the exploratory benefits of parallel reasoning while dramatically cutting duplicated computation. The work opens avenues for scaling multi‑agent collaboration, real‑time tool use, and memory‑augmented meta‑learning, suggesting that learned, selective memory admission is a key component for future efficient agentic AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment