Discrete diffusion samplers and bridges: Off-policy algorithms and applications in latent spaces

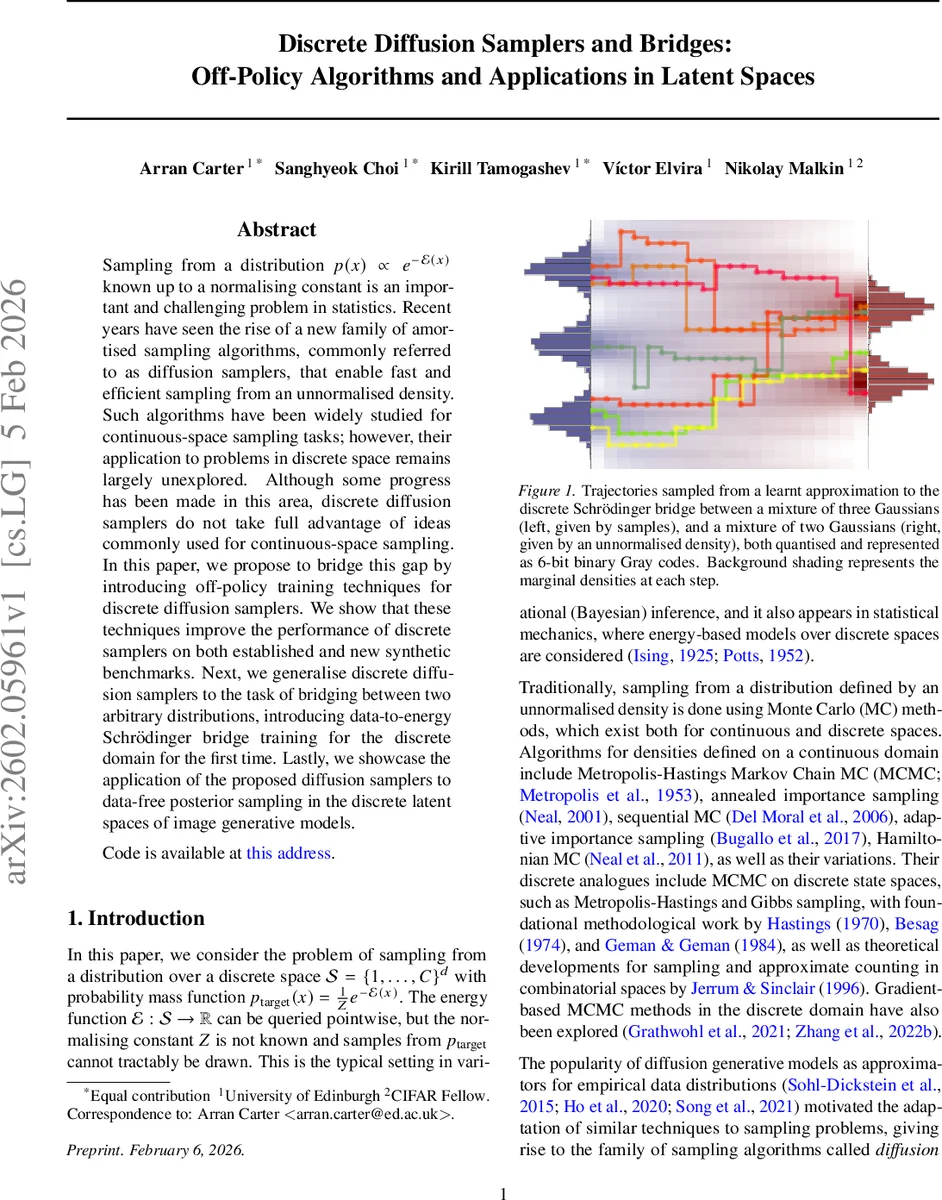

Sampling from a distribution $p(x) \propto e^{-\mathcal{E}(x)}$ known up to a normalising constant is an important and challenging problem in statistics. Recent years have seen the rise of a new family of amortised sampling algorithms, commonly referred to as diffusion samplers, that enable fast and efficient sampling from an unnormalised density. Such algorithms have been widely studied for continuous-space sampling tasks; however, their application to problems in discrete space remains largely unexplored. Although some progress has been made in this area, discrete diffusion samplers do not take full advantage of ideas commonly used for continuous-space sampling. In this paper, we propose to bridge this gap by introducing off-policy training techniques for discrete diffusion samplers. We show that these techniques improve the performance of discrete samplers on both established and new synthetic benchmarks. Next, we generalise discrete diffusion samplers to the task of bridging between two arbitrary distributions, introducing data-to-energy Schrödinger bridge training for the discrete domain for the first time. Lastly, we showcase the application of the proposed diffusion samplers to data-free posterior sampling in the discrete latent spaces of image generative models.

💡 Research Summary

This paper addresses three major gaps in the current landscape of discrete diffusion sampling. First, while continuous‑time diffusion samplers have successfully incorporated off‑policy reinforcement‑learning (RL) techniques to improve exploration and mode coverage, discrete diffusion samplers have largely remained on‑policy. The authors formalize discrete diffusion as a Markov chain (X_0 \rightarrow X_1 \rightarrow \dots \rightarrow X_N) with forward kernels (p_{\theta}(X_{n+1}\mid X_n)) and backward (noising) kernels (q(X_n\mid X_{n+1})). They introduce a trajectory‑level loss (L_P) that measures the squared log‑ratio between the forward‑induced trajectory distribution and the backward‑induced one, where (P) denotes the distribution over trajectories used for training. By allowing (P) to differ from the on‑policy distribution, they unlock off‑policy training strategies that dramatically improve sample quality.

Three off‑policy mechanisms are proposed. (1) A replay buffer stores final states (X_N) from previous policy rollouts; during training, trajectories are regenerated from these states using the backward kernel, mixing old and new experience. (2) Importance‑weighted prioritisation assigns each buffer entry a weight (w = \frac{e^{-E(X_N)}\prod q}{p_0\prod p_{\theta}}), focusing learning on trajectories that have high target probability but low model probability. (3) MCMC exploration refines buffer samples with a few steps of a Metropolis‑Hastings or Gibbs kernel whose stationary distribution is the target energy (p_{\text{target}}(x) \propto e^{-E(x)}). Since MCMC only requires energy evaluations, it is computationally cheap compared with full model rollouts. The combination of these three components—dubbed Hybrid‑Off‑Policy—is detailed in Algorithm 1 and shown to accelerate convergence and increase mode coverage on synthetic benchmarks.

The second contribution extends the diffusion framework to the Schrödinger bridge (SB) problem when one of the endpoint distributions is given only as an unnormalised energy function (data‑to‑energy setting). Classical SB methods assume samples from both endpoints; here the authors adapt the iterative proportional fitting (IPF) procedure to discrete spaces and employ the same off‑policy trajectory loss to alternately update forward and backward kernels. The bridge seeks a pair ((p_{\theta}, q_{\phi})) that transports an arbitrary prior (p_0) to the target energy‑defined distribution while minimizing KL divergence to a reference process (Q) (typically a simple diffusion). This yields the discrete analogue of the continuous‑time SB and provides a principled way to learn both forward and reverse dynamics when only an energy oracle is available.

The third contribution demonstrates a practical application: posterior sampling in the discrete latent spaces of pretrained image generative models (e.g., VQ‑VAE, discrete auto‑encoders). The posterior (p(z\mid y) \propto p_{\text{prior}}(z) p_{\text{likelihood}}(y\mid z)) is expressed as an energy (E(z) = -\log p_{\text{prior}}(z) - \log p_{\text{likelihood}}(y\mid z)). Using a uniform token distribution as (p_0) and training a data‑to‑energy SB, the authors obtain high‑quality latent samples without any observed data from the posterior. Compared with standard MCMC or Gibbs sampling in the latent space, the proposed bridge yields 2–3× higher effective sample size and produces reconstructions with better Fréchet Inception Distance (FID) and Inception Score (IS). Qualitative visualizations confirm that the samples capture diverse modes of the posterior, which is often a failure point for vanilla Gibbs chains.

Extensive experiments validate each claim. On synthetic multimodal discrete energies, Hybrid‑Off‑Policy outperforms on‑policy baselines and recent discrete diffusion samplers (masked diffusion, energy‑based diffusion) in terms of convergence speed, KL to the target, and mode coverage. Ablation studies isolate the contribution of each off‑policy component, showing that importance‑weighted replay and MCMC refinement are both essential for the observed gains. In the latent‑space posterior task, the authors report quantitative improvements (e.g., FID reduction from 45 to 28) and demonstrate that the learned bridge can be sampled with a variable number of diffusion steps, preserving sample quality even when the number of steps exceeds the training horizon.

In summary, the paper makes three novel contributions: (1) systematic integration of off‑policy RL techniques into discrete diffusion samplers, (2) the first formulation and algorithm for data‑to‑energy Schrödinger bridges in discrete spaces, and (3) a compelling real‑world use case—data‑free posterior inference in discrete latent spaces of image generators. The work bridges a methodological gap between continuous and discrete diffusion models, offers a theoretically grounded bridge framework for energy‑only targets, and provides empirical evidence of practical utility. All code and experimental details are released at the provided URL, facilitating reproducibility and future research.

Comments & Academic Discussion

Loading comments...

Leave a Comment