Better Source, Better Flow: Learning Condition-Dependent Source Distribution for Flow Matching

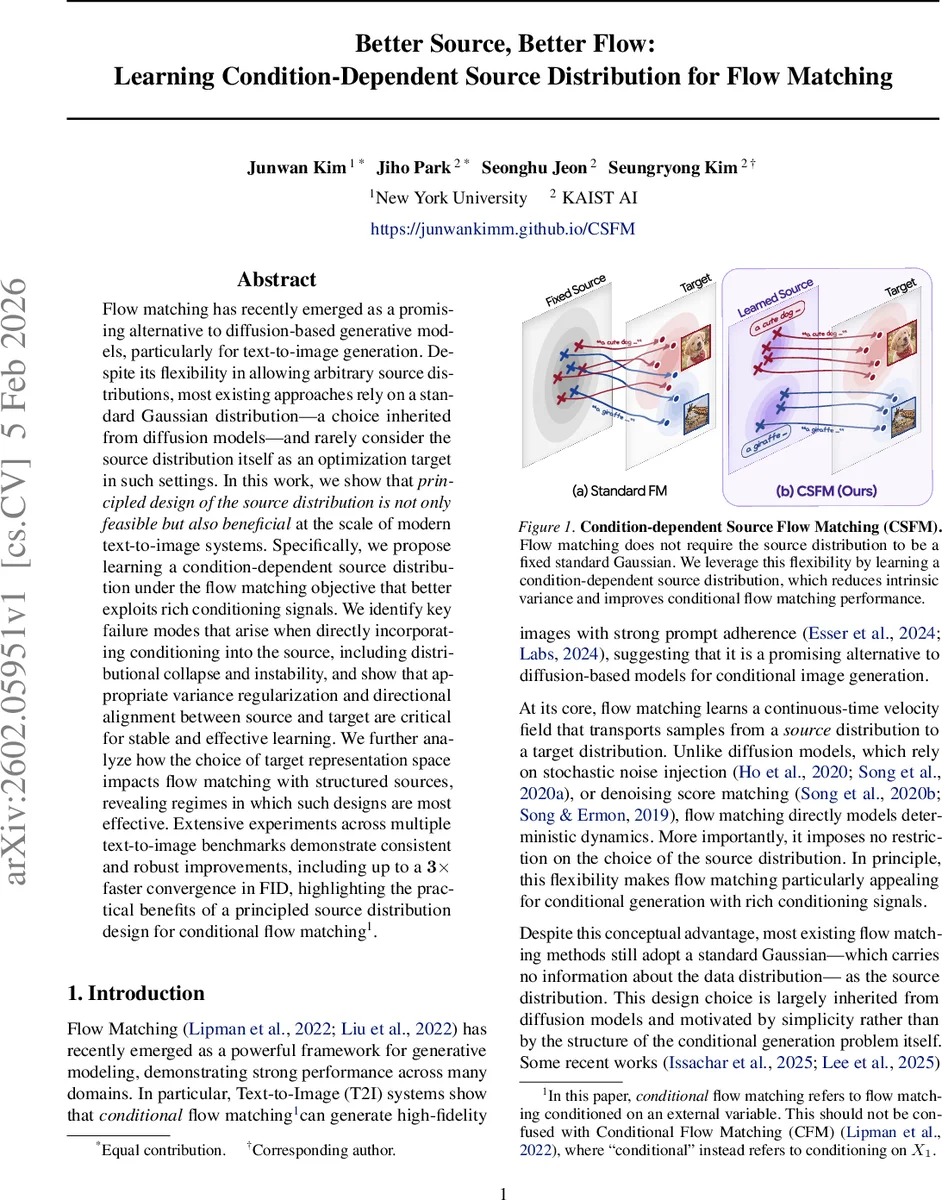

Flow matching has recently emerged as a promising alternative to diffusion-based generative models, particularly for text-to-image generation. Despite its flexibility in allowing arbitrary source distributions, most existing approaches rely on a standard Gaussian distribution, a choice inherited from diffusion models, and rarely treat the source distribution itself as an optimization target in such settings. In this work, we show that principled design of the source distribution is not only feasible but also beneficial at the scale of modern text-to-image systems. Specifically, we propose learning a condition-dependent source distribution under the flow matching objective that better exploits rich conditioning signals. We identify key failure modes that arise when directly incorporating conditioning into the source, including distributional collapse and instability, and show that appropriate variance regularization and directional alignment between source and target are critical for stable and effective learning. We further analyze how the choice of target representation space impacts flow matching with structured sources, revealing regimes in which such designs are most effective. Extensive experiments across multiple text-to-image benchmarks demonstrate consistent and robust improvements, including up to 3x faster convergence in FID, highlighting the practical benefits of a principled source distribution design for conditional flow matching.

💡 Research Summary

Flow matching has emerged as a powerful alternative to diffusion‑based generative modeling, offering a deterministic ODE formulation that transports samples from a source distribution p₀ to a target distribution p₁(C) conditioned on an external variable C (e.g., a text prompt). While the theory permits any source, most recent works inherit the standard Gaussian N(0, I) from diffusion models, thereby discarding valuable conditioning information at the very beginning of the generation process.
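The ODE transport described above can be illustrated with a minimal sketch of fixed-step Euler integration from t = 0 to t = 1. Everything named here is an assumption made for illustration: `euler_sample`, the toy `constant_velocity` field, and the step count are hypothetical stand-ins, and a trained model vθ would take the place of the toy field. The solver actually used in the paper is not stated in this summary.

```python
import random


def euler_sample(velocity, x0, cond, num_steps=50):
    """Integrate dx/dt = velocity(x, t, cond) from t=0 to t=1 with Euler steps.

    `velocity` is any callable returning a list the same length as `x`.
    """
    x = list(x0)
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        v = velocity(x, t, cond)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


def constant_velocity(x, t, cond):
    """Toy field (assumption): ignores x, t and cond and always moves by (1, -2)."""
    return [1.0, -2.0]


# Draw a source sample from the standard Gaussian N(0, I) and transport it.
rng = random.Random(0)
x0 = [rng.gauss(0.0, 1.0) for _ in range(2)]
x1 = euler_sample(constant_velocity, x0, cond="a photo of a cat")
```

With the standard Gaussian source, `x0` carries no information about `cond`; the conditioning only enters through the velocity field, which is the limitation the summary points out.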

The authors propose Condition‑dependent Source Flow Matching (CSFM), which learns a conditional Gaussian source pϕ(X₀|C) = 𝒩(μϕ(C), σϕ²(C)I). The source generator gϕ(C) produces samples X₀ that serve as the starting points of the ODE, and both the flow field vθ(·) and the source parameters ϕ are jointly optimized under the standard flow‑matching loss:

L_FM(θ, ϕ)=E_{t,πϕ}
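The loss is cut off in this summary, so its exact form cannot be recovered from the text here. As a rough sketch only, and assuming the common linear-interpolation convention X_t = (1 − t)X₀ + tX₁ with regression target X₁ − X₀ (an assumption, not confirmed by the source), a per-sample flow-matching loss with a reparameterized conditional Gaussian source might look like the following. The names `sample_source` and `fm_loss` are hypothetical, and the scalar `sigma` mirrors the isotropic covariance σϕ²(C)I.

```python
import random


def sample_source(mu, sigma, rng):
    """Reparameterized draw X0 = mu + sigma * eps with eps ~ N(0, I).

    `mu` and `sigma` stand in for the outputs mu_phi(C) and sigma_phi(C).
    """
    return [m + sigma * rng.gauss(0.0, 1.0) for m in mu]


def fm_loss(velocity, x0, x1, t, cond):
    """Mean squared error between the predicted velocity and x1 - x0.

    Assumes the linear path x_t = (1 - t) * x0 + t * x1 (an assumption).
    """
    xt = [(1.0 - t) * a + t * b for a, b in zip(x0, x1)]
    target = [b - a for a, b in zip(x0, x1)]
    pred = velocity(xt, t, cond)
    return sum((p - u) ** 2 for p, u in zip(pred, target)) / len(target)


# Toy usage with made-up numbers: one source draw, one target, one time step.
rng = random.Random(0)
x0 = sample_source(mu=[0.5, -0.5], sigma=0.1, rng=rng)
x1 = [1.0, 2.0]
t = rng.random()
loss = fm_loss(lambda x, t, c: [0.0, 0.0], x0, x1, t, cond=None)
```

Because X₀ is written as a deterministic function of μ, σ, and noise, gradients of the loss would reach the source parameters ϕ as well as θ in an autodiff framework. This is also where a collapsing σ becomes possible, which is consistent with the variance regularization the abstract calls critical.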
