Tuning Out-of-Distribution (OOD) Detectors Without Given OOD Data

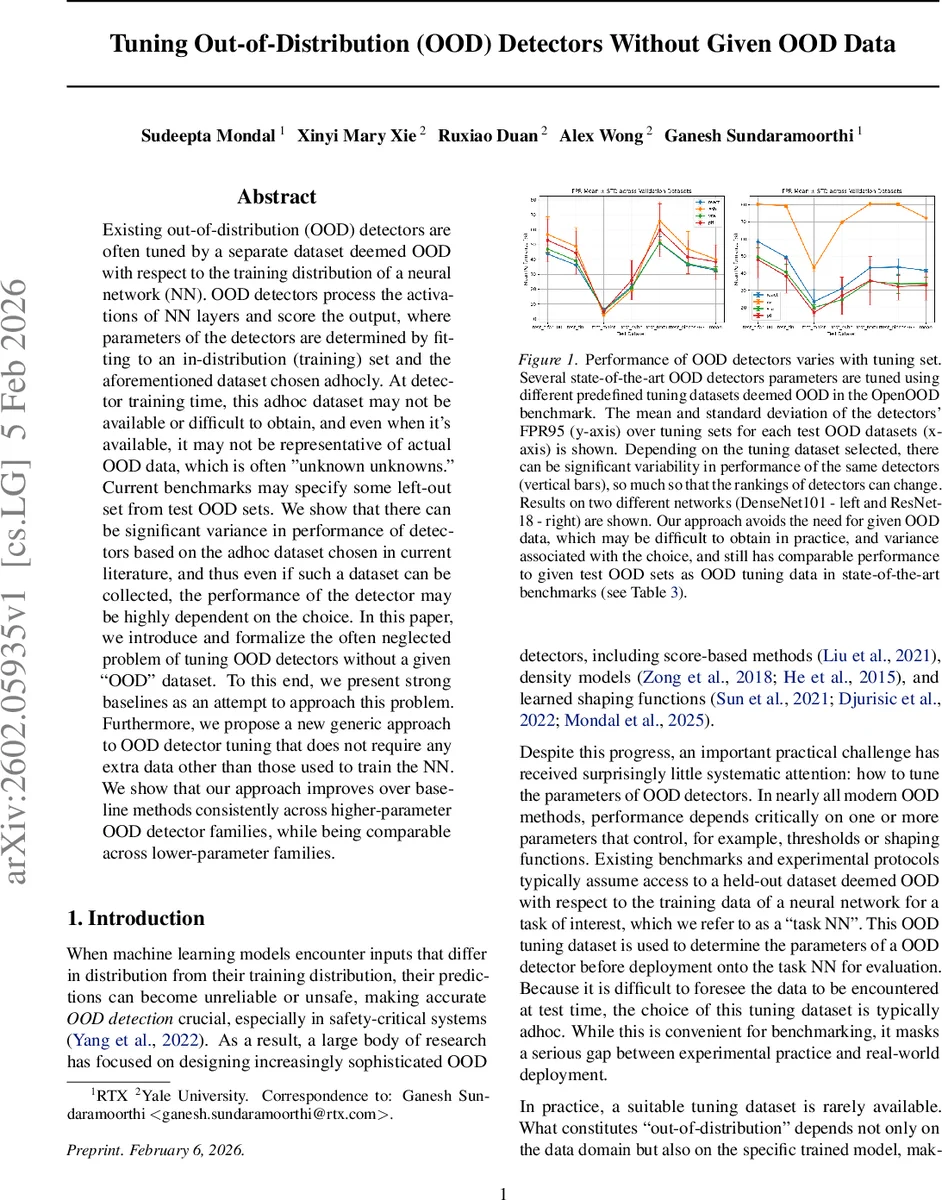

Existing out-of-distribution (OOD) detectors are often tuned on a separate dataset deemed OOD with respect to the training distribution of a neural network (NN). OOD detectors process the activations of NN layers and score the output, where the parameters of the detectors are determined by fitting to an in-distribution (training) set and the aforementioned dataset, which is chosen ad hoc. At detector training time, this ad hoc dataset may be unavailable or difficult to obtain, and even when it’s available, it may not be representative of actual OOD data, which is often “unknown unknowns.” Current benchmarks may specify some left-out set from test OOD sets. We show that there can be significant variance in the performance of detectors depending on the ad hoc dataset chosen in current literature, and thus even if such a dataset can be collected, the performance of the detector may be highly dependent on the choice. In this paper, we introduce and formalize the often neglected problem of tuning OOD detectors without a given “OOD” dataset. To this end, we present strong baselines as an attempt to approach this problem. Furthermore, we propose a new generic approach to OOD detector tuning that does not require any extra data other than those used to train the NN. We show that our approach improves over baseline methods consistently across higher-parameter OOD detector families, while being comparable across lower-parameter families.

💡 Research Summary

The paper addresses a critical gap in out‑of‑distribution (OOD) detection: most modern OOD detectors require a separate “tuning” dataset that is assumed to be OOD with respect to the training distribution. In practice such a dataset is often unavailable, expensive to collect, or unrepresentative of the unknown OOD inputs that will appear at deployment. The authors first demonstrate that detector performance varies dramatically depending on which ad‑hoc OOD set is used for tuning, sometimes even changing the ranking of methods.

To study the problem formally, they benchmark two simple synthetic baselines that have appeared in prior work: (i) Gaussian‑noise images and (ii) adversarially perturbed in‑distribution samples. While these baselines can help in limited settings (e.g., ImageNet), they do not consistently improve performance, especially for high‑parameter detector families that rely on feature shaping (e.g., ReAct, ASH, VRA).

The core contribution is a novel, fully self‑contained tuning framework that eliminates the need for any external OOD data. The idea is to generate “simulated OOD” from the training set itself by randomly leaving out a subset of classes (M out of n). This is repeated for N random splits; for each split, a network is retrained on the remaining n‑M classes, producing N network variants. The excluded classes constitute simulated OOD examples, while the retained classes serve as simulated in‑distribution data. From each variant, S validation splits are sampled, yielding a collection of simulated ID/OOD pairs.
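The class leave-out step can be illustrated with a minimal sketch. This is not the authors' code: the function names `make_leave_out_splits` and `partition_by_class` are hypothetical, and the retraining of a network on each retained class set is omitted.

```python
import random


def make_leave_out_splits(num_classes, num_left_out, num_splits, seed=0):
    """Draw `num_splits` random (retained, left_out) class partitions.

    num_classes  -> n in the text
    num_left_out -> M in the text
    num_splits   -> N in the text (one retrained network per split)
    """
    rng = random.Random(seed)
    splits = []
    for _ in range(num_splits):
        left_out = sorted(rng.sample(range(num_classes), num_left_out))
        left_out_set = set(left_out)
        retained = [c for c in range(num_classes) if c not in left_out_set]
        splits.append((retained, left_out))
    return splits


def partition_by_class(labels, left_out):
    """Split sample indices into simulated-ID and simulated-OOD groups."""
    left_out_set = set(left_out)
    sim_id = [i for i, y in enumerate(labels) if y not in left_out_set]
    sim_ood = [i for i, y in enumerate(labels) if y in left_out_set]
    return sim_id, sim_ood


if __name__ == "__main__":
    # Example: 10 classes, leave out 3, repeat for 4 random splits.
    for retained, left_out in make_leave_out_splits(10, 3, 4):
        print("retained:", retained, "left out:", left_out)
```

In a full pipeline, each `retained` set would be used to retrain one network variant, and samples from the `left_out` classes would be scored by that variant as simulated OOD inputs.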

A loss function ℓ(ϕ|M) averages the detection loss over all N networks and S validation splits, thereby reducing dependence on any particular split. The detector parameters ϕ are optimized by Bayesian optimization to minimize this loss. The number of left‑out classes M is treated as a hyper‑parameter; after finding optimal ϕ for each M, a separate validation step re‑estimates ℓ to select the best M*. The final tuned detector ϕ* is then combined with the original network trained on the full dataset.
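The averaging over N networks and S validation splits can be sketched as follows, under several assumptions. The summary does not give the exact form of the detection loss, so `toy_detection_loss` is a hypothetical stand-in (a simple threshold error rate), and plain random search is swapped in for the Bayesian optimization the paper uses. Selection of M is not shown.

```python
import random
from statistics import mean


def averaged_loss(phi, splits, detection_loss):
    """Average `detection_loss` over all networks and validation splits.

    `splits` is a list with one entry per network variant (N entries);
    each entry is a list of S (id_scores, ood_scores) pairs.
    """
    return mean(
        detection_loss(phi, id_scores, ood_scores)
        for per_network in splits
        for id_scores, ood_scores in per_network
    )


def toy_detection_loss(phi, id_scores, ood_scores):
    """Hypothetical loss: phi is a score threshold; inputs scoring >= phi are accepted as ID."""
    id_rejected = sum(s < phi for s in id_scores) / len(id_scores)
    ood_accepted = sum(s >= phi for s in ood_scores) / len(ood_scores)
    return 0.5 * (id_rejected + ood_accepted)


def tune(splits, detection_loss, sample_phi, num_trials=50, seed=0):
    """Random search over phi (a stand-in for Bayesian optimization)."""
    rng = random.Random(seed)
    best_phi, best_loss = None, float("inf")
    for _ in range(num_trials):
        phi = sample_phi(rng)
        loss = averaged_loss(phi, splits, detection_loss)
        if loss < best_loss:
            best_phi, best_loss = phi, loss
    return best_phi, best_loss


if __name__ == "__main__":
    # Two network variants (N = 2), two validation splits each (S = 2).
    toy_splits = [
        [([0.8, 0.9], [0.1, 0.2]), ([0.7, 0.9], [0.2, 0.3])],
        [([0.6, 0.8], [0.1, 0.4]), ([0.9, 0.7], [0.3, 0.2])],
    ]
    phi, loss = tune(toy_splits, toy_detection_loss, lambda rng: rng.uniform(0.0, 1.0))
    print("best threshold:", phi, "averaged loss:", loss)
```

Averaging before optimizing means a candidate ϕ is only preferred if it does well across many simulated ID/OOD pairs, which is what reduces dependence on any single split.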

Experiments span CIFAR‑10/100, ImageNet‑1K, iNaturalist and use DenseNet‑101 and ResNet‑18 backbones. Evaluation metrics include FPR95 and AUROC. Results show that the proposed method consistently outperforms the Gaussian‑noise and adversarial baselines for high‑parameter detectors, reducing FPR95 by 3–5 percentage points and improving AUROC by 1–2 points. For low‑parameter detectors (MSP, ODIN, Energy) performance is comparable, but the method removes the need for any tuning data altogether. Moreover, variance across different OOD tuning sets is dramatically reduced, indicating more stable real‑world behavior.
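For readers unfamiliar with the two metrics, here is a minimal pure-Python sketch of how they are commonly computed. It assumes the convention that a higher score means "more in-distribution" and that ID is the positive class; the paper's own evaluation code may differ in details such as tie handling or threshold interpolation.

```python
import math


def fpr_at_95_tpr(id_scores, ood_scores):
    """Fraction of OOD samples accepted when at least 95% of ID samples are accepted."""
    sorted_id = sorted(id_scores, reverse=True)
    # Smallest number of top-scoring ID samples that covers 95% of the ID set.
    k = math.ceil(0.95 * len(sorted_id))
    threshold = sorted_id[k - 1]
    return sum(s >= threshold for s in ood_scores) / len(ood_scores)


def auroc(id_scores, ood_scores):
    """Probability that a random ID sample outscores a random OOD sample (ties count half)."""
    wins = 0.0
    for a in id_scores:
        for b in ood_scores:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5
    return wins / (len(id_scores) * len(ood_scores))


if __name__ == "__main__":
    id_scores = [0.9, 0.8, 0.7, 0.6]
    ood_scores = [0.1, 0.2, 0.65, 0.3]
    print("FPR95:", fpr_at_95_tpr(id_scores, ood_scores))
    print("AUROC:", auroc(id_scores, ood_scores))
```

Lower FPR95 and higher AUROC both indicate better separation of ID and OOD inputs. The pairwise AUROC loop is quadratic in the number of samples, so it is suitable only for small illustrative inputs.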

In summary, the paper introduces the first general framework for OOD detector tuning without external OOD data, leverages the structure of the training distribution itself, and demonstrates robust, dataset‑agnostic improvements. This work paves the way for cost‑effective, privacy‑preserving deployment of OOD detection in safety‑critical applications.
