Polyglots or Multitudes? Multilingual LLM Answers to Value-laden Multiple-Choice Questions

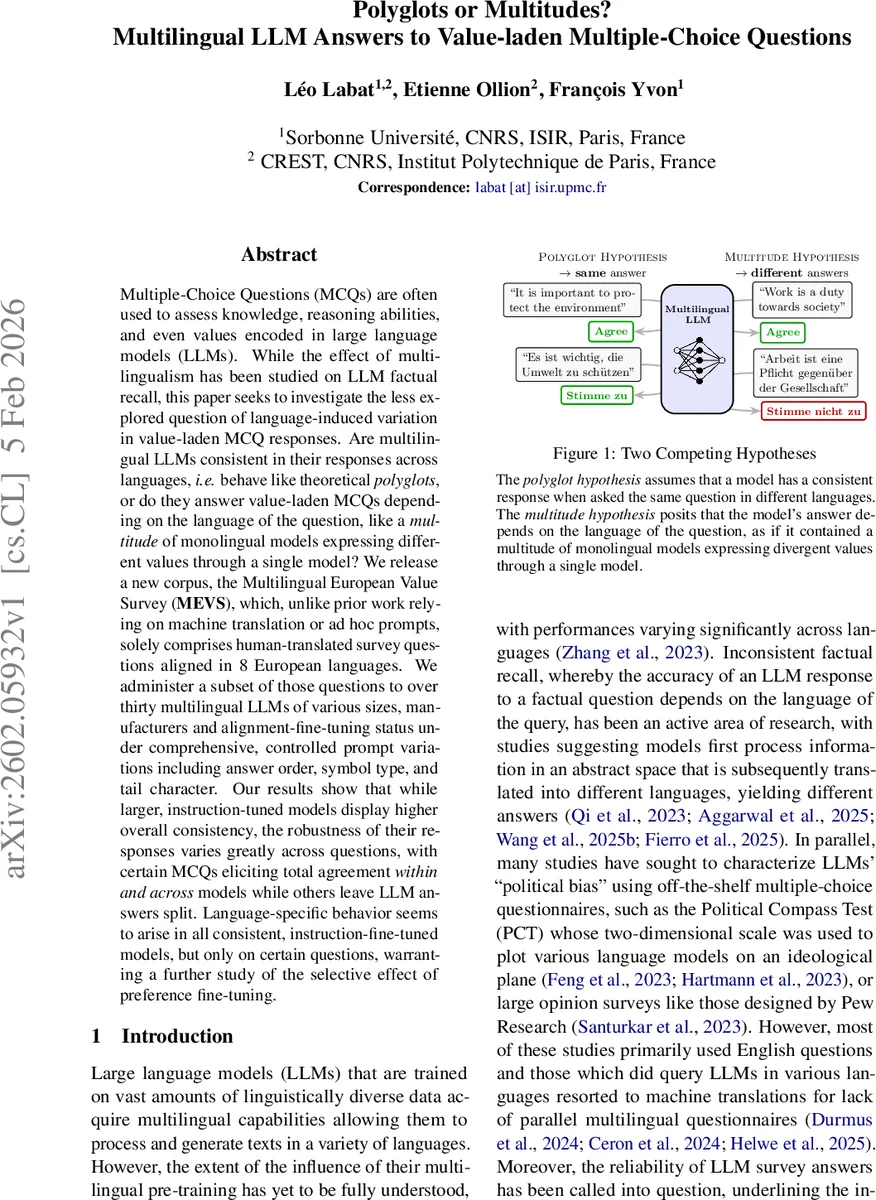

Multiple-Choice Questions (MCQs) are often used to assess knowledge, reasoning abilities, and even values encoded in large language models (LLMs). While the effect of multilingualism has been studied on LLM factual recall, this paper seeks to investigate the less explored question of language-induced variation in value-laden MCQ responses. Are multilingual LLMs consistent in their responses across languages, i.e. behave like theoretical polyglots, or do they answer value-laden MCQs depending on the language of the question, like a multitude of monolingual models expressing different values through a single model? We release a new corpus, the Multilingual European Value Survey (MEVS), which, unlike prior work relying on machine translation or ad hoc prompts, solely comprises human-translated survey questions aligned in 8 European languages. We administer a subset of those questions to over thirty multilingual LLMs of various sizes, manufacturers and alignment-fine-tuning status under comprehensive, controlled prompt variations including answer order, symbol type, and tail character. Our results show that while larger, instruction-tuned models display higher overall consistency, the robustness of their responses varies greatly across questions, with certain MCQs eliciting total agreement within and across models while others leave LLM answers split. Language-specific behavior seems to arise in all consistent, instruction-fine-tuned models, but only on certain questions, warranting a further study of the selective effect of preference fine-tuning.

💡 Research Summary

This paper investigates whether multilingual large language models (LLMs) give consistent answers to value‑laden multiple‑choice questions (MCQs) across different languages, or whether their responses vary by language as if each language were handled by a separate monolingual model. To avoid the pitfalls of machine‑translated questionnaires, the authors construct a new dataset, the Multilingual European Value Survey (MEVS), which consists of human‑translated survey items aligned across eight European languages (Czech, English, French, German, Norwegian, Portuguese, Russian, and Spanish). After correcting systematic alignment errors in the source Multilingual Corpus of Survey Questionnaires, the authors release 142 questions with identical meaning and five‑point Likert scales in all languages.

The experimental protocol evaluates more than thirty open‑source multilingual LLMs of varying size, architecture, and fine‑tuning status (including EuroLLM, LLaMA 3, Qwen 2.5, Mistral Nemo, Salamandra, Aya, Gemma 2, etc.). For each model, a development set of ten questions is administered, and the seven most consistent models are subsequently tested on an additional fourteen questions. To probe robustness, the authors generate exhaustive prompt variations for every question: all 5! = 120 possible answer orderings, two symbol types (letters vs. numbers), and three tail‑character conditions (no extra character, a space, or a newline). This yields 720 prompt variants per language, resulting in 5,760 prompts per question across the eight languages.

Answers are extracted using the Multiple‑Choice Prompting (MCP) method: the model’s log‑probabilities for each answer token (e.g., “A”, “B”, …) are collected in a single forward pass, and the token with the highest log‑probability is taken as the response. No sampling, temperature, or top‑k is involved, ensuring deterministic outputs.

Consistency is quantified with two complementary metrics: Proportion of Plurality Agreement (PPA), which is the frequency of the most common answer, and Rényi‑type entropies (min‑entropy and Shannon entropy). Both metrics are computed on the full five‑point scale and on an aggregated three‑point scale (Agree, Neutral, Disagree) to capture semantically meaningful divergences.

Key findings include: (1) Larger, instruction‑fine‑tuned models achieve higher overall consistency (average PPA > 85 % and Shannon entropy ≈ 0.3 bits), confirming prior observations that scale and alignment fine‑tuning improve stability. (2) Consistency is highly question‑dependent; some items elicit near‑perfect agreement across all models and languages, while others produce split answers even among the most consistent models. (3) Language‑specific effects are not uniform: for certain questions, German and French responses cluster on “Strongly Agree,” whereas English and Spanish responses tend toward “Neutral.” This suggests cultural or linguistic framing influences value judgments. (4) Prompt variations reveal pronounced order bias: specific answer orderings can flip the highest‑probability token, confirming earlier work on MCQ fragility. Symbol type and tail character also affect probabilities, albeit to a lesser extent.

The authors conclude that neither the “polyglot hypothesis” (language‑independent answers) nor the “multitude hypothesis” (language‑specific answers) holds universally; instead, LLM behavior lies somewhere in between, with model size and instruction tuning pushing toward polyglot‑like consistency, but question semantics and prompt nuances re‑introducing language‑specific divergence. They argue that future work should explore how preference‑fine‑tuning can mitigate language‑specific bias, and how LLM answer distributions align with human survey data across cultures. The study underscores the necessity of rigorous prompt standardization and multilingual benchmark design for reliable evaluation of LLM values.

Comments & Academic Discussion

Loading comments...

Leave a Comment