Stop Rewarding Hallucinated Steps: Faithfulness-Aware Step-Level Reinforcement Learning for Small Reasoning Models

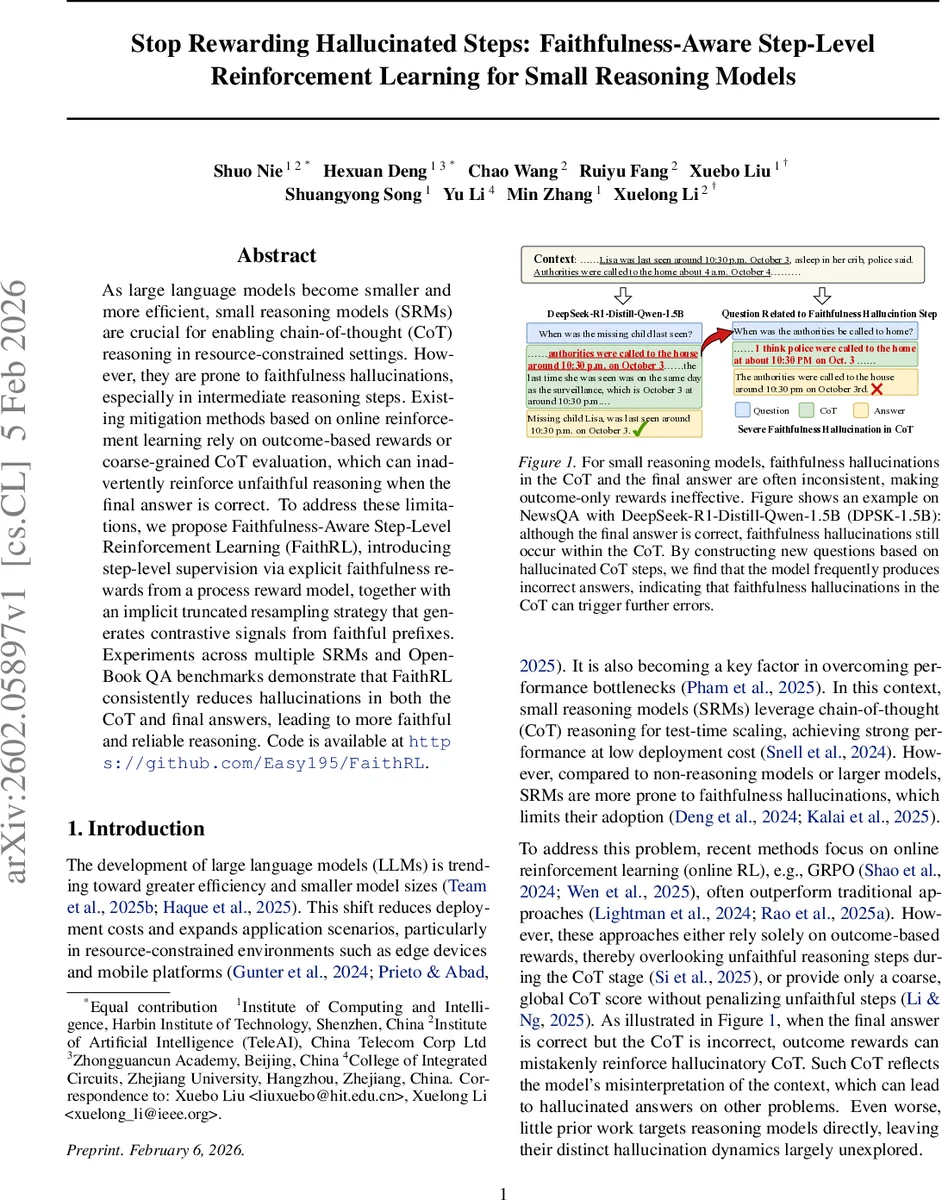

As large language models become smaller and more efficient, small reasoning models (SRMs) are crucial for enabling chain-of-thought (CoT) reasoning in resource-constrained settings. However, they are prone to faithfulness hallucinations, especially in intermediate reasoning steps. Existing mitigation methods based on online reinforcement learning rely on outcome-based rewards or coarse-grained CoT evaluation, which can inadvertently reinforce unfaithful reasoning when the final answer is correct. To address these limitations, we propose Faithfulness-Aware Step-Level Reinforcement Learning (FaithRL), introducing step-level supervision via explicit faithfulness rewards from a process reward model, together with an implicit truncated resampling strategy that generates contrastive signals from faithful prefixes. Experiments across multiple SRMs and Open-Book QA benchmarks demonstrate that FaithRL consistently reduces hallucinations in both the CoT and final answers, leading to more faithful and reliable reasoning. Code is available at https://github.com/Easy195/FaithRL.

💡 Research Summary

The paper tackles a critical problem in the emerging class of Small Reasoning Models (SRMs): while they enable chain‑of‑thought (CoT) reasoning at low computational cost, they frequently generate “faithfulness hallucinations” – intermediate reasoning steps that are not grounded in the provided context. Existing online reinforcement‑learning (RL) approaches such as GRPO, Dual‑GRPO, or FSPO typically reward only the final answer or use a coarse global CoT score. Consequently, when a model produces a correct answer despite an unfaithful CoT, the RL signal unintentionally reinforces the faulty reasoning, leading to hidden vulnerabilities that can be exploited by adversarial prompts.

To remedy this, the authors propose Faithfulness‑Aware Step‑Level Reinforcement Learning (FaithRL). FaithRL introduces two complementary mechanisms for step‑level supervision: (1) explicit rewards derived from a Process Reward Model (PRM) that evaluates the faithfulness of each CoT step against the context, and (2) implicit rewards generated through Dynamic Truncated Resampling (DTR), a tree‑structured sampling strategy that truncates a rollout as soon as an unfaithful step is detected, then regenerates the continuation from the faithful prefix. The original and regenerated rollouts form a contrastive pair; the difference in their returns provides a fine‑grained penalty for the unfaithful step. To prevent reward hacking, the PRM’s output is clipped, noise‑perturbed, and combined with the final‑answer reward using a tunable weighting λ.

The authors conduct extensive analyses on five open‑book QA datasets (SQuAD, NewsQA, TriviaQA, Natural Questions, HotpotQA) using several SRMs of varying sizes (e.g., DPSK‑1.5B, Qwen‑3‑0.6B) and larger baselines (DPSK‑7B, Qwen‑3‑8B). They introduce three metrics: (i) Faithful Rate (percentage of answers judged faithful by an LLM‑as‑a‑judge), (ii) CoT Faith (percentage of samples whose entire CoT contains no hallucinated step), and (iii) Key CoT Faith (faithfulness of the most influential reasoning path identified via a recursive perplexity‑based ablation). Results reveal that SRMs suffer far more severe CoT hallucinations than answer hallucinations (e.g., CoT Faith as low as 6 % for DPSK‑1.5B while answer Faith is 67 %). An attack experiment demonstrates that hallucinated CoT steps can be leveraged to craft new questions that cause the model to answer incorrectly with a success rate of ~60 %, confirming that correct answers alone are insufficient supervision.

FaithRL is evaluated against strong baselines (GRPO, Dual‑GRPO, FSPO, Scaf‑GRPO). Across all datasets, FaithRL improves answer F1 by an average of 3.86 percentage points and raises Faithful Rate by 3.48 points. More strikingly, CoT Faith and Key CoT Faith increase by roughly 12–15 points, indicating that the model learns to produce more grounded intermediate reasoning. The attack success rate drops by 15–20 points, showing enhanced robustness to downstream errors caused by unfaithful reasoning. Ablation studies confirm that both explicit PRM rewards and implicit DTR contrastive signals are necessary; removing either component degrades performance.

The paper also discusses limitations. The PRM itself may misclassify some steps, suggesting future work on ensemble judges or human‑in‑the‑loop verification. DTR incurs extra sampling cost when many steps are flagged, though the authors mitigate this by early truncation and selective resampling. Finally, the current focus on open‑book QA leaves open the question of how FaithRL transfers to other tasks such as multi‑choice QA, summarization, or code generation.

In conclusion, FaithRL demonstrates that step‑level, faithfulness‑aware reinforcement learning can substantially reduce hallucinations in small reasoning models without sacrificing answer accuracy. By jointly optimizing for answer correctness and intermediate grounding, the method enables SRMs to deliver reliable, interpretable reasoning even under tight computational budgets, paving the way for trustworthy AI deployment on edge devices and other resource‑constrained platforms.

Comments & Academic Discussion

Loading comments...

Leave a Comment