"It Talks Like a Patient, But Feels Different": Co-Designing AI Standardized Patients with Medical Learners

Standardized patients (SPs) play a central role in clinical communication training but are costly, difficult to scale, and inconsistent. Large language model (LLM)-based AI standardized patients (AI-SPs) promise flexible, on-demand practice, yet learners often report that they "talk like a patient but feel different." We interviewed 12 clinical-year medical students and conducted three co-design workshops to examine how learners experience the constraints of SP encounters and what they expect from AI-SPs. We identified six learner-centered needs, translated them into AI-SP design requirements, and synthesized a conceptual workflow. Our findings position AI-SPs as tools for deliberate practice and show that instructional usability, rather than conversational realism alone, drives learner trust, engagement, and educational value.

💡 Research Summary

This paper investigates how medical learners experience the constraints of traditional standardized patients (SPs) and how they envision AI‑driven standardized patients (AI‑SPs) that can overcome those limitations. The authors conducted a two‑phase qualitative study with twelve clinical‑year medical students from two schools. Phase 1 consisted of semi‑structured remote interviews (≈45 min each) that explored learners’ perceptions of SP realism, emotional load, feedback timing, and the inability to practice physical examinations. Thematic analysis revealed six recurring needs (T1–T6): (1) alignment of simulation fidelity with learning goals, (2) avoidance of predictable “game‑like” information release, (3) inclusion of evidence‑gathering beyond dialogue, (4) real‑time support during breakdowns, (5) timely, context‑rich feedback, and (6) opportunities to practice emotional labor and shared decision‑making.

Phase 2 involved three in‑person co‑design workshops where the same participants sketched their “ideal AI‑SP,” rotated ideas, and refined concepts. The workshop data were mapped onto the interview‑derived needs, yielding six concrete design requirements (D1–D6):

- D1 – Goal‑aligned fidelity modes (e.g., OSCE vs. daily practice) that define distinct realism targets and evaluation criteria.

- D2 – Policy‑driven information release: rules that always surface visible cues (e.g., jaundice) but reveal deeper clinical data only when the learner asks for it, so that information release does not become predictable.

- D3 – Multimodal evidence interaction, adding a virtual body map, examination checklist, and test‑ordering interface so learners can practice physical exams and diagnostic work‑up.

- D4 – Hybrid input as a reliability layer: voice remains primary, but text or keyword shortcuts appear when speech recognition fails or conversation stalls, reducing anxiety.

- D5 – Learner‑controlled scaffolding and dual‑loop feedback: optional prompts and adjustable difficulty support a progression from coached practice to exam‑style stress testing; lightweight in‑action cues (e.g., trust or affect shifts) are complemented by a structured post‑session debrief that reconstructs missed questions and links errors to knowledge resources.

- D6 – Controllable affect and relational variability: selectable patient personalities and emotional responses, with replayable scenarios, enable deliberate practice of explanation, negotiation, and shared decision‑making.
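
To make D2 more concrete, the following is a minimal sketch of an information release policy, not the authors' implementation. All names (`Release`, `Finding`, `ReleasePolicy`, the `triggers` keywords, and the sample findings) are hypothetical, and plain keyword matching stands in for whatever question-understanding step a real LLM-based system would use.

```python
from dataclasses import dataclass, field
from enum import Enum


class Release(Enum):
    ALWAYS_VISIBLE = "always_visible"  # observable cue, shown without asking
    ON_INQUIRY = "on_inquiry"          # revealed only after a relevant question


@dataclass(frozen=True)
class Finding:
    name: str
    detail: str
    release: Release
    # Hypothetical keywords that unlock an inquiry-dependent finding.
    triggers: frozenset = frozenset()


@dataclass
class ReleasePolicy:
    findings: list
    revealed: set = field(default_factory=set)

    def visible_cues(self):
        """Cues the learner can observe at the start of the encounter."""
        return [f.detail for f in self.findings
                if f.release is Release.ALWAYS_VISIBLE]

    def respond(self, question):
        """Return inquiry-dependent details unlocked by this question."""
        words = set(question.lower().replace("?", "").split())
        unlocked = []
        for f in self.findings:
            if f.release is Release.ON_INQUIRY and words & f.triggers:
                self.revealed.add(f.name)
                unlocked.append(f.detail)
        return unlocked


# Illustrative case data (assumed, not taken from the study).
policy = ReleasePolicy(findings=[
    Finding("jaundice", "The patient's skin and eyes look yellow.",
            Release.ALWAYS_VISIBLE),
    Finding("alcohol_history", "I drink most evenings.",
            Release.ON_INQUIRY, frozenset({"alcohol", "drink"})),
])

print(policy.visible_cues())
print(policy.respond("Do you drink alcohol?"))
print(policy.respond("How are you feeling today?"))
```

Keeping the policy as data separate from the dialogue model is one way to make the release behavior inspectable and adjustable per fidelity mode, which fits the controllability theme of the requirements.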

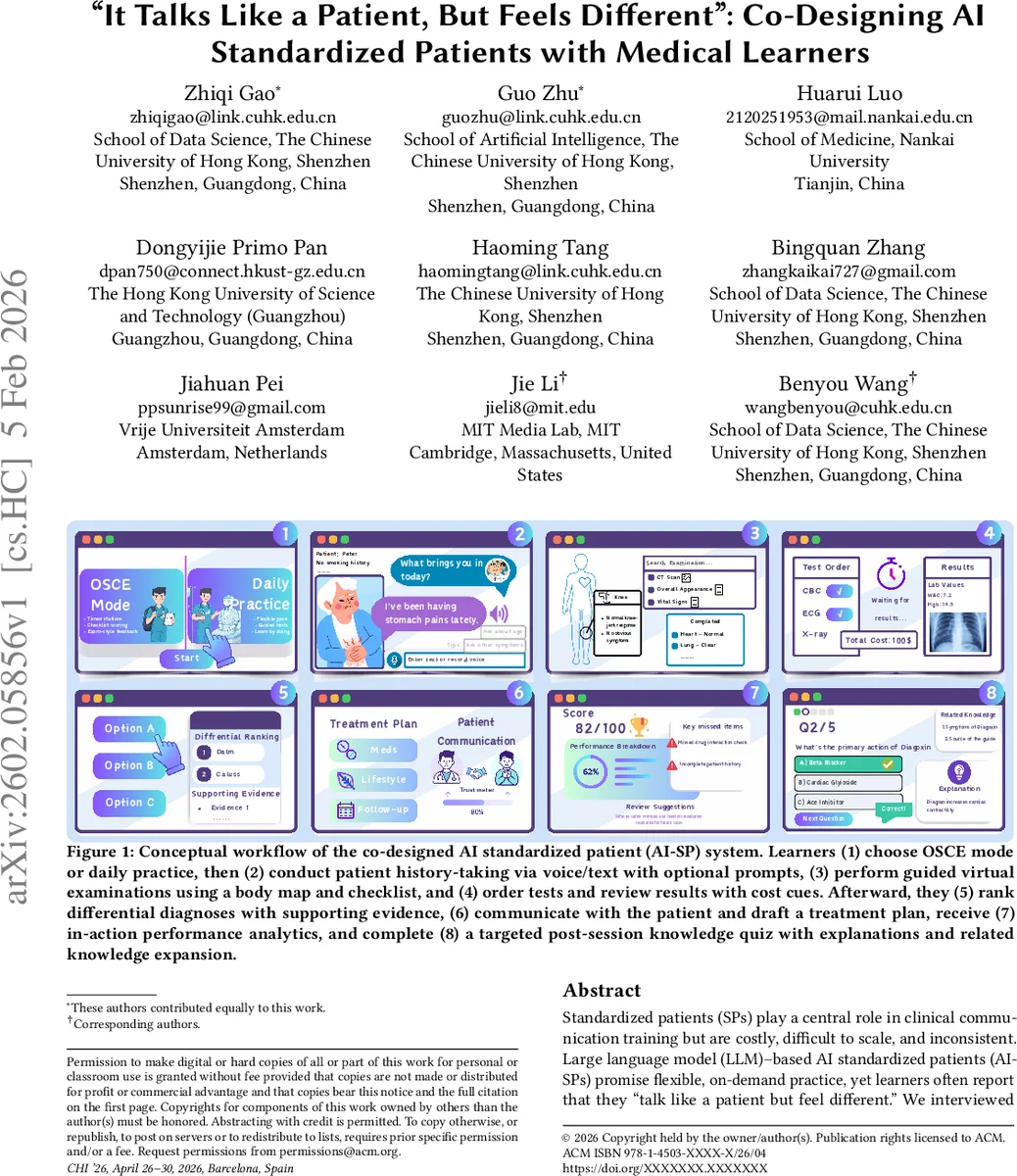

These requirements are integrated into a conceptual AI‑SP workflow (Figure 1) comprising eight stages: (1) mode selection, (2) voice/text history‑taking, (3) guided virtual examination, (4) test ordering with cost cues, (5) differential diagnosis ranking, (6) patient communication and treatment planning, (7) in‑action performance analytics (trust, affect, cue‑based hints), and (8) a targeted post‑session knowledge quiz with explanations and related content. The workflow emphasizes three quality dimensions identified in the discussion: controllability (what can vary and why), observability (making learning progress visible), and learnability (how feedback drives improvement).
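
The eight stages can be sketched as an ordered enumeration. This is an illustrative simplification under stated assumptions: the identifiers `Stage`, `next_stage`, and `walk` are hypothetical, and the strictly linear ordering is assumed here for clarity, whereas in-action analytics (stage 7) may in practice run alongside the earlier stages.

```python
from enum import IntEnum
from typing import Iterator, Optional


class Stage(IntEnum):
    """Stages of the conceptual AI-SP workflow, numbered as in the summary."""
    MODE_SELECTION = 1
    HISTORY_TAKING = 2              # voice or text
    VIRTUAL_EXAMINATION = 3         # guided
    TEST_ORDERING = 4               # with cost cues
    DIFFERENTIAL_RANKING = 5
    COMMUNICATION_AND_PLANNING = 6
    IN_ACTION_ANALYTICS = 7         # trust, affect, cue-based hints
    POST_SESSION_QUIZ = 8


def next_stage(current: Stage) -> Optional[Stage]:
    """Return the stage that follows `current`, or None after the last one."""
    if current is Stage.POST_SESSION_QUIZ:
        return None
    return Stage(current + 1)


def walk(start: Stage = Stage.MODE_SELECTION) -> Iterator[Stage]:
    """Yield stages in order, beginning at `start`."""
    stage: Optional[Stage] = start
    while stage is not None:
        yield stage
        stage = next_stage(stage)


for s in walk():
    print(s.value, s.name)
```

An explicit stage model like this gives the session a visible position at all times, which is one way to support the observability dimension described above.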

The authors argue that conversational fluency alone does not earn learner trust; instead, instructional usability—clear purpose, transparent limits, and actionable feedback—determines engagement and educational value. By allowing learners to configure fidelity, information flow, multimodal evidence collection, and affective behavior, AI‑SPs become transparent, reliable tools for deliberate practice rather than opaque “chatbots.” The co‑design approach proved effective in surfacing real‑world constraints and translating them into implementable system specifications.

In sum, the study contributes (1) an empirically grounded set of learner‑centered design requirements for LLM‑based AI standardized patients, (2) a detailed workflow that operationalizes those requirements, and (3) a conceptual shift from pursuing pure conversational realism toward prioritizing instructional usability, controllability, and dual‑loop feedback. These insights provide a roadmap for developers and educators seeking to integrate AI‑SPs into medical curricula, especially for high‑stakes assessments such as OSCEs and for ongoing clinical communication skill development.
