RRAttention: Dynamic Block Sparse Attention via Per-Head Round-Robin Shifts for Long-Context Inference

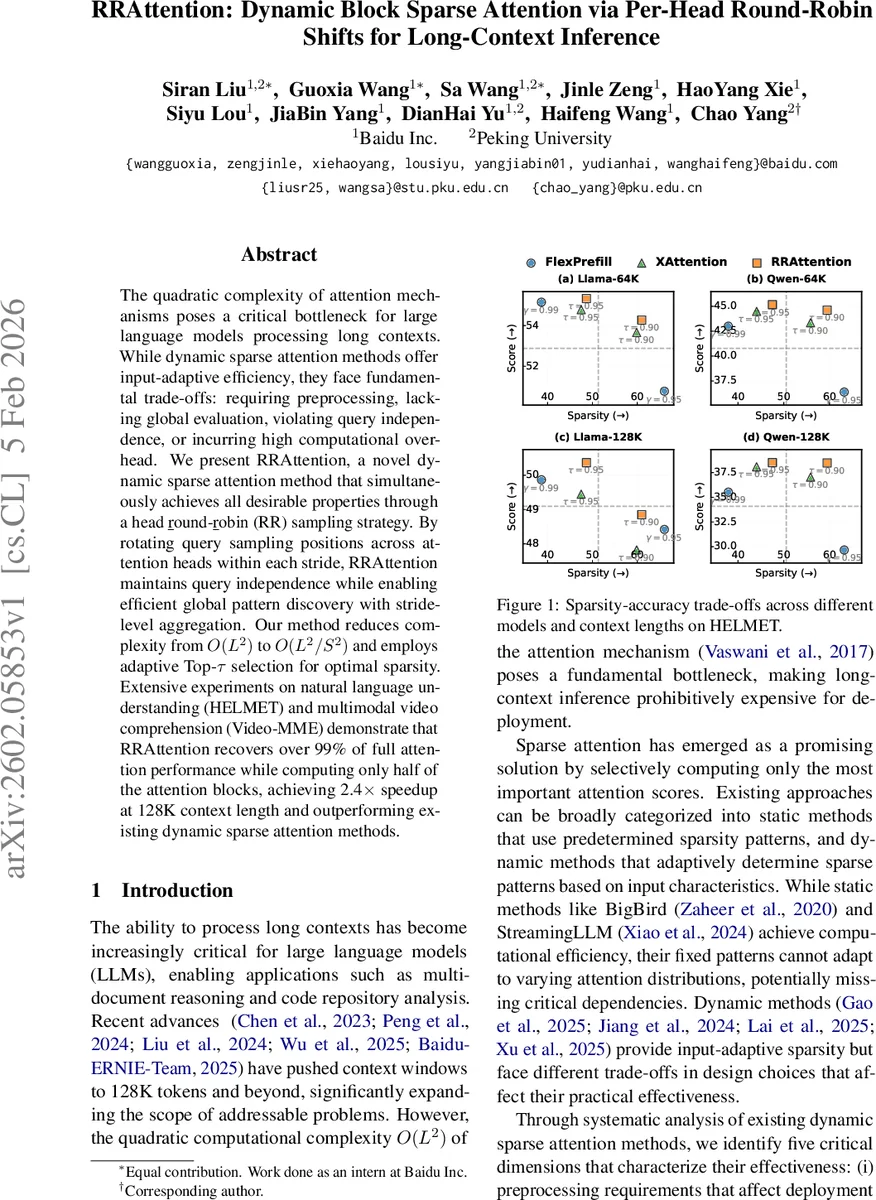

The quadratic complexity of attention mechanisms poses a critical bottleneck for large language models processing long contexts. While dynamic sparse attention methods offer input-adaptive efficiency, they face fundamental trade-offs: requiring preprocessing, lacking global evaluation, violating query independence, or incurring high computational overhead. We present RRAttention, a novel dynamic sparse attention method that simultaneously achieves all desirable properties through a head \underline{r}ound-\underline{r}obin (RR) sampling strategy. By rotating query sampling positions across attention heads within each stride, RRAttention maintains query independence while enabling efficient global pattern discovery with stride-level aggregation. Our method reduces complexity from $O(L^2)$ to $O(L^2/S^2)$ and employs adaptive Top-$τ$ selection for optimal sparsity. Extensive experiments on natural language understanding (HELMET) and multimodal video comprehension (Video-MME) demonstrate that RRAttention recovers over 99% of full attention performance while computing only half of the attention blocks, achieving 2.4$\times$ speedup at 128K context length and outperforming existing dynamic sparse attention methods.

💡 Research Summary

RRAttention addresses the quadratic cost of self‑attention in large language models when processing very long sequences (e.g., 128 K tokens). Existing dynamic sparse attention methods improve efficiency by adapting sparsity patterns to the input, but they each sacrifice at least one of five desirable properties: (i) preprocessing‑free deployment, (ii) global evaluation of attention importance, (iii) query independence, (iv) pattern‑agnostic operation across heads, and (v) efficient softmax granularity. The authors systematically analyze prior work (SeerAttention, MInference, FlexPreFill, XAttention) and show that none simultaneously satisfies all criteria.

The core contribution of RRAttention is a head round‑robin (RR) sampling strategy. The input sequence of length L is divided into strides of size S (S ≪ L). For each attention head h, a single query token is sampled from each stride at position

(P(i, h) = iS + (S-1-(h \bmod S))).

Thus, across the H heads, every token position within a stride is eventually sampled, guaranteeing that no part of the sequence is systematically ignored. This sampling preserves query independence because each head computes its own attention distribution without mixing information from other queries.

After sampling, the method aggregates keys within each stride, computes a dot‑product between the sampled query and the aggregated key vector, and normalizes by (1/(S\sqrt{d})). The resulting stride‑level importance scores (I^{(h)}{i,j}) are passed through a row‑wise softmax to obtain probabilities (P^{(h)}{i,j}). Because the computation operates on ((L/S) \times (L/S)) matrices, the complexity drops from (O(L^2)) to (O(L^2/S^2)) while still evaluating the entire key space (global evaluation).

The stride‑level probabilities are then summed into block‑level scores (S^{(h)}{m,n}) for each query block m and key block n. For each query block, a Top‑τ threshold is applied: blocks are sorted by importance and the minimal set whose cumulative importance exceeds τ is retained. This yields a dynamic block selection matrix (B{\text{dynamic}}). To guarantee that the final query block always attends to all previous tokens (important for causal models), a static matrix (B_{\text{static}}) that keeps all blocks for the last query block is OR‑combined with (B_{\text{dynamic}}).

RRAttention therefore satisfies all five desiderata:

- No preprocessing – the method works directly on the raw input without offline pattern search or distillation.

- Global evaluation – stride‑level aggregation considers every key token.

- Query independence – each head’s sampled query is processed separately.

- Pattern‑agnostic – the same RR scheme applies uniformly across heads.

- Efficient softmax granularity – softmax is performed at stride level, reducing the cost of fine‑grained normalization.

The authors evaluate RRAttention on two extensive benchmarks:

- HELMET, a long‑context natural‑language understanding suite covering retrieval‑augmented generation, multi‑shot in‑context learning, long‑document QA, summarization, and synthetic recall.

- Video‑MME, a multimodal video‑understanding benchmark for vision‑language models.

Two large language models (Meta‑LLaMA‑3.1‑8B‑Instruct and Qwen2.5‑7B‑Instruct) and a vision‑language model (Qwen2‑VL‑7B‑Instruct) serve as backbones. Baselines include FlashAttention (dense), FlexPreFill, and XAttention, each tuned with multiple sparsity thresholds.

Key results:

- Accuracy – RRAttention recovers > 99 % of full‑attention performance across all HELMET tasks, outperforming FlexPreFill and XAttention by 0.5–1.0 % absolute on average.

- Speed – At a context length of 128 K tokens, RRAttention achieves a 2.4× end‑to‑end speedup over dense attention, and 10–30 % faster than the best prior dynamic sparse method.

- Computation – Only half of the attention blocks are computed; pattern‑search overhead is reduced by 18.2 % relative to XAttention.

- Scalability – Additional experiments (Appendix B) on 200 K‑token sequences and larger models (up to 30 B parameters) confirm that the trade‑off holds at larger scales.

The paper also discusses limitations and future work. The stride size S and Top‑τ threshold are currently hyperparameters; learning them adaptively (e.g., via meta‑learning or reinforcement learning) could further improve efficiency. Moreover, while block‑level softmax is efficient, some tasks may benefit from token‑level refinement, suggesting a hybrid approach. Finally, extending the method to encoder‑decoder architectures and integrating it with retrieval‑augmented pipelines are promising directions.

In summary, RRAttention introduces a simple yet powerful head‑round‑robin sampling mechanism that enables global, query‑independent, pattern‑agnostic sparse attention with stride‑level softmax. It reduces the asymptotic complexity of attention, delivers state‑of‑the‑art speed‑accuracy trade‑offs on long‑context language and multimodal tasks, and does so without any preprocessing overhead, making it a practical solution for deploying LLMs in real‑world, ultra‑long‑context scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment