Allocentric Perceiver: Disentangling Allocentric Reasoning from Egocentric Visual Priors via Frame Instantiation

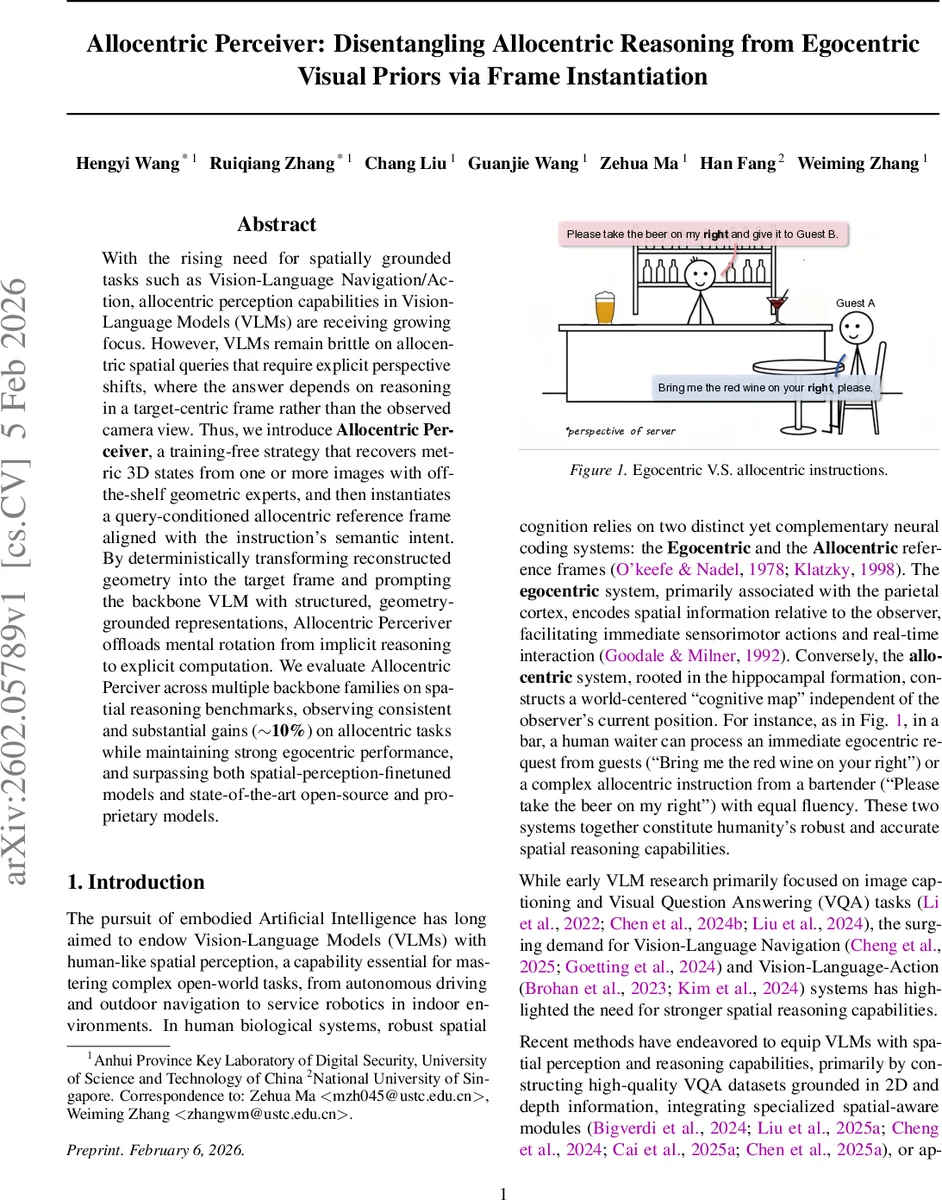

With the rising need for spatially grounded tasks such as Vision-Language Navigation/Action, allocentric perception capabilities in Vision-Language Models (VLMs) are receiving growing focus. However, VLMs remain brittle on allocentric spatial queries that require explicit perspective shifts, where the answer depends on reasoning in a target-centric frame rather than the observed camera view. Thus, we introduce Allocentric Perceiver, a training-free strategy that recovers metric 3D states from one or more images with off-the-shelf geometric experts, and then instantiates a query-conditioned allocentric reference frame aligned with the instruction’s semantic intent. By deterministically transforming reconstructed geometry into the target frame and prompting the backbone VLM with structured, geometry-grounded representations, Allocentric Perceriver offloads mental rotation from implicit reasoning to explicit computation. We evaluate Allocentric Perciver across multiple backbone families on spatial reasoning benchmarks, observing consistent and substantial gains ($\sim$10%) on allocentric tasks while maintaining strong egocentric performance, and surpassing both spatial-perception-finetuned models and state-of-the-art open-source and proprietary models.

💡 Research Summary

The paper tackles a fundamental weakness of current Vision‑Language Models (VLMs): their inability to reliably answer spatial questions that require an allocentric (world‑centered) perspective rather than the egocentric (camera‑centered) view they are trained on. The authors identify a “Reference Frame Gap” caused by visual‑semantic ambiguity in large image‑text corpora, where the same textual relation can be true in one frame and false in another. A feasibility study shows that removing visual input actually improves allocentric performance while dramatically hurting egocentric performance, confirming that egocentric visual priors interfere with allocentric reasoning.

To close this gap, the authors propose Allocentric Perceiver, a training‑free pipeline that (1) extracts metric 3D information from one or more images using off‑the‑shelf perception modules, (2) constructs a query‑conditioned allocentric reference frame aligned with the semantic intent of the question, and (3) feeds a geometry‑grounded textual representation of the transformed scene to the backbone VLM. The three stages are:

-

Metric‑Aware Egocentric Perception – The pipeline first parses the natural‑language query to obtain a set of object descriptors. Using LangSAM, it detects candidate regions for each descriptor, applying a hierarchical semantic relaxation to handle long, attribute‑rich phrases. Depth‑Anything‑3 together with known camera intrinsics and extrinsics lifts the segmented pixels into 3D point clouds. Multi‑view observations are merged into a unified world coordinate system, and DBSCAN clustering removes noise and resolves fragmented instances. The centroid of each object’s point cloud becomes its metric state.

-

Dynamic Frame Instantiation – The system parses the query to identify the reference object (e.g., “the man” in “Where is the bag relative to the man?”). The centroid of this reference object becomes the origin of a new allocentric frame. A rotation matrix is built from three orthonormal axes (right, down, front). The front axis is inferred either from intrinsic semantic cues (e.g., a person’s facing direction) or from extrinsic relational clues present in the query. This yields an explicit transformation (T: W \rightarrow F_{allo}) that re‑expresses all object coordinates in the allocentric frame.

-

Symbolic Geometry Reasoning – After transformation, each object’s position is expressed as a structured textual snippet (e.g., “Clock (3.97, 0.55, 1.45)”). The VLM receives a prompt that contains these geometry‑grounded facts together with the original question. Because the VLM now operates on unambiguous, metric data, it no longer needs to perform implicit mental rotation; the spatial reasoning reduces to simple relational inference over the provided numbers.

The authors evaluate the method on the ViewSpatial‑Bench, which contains two egocentric and three allocentric tasks, using several state‑of‑the‑art VLMs (Qwen2.5‑VL‑7B, InternVL2.5‑8B, etc.). Allocentric Perceiver consistently improves allocentric accuracy by roughly 10 % across all backbones, while egocentric performance is either maintained or slightly enhanced (1‑3 %). Compared against fine‑tuned spatial models, prompting‑only baselines, and recent 3D‑LLMs that operate in a global coordinate system, the proposed approach achieves comparable or superior results without any additional training.

Key contributions are: (i) formalizing the Reference Frame Gap and providing empirical evidence of its impact; (ii) introducing a training‑free, modular framework that decouples allocentric reasoning from egocentric visual priors via explicit metric reconstruction and frame transformation; and (iii) demonstrating backbone‑agnostic performance gains on multiple benchmarks. Limitations include reliance on the quality of external depth and segmentation models, and the current focus on single‑reference‑object frames rather than more complex relational frames. Future work may explore richer multi‑object reference frames, real‑time integration for robotic control, and tighter coupling between perception modules and language models.

In summary, Allocentric Perceiver offers a practical solution for endowing VLMs with true perspective‑taking abilities, paving the way for more reliable embodied AI applications such as navigation, manipulation, and augmented reality interaction.

Comments & Academic Discussion

Loading comments...

Leave a Comment