Balancing FP8 Computation Accuracy and Efficiency on Digital CIM via Shift-Aware On-the-fly Aligned-Mantissa Bitwidth Prediction

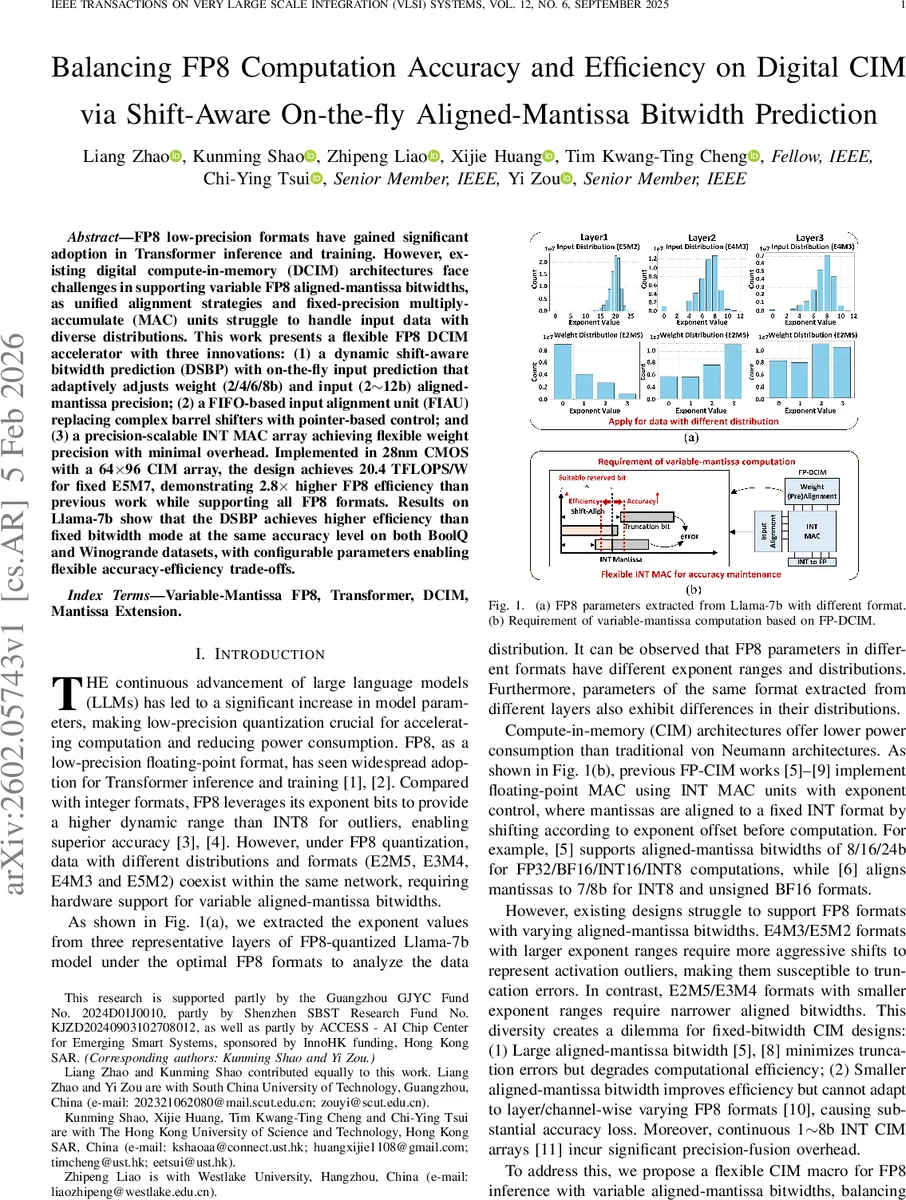

FP8 low-precision formats have gained significant adoption in Transformer inference and training. However, existing digital compute-in-memory (DCIM) architectures face challenges in supporting variable FP8 aligned-mantissa bitwidths, as unified alignment strategies and fixed-precision multiply-accumulate (MAC) units struggle to handle input data with diverse distributions. This work presents a flexible FP8 DCIM accelerator with three innovations: (1) a dynamic shift-aware bitwidth prediction (DSBP) with on-the-fly input prediction that adaptively adjusts weight (2/4/6/8b) and input (2$\sim$12b) aligned-mantissa precision; (2) a FIFO-based input alignment unit (FIAU) replacing complex barrel shifters with pointer-based control; and (3) a precision-scalable INT MAC array achieving flexible weight precision with minimal overhead. Implemented in 28nm CMOS with a 64$\times$96 CIM array, the design achieves 20.4 TFLOPS/W for fixed E5M7, demonstrating 2.8$\times$ higher FP8 efficiency than previous work while supporting all FP8 formats. Results on Llama-7b show that the DSBP achieves higher efficiency than fixed bitwidth mode at the same accuracy level on both BoolQ and Winogrande datasets, with configurable parameters enabling flexible accuracy-efficiency trade-offs.

💡 Research Summary

The paper addresses a critical limitation of existing digital compute‑in‑memory (DCIM) accelerators when handling the emerging FP8 low‑precision formats used in large language models (LLMs). FP8 comes in several variants (E2M5, E3M4, E4M3, E5M2) that differ in exponent range and mantissa size. In a single network, different layers may use different FP8 formats, which forces a hardware design to either allocate a large, fixed mantissa alignment width (wasting area and power) or a small width (causing severe truncation errors and accuracy loss).

To solve this, the authors propose a three‑pronged architecture:

-

Dynamic Shift‑Aware Bitwidth Prediction (DSBP) – a software‑hardware co‑design algorithm that, for each column‑wise group of 64 elements, computes the exponent offset (shiftᵢ) of every operand, weights the offsets by 2⁻ˢʰⁱᶠᵗ, and forms a weighted average. This average is scaled by a configurable factor k and combined with a fixed bias B_fix to produce a dynamic aligned‑mantissa bitwidth B_g. The algorithm restricts the output to a small set of admissible widths (1/3/5/7 b for weights, 1 ~ 11 b for inputs) and rounds up to the nearest legal value, ensuring hardware friendliness.

-

Mantissa Prediction Unit (MPU) – a dedicated on‑chip circuit that implements the DSBP formula in three pipeline stages. Stage 1 computes shiftᵢ·2⁻ˢʰⁱᶠᵗ and 2⁻ˢʰⁱᶠᵗ using simple shifters; Stage 2 aggregates the 64 results with two 64‑input adder trees; Stage 3 performs the division via an 8‑bit reciprocal lookup table, multiplies by k, adds B_fix, and saturates to a 5‑bit result. The MPU occupies only 7 % of the total macro area and is clock‑gated in fixed‑width mode.

-

FIFO‑based Input Alignment Unit (FIAU) – replaces traditional barrel shifters with a pointer‑controlled FIFO. Mantissas are stored in two’s‑complement form, written MSB‑first. A read pointer holds at the most‑significant bit for (exponent offset + 1) cycles, thereby achieving the required right‑shift effect without combinational shifter logic. A “save_len” signal determines when the pointer jumps to the next mantissa, allowing flexible output precision. Compared with barrel shifters, FIAU reduces area by 21.7 % and power by 34.1 %.

The accelerator also features a precision‑scalable INT MAC array that supports 2 ~ 12 b input precision and 2/4/6/8 b weight precision. For 2/4/8 b weight modes, the array uses a regular fusion path; for the 6 b weight mode, three adjacent 64 × 2 b MAC columns are fused using 4‑2 compressors and full adders, adding only a modest amount of extra circuitry.

Implemented in 28 nm CMOS, the macro comprises a 64 × 96 6T SRAM array, operates at 0.6 ~ 0.9 V and 50 ~ 250 MHz, and achieves 20.4 TFLOPS/W for the fixed E5M7 format—2.8× higher than prior FP‑CIM works.

Experimental validation uses the Llama‑7B model on the BoolQ and Winogrande benchmarks. With a “Precise” configuration (k = 1, B_fix = 6/5) the average input/weight bitwidth is 7.65/6.61 b, delivering 22.5 TFLOPS/W while matching the FP8 baseline accuracy (75.0 % / 70.1 %). An “Efficient” configuration (k = 2, B_fix = 4/4) reduces the average bitwidth to 5.58/6.08 b, boosting energy efficiency to 33.7 TFLOPS/W with only a negligible accuracy drop. Across 12 design points (six fixed‑width and six DSBP‑driven), the dynamic scheme consistently lies on a Pareto frontier, offering higher efficiency at comparable accuracy.

A further test on ResNet‑18 (ImageNet) confirms generality: the Precise setting reaches 69.6 % top‑1 accuracy (equal to FP8), while the Efficient setting attains 68.1 % with 1.7× higher energy efficiency.

Area and power breakdowns show that the fusion unit occupies 14.6 % of the macro, with only 9.4 % dedicated to non‑reused datapaths. Compared with prior works (SOTAC, ESSCIRC, ISCAS), the proposed design achieves superior TFLOPS/W and TOPS/W while supporting all FP8 formats and dynamic mantissa scaling—features absent in earlier accelerators.

In summary, the paper delivers a practical, low‑power DCIM accelerator that dynamically adapts mantissa alignment to the statistical properties of each layer, replaces costly barrel shifters with a lightweight FIFO mechanism, and provides a flexible INT MAC array. The result is a system that can handle heterogeneous FP8 formats without sacrificing accuracy, while delivering up to 2 × higher energy efficiency compared to fixed‑precision baselines.

Comments & Academic Discussion

Loading comments...

Leave a Comment