Mitigating Hallucination in Financial Retrieval-Augmented Generation via Fine-Grained Knowledge Verification

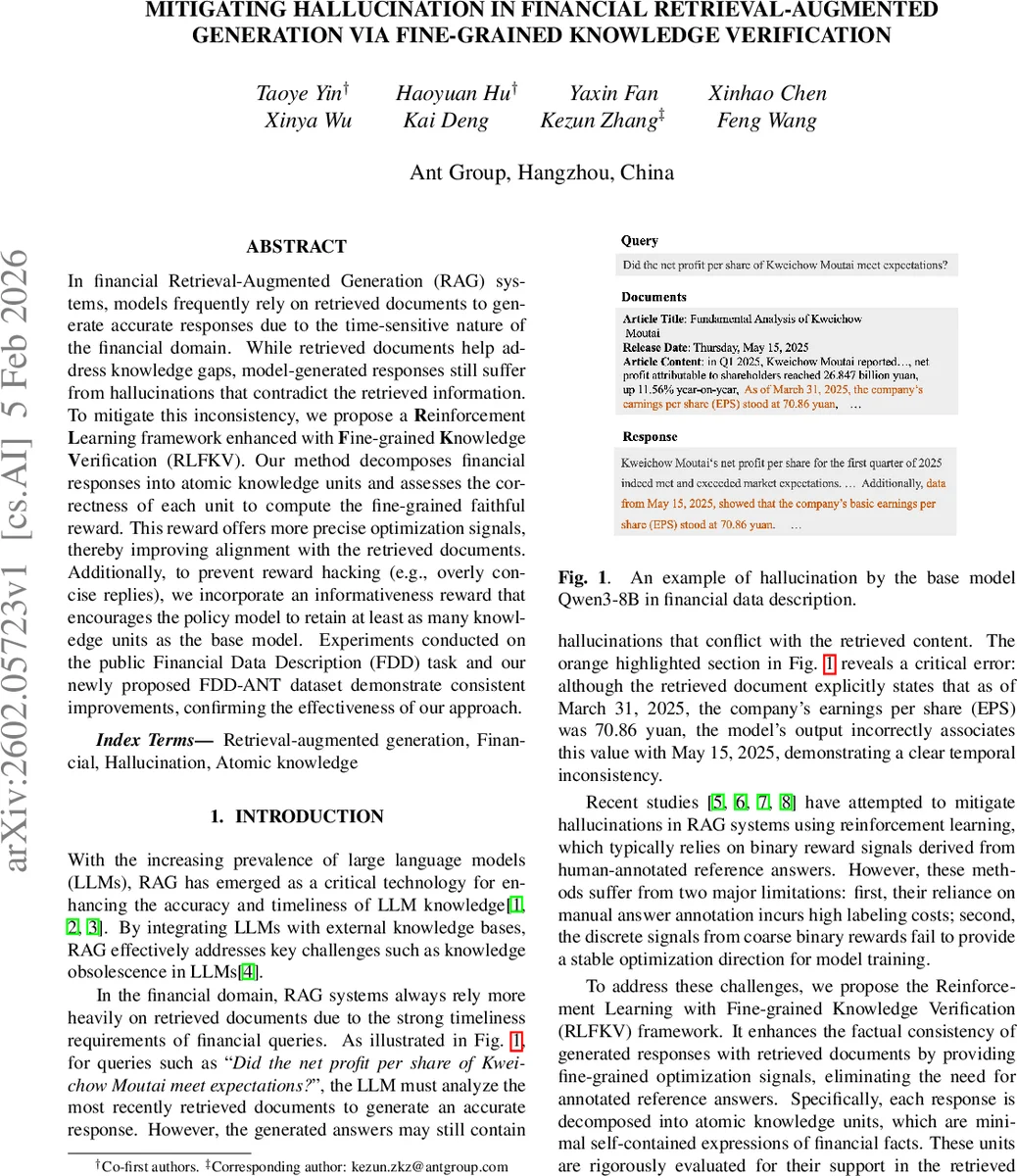

In financial Retrieval-Augmented Generation (RAG) systems, models frequently rely on retrieved documents to generate accurate responses due to the time-sensitive nature of the financial domain. While retrieved documents help address knowledge gaps, model-generated responses still suffer from hallucinations that contradict the retrieved information. To mitigate this inconsistency, we propose a Reinforcement Learning framework enhanced with Fine-grained Knowledge Verification (RLFKV). Our method decomposes financial responses into atomic knowledge units and assesses the correctness of each unit to compute the fine-grained faithful reward. This reward offers more precise optimization signals, thereby improving alignment with the retrieved documents. Additionally, to prevent reward hacking (e.g., overly concise replies), we incorporate an informativeness reward that encourages the policy model to retain at least as many knowledge units as the base model. Experiments conducted on the public Financial Data Description (FDD) task and our newly proposed FDD-ANT dataset demonstrate consistent improvements, confirming the effectiveness of our approach.

💡 Research Summary

The paper addresses the persistent problem of factual hallucination in financial Retrieval‑Augmented Generation (RAG) systems, where even after retrieving up‑to‑date documents, large language models (LLMs) still produce answers that contradict the source material. Existing reinforcement‑learning (RL) approaches mitigate this issue by using binary rewards derived from human‑annotated reference answers, but they suffer from high annotation cost and coarse‑grained feedback that provides unstable training signals.

To overcome these limitations, the authors propose a novel RL framework called Reinforcement Learning with Fine‑grained Knowledge Verification (RLFKV). The core idea is to decompose each generated response into a set of atomic knowledge units (AKUs) that capture the minimal factual elements required in a financial answer. An AKU is defined as a quadruple (entity, metric, value, timestamp), reflecting the strict temporal sensitivity and quantitative nature of financial texts. For example, the sentence “As of March 31 2025, earnings per share were 70.86 yuan” becomes (Kweichow Moutai, EPS, 70.86 yuan, 2025‑03‑31).

The decomposition is performed automatically by a large evaluation model (Qwen‑3‑32B) guided by a specially crafted prompt and a financial metric dictionary. Once the AKUs are extracted, the same evaluation model verifies each unit against the retrieved documents D, producing a binary consistency score s_i ∈ {0,1} for each unit.

These fine‑grained verification results are transformed into two complementary reward signals:

-

Faithfulness reward (r_f) – penalizes the number of incorrect AKUs using an exponential decay factor η and an upper bound γ on the error count. The formulation r_f = 1 / (e^η·min(score,γ)) yields a smooth, differentiable penalty that avoids overly harsh gradients when many errors are present.

-

Informativeness reward (r_i) – a binary constraint that ensures the policy model generates at least as many AKUs as the base model π_0 (k ≥ k_0). This prevents reward‑hacking behaviors where the model might produce overly concise answers to maximize r_f.

The overall reward r is the average of r_f and r_i. Policy optimization follows the Generalized Proximal Policy Optimization (GRPO) algorithm, with a KL‑divergence term to limit policy drift.

Experiments are conducted on two datasets: the publicly available Financial Data Description (FDD) benchmark (1,461 stock‑related samples) and a newly constructed FDD‑ANT dataset (2,000 samples covering stocks, funds, and macro‑economic indicators). Both datasets lack official training splits, so the authors randomly sample 4,000 instances for RL fine‑tuning. Evaluation uses GPT‑4o as an automatic judge: factuality is scored on a three‑point scale (0, 60, 100), and informativeness is measured by counting AKUs in the generated answer.

Baseline models include general‑purpose LLMs (DeepSeek‑V2‑Lite‑Chat‑16B, Qwen‑3‑8B, LLaMA‑3.1‑8B‑Instruct) and domain‑specific financial models (Xuanyuan‑13B, Dianjin‑R1‑7B). RLFKV is applied to Qwen‑3‑8B and LLaMA‑3.1‑8B‑Instruct. Results show consistent improvements: on FDD, RLFKV‑Qwen3 raises factuality from 86.5 to 89.5 points (+3.0) and informativeness from 13.4 to 13.5; on FDD‑ANT, factuality climbs from 90.2 to 93.3 (+3.1) and informativeness from 10.8 to 12.3 (+1.5). Similar gains are observed for the LLaMA‑based model. Ablation without the informativeness reward demonstrates that factuality remains stable while informativeness drops noticeably, confirming the necessity of both components.

Further analysis compares fine‑grained rewards to coarse binary rewards (1 if no factual errors, else 0). Fine‑grained rewards lead to higher factuality scores, smoother reward trajectories, and faster convergence (≈2 k steps).

Error analysis on FDD‑ANT reveals three dominant residual error types: (1) missing timestamps (55 % of errors), (2) inaccurate temporal expressions (28 %), and (3) numerical rounding errors (17 %). These stem from the current verification focusing on binary presence rather than precise temporal or numeric formatting.

In conclusion, RLFKV demonstrates that fine‑grained, document‑grounded verification can replace costly human annotations while providing richer training signals for RAG models. The approach is especially suited to finance, where up‑to‑date and precise information is critical. Future work will explore richer temporal normalization, more accurate numeric handling, and extensions to multi‑turn conversational settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment