MedErrBench: A Fine-Grained Multilingual Benchmark for Medical Error Detection and Correction with Clinical Expert Annotations

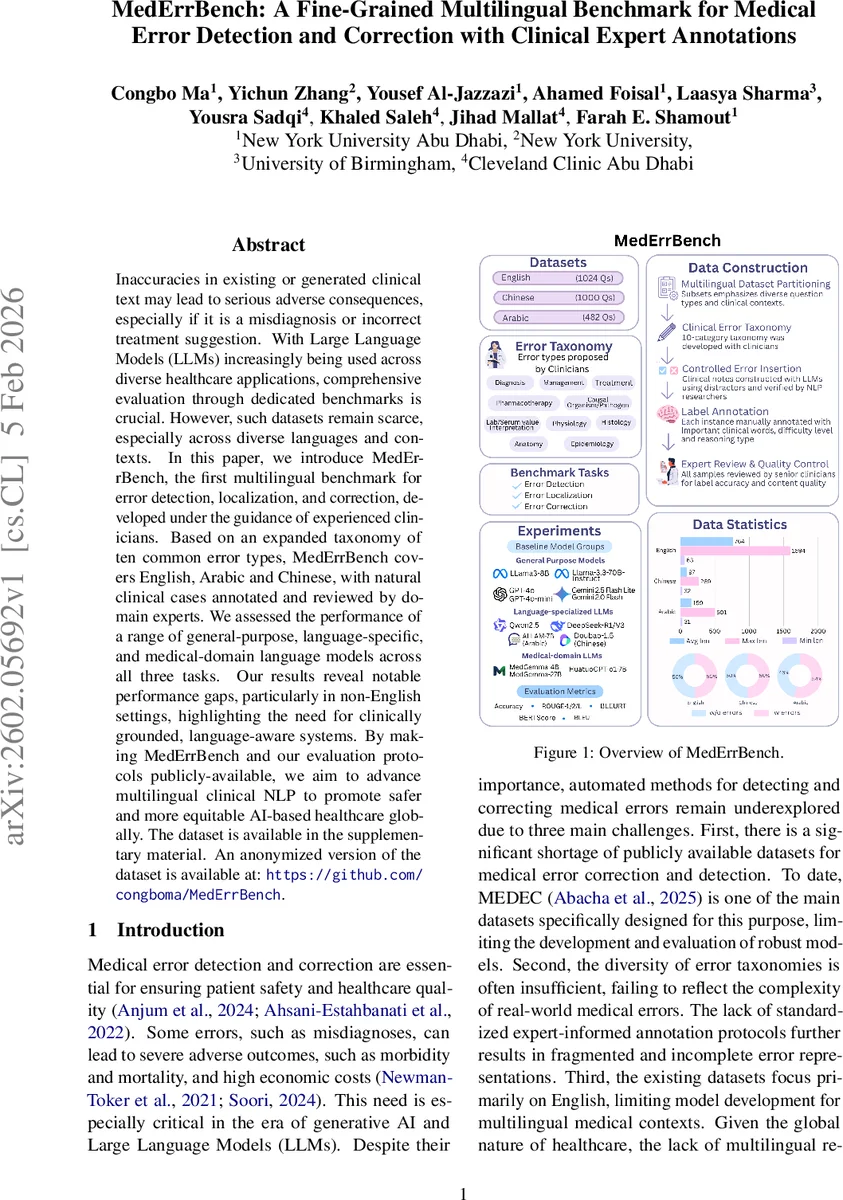

Inaccuracies in existing or generated clinical text may lead to serious adverse consequences, especially if it is a misdiagnosis or incorrect treatment suggestion. With Large Language Models (LLMs) increasingly being used across diverse healthcare applications, comprehensive evaluation through dedicated benchmarks is crucial. However, such datasets remain scarce, especially across diverse languages and contexts. In this paper, we introduce MedErrBench, the first multilingual benchmark for error detection, localization, and correction, developed under the guidance of experienced clinicians. Based on an expanded taxonomy of ten common error types, MedErrBench covers English, Arabic and Chinese, with natural clinical cases annotated and reviewed by domain experts. We assessed the performance of a range of general-purpose, language-specific, and medical-domain language models across all three tasks. Our results reveal notable performance gaps, particularly in non-English settings, highlighting the need for clinically grounded, language-aware systems. By making MedErrBench and our evaluation protocols publicly-available, we aim to advance multilingual clinical NLP to promote safer and more equitable AI-based healthcare globally. The dataset is available in the supplementary material. An anonymized version of the dataset is available at: https://github.com/congboma/MedErrBench.

💡 Research Summary

MedErrBench is introduced as the first multilingual benchmark designed to evaluate large language models (LLMs) on three core clinical NLP tasks: medical error detection, error localization, and error correction. The authors identify a critical gap in existing resources—most datasets are monolingual (English) and cover only a narrow set of error types—hindering the development of safe, globally applicable AI systems for healthcare.

To address this, the paper presents a clinician‑driven taxonomy of ten error categories that expands upon the five types used in the earlier MEDEC dataset. The categories—Diagnosis, Management, Treatment, Pharmacotherapy, Causal Organism/Pathogen, Lab/Serum Value Interpretation, Physiology, Histology, Anatomy, and Epidemiology—are each defined with concrete examples, providing a comprehensive schema for both annotation and model evaluation.

Data construction proceeds in three languages: English, Chinese, and Arabic. English and Chinese cases are derived from MedQA, while Arabic cases come from MedArabiQ and MedAraBench. For each source question, the authors retain the correct answer and deliberately replace it with a plausible but incorrect alternative, thereby creating a “clean” version and an “error‑injected” version of the clinical note. Where the original sources lack certain error types, practicing clinicians contribute real‑world cases to fill the gaps (e.g., Physiology, Histology, Anatomy, and Epidemiology for English; Lab/Serum interpretation for Arabic).

A two‑stage quality‑control pipeline ensures clinical validity. First, native‑speaking NLP researchers use LLM‑assisted prompts to transform short exam questions into full‑length clinical narratives and to insert errors. Second, two independent clinicians review every instance, correcting any hallucinations, confirming error labels, and annotating three auxiliary attributes: (1) important clinical words, (2) difficulty level (Easy, Medium, Hard), and (3) reasoning type (Factual Recall, Single‑hop, Multi‑hop). Disagreements are resolved without conflict, resulting in a high‑quality, richly annotated corpus.

The benchmark defines three sub‑tasks: (a) error detection (binary classification), (b) error localization (identifying the sentence or span containing the error), and (c) error correction (generating a corrected version of the text). Evaluation metrics include accuracy for detection, ROUGE‑1/2/L and BLEU for correction quality, and BERTScore and BLEURT for semantic fidelity.

A broad suite of models is evaluated, grouped into:

- General‑purpose LLMs (GPT‑4o, GPT‑4o‑mini, Gemini 2.5 Flash Lite, Gemini 2.0 Flash, LLaMA‑3‑8B, LLaMA‑3‑70B‑Instruct).

- Language‑specialized LLMs (Chinese: Qwen2.5‑7B‑Instruct, DeepSeek‑R1, DeepSeek‑V3, Doubao‑1.5‑Thinking‑Pro; Arabic: 3ALLAM‑7B).

- Medical‑domain LLMs (MedGemma‑4B, MedGemma‑27B, HuatuoGPT‑o1‑7B).

Results reveal a consistent performance hierarchy: English data yields the highest scores across all model families, while Arabic and Chinese exhibit markedly lower accuracy, ROUGE, and BLEU. Even language‑specialized models struggle to match the performance of medical‑domain models on non‑English tasks, indicating that multilingual medical knowledge remains under‑represented in current LLMs. Error‑type analysis shows that categories requiring deep domain expertise (e.g., Physiology, Histology, Epidemiology) are the most challenging, whereas Diagnosis, Management, and Pharmacotherapy are detected and corrected more reliably.

Additional ablation studies demonstrate that providing explicit error‑type definitions and exemplar cases to the model improves performance, especially for the more complex categories. Few‑shot experiments indicate that difficulty level strongly influences outcomes; “Hard” instances cause steep drops in both detection and correction metrics, underscoring the limited reasoning depth of current models. Cross‑lingual generalization experiments confirm that knowledge does not transfer effectively between languages without targeted fine‑tuning.

The authors acknowledge limitations: the dataset size, while multilingual, remains modest compared to monolingual corpora; error insertion is synthetic and may not capture the full nuance of real clinical mistakes; automatic metrics may not fully reflect clinical appropriateness of corrections, suggesting the need for human evaluation in future work.

In conclusion, MedErrBench delivers a rigorously validated, multilingual resource that fills a crucial gap in medical NLP research. By making the data and evaluation protocols publicly available, the paper invites the community to develop language‑aware, clinically grounded LLMs, with the ultimate goal of improving patient safety and equity in AI‑driven healthcare worldwide.

Comments & Academic Discussion

Loading comments...

Leave a Comment