ADCA: Attention-Driven Multi-Party Collusion Attack in Federated Self-Supervised Learning

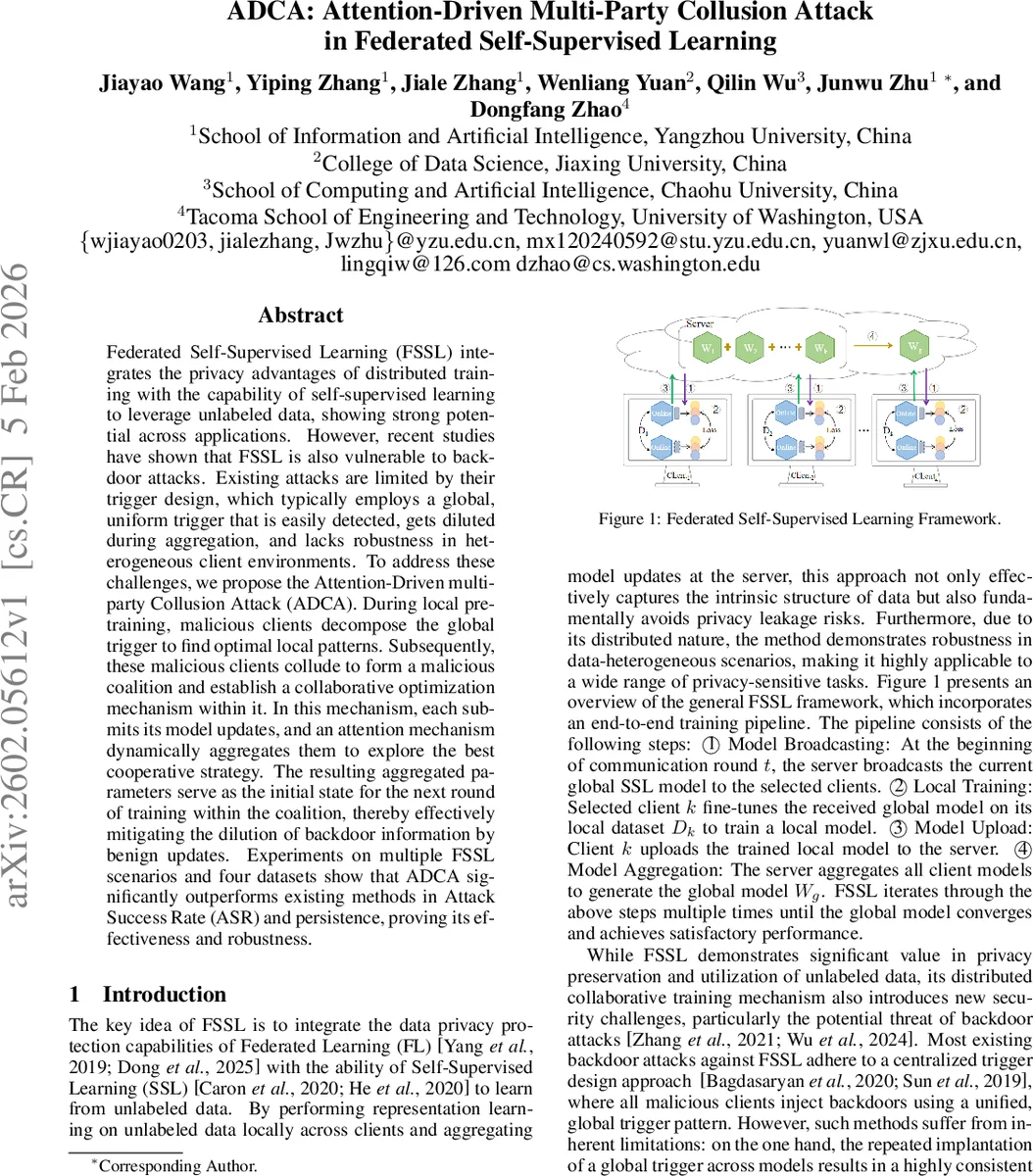

Federated Self-Supervised Learning (FSSL) combines the privacy advantages of distributed training with the ability of self-supervised learning to exploit unlabeled data, showing strong potential across applications. However, recent studies have shown that FSSL is also vulnerable to backdoor attacks. Existing attacks are limited by their trigger design: they typically employ a single global, uniform trigger that is easily detected, is diluted during aggregation, and lacks robustness in heterogeneous client environments. To address these limitations, we propose the Attention-Driven multi-party Collusion Attack (ADCA). During local pre-training, malicious clients decompose the global trigger to find optimal local patterns. These clients then form a malicious coalition and establish a collaborative optimization mechanism within it: each member submits its model update, and an attention mechanism dynamically aggregates the updates to explore the best cooperative strategy. The aggregated parameters serve as the initial state for the coalition's next round of training, which mitigates the dilution of backdoor information by benign updates. Experiments on multiple FSSL scenarios and four datasets show that ADCA significantly outperforms existing methods in Attack Success Rate (ASR) and persistence, demonstrating its effectiveness and robustness.

💡 Research Summary

The paper introduces ADCA (Attention‑Driven Multi‑Party Collusion Attack), a novel backdoor attack specifically designed for Federated Self‑Supervised Learning (FSSL). Existing backdoor methods for FSSL rely on a single, globally uniform trigger that is implanted by all malicious clients. Such uniform triggers are easy to detect and become heavily diluted when aggregated with benign updates, leading to low persistence and reduced attack success. ADCA addresses these shortcomings through two key innovations: (1) distributed trigger decomposition and (2) attention‑driven collaborative optimization among malicious clients.

Distributed Trigger Decomposition

The authors formalize a trigger as a set of three parameters: location (TL), size (TS), and gap (TG). By partitioning a global trigger into M sub-triggers, each malicious client receives a unique local pattern. The injection function R(x, e, φ*) = x ⊙ (1 − T_φ*) + e ⊙ T_φ* places the sub-trigger only on the designated mask region, where ⊙ denotes element-wise multiplication, x is the input, e is the trigger pattern, and T_φ* is the mask selected by the parameters φ*. This gives precise control over where and how the trigger appears. The decomposition yields several benefits: (i) increased stealth because the visual pattern varies across clients, (ii) better alignment with heterogeneous local data distributions, and (iii) reduced likelihood of detection by pattern-based defenses.

The sub-triggers are embedded into a shadow dataset that contains both clean and poisoned samples. Training on this dataset uses a contrastive objective L_eff that maximizes the cosine similarity between the representations of poisoned samples and those of the target class while preserving main-task performance. A stealth objective L_stealth aligns the representations that the backdoored encoder produces for clean inputs with those of the benign global encoder, so that the backdoor does not noticeably degrade accuracy. The overall loss L = λ1·L_eff + λ2·L_stealth balances effectiveness and stealth.
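The masking formula and the weighted loss above can be illustrated with a small NumPy sketch. This is not the authors' implementation: the helper names (`make_mask`, `inject`, `total_loss`), the square-patch mask, the negative-cosine form of both loss terms, and the default weights are assumptions made only to show the arithmetic on toy arrays.

```python
import numpy as np

def make_mask(height, width, top, left, size):
    """Binary mask T: 1 inside a square patch, 0 elsewhere (hypothetical helper)."""
    mask = np.zeros((height, width, 1), dtype=np.float32)
    mask[top:top + size, left:left + size, :] = 1.0
    return mask

def inject(x, e, mask):
    """R(x, e) = x * (1 - T) + e * T, applied element-wise."""
    return x * (1.0 - mask) + e * mask

def cosine(a, b, eps=1e-8):
    """Row-wise cosine similarity between two batches of embeddings."""
    num = np.sum(a * b, axis=-1)
    den = np.linalg.norm(a, axis=-1) * np.linalg.norm(b, axis=-1) + eps
    return num / den

def total_loss(z_poison, z_target, z_clean_local, z_clean_global,
               lam1=1.0, lam2=1.0):
    """L = lam1 * L_eff + lam2 * L_stealth.

    Assumption: both terms are written as negative mean cosine similarity,
    so minimizing L increases the similarity the text describes.
    """
    l_eff = -np.mean(cosine(z_poison, z_target))
    l_stealth = -np.mean(cosine(z_clean_local, z_clean_global))
    return lam1 * l_eff + lam2 * l_stealth

# Toy example: an all-zero 8x8 RGB input and an all-one pattern.
x = np.zeros((8, 8, 3), dtype=np.float32)
e = np.ones((8, 8, 3), dtype=np.float32)
mask = make_mask(8, 8, top=1, left=2, size=3)
patched = inject(x, e, mask)  # only the 3x3 patch takes values from e
```

Because the mask has a trailing singleton channel axis, it broadcasts across the three color channels, so pixels outside the patch are returned unchanged.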

Attention‑Driven Collaborative Optimization

In standard federated learning, each malicious client downloads the current global model, performs local training, and sends its update to the server. Because the server aggregates all updates (often via simple averaging, where each of N updates carries weight 1/N), the malicious contribution is quickly washed out. ADCA introduces a malicious coalition that performs an internal aggregation before sending updates. A cross-attention mechanism computes attention scores over the set of malicious updates, dynamically weighting each client's contribution according to how much backdoor information it carries. The resulting aggregated parameters replace the global model as the initialization for the coalition members' next local training round. According to the authors, this internal aggregation amplifies the backdoor signal while keeping the coalition's updates indistinguishable from normal client updates on the server side. The authors formalize the dilution problem (Equation 6) and show that their method makes the norm of the backdoor component far larger than that of benign components, thereby evading detection metrics such as PCM (Equation 7).
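The idea of attention-weighted aggregation can be sketched generically as a softmax-weighted average of flattened update vectors. The summary does not specify how queries, keys, or scores are defined in ADCA, so everything below is an assumption for illustration: `attention_aggregate` is a hypothetical helper, the score is a scaled dot product, and the query is simply the mean of the updates.

```python
import numpy as np

def softmax(scores):
    """Numerically stable softmax over a 1-D score vector."""
    shifted = scores - np.max(scores)
    weights = np.exp(shifted)
    return weights / np.sum(weights)

def attention_aggregate(updates, query, temperature=1.0):
    """Softmax-weighted average of update vectors.

    updates: array of shape (M, D), one flattened update per member.
    query:   array of shape (D,), a reference direction (assumed here).
    Returns the aggregated vector of shape (D,) and the M weights.
    """
    updates = np.asarray(updates, dtype=np.float64)
    dim = updates.shape[1]
    # Scaled dot-product scores, one per update.
    scores = updates @ query / (np.sqrt(dim) * temperature)
    weights = softmax(scores)
    return weights @ updates, weights

# Toy example with three 2-D updates; the query is their mean (an assumption).
updates = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
query = updates.mean(axis=0)
aggregated, weights = attention_aggregate(updates, query)
```

In this toy case the third update is most aligned with the query and therefore receives the largest weight, whereas plain averaging would give every update weight 1/3.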

Experimental Evaluation

ADCA is evaluated on four benchmark datasets: CIFAR-10, CIFAR-100, STL-10, and GTSRB. Baselines include BADFSS, UBA, and other recent backdoor attacks for federated settings. The reported metrics are Attack Success Rate (ASR), clean accuracy (ACC), and persistence (ASR after many communication rounds). Across all datasets, ADCA achieves a 15–30 percentage-point increase in ASR over the baselines, while ACC drops by less than 1%. Moreover, after 50 communication rounds the ASR remains above 80%, demonstrating strong persistence. The authors also test against several defense mechanisms (FLAME, FLTrust, PatchSearch, PoisonCAM, and EmInspector) and find that detection rates remain low (often below 30%), indicating that ADCA can bypass current state-of-the-art defenses for FSSL.
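For readers unfamiliar with the two headline metrics, the sketch below shows one common way to compute them from predicted labels. The summary does not give the paper's exact definitions, so the convention used here (ASR measured only on triggered samples whose true class differs from the target) and the function names are assumptions.

```python
import numpy as np

def clean_accuracy(pred_on_clean, true_labels):
    """ACC: fraction of clean test samples classified correctly."""
    pred_on_clean = np.asarray(pred_on_clean)
    true_labels = np.asarray(true_labels)
    return float(np.mean(pred_on_clean == true_labels))

def attack_success_rate(pred_on_triggered, true_labels, target_class):
    """ASR: fraction of triggered non-target samples predicted as the target."""
    pred_on_triggered = np.asarray(pred_on_triggered)
    true_labels = np.asarray(true_labels)
    keep = true_labels != target_class  # skip samples already in the target class
    if not np.any(keep):
        return float("nan")
    return float(np.mean(pred_on_triggered[keep] == target_class))

# Toy labels: four samples, target class 0.
labels = np.array([1, 2, 3, 0])
acc = clean_accuracy(np.array([1, 2, 0, 0]), labels)          # 3 of 4 correct
asr = attack_success_rate(np.array([0, 0, 1, 0]), labels, 0)  # 2 of 3 flipped
```

Persistence, as described above, would then be the same ASR computation repeated on the global model after additional communication rounds.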

Contributions and Implications

- First systematic study of trigger decomposition (location, size, gap) in the context of FSSL, establishing a clear link between distributed trigger design and attack performance.

- Introduction of an attention‑based internal aggregation scheme that preserves backdoor features across global rounds, substantially improving persistence.

- Comprehensive empirical validation showing that ADCA outperforms existing attacks under diverse settings and defeats a range of contemporary defenses.

Limitations and Future Work

The current design assumes synchronous federated rounds and a relatively high participation rate of malicious clients; performance may degrade in highly asynchronous or low‑participation scenarios. Trigger parameters are manually selected; automated meta‑learning of optimal decomposition could further enhance stealth and effectiveness. Future research directions include extending ADCA to asynchronous federated protocols, exploring meta‑attention techniques for server‑side detection of colluding clients, and investigating defenses that can identify the internal attention patterns used by malicious coalitions.

In summary, ADCA combines distributed trigger heterogeneity with attention-driven collusion to achieve high attack success, stealth, and long-term persistence in federated self-supervised learning. In doing so, it exposes significant vulnerabilities in current federated learning security frameworks.
