EgoPoseVR: Spatiotemporal Multi-Modal Reasoning for Egocentric Full-Body Pose in Virtual Reality

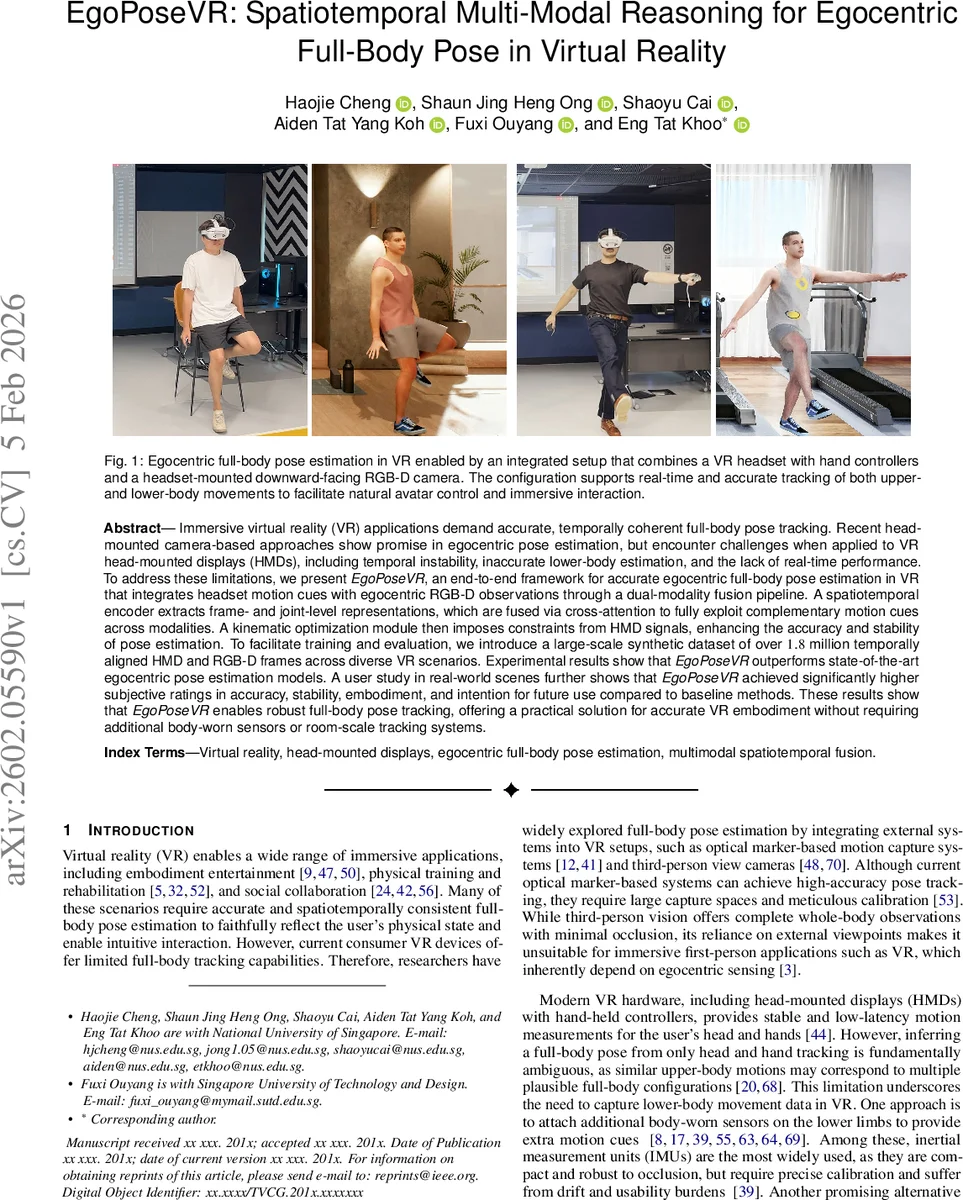

Immersive virtual reality (VR) applications demand accurate, temporally coherent full-body pose tracking. Recent head-mounted camera-based approaches show promise in egocentric pose estimation, but encounter challenges when applied to VR head-mounted displays (HMDs), including temporal instability, inaccurate lower-body estimation, and the lack of real-time performance. To address these limitations, we present EgoPoseVR, an end-to-end framework for accurate egocentric full-body pose estimation in VR that integrates headset motion cues with egocentric RGB-D observations through a dual-modality fusion pipeline. A spatiotemporal encoder extracts frame- and joint-level representations, which are fused via cross-attention to fully exploit complementary motion cues across modalities. A kinematic optimization module then imposes constraints from HMD signals, enhancing the accuracy and stability of pose estimation. To facilitate training and evaluation, we introduce a large-scale synthetic dataset of over 1.8 million temporally aligned HMD and RGB-D frames across diverse VR scenarios. Experimental results show that EgoPoseVR outperforms state-of-the-art egocentric pose estimation models. A user study in real-world scenes further shows that EgoPoseVR achieved significantly higher subjective ratings in accuracy, stability, embodiment, and intention for future use compared to baseline methods. These results show that EgoPoseVR enables robust full-body pose tracking, offering a practical solution for accurate VR embodiment without requiring additional body-worn sensors or room-scale tracking systems.

💡 Research Summary

EgoPoseVR addresses the long‑standing challenge of obtaining accurate, temporally stable full‑body pose estimates in immersive virtual reality without relying on external motion‑capture rigs or body‑worn sensors. The authors propose an end‑to‑end framework that fuses low‑dimensional, high‑frequency headset and controller motion data with high‑dimensional egocentric RGB‑D video captured by a downward‑facing camera mounted on the HMD.

The system consists of four main components. First, motion data from the head‑mounted display (position, 6‑DoF orientation, linear and angular velocity) and from the two hand controllers are concatenated into a 72‑dimensional vector per frame. Second, a dual‑stream spatiotemporal encoder processes the motion stream and the visual stream separately. Both streams are built on transformer layers that capture frame‑wise temporal dynamics and joint‑wise spatial dependencies. The visual stream includes a visibility‑aware joint detection module that mitigates the effects of motion blur and abrupt scene changes caused by rapid head movements.

Third, the modality‑specific embeddings are merged using a cross‑attention fusion module. Here, the motion features serve as queries while the visual features act as keys and values, allowing the network to refine ambiguous lower‑body predictions with complementary visual cues. This cross‑modal attention dramatically reduces the pose ambiguity that plagues HMD‑only methods.

Fourth, the fused pose estimate is passed through a differentiable kinematic optimization layer. The optimizer enforces consistency with the raw HMD signals (e.g., head‑controller relative pose), preserves skeletal constraints such as bone lengths and joint angle limits, and penalizes physically implausible configurations. Because the optimization is differentiable, its loss can be back‑propagated during training, leading to a model that jointly learns perception and kinematic refinement.

To train and evaluate the approach, the authors generate a synthetic dataset of 1.8 million temporally aligned frames covering a wide variety of indoor and outdoor VR scenarios, lighting conditions, and avatar appearances. Each frame includes synchronized HMD motion data, RGB‑D images, and 2D/3D joint annotations (positions and rotations). This dataset is substantially larger and more diverse than prior egocentric pose benchmarks, and it uniquely provides the motion signals required for realistic VR integration.

Quantitative experiments on the synthetic benchmark show that EgoPoseVR reduces mean per‑joint position error (MPJPE) by over 12 % compared with state‑of‑the‑art egocentric pose models, and it achieves significantly lower acceleration error, indicating superior temporal stability. The system runs at 97 FPS on an RTX 4090, satisfying real‑time VR constraints. A user study with 20 participants in real‑world VR scenes confirms the objective gains: participants rated EgoPoseVR higher on accuracy, stability, embodiment, and intention to use in future applications, with all differences statistically significant.

The paper’s contributions are: (1) the first real‑time egocentric full‑body pose estimator that jointly leverages HMD motion cues and a headset‑mounted RGB‑D camera; (2) a novel dual‑stream spatiotemporal architecture combined with cross‑attention fusion and a differentiable kinematic optimizer; (3) the release of a large‑scale synthetic multimodal dataset tailored for VR pose estimation; and (4) extensive validation demonstrating both quantitative superiority and positive subjective user experience.

Limitations include the requirement for a downward‑facing depth camera, which is not yet standard on commercial headsets, and the inevitable domain gap between synthetic training data and real‑world footage. The authors plan to explore domain‑adaptation techniques and to test the framework on a broader range of HMD‑camera configurations, aiming toward a sensor‑free, universally deployable VR embodiment solution.

Comments & Academic Discussion

Loading comments...

Leave a Comment