When Shared Knowledge Hurts: Spectral Over-Accumulation in Model Merging

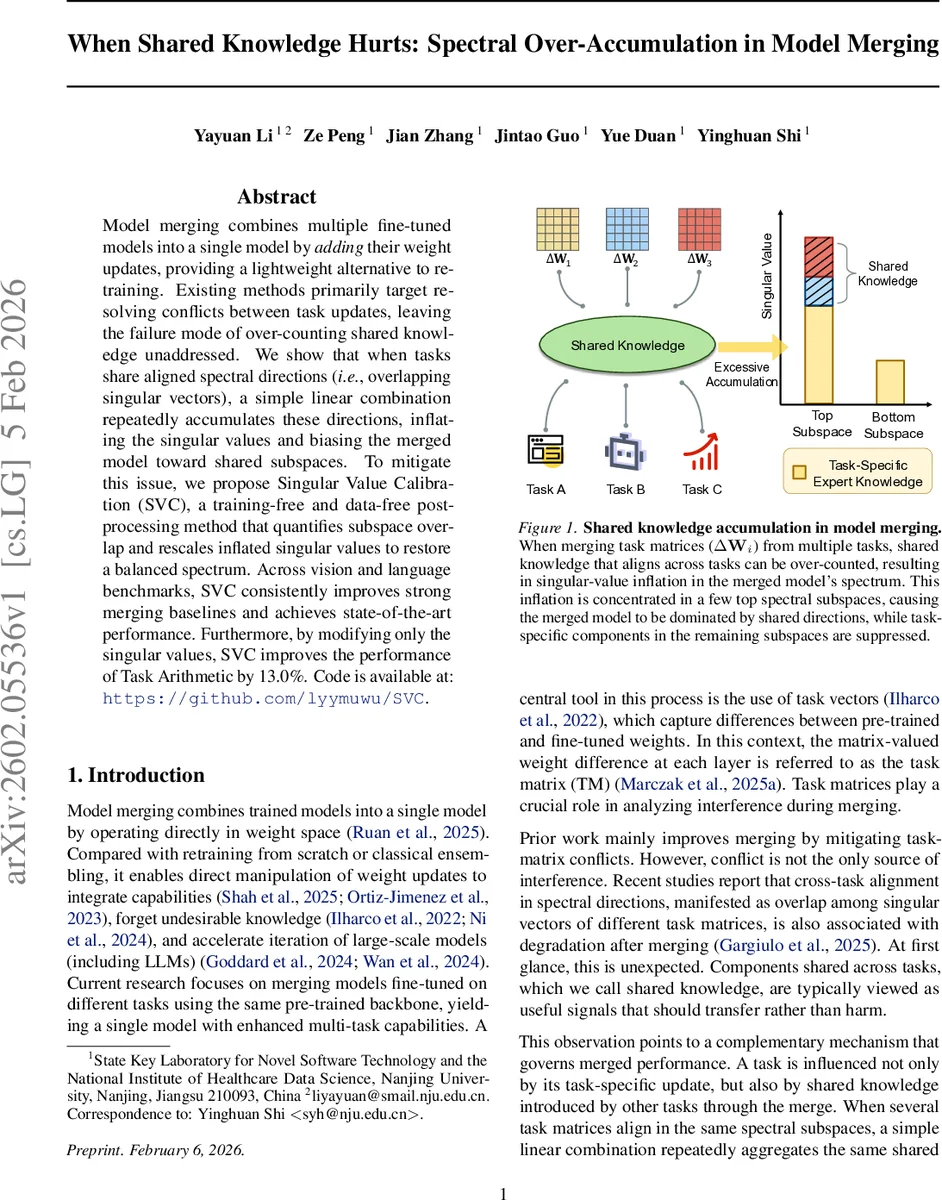

Model merging combines multiple fine-tuned models into a single model by adding their weight updates, providing a lightweight alternative to retraining. Existing methods primarily target resolving conflicts between task updates, leaving the failure mode of over-counting shared knowledge unaddressed. We show that when tasks share aligned spectral directions (i.e., overlapping singular vectors), a simple linear combination repeatedly accumulates these directions, inflating the singular values and biasing the merged model toward shared subspaces. To mitigate this issue, we propose Singular Value Calibration (SVC), a training-free and data-free post-processing method that quantifies subspace overlap and rescales inflated singular values to restore a balanced spectrum. Across vision and language benchmarks, SVC consistently improves strong merging baselines and achieves state-of-the-art performance. Furthermore, by modifying only the singular values, SVC improves the performance of Task Arithmetic by 13.0%. Code is available at: https://github.com/lyymuwu/SVC.

💡 Research Summary

Model merging, which aggregates the weight updates of several fine‑tuned models into a single network, has become a popular lightweight alternative to full retraining, especially for large language and vision models. Existing research has largely focused on mitigating conflicts between task updates—either by pruning conflicting parameters, learning task‑specific masks, or using validation data to guide the merge. However, the authors identify a previously overlooked failure mode: spectral over‑accumulation. When multiple tasks share aligned spectral directions—i.e., their task matrices have overlapping singular vectors—a simple linear combination repeatedly aggregates these shared directions. This repeated accumulation inflates the corresponding singular values, causing the merged model to be dominated by a few top spectral subspaces while suppressing information in the remaining subspaces. Consequently, downstream performance degrades even though the tasks appear compatible.

The paper provides a rigorous theoretical analysis. For each layer, the merged task matrix ΔW_merge is decomposed via singular value decomposition (SVD) as ΔW_merge = U Σ Vᵀ. The left singular vectors {u_r} define a shared column‑space basis. Each task matrix ΔW_i is projected onto this basis, yielding subspace‑wise responses a_{r i} = u_rᵀ ΔW_i. The merged response in subspace r is a_{r merge} = ∑i a{r i}. The authors introduce a projection coefficient s_{r i} = ⟨a_{r merge}, a_{r i}⟩ / ‖a_{r i}‖². When s_{r i}>1, the merged update amplifies task i’s contribution in subspace r, indicating over‑counting. Lemma 3.2 shows that s_{r i}=1+∑{j≠i}⟨a{r j}, a_{r i}⟩ / ‖a_{r i}‖², so positive cross‑task inner products directly cause amplification. Theorem 3.3 links this amplification to singular‑value inflation: the optimal calibrated singular value for task i in subspace r is σ_{r i}^* = γ_{r i} σ_r with γ_{r i}=1/s_{r i}. Hence, whenever the sum of cross‑terms is positive, σ_r exceeds the projection‑optimal magnitude, confirming that shared directions are over‑represented in the spectrum.

To correct this, the authors propose Singular Value Calibration (SVC), a training‑free, data‑free post‑processing step. The procedure is:

- Perform SVD on the merged task matrix to obtain U, Σ, V.

- Use U as a common coordinate system and compute a_{r i} for each task.

- Calculate s_{r i} and derive per‑task scaling factors γ_{r i}=1/s_{r i} (or 0 if s_{r i}≤0).

- Average γ_{r i} across tasks within each subspace to obtain a subspace‑wise calibration factor γ_r^*.

- Rescale the original singular values: σ_r^* = γ_r^* · σ_r, forming a calibrated Σ^*.

- Reconstruct the calibrated merged update ΔW_calibrated = U Σ^* Vᵀ and add it back to the pretrained weights.

Because only the singular values are altered, the directional information (the singular vectors) remains intact, preserving the learned representations while restoring a balanced spectrum.

Empirical evaluation spans both vision (e.g., CLIP‑ViT, ResNet) and language (e.g., LLaMA, GLUE) backbones. The authors apply SVC to several baseline merging strategies: simple averaging, weighted averaging, and Task Arithmetic. Across all benchmarks, SVC consistently reduces the gap between original and optimal singular values, especially in the top few subspaces, and yields 2–5 percentage‑point improvements in overall accuracy. Notably, when SVC is combined with Task Arithmetic, performance jumps by 13 percentage‑points, demonstrating that even state‑of‑the‑art merging can be substantially enhanced by addressing spectral over‑accumulation. Additional analyses show that SVC can be targeted to specific tasks such as preference optimization, where subspace‑specific calibration yields further gains.

In summary, the paper uncovers a new, theoretically grounded failure mode in model merging—spectral over‑accumulation of shared knowledge—and introduces a simple yet effective remedy, Singular Value Calibration. SVC’s training‑free and data‑free nature makes it readily applicable to existing pipelines, and its strong empirical gains suggest that future work on multi‑task and multi‑modal model merging should consider spectral balance as a core design principle.

Comments & Academic Discussion

Loading comments...

Leave a Comment