Feature points evaluation on omnidirectional vision with a photorealistic fisheye sequence -- A report on experiments done in 2014

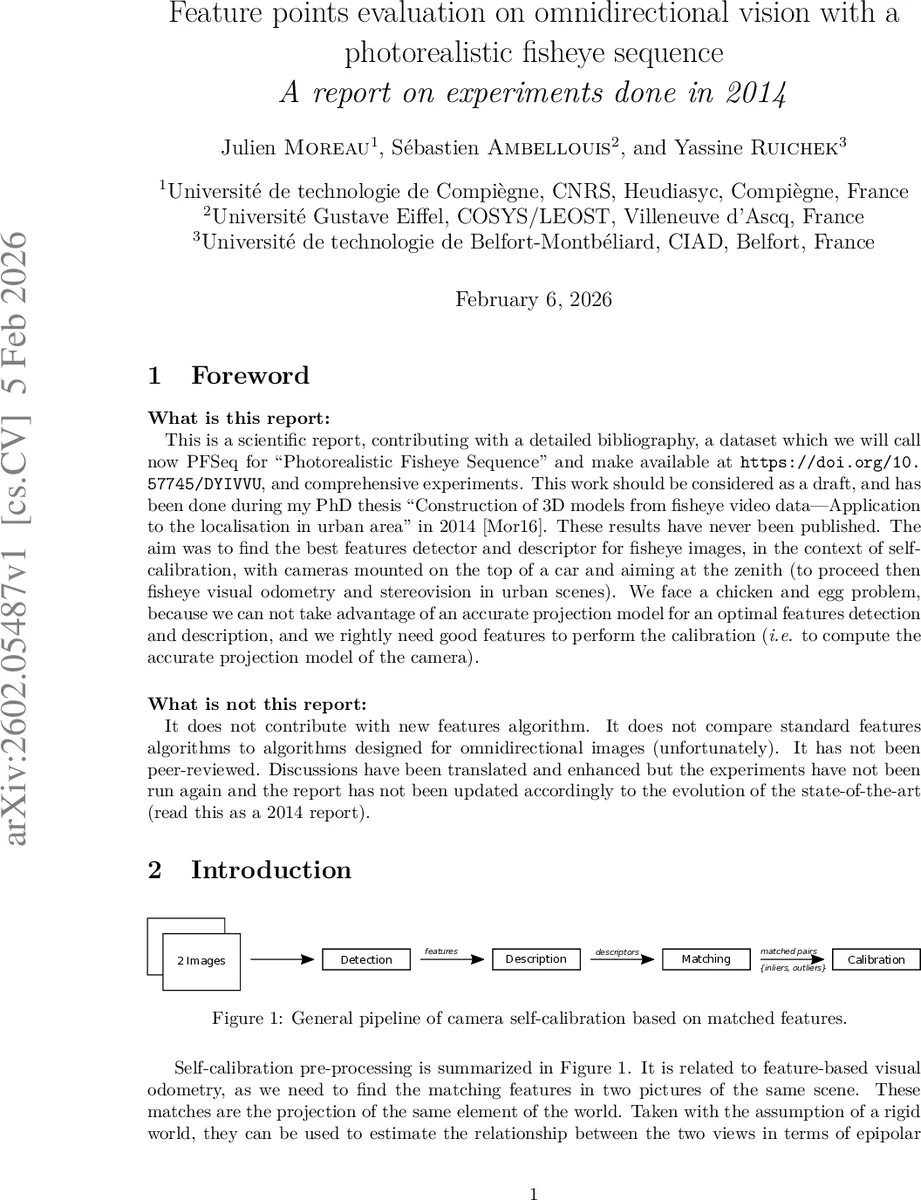

What this report is: This is a scientific report that contributes a detailed bibliography, a dataset named PFSeq (“Photorealistic Fisheye Sequence”), available at https://doi.org/10.57745/DYIVVU, and comprehensive experiments. It should be considered a draft; the work was done in 2014 during my PhD thesis, “Construction of 3D models from fisheye video data - Application to the localisation in urban area” [Mor16]. These results have never been published. The aim was to find the best feature detector and descriptor for fisheye images in the context of self-calibration, with cameras mounted on top of a car and aimed at the zenith (in order to then perform fisheye visual odometry and stereovision in urban scenes). We face a chicken-and-egg problem: we cannot rely on an accurate projection model for optimal feature detection and description, yet we need good features precisely to perform the calibration (i.e. to compute the accurate projection model of the camera).

What this report is not: It does not contribute a new feature algorithm. It does not compare standard feature algorithms with algorithms designed for omnidirectional images (unfortunately). It has not been peer-reviewed. The discussions have been translated and enhanced, but the experiments have not been rerun and the report has not been updated to reflect the evolution of the state of the art (read this as a 2014 report).

💡 Research Summary

This report, originally drafted in 2014 as part of Julien Moreau’s PhD work, presents a comprehensive evaluation of feature‑point detectors and descriptors for fisheye (omnidirectional) images, with a focus on self‑calibration, visual odometry, and stereo reconstruction for a vehicle‑mounted zenith‑looking camera. The authors introduce a new synthetic dataset, the Photorealistic Fisheye Sequence (PFSeq), which is publicly available via DOI 10.57745/DYIVVU. PFSeq consists of photorealistic renderings of a car moving through an urban canyon of tall buildings, providing ground‑truth 3‑D points, camera poses, and intrinsic parameters for each frame. The dataset is deliberately designed to stress feature extraction: extreme radial distortion, rapid rotations, large scale changes, and varying illumination are all present.

The paper first outlines the “chicken‑and‑egg” problem inherent to omnidirectional calibration: accurate projection models are needed to extract reliable features, yet those very features are required to estimate the projection model. Consequently, the authors review a broad spectrum of existing approaches, ranging from classic planar detectors (Harris, SIFT, SURF, ORB) to a suite of fisheye‑specific adaptations proposed in the literature up to 2014. The adaptations can be grouped into three families:

- Geometric re‑projection methods – features are detected on the distorted image, then each region is re‑projected onto a virtual perspective plane (e.g., the “dynamic windows” of IBR03, the virtual camera approach of MFB06). These require an a‑priori calibration of the sensor.

- Spherical‑domain methods – the entire image is mapped onto a unit sphere, and Gaussian scale‑space, gradient computation, and descriptor formation are performed in spherical coordinates. Notable examples include sSIFT (spherical SIFT), pSIFT (parabolic SIFT), OmniSIFT (Riemannian geometry + polar descriptor), and the Laplace‑Beltrami based approaches of PG11/PG13.

- Division‑model based methods – a simple 1‑parameter division model approximates fisheye distortion; the image is rectified only for the purpose of applying Gaussian blur, after which descriptors are computed on the original distorted image. RD‑SIFT and its lightweight variant sRD‑SIFT belong to this class.
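To make the division model concrete, here is a minimal sketch of the one‑parameter mapping from a distorted point to its undistorted position. The function name `undistort_division`, the parameter `lam`, and the assumption that coordinates are expressed relative to a known distortion centre are illustrative choices, not taken from the report or from any RD‑SIFT implementation.

```python
def undistort_division(point, center, lam):
    """Undistort one 2-D point with a one-parameter division model.

    Assumed form: x_u = c + (x_d - c) / (1 + lam * r_d**2),
    where r_d is the distance of the distorted point to the centre c.
    `lam` is a hypothetical distortion coefficient (negative for barrel
    distortion, which pushes points outward when undistorting).
    """
    x, y = point
    cx, cy = center
    dx, dy = x - cx, y - cy
    r2 = dx * dx + dy * dy            # squared distorted radius
    scale = 1.0 / (1.0 + lam * r2)    # division-model scale factor
    return (cx + dx * scale, cy + dy * scale)


# Example: a point at unit distance from the centre, with lam = -0.5
print(undistort_division((1.0, 0.0), (0.0, 0.0), -0.5))
```

In practice the coordinates are usually normalised (for instance by the image half‑width) before applying the model, so that the coefficient stays in a numerically convenient range.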

The authors implement a large experimental matrix on PFSeq, evaluating each detector‑descriptor pair on the following metrics:

- Number of detected keypoints per frame.

- Number of putative matches after descriptor matching.

- Inlier ratio after RANSAC‑based fundamental/essential matrix estimation.

- Mean reprojection error of the calibrated model.

- Cumulative translation error in a visual‑odometry pipeline.
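Two of the metrics above can be stated precisely in a few lines. The sketch below assumes that per‑match residuals (for example, epipolar distances in pixels) and the observed and projected pixel positions are already available; the helper names and the threshold are hypothetical and not taken from the report.

```python
import math


def inlier_ratio(residuals, threshold):
    """Fraction of matches whose residual is at or below `threshold`."""
    if not residuals:
        return 0.0
    inliers = sum(1 for r in residuals if r <= threshold)
    return inliers / len(residuals)


def mean_reprojection_error(observed, projected):
    """Mean Euclidean distance (in pixels) between observed image points
    and the corresponding points projected by the calibrated model."""
    errors = [
        math.hypot(u - pu, v - pv)
        for (u, v), (pu, pv) in zip(observed, projected)
    ]
    return sum(errors) / len(errors)


# Example with made-up numbers: two of four matches fall under a 2-pixel threshold.
print(inlier_ratio([0.5, 1.0, 3.0, 10.0], 2.0))
print(mean_reprojection_error([(0, 0), (3, 4)], [(0, 0), (0, 0)]))
```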

Results show that the classic SIFT detector, when applied directly to fisheye images, yields the highest raw match count but suffers from a low inlier ratio and higher reprojection error due to distortion‑induced scale inconsistency. Among the fisheye‑specific methods, sSIFT consistently provides the best inlier ratio and the lowest reprojection error, especially on high‑resolution sequences where its spherical diffusion filter can be computed efficiently. pSIFT performs slightly worse on inliers but is more robust to extreme rotations because its parabolic approximation avoids the bandwidth limitations of the spherical Fourier transform. OmniSIFT achieves the highest descriptor distinctiveness (as measured by precision‑recall curves) but incurs a substantial computational overhead, making it less attractive for real‑time applications.

The division‑model approaches (RD‑SIFT, sRD‑SIFT) strike a compelling balance: they require only an approximate knowledge of the optical centre and a single distortion radius, yet they produce descriptors that are comparable to rectified‑SIFT (rectSIFT) while running at near‑SIFT speed. In visual‑odometry experiments, sRD‑SIFT yields the greatest number of RANSAC inliers and the smallest trajectory drift, outperforming both rectSIFT and the more complex spherical methods.

To validate the simulation findings, the authors repeat a subset of experiments on real fisheye footage captured from a vehicle in downtown Paris. The trends largely hold—sSIFT and sRD‑SIFT remain the top performers—but overall match counts drop due to uncontrolled lighting, motion blur, and dynamic objects. This confirms that PFSeq is a realistic benchmark while also highlighting the gap between synthetic and real‑world performance.

Based on these observations, the authors propose a pragmatic two‑stage self‑calibration pipeline to resolve the chicken‑and‑egg dilemma:

- Stage 1 – Rough calibration: Use a lightweight detector (e.g., sRD‑SIFT) that only needs a coarse estimate of the optical centre and radius. This stage quickly gathers a sufficient set of reliable matches to compute an initial intrinsic/extrinsic estimate via a 9‑point algorithm with RANSAC.

- Stage 2 – Refined calibration: With the initial parameters, re‑project the images onto the sphere and apply a more accurate spherical detector/descriptor (sSIFT or OmniSIFT). The refined matches are then fed into a bundle‑adjustment to obtain high‑precision calibration and enable downstream tasks such as dense stereo reconstruction or sky segmentation.
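The re‑projection onto the sphere in Stage 2 can be sketched as follows. The summary does not state which projection model is used, so this example assumes an equidistant fisheye model (r = f·θ) with a known principal point; the function name and parameters are illustrative only.

```python
import math


def pixel_to_sphere(u, v, cx, cy, f):
    """Lift a pixel to a unit ray, assuming an equidistant fisheye model.

    Assumption: the radial distance r from the principal point (cx, cy)
    relates to the angle theta from the optical axis by r = f * theta.
    The optical axis is taken as +z.
    """
    dx, dy = u - cx, v - cy
    r = math.hypot(dx, dy)
    theta = r / f                 # angle from the optical axis
    phi = math.atan2(dy, dx)      # azimuth in the image plane
    s = math.sin(theta)
    return (s * math.cos(phi), s * math.sin(phi), math.cos(theta))


# The principal point maps to the optical axis.
print(pixel_to_sphere(320.0, 240.0, 320.0, 240.0, 200.0))
```

Under this assumption, a pixel at radius f·π/2 from the principal point maps to a ray perpendicular to the optical axis, which for a zenith‑looking camera corresponds to the horizon.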

The report’s contributions are threefold: (i) the release of PFSeq, a publicly accessible fisheye benchmark with ground truth; (ii) a systematic, quantitative comparison of a wide range of feature‑point algorithms on fisheye imagery; and (iii) a practical, computationally efficient calibration strategy that can be adopted in real‑time autonomous‑driving pipelines. The authors acknowledge that the work predates modern deep‑learning based feature detectors (e.g., SuperPoint, R2D2, LoFTR) and that integrating such methods into the PFSeq benchmark remains an open research direction. Additionally, the paper lacks a detailed runtime analysis on embedded hardware, which would be essential for deployment in automotive systems. Nonetheless, the study provides a solid baseline and valuable insights for anyone tackling the challenges of omnidirectional vision in robotics and autonomous navigation.
