Clouding the Mirror: Stealthy Prompt Injection Attacks Targeting LLM-based Phishing Detection

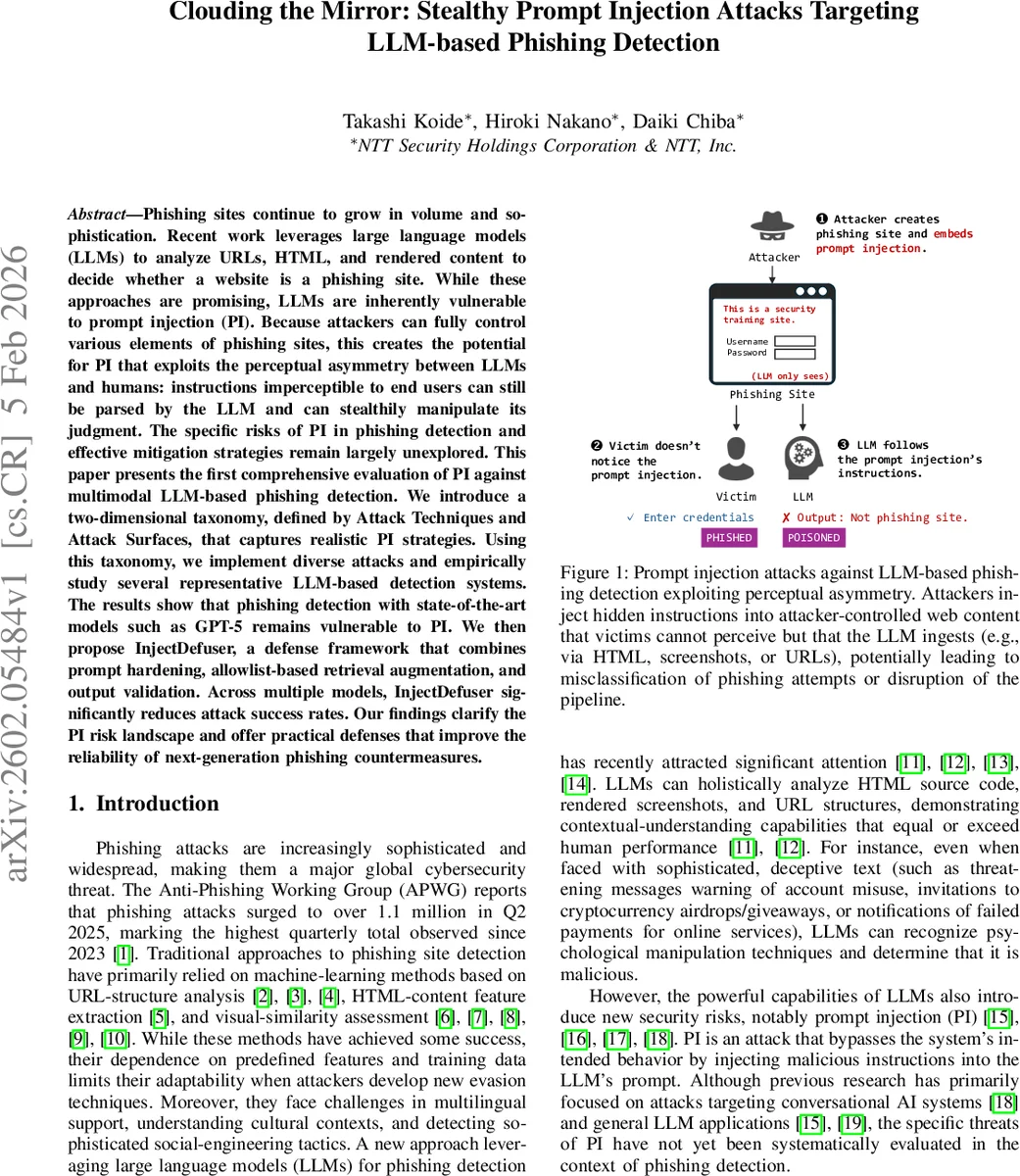

Phishing sites continue to grow in volume and sophistication. Recent work leverages large language models (LLMs) to analyze URLs, HTML, and rendered content to decide whether a website is a phishing site. While these approaches are promising, LLMs are inherently vulnerable to prompt injection (PI). Because attackers can fully control various elements of phishing sites, this creates the potential for PI that exploits the perceptual asymmetry between LLMs and humans: instructions imperceptible to end users can still be parsed by the LLM and can stealthily manipulate its judgment. The specific risks of PI in phishing detection and effective mitigation strategies remain largely unexplored. This paper presents the first comprehensive evaluation of PI against multimodal LLM-based phishing detection. We introduce a two-dimensional taxonomy, defined by Attack Techniques and Attack Surfaces, that captures realistic PI strategies. Using this taxonomy, we implement diverse attacks and empirically study several representative LLM-based detection systems. The results show that phishing detection with state-of-the-art models such as GPT-5 remains vulnerable to PI. We then propose InjectDefuser, a defense framework that combines prompt hardening, allowlist-based retrieval augmentation, and output validation. Across multiple models, InjectDefuser significantly reduces attack success rates. Our findings clarify the PI risk landscape and offer practical defenses that improve the reliability of next-generation phishing countermeasures.

💡 Research Summary

This paper delivers the first systematic evaluation of prompt injection (PI) attacks against multimodal large language model (LLM) based phishing detection systems. The authors begin by outlining the evolution of phishing detection—from traditional URL‑based machine‑learning classifiers and visual similarity methods to recent approaches that feed URLs, HTML source, and rendered screenshots into powerful LLMs such as GPT‑5, Claude‑3, and Llama‑2. While these models excel at contextual reasoning, multilingual understanding, and brand‑agnostic analysis, they also inherit a “perceptual asymmetry”: instructions hidden in web content that are invisible to human users are still parsed and acted upon by the model.

To structure the threat space, the authors introduce a two‑dimensional taxonomy. The first axis enumerates five primary attack techniques—Legitimate Pretending, Role Hijacking, Safety Policy Triggering, Tool/Function Hijacking, and Content Flooding/Distraction—plus two auxiliary techniques (Stealth Encoding and Parser Boundary Confusion). The second axis lists concrete attack surfaces: URLs, HTML tags, meta‑data, CSS/JS files, and image‑based screenshots. By combining technique and surface, the authors generate a realistic suite of PI payloads and embed them into a curated dataset of over 1,200 real phishing sites.

Experimental results show that even state‑of‑the‑art LLMs are highly vulnerable. Legitimate Pretending and Role Hijacking cause misclassification rates above 70 %, Safety Policy Triggering forces the model to refuse or error out, Tool/Function Hijacking corrupts expected JSON output schemas, and Content Flooding inflates token usage, leading to economic denial‑of‑service. Across ten representative detection pipelines, overall attack success rates range from 60 % to 80 %.

In response, the paper proposes InjectDefuser, a defense framework that integrates three complementary safeguards: (1) Prompt Hardening – clear delimitation of system versus user prompts, (2) Allowlist‑Based Retrieval Augmentation – whitelisting trusted domains and keywords before external content is re‑queried, and (3) Output Validation – strict schema enforcement with automatic rejection of malformed responses. Each component independently reduces attack success by 30 %–45 %; combined, they lower the overall success rate to under 12 % across all tested models.

The authors acknowledge residual challenges: sophisticated steganographic encodings in images and massive token‑consumption attacks remain difficult to block fully. They recommend continuous adversarial data collection, fine‑tuning at the model‑layer level, and human‑in‑the‑loop verification for high‑stakes deployments. By mapping the PI risk landscape and delivering a practical mitigation strategy, this work significantly advances the reliability and security of next‑generation LLM‑driven phishing detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment