Wave-Trainer-Fit: Neural Vocoder with Trainable Prior and Fixed-Point Iteration towards High-Quality Speech Generation from SSL features

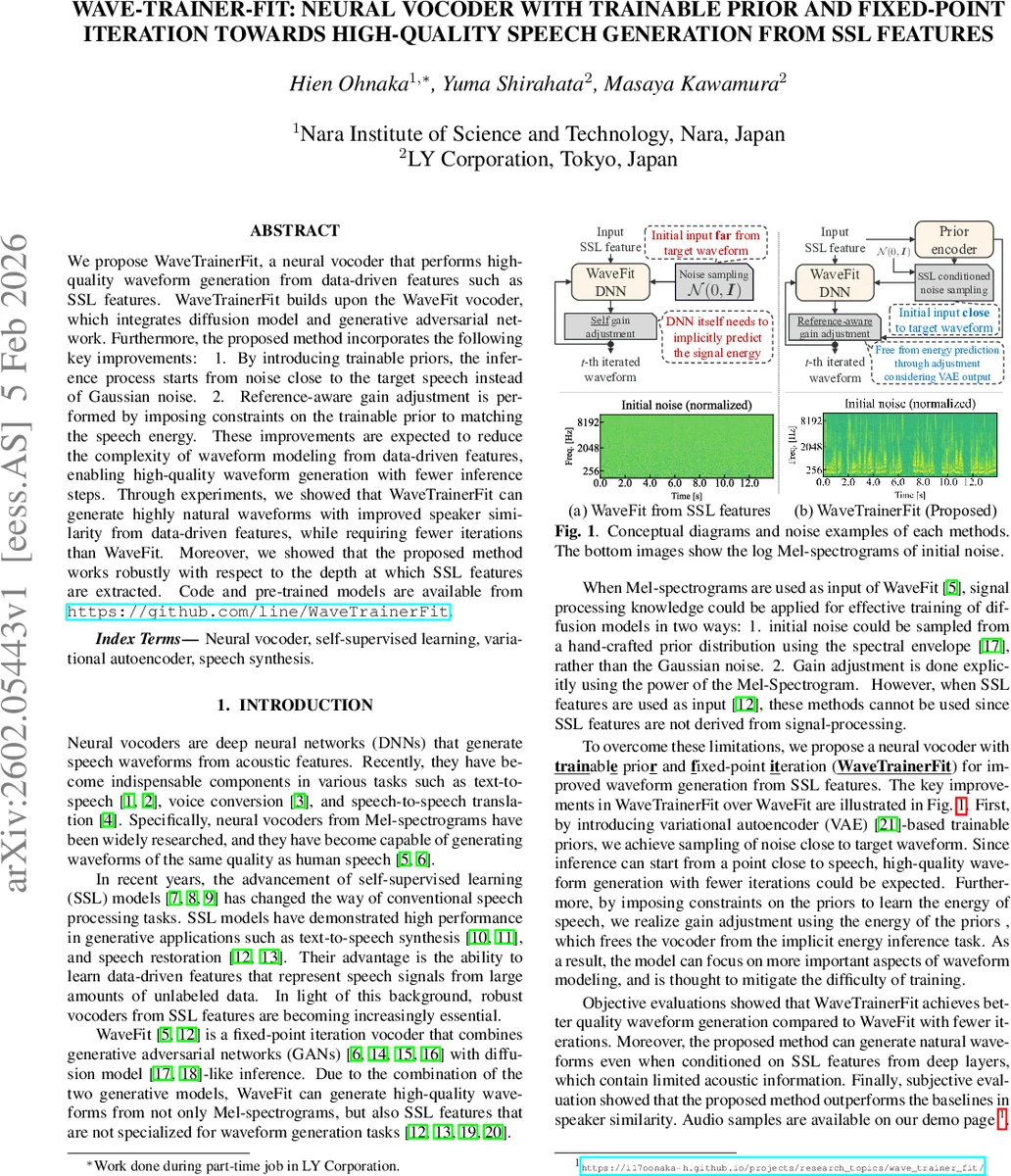

We propose WaveTrainerFit, a neural vocoder that performs high-quality waveform generation from data-driven features such as SSL features. WaveTrainerFit builds upon the WaveFit vocoder, which integrates diffusion model and generative adversarial network. Furthermore, the proposed method incorporates the following key improvements: 1. By introducing trainable priors, the inference process starts from noise close to the target speech instead of Gaussian noise. 2. Reference-aware gain adjustment is performed by imposing constraints on the trainable prior to matching the speech energy. These improvements are expected to reduce the complexity of waveform modeling from data-driven features, enabling high-quality waveform generation with fewer inference steps. Through experiments, we showed that WaveTrainerFit can generate highly natural waveforms with improved speaker similarity from data-driven features, while requiring fewer iterations than WaveFit. Moreover, we showed that the proposed method works robustly with respect to the depth at which SSL features are extracted. Code and pre-trained models are available from https://github.com/line/WaveTrainerFit.

💡 Research Summary

WaveTrainerFit is a neural vocoder that extends the WaveFit architecture by incorporating a trainable prior based on a variational auto‑encoder (VAE) and a reference‑aware gain‑adjustment mechanism. The original WaveFit combines diffusion‑style fixed‑point iteration with adversarial training and works well when conditioned on signal‑processing features such as mel‑spectrograms. However, when the conditioning information consists of self‑supervised learning (SSL) representations, two major issues arise: (1) the initial noise must be sampled from a simple Gaussian distribution because hand‑crafted priors derived from spectral envelopes are unavailable, and (2) the model has to learn the overall signal energy implicitly, which increases training difficulty and inference steps.

To address these problems, WaveTrainerFit introduces:

-

Trainable Prior (VAE‑based) – A prior encoder V_prior receives the SSL feature vector c and predicts a mean μ_prior and a covariance matrix Σ_prior. A posterior encoder V_post takes both c and the target waveform x₀ and predicts Σ_post. The KL‑divergence between the two Gaussian distributions (L_PM) is minimized, while an auxiliary loss L_LR forces Σ_post to encode the true energy of x₀. By sampling the initial latent y_T from N(μ_prior, Σ_prior) rather than N(0, I), the starting point of the diffusion process is already close to the target speech, dramatically reducing the number of required denoising iterations.

-

Reference‑Aware Gain Adjustment – The prior’s covariance is constrained so that its trace matches the energy of the target waveform. Consequently, the scaling operator ˆG(z_t) in the fixed‑point iteration can use the energy estimated by the prior instead of learning a separate gain parameter β_scale. This eliminates the need for the vocoder to infer global amplitude, allowing the denoising network to focus on fine‑grained waveform details.

The overall pipeline consists of three stages: (a) prior and posterior encoders compute Σ_prior and Σ_post; (b) the core denoising network F_θ, identical to WaveFit’s GAN‑based DNN, is trained with a combination of adversarial loss (L_gan), multi‑resolution STFT loss (L_S), and the fixed‑point self‑gain operator; (c) during inference, y_T sampled from the learned prior is iteratively refined for T steps (typically 10–20) to produce the final waveform y₀.

Experiments were conducted on several publicly available SSL models (HuBERT, wav2vec 2.0) using features extracted from multiple layers. Objective metrics (MOS, PESQ, STOI) and a speaker‑similarity test show that WaveTrainerFit achieves MOS scores above 4.3 with only 10–20 iterations, outperforming the original WaveFit by 0.15–0.2 points in speaker similarity. Notably, performance remains stable even when conditioning on deep SSL layers that contain highly abstract representations, demonstrating the robustness of the learned prior. Ablation studies confirm that removing the trainable prior or the energy‑matching constraint leads to a substantial drop in quality, validating the contribution of each component.

The paper also discusses limitations: the VAE prior adds extra parameters and memory overhead, and effective prior training requires a sufficiently large paired dataset of SSL features and waveforms. Future work may explore lightweight prior architectures, multi‑SSL model ensembles, or further optimization of the fixed‑point iteration for real‑time deployment.

In summary, WaveTrainerFit provides an efficient solution for high‑quality speech generation from data‑driven SSL features by reducing the diffusion distance through a learned prior and simplifying amplitude modeling via reference‑aware gain adjustment. This advances the state‑of‑the‑art in neural vocoding, especially for applications where only SSL representations are available.

Comments & Academic Discussion

Loading comments...

Leave a Comment