NeVStereo: A NeRF-Driven NVS-Stereo Architecture for High-Fidelity 3D Tasks

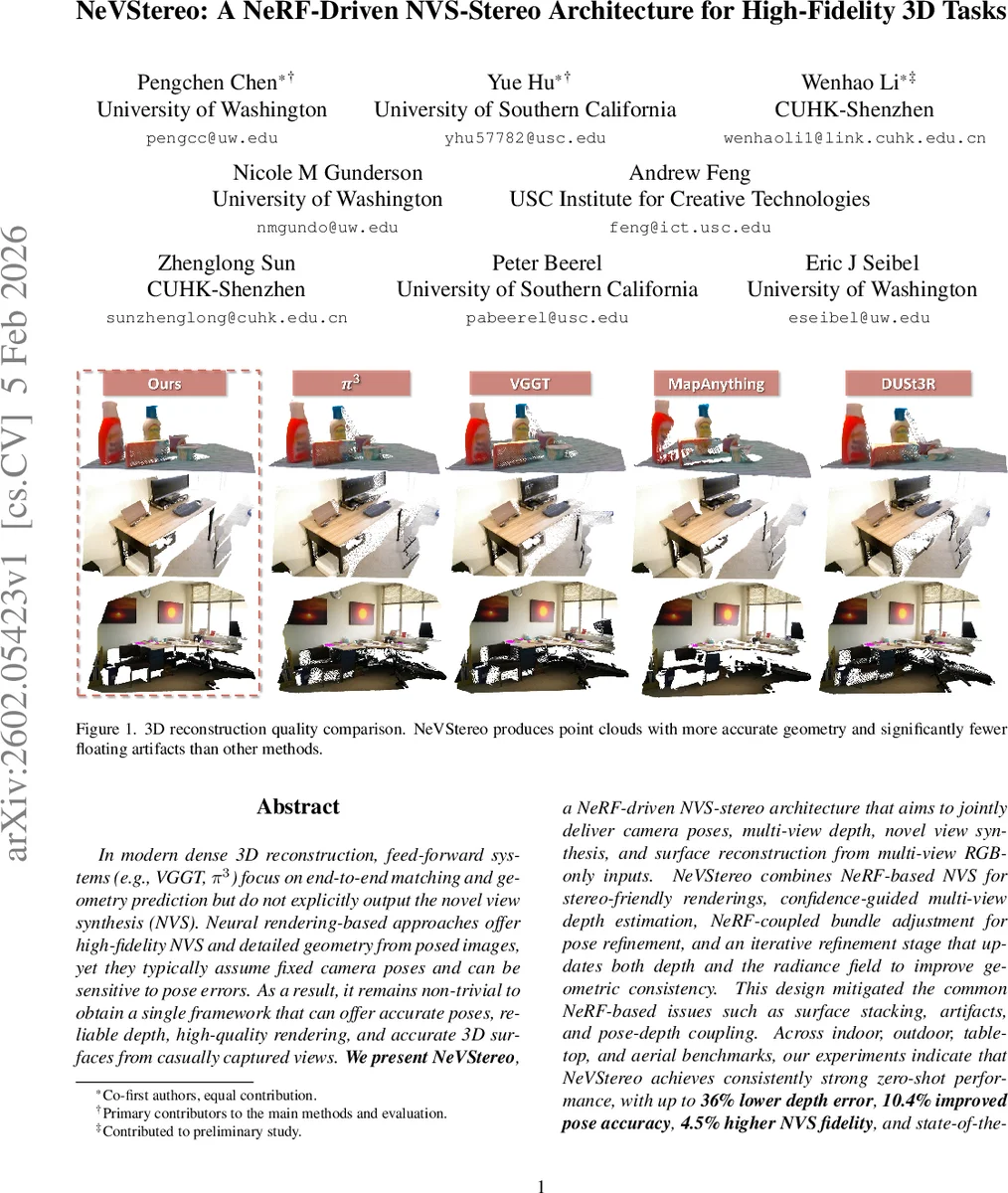

In modern dense 3D reconstruction, feed-forward systems (e.g., VGGT, pi3) focus on end-to-end matching and geometry prediction but do not explicitly output the novel view synthesis (NVS). Neural rendering-based approaches offer high-fidelity NVS and detailed geometry from posed images, yet they typically assume fixed camera poses and can be sensitive to pose errors. As a result, it remains non-trivial to obtain a single framework that can offer accurate poses, reliable depth, high-quality rendering, and accurate 3D surfaces from casually captured views. We present NeVStereo, a NeRF-driven NVS-stereo architecture that aims to jointly deliver camera poses, multi-view depth, novel view synthesis, and surface reconstruction from multi-view RGB-only inputs. NeVStereo combines NeRF-based NVS for stereo-friendly renderings, confidence-guided multi-view depth estimation, NeRF-coupled bundle adjustment for pose refinement, and an iterative refinement stage that updates both depth and the radiance field to improve geometric consistency. This design mitigated the common NeRF-based issues such as surface stacking, artifacts, and pose-depth coupling. Across indoor, outdoor, tabletop, and aerial benchmarks, our experiments indicate that NeVStereo achieves consistently strong zero-shot performance, with up to 36% lower depth error, 10.4% improved pose accuracy, 4.5% higher NVS fidelity, and state-of-the-art mesh quality (F1 91.93%, Chamfer 4.35 mm) compared to existing prestigious methods.

💡 Research Summary

NeVStereo tackles the long‑standing challenge of delivering accurate camera poses, dense multi‑view depth, high‑fidelity novel view synthesis (NVS), and precise surface reconstruction from casually captured RGB images within a single framework. The authors observe that feed‑forward 3D networks (e.g., VGGT, π³) excel at pose and depth prediction but lack an explicit NVS module, while NeRF‑based methods produce photorealistic renderings and detailed geometry but typically assume fixed poses and are sensitive to pose errors. To bridge this gap, they propose an NVS‑Stereo paradigm: use a neural renderer to synthesize stereo‑friendly image pairs, run a state‑of‑the‑art stereo matcher on those pairs to obtain depth, and then feed the depth back to refine both poses and the neural representation iteratively.

The backbone for NVS is ZipNeRF, chosen for its anti‑aliased volumetric rendering, multi‑scale supervision, and proposal‑driven sampling, which together yield smooth textures and geometrically consistent renderings that are well‑suited for downstream stereo. Stereo depth is estimated with FoundationStereo, a large‑scale foundation model that generalizes well across domains and is robust to low texture, specularities, and the synthetic‑real gap introduced by NVS.

A key contribution is the Multi‑View Confidence‑Guided Self‑Supervised RGB‑D optimization (Mv‑CG). For each reference view, the method projects its depth into neighboring views, checks forward and backward consistency (depth difference and reprojection error), and accumulates binary votes. The normalized vote count becomes a per‑pixel confidence map; only pixels with at least a preset number of supporting views are retained, effectively filtering out outliers and large artifacts. This confidence‑weighted depth is then used in an EM‑style loop that alternates between bundle adjustment (pose optimization) and depth refinement. The photometric term follows DROID‑SLAM, while the depth term combines a robust loss on the difference between predicted and observed depths, weighted by confidence, plus a logistic voting loss that encourages a high proportion of geometrically consistent pixels.

After one round of pose‑depth optimization, the refined poses and depth maps supervise a second NeRF training stage. Here the authors introduce depth‑guided Gaussian ray sampling, which concentrates samples near the surface indicated by the refined depth, thereby tightening the geometry of the radiance field without sacrificing rendering quality. The final depth maps are fused into a TSDF volume, and a mesh is extracted and cleaned, yielding high‑quality surface reconstructions.

Extensive experiments compare NeRF‑based and 3D‑Gaussian‑Splatting (3DGS) backbones on NVIDIA‑HOPE and Redwood‑RGBD datasets. NeRF consistently outperforms 3DGS, especially when the initial SfM poses are noisy; 3DGS depth degrades sharply, while NeRF’s low‑frequency artifacts are less harmful to stereo matching. Further analysis shows that NeRF‑based NVS‑Stereo suffers only weak correlation between pose error and depth accuracy, whereas 3DGS exhibits moderate to strong dependence, confirming that NeRF provides more stable depth cues.

Quantitatively, across indoor (ScanNet), outdoor (ETH3D), tabletop (BlendedMVS), and aerial (UAV) benchmarks, NeVStereo achieves up to 36 % lower absolute relative depth error, 10.4 % improvement in pose error (RTE = 0.0215 vs 0.0240), 4.5 % higher NVS PSNR, and state‑of‑the‑art mesh quality (F1 = 91.93 %, Chamfer = 4.35 mm) compared to leading baselines. Qualitative results demonstrate fewer floating artifacts, cleaner point‑cloud stacking, and more faithful surface details.

The paper also discusses limitations: NeRF training remains computationally intensive, and the current confidence voting is static, which may struggle with dynamic scenes. Future work could integrate faster NeRF variants (e.g., Instant‑NGP) and extend the confidence mechanism to handle moving objects, potentially enabling real‑time AR/robotics applications.

In summary, NeVStereo presents a novel, tightly coupled pipeline that unifies neural rendering, stereo depth estimation, confidence‑guided multi‑view fusion, and iterative pose‑depth refinement. By addressing the typical NeRF issues of surface stacking, high‑frequency artifacts, and pose‑depth coupling, it delivers a single system capable of simultaneously providing accurate poses, reliable dense depth, photorealistic novel views, and high‑fidelity 3D meshes—advancing the state of the art in holistic 3D reconstruction.

Comments & Academic Discussion

Loading comments...

Leave a Comment