Breaking Semantic Hegemony: Decoupling Principal and Residual Subspaces for Generalized OOD Detection

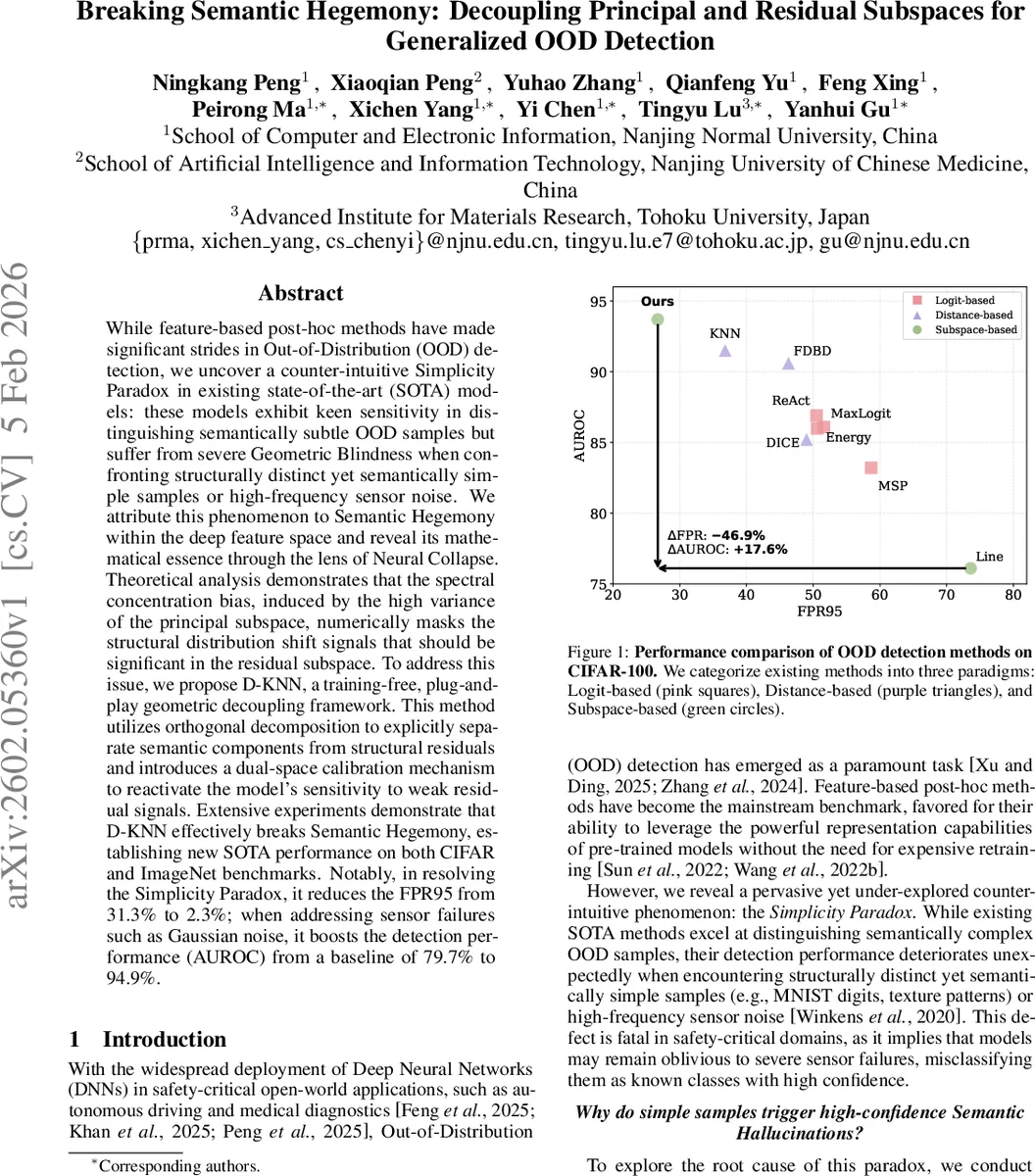

While feature-based post-hoc methods have made significant strides in Out-of-Distribution (OOD) detection, we uncover a counter-intuitive Simplicity Paradox in existing state-of-the-art (SOTA) models: these models exhibit keen sensitivity in distinguishing semantically subtle OOD samples but suffer from severe Geometric Blindness when confronting structurally distinct yet semantically simple samples or high-frequency sensor noise. We attribute this phenomenon to Semantic Hegemony within the deep feature space and reveal its mathematical essence through the lens of Neural Collapse. Theoretical analysis demonstrates that the spectral concentration bias, induced by the high variance of the principal subspace, numerically masks the structural distribution shift signals that should be significant in the residual subspace. To address this issue, we propose D-KNN, a training-free, plug-and-play geometric decoupling framework. This method utilizes orthogonal decomposition to explicitly separate semantic components from structural residuals and introduces a dual-space calibration mechanism to reactivate the model’s sensitivity to weak residual signals. Extensive experiments demonstrate that D-KNN effectively breaks Semantic Hegemony, establishing new SOTA performance on both CIFAR and ImageNet benchmarks. Notably, in resolving the Simplicity Paradox, it reduces the FPR95 from 31.3% to 2.3%; when addressing sensor failures such as Gaussian noise, it boosts the detection performance (AUROC) from a baseline of 79.7% to 94.9%.

💡 Research Summary

The paper uncovers a previously overlooked failure mode of state‑of‑the‑art post‑hoc out‑of‑distribution (OOD) detectors, which the authors term the “Simplicity Paradox.” While modern feature‑based detectors excel at spotting semantically complex OOD examples (e.g., SVHN, LSUN), they dramatically under‑perform on structurally distinct yet semantically simple inputs such as handwritten digits (MNIST, EMNIST) or high‑frequency sensor noise. The authors trace this paradox to a phenomenon they call “Semantic Hegemony”: during the terminal phase of training, deep networks exhibit Neural Collapse, which concentrates almost all feature energy in a low‑dimensional principal subspace spanned by the class means. Consequently, the eigenvalue spectrum is heavily skewed, and the ratio ρ of principal‑to‑residual energy tends to infinity. Standard distance‑based scores (e.g., Euclidean K‑nearest‑neighbor, Mahalanobis) become dominated by the principal components, effectively masking any structural anomalies that reside in the orthogonal residual subspace. This creates a “geometric blind spot” for OOD samples that differ from ID data only in the residual directions.
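The masking effect can be illustrated on synthetic data. The sketch below is not taken from the paper: it assumes ρ is the ratio of summed covariance eigenvalues inside and outside the top principal directions, and the feature scales, the `energy_ratio` helper, and the size of the shift are all hypothetical choices made for illustration.

```python
import numpy as np

def energy_ratio(features, n_principal):
    """Assumed definition of rho: variance in the top-n principal
    directions divided by the variance in all remaining directions."""
    centered = features - features.mean(axis=0)
    # Singular values come back sorted in descending order.
    singular_values = np.linalg.svd(centered, compute_uv=False)
    eigenvalues = singular_values**2 / (len(features) - 1)
    return eigenvalues[:n_principal].sum() / eigenvalues[n_principal:].sum()

rng = np.random.default_rng(0)
# Hypothetical features: 3 high-variance "semantic" axes, 13 near-collapsed axes.
scales = np.array([5.0] * 3 + [0.05] * 13)
id_feats = rng.normal(size=(2000, 16)) * scales
rho = energy_ratio(id_feats, n_principal=3)

# Shift one residual axis by 10 residual standard deviations.
shift = np.zeros(16)
shift[3] = 0.5

# Euclidean distances between pairs of samples, before and after the shift.
base = np.linalg.norm(id_feats[:1000] - id_feats[1000:], axis=1)
moved = np.linalg.norm(id_feats[:1000] + shift - id_feats[1000:], axis=1)
relative_change = np.abs(moved - base).mean() / base.mean()

print(f"rho = {rho:.0f}, relative distance change = {relative_change:.4f}")
```

Under these assumptions, a shift that is extreme by residual standards changes full-space Euclidean distances only marginally, which is the blind spot the summary describes.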

To remedy this, the authors propose Dual‑Space K‑Nearest‑Neighbor (D‑KNN), a training‑free, plug‑and‑play framework that explicitly decouples the feature space into a principal (semantic) subspace and an orthogonal residual (structural) subspace. The pipeline consists of: (1) L2‑normalizing all features onto the unit hypersphere; (2) performing PCA on the normalized training set to obtain a basis V for the principal subspace and defining projection operators P_prin = VVᵀ and P_res = I – P_prin; (3) projecting each test sample into two independent views, z_prin and z_res, by applying the respective projections and re‑projecting onto the hypersphere; (4) computing k‑NN distances in each view, yielding scores s_prin and s_res; (5) calibrating these scores with Z‑scores using the mean and standard deviation of in‑distribution (ID) scores (μ_v, σ_v), which dramatically amplifies residual deviations because σ_res is extremely small under Neural Collapse; (6) fusing the calibrated scores with a weight α (typically 0.5–0.7) to obtain the final anomaly score S = α·ŝ_prin + (1−α)·ŝ_res. No additional model parameters are learned, and the method can be applied to any pre‑trained classifier.
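The six steps can be sketched in NumPy as follows. This is a minimal illustration based only on the description above, not the authors' implementation: the class name `DualSpaceKNN`, the brute-force neighbor search, centering the features before projection, the leave-one-out estimate of (μ_v, σ_v), and the synthetic data are all assumptions.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Project rows onto the unit hypersphere."""
    return x / (np.linalg.norm(x, axis=1, keepdims=True) + eps)

def kth_nn_distance(queries, bank, k, skip_self=False):
    """Euclidean distance from each query to its k-th nearest row of bank."""
    sq = ((queries**2).sum(axis=1)[:, None]
          + (bank**2).sum(axis=1)[None, :]
          - 2.0 * queries @ bank.T)
    dist = np.sqrt(np.clip(sq, 0.0, None))
    dist.sort(axis=1)
    # When the queries are the bank itself, column 0 is the self-match.
    return dist[:, k] if skip_self else dist[:, k - 1]

class DualSpaceKNN:
    def __init__(self, n_components, k=5, alpha=0.5):
        self.n_components, self.k, self.alpha = n_components, k, alpha

    def _views(self, feats):
        z = l2_normalize(feats) - self.mean_   # step 1 (centering is an assumption)
        prin = z @ self.V_ @ self.V_.T         # P_prin = V V^T
        res = z - prin                         # P_res = I - P_prin
        return l2_normalize(prin), l2_normalize(res)  # step 3

    def fit(self, train_feats):
        z = l2_normalize(train_feats)
        self.mean_ = z.mean(axis=0)
        # Step 2: PCA via SVD; rows of vt are the principal directions.
        _, _, vt = np.linalg.svd(z - self.mean_, full_matrices=False)
        self.V_ = vt[: self.n_components].T
        self.banks_ = self._views(train_feats)
        # Step 5 statistics: per-view ID mean and std, estimated leave-one-out.
        self.stats_ = []
        for bank in self.banks_:
            s = kth_nn_distance(bank, bank, self.k, skip_self=True)
            self.stats_.append((s.mean(), s.std() + 1e-12))
        return self

    def score(self, feats):
        z_scores = []
        for view, bank, (mu, sigma) in zip(self._views(feats), self.banks_, self.stats_):
            s = kth_nn_distance(view, bank, self.k)   # step 4
            z_scores.append((s - mu) / sigma)         # step 5
        # Step 6: higher fused score means more anomalous.
        return self.alpha * z_scores[0] + (1 - self.alpha) * z_scores[1]

rng = np.random.default_rng(0)

def make(n, extra_scale):
    """Hypothetical features: 3 semantic dims, 5 weak ID residual dims,
    8 dims that only carry energy when extra_scale is large (OOD)."""
    a = rng.normal(scale=3.0, size=(n, 3))
    b = rng.normal(scale=0.1, size=(n, 5))
    c = rng.normal(scale=extra_scale, size=(n, 8))
    return np.hstack([a, b, c])

train, id_test, ood = make(500, 1e-3), make(200, 1e-3), make(200, 0.3)
detector = DualSpaceKNN(n_components=3, k=5, alpha=0.5).fit(train)
id_scores, ood_scores = detector.score(id_test), detector.score(ood)
```

In this toy setup the OOD samples share the ID semantic distribution and differ only in residual directions, so any separation between `ood_scores` and `id_scores` comes from the calibrated residual view. For real feature banks, an approximate nearest-neighbor index would likely replace the brute-force search.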

Theoretical analysis introduces an “Asymptotic Risk Vanishing” theorem. Assuming an ideal Neural Collapse regime (within‑class covariance → 0) and a non‑zero gap Δ between ID and OOD means in the residual space, the calibrated score for OOD samples diverges while the calibrated ID distribution converges to a standard normal. By Chebyshev’s inequality, the detection risk tends to zero as σ_in → 0, proving that the dual‑space calibration asymptotically guarantees perfect separation.
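One way the Chebyshev step might go is sketched below. It is a simplified reading of the argument rather than the paper's proof: it assumes a deterministic residual gap Δ, and the threshold τ is a choice made here for illustration.

```latex
% Sketch under assumptions: deterministic gap \Delta > 0, threshold \tau chosen here.
\begin{align*}
\hat{s} &= \frac{s - \mu_{\mathrm{in}}}{\sigma_{\mathrm{in}}},
  \qquad \mathbb{E}[\hat{s}_{\mathrm{in}}] = 0, \quad \mathrm{Var}[\hat{s}_{\mathrm{in}}] = 1 \\
\Pr\bigl(\hat{s}_{\mathrm{in}} \ge \tau\bigr)
  &\le \Pr\bigl(|\hat{s}_{\mathrm{in}}| \ge \tau\bigr) \le \frac{1}{\tau^{2}}
  && \text{(Chebyshev)} \\
s_{\mathrm{out}} \ge \mu_{\mathrm{in}} + \Delta
  &\;\Longrightarrow\; \hat{s}_{\mathrm{out}} \ge \frac{\Delta}{\sigma_{\mathrm{in}}} \\
\tau = \frac{\Delta}{\sigma_{\mathrm{in}}}
  &\;\Longrightarrow\; \Pr\bigl(\hat{s}_{\mathrm{in}} \ge \tau\bigr)
  \le \frac{\sigma_{\mathrm{in}}^{2}}{\Delta^{2}}
  \xrightarrow[\sigma_{\mathrm{in}} \to 0]{} 0
\end{align*}
```

With this threshold every OOD sample satisfying the gap assumption is flagged, while the fraction of ID samples flagged is bounded by σ_in²/Δ², which vanishes as the within-class spread collapses.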

Empirically, the authors evaluate D‑KNN on two scales. For CIFAR‑10/100 they use WideResNet‑28‑10, ResNet‑18, and DenseNet‑101 backbones, testing against standard OOD sets (SVHN, LSUN, iSUN, Textures) plus the deliberately simple MNIST/EMNIST and high‑frequency corruptions from CIFAR‑100‑C. For ImageNet‑1K they use a pre‑trained ResNet‑50 and test on seven large‑scale OOD datasets (iNaturalist, ImageNet‑O, NINCO, OpenImage‑O, Places, SUN, Textures). Key results include:
• On the Simplicity Paradox (MNIST/EMNIST), D‑KNN reduces FPR95 from 31.3% to 2.3%, a more than 13‑fold reduction.
• Under high‑frequency noise (CIFAR‑100‑C), AUROC rises from 79.7% to 94.9%.
• Across ImageNet‑O and the other large‑scale OOD sets, average FPR95 drops from 47.0% (baseline) to 15.9% with D‑KNN, while AUROC gains 2–10 percentage points over strong baselines such as Mahalanobis, ReAct, ViM, and standard K‑NN.
• Computational overhead is minimal: only a one‑time PCA and two k‑NN searches per query, with an inference‑time increase of less than 10% compared to vanilla K‑NN.
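For readers unfamiliar with the two metrics quoted above, the sketch below shows one common way to compute them from anomaly scores. It assumes that higher scores mean "more OOD" and that FPR95 is measured at the threshold that retains 95% of ID samples; the function names and the toy scores are hypothetical, and the paper's evaluation code may differ.

```python
import numpy as np

def fpr_at_95_tpr(id_scores, ood_scores):
    """Fraction of OOD samples accepted as ID when 95% of ID samples are accepted."""
    threshold = np.percentile(id_scores, 95)
    return float(np.mean(ood_scores <= threshold))

def auroc(id_scores, ood_scores):
    """Probability that a random OOD sample scores above a random ID sample
    (ties count one half). Pairwise form, suitable for small score arrays."""
    diff = ood_scores[:, None] - id_scores[None, :]
    return float(np.mean(diff > 0) + 0.5 * np.mean(diff == 0))

# Toy anomaly scores, chosen only to make the arithmetic easy to follow.
id_scores = np.array([0.1, 0.2, 0.3, 0.4])
ood_scores = np.array([0.35, 0.9, 1.0, 1.2])

fpr95 = fpr_at_95_tpr(id_scores, ood_scores)   # 1 of 4 OOD scores falls below the threshold
auc = auroc(id_scores, ood_scores)             # 15 of 16 pairs are ordered correctly
print(f"FPR95 = {fpr95:.2f}, AUROC = {auc:.4f}")
```

Lower FPR95 and higher AUROC both indicate better separation, which is why the reported improvements move in opposite directions.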

In summary, the paper identifies a fundamental spectral bias in deep feature representations that hampers OOD detection for structurally simple anomalies, formalizes its cause via Neural Collapse, and offers a simple yet theoretically grounded dual‑space decoupling method that dramatically improves detection performance without any retraining. The work opens avenues for further research into residual‑space modeling, integration with training‑time regularizers that mitigate Semantic Hegemony, and extensions to other modalities such as video or point clouds.
