Multimodal Latent Reasoning via Hierarchical Visual Cues Injection

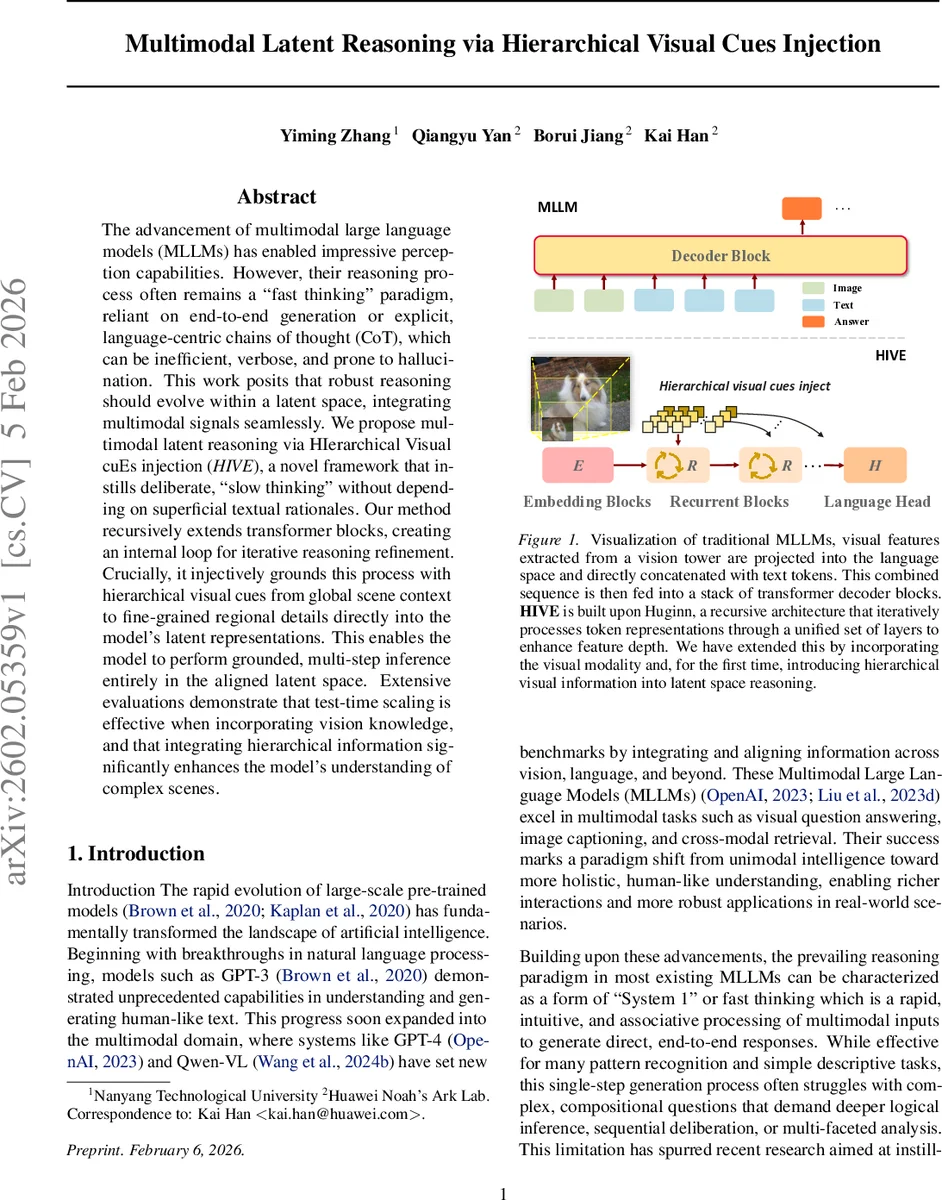

The advancement of multimodal large language models (MLLMs) has enabled impressive perception capabilities. However, their reasoning process often remains a “fast thinking” paradigm, reliant on end-to-end generation or explicit, language-centric chains of thought (CoT), which can be inefficient, verbose, and prone to hallucination. This work posits that robust reasoning should evolve within a latent space, integrating multimodal signals seamlessly. We propose multimodal latent reasoning via HIerarchical Visual cuEs injection (\emph{HIVE}), a novel framework that instills deliberate, “slow thinking” without depending on superficial textual rationales. Our method recursively extends transformer blocks, creating an internal loop for iterative reasoning refinement. Crucially, it injectively grounds this process with hierarchical visual cues from global scene context to fine-grained regional details directly into the model’s latent representations. This enables the model to perform grounded, multi-step inference entirely in the aligned latent space. Extensive evaluations demonstrate that test-time scaling is effective when incorporating vision knowledge, and that integrating hierarchical information significantly enhances the model’s understanding of complex scenes.

💡 Research Summary

The paper introduces HIVE (Multimodal Latent Reasoning via Hierarchical Visual Cues Injection), a novel framework that moves multimodal large language models (MLLMs) away from the conventional “fast‑thinking” single‑pass generation toward a deliberate, “slow‑thinking” process performed entirely in latent space. Traditional MLLMs project visual features from a vision tower into the language embedding space and concatenate them with text tokens, feeding the combined sequence into a stack of decoder blocks. While effective for straightforward tasks, this architecture relies heavily on textual chain‑of‑thought (CoT) prompts for complex reasoning, leading to verbosity, inefficiency, and hallucination.

HIVE addresses these limitations through two key innovations. First, it adopts a loop‑transformer architecture based on Huginn, which consists of three shared modules: an Embedding block (E), a Recurrent block (R), and a Language Head (H). The R block is executed repeatedly, allowing the model to refine its hidden states iteratively without increasing the number of trainable parameters. The number of recurrent iterations is treated as a test‑time scaling factor, enabling flexible depth of reasoning.

Second, HIVE injects visual information hierarchically rather than using a single final‑layer representation. From a pretrained Vision Transformer (ViT) it extracts hidden states at four depths (layers 6, 12, 18, 24). Lower layers retain high‑resolution textures and edges, while higher layers encode global semantics and object relationships. Each selected layer is passed through lightweight patch‑merger modules that project the visual tensors into the LLM’s hidden dimension. During the early recurrent steps, these projected cues are fed into the R block in a “curriculum” order—from coarse‑grained to fine‑grained—so that the model first grounds its reasoning on global scene context and progressively refines it with detailed visual evidence.

The injection schedule adapts to the sampled recurrence depth r. If r ≥ 4, the full hierarchy is injected in the first four iterations, after which the model continues with pure language modeling to polish the answer. If r < 4, a subset of the hierarchy is selected at regular intervals to guarantee that even shallow reasoning receives a representative visual spectrum. Training randomly samples r from a log‑normal Poisson distribution, forcing the model to be robust to varying numbers of reasoning steps. The loss is the expected cross‑entropy over all sampled r, encouraging consistency across depths.

Empirical evaluation spans visual question answering, image captioning, and complex scene‑understanding benchmarks. HIVE consistently outperforms baseline MLLMs that use static visual embeddings and textual CoT, achieving 4–7 % absolute gains on compositional reasoning tasks. Moreover, because reasoning is performed in latent space, token consumption drops by over 30 % compared to explicit CoT prompting. Test‑time scaling experiments demonstrate that adding more visual knowledge during inference further boosts accuracy and reduces hallucination, confirming the effectiveness of the hierarchical visual cues.

In summary, HIVE demonstrates that (1) recursive transformer loops enable depth‑adjustable “slow thinking” without extra parameters, (2) hierarchical visual cue injection provides multi‑scale grounding that stabilizes hidden‑state dynamics, and (3) stochastic recurrence depth during training yields a model that generalizes across a wide range of reasoning budgets. The authors suggest future extensions to video, audio, and dynamic visual‑layer selection, positioning HIVE as a versatile foundation for next‑generation multimodal reasoning systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment