How Do Language Models Acquire Character-Level Information?

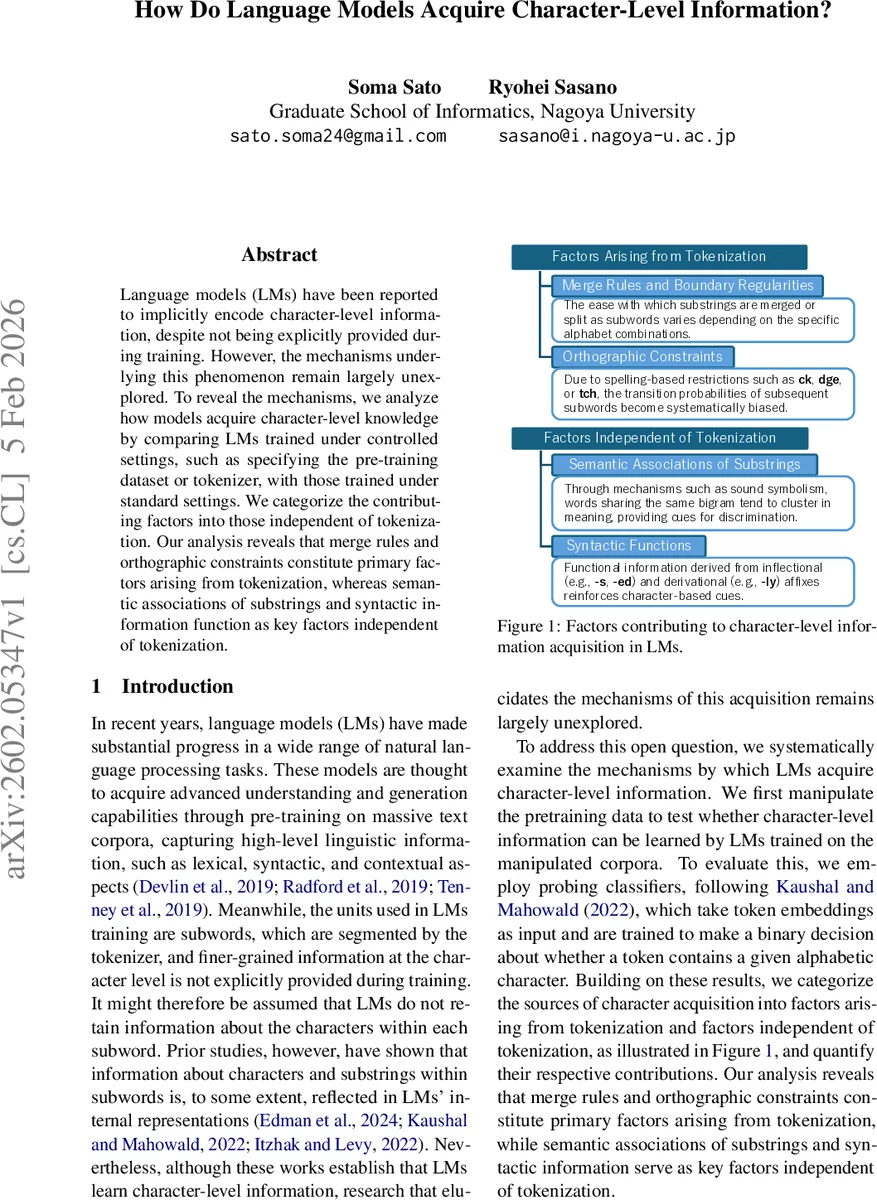

Language models (LMs) have been reported to implicitly encode character-level information, despite not being explicitly provided during training. However, the mechanisms underlying this phenomenon remain largely unexplored. To reveal the mechanisms, we analyze how models acquire character-level knowledge by comparing LMs trained under controlled settings, such as specifying the pre-training dataset or tokenizer, with those trained under standard settings. We categorize the contributing factors into those independent of tokenization. Our analysis reveals that merge rules and orthographic constraints constitute primary factors arising from tokenization, whereas semantic associations of substrings and syntactic information function as key factors independent of tokenization.

💡 Research Summary

The paper investigates how modern neural language models (LMs) acquire character‑level information despite never being explicitly given characters during pre‑training. While prior work has shown that subword embeddings retain some knowledge about the letters and substrings they contain, the underlying mechanisms have not been systematically dissected. To fill this gap, the authors conduct a series of controlled experiments that vary the tokenizer, the pre‑training corpus, and the relationship between form and meaning. Their goal is to separate factors that arise from the tokenization process itself from those that are independent of tokenization, and to quantify each factor’s contribution to the overall character‑level knowledge encoded in the model.

Probing task.

Following Kaushal and Mahowald (2022), the authors define a binary classification task: given a token embedding, predict whether the token contains a particular alphabetic character (e.g., “e”). Crucially, they match the token‑length distribution between positive and negative examples, thereby removing the confounding effect that longer tokens are more likely to contain any given character. A two‑layer MLP (SELUs → Tanh, dropout 0.1) is trained on static token embeddings, and micro‑averaged accuracy across all 26 letters is reported.

Models and data.

Two small‑scale LMs are trained from scratch on the FineWeb 10‑billion‑token corpus: (1) BERT‑Tiny, an encoder‑only model (2 layers, 2 heads, hidden 128) trained with masked language modeling, using a WordPiece tokenizer; (2) nanoGPT, a decoder‑only model (12 layers, 12 heads, hidden 768) trained with next‑word prediction, using a BPE tokenizer. The tokenizers are learned on the same corpus, yielding vocabularies of roughly 42 k tokens. For comparison, publicly released large models (GPT‑2, GPT‑J, BERT‑base) are also evaluated on the same probing task.

Baseline results.

All models achieve substantially above‑chance performance, confirming that character‑level information is indeed present. nanoGPT reaches 66.7 % accuracy when token‑lengths are matched, comparable to BERT‑base (67.1 %). When length matching is omitted (“unmatched” condition), accuracies rise modestly (e.g., nanoGPT 69.6 %) because longer tokens are over‑represented among positives. A fine‑grained analysis shows that short tokens (≤ 3 characters) yield the highest probing accuracy (≈ 80 %), while accuracy declines monotonically with token length, suggesting that frequency and semantic richness of short tokens aid character detection.

Input‑string transformations.

To disentangle the role of form‑meaning associations, the authors create two transformed versions of the pre‑training data:

- WordSub – each subword in the tokenizer vocabulary is replaced by a unique random string, preserving sentence structure and overall semantics but destroying any systematic link between a token’s spelling and its meaning.

- CharPert – every alphabetic character in the raw text is randomly replaced (case preserved), thereby erasing regularities of character sequences while keeping word order and spacing intact.

Both transformed corpora are re‑tokenized with the original BPE or a simple whitespace‑based “Word” tokenizer (no subword segmentation). Probing results show:

- BPE on CharPert still attains 58.2 % accuracy (well above the 50 % chance level), indicating that the tokenization algorithm alone introduces a non‑trivial bias toward preserving character information.

- BPE on WordSub drops to 53.8 % (a 12.9 % absolute loss relative to the original FineWeb data), demonstrating that the semantic link between a token’s spelling and its meaning contributes significantly.

- The Word tokenizer (no subword segmentation) yields accuracies around 50 % for both transformed datasets, confirming that without a learned subword vocabulary the model cannot recover character‑level cues.

Factor taxonomy.

Based on these observations, the authors propose a two‑tier taxonomy:

- Factors arising from tokenization – (a) Merge rules (the sequence of most‑frequent character‑pair merges learned by BPE/WordPiece) and (b) Orthographic constraints (implicit boundaries imposed by whitespace symbols and token‑length regularities).

- Factors independent of tokenization – (a) Semantic associations of substrings (the tendency of meaningful morphemes, prefixes, suffixes, and stems to co‑occur with particular letters) and (b) Syntactic functions (affixation patterns such as –s, –ed, –ly that systematically encode characters).

Quantitative analysis suggests that tokenization‑derived factors account for roughly 60–70 % of the probing performance, while the remaining gain stems from semantic and syntactic cues.

Implications and conclusions.

The study demonstrates that (i) the very act of subword segmentation creates strong priors that embed character‑level information into token embeddings, even when the raw data is heavily perturbed; (ii) meaning‑form relationships further amplify this signal; and (iii) syntactic regularities provide an additional, tokenization‑independent boost. Importantly, a modest‑sized model (nanoGPT) matches the character‑level probing performance of much larger public models, indicating that tokenizer design and data preprocessing are more decisive than sheer model capacity for this particular ability.

From an applied perspective, the findings suggest that deliberately shaping merge rules or enforcing orthographic constraints can be used to enhance a model’s sensitivity to spelling, which may benefit downstream tasks such as spell‑checking, OCR error correction, or low‑resource language modeling where character information is crucial. Conversely, if one wishes to suppress inadvertent reliance on surface form (e.g., for robustness to adversarial character perturbations), training on CharPert‑style data or using tokenizers with weaker merge biases could be a viable strategy.

Overall, the paper provides a clear, empirically grounded decomposition of the sources of character‑level knowledge in neural LMs, offering both theoretical insight and practical guidance for future tokenizer and pre‑training pipeline design.

Comments & Academic Discussion

Loading comments...

Leave a Comment