MentorCollab: Selective Large-to-Small Inference-Time Guidance for Efficient Reasoning

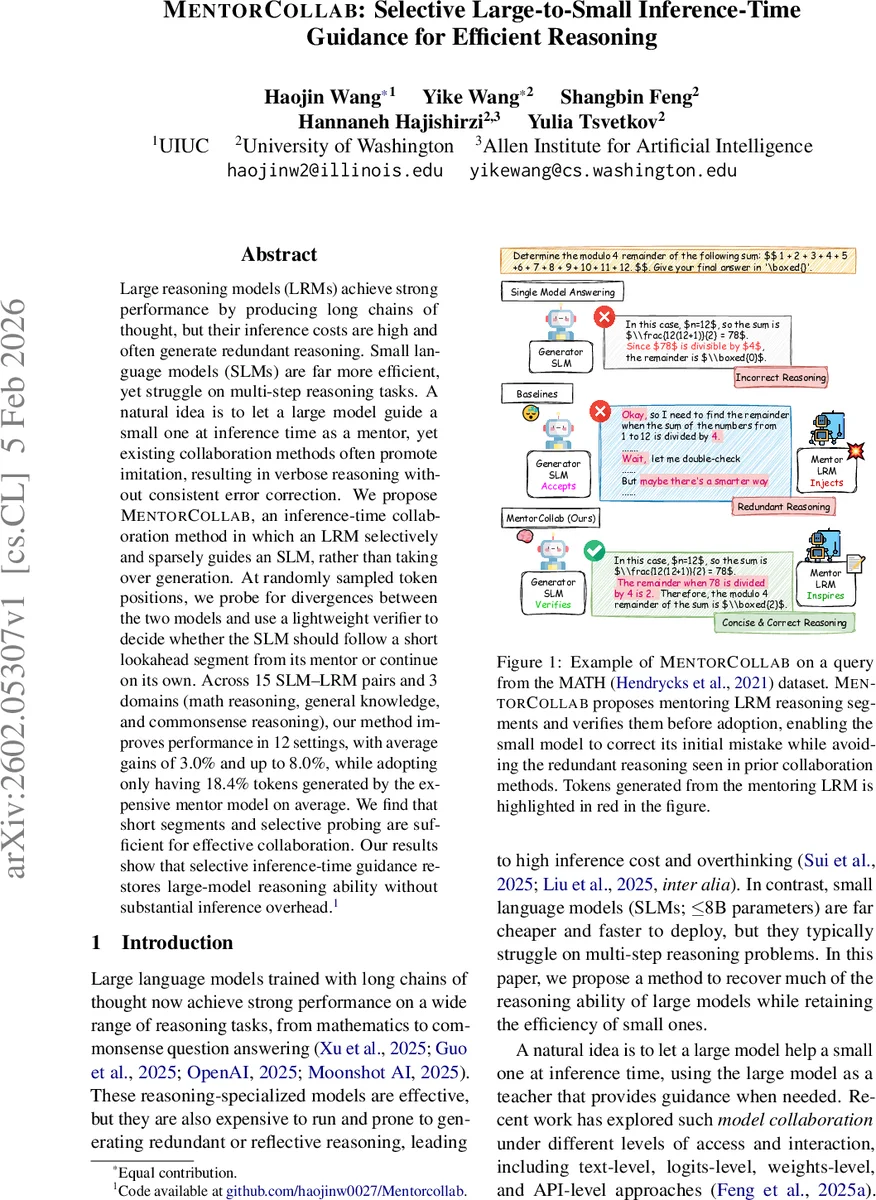

Large reasoning models (LRMs) achieve strong performance by producing long chains of thought, but their inference costs are high and often generate redundant reasoning. Small language models (SLMs) are far more efficient, yet struggle on multi-step reasoning tasks. A natural idea is to let a large model guide a small one at inference time as a mentor, yet existing collaboration methods often promote imitation, resulting in verbose reasoning without consistent error correction. We propose MentorCollab, an inference-time collaboration method in which an LRM selectively and sparsely guides an SLM, rather than taking over generation. At randomly sampled token positions, we probe for divergences between the two models and use a lightweight verifier to decide whether the SLM should follow a short lookahead segment from its mentor or continue on its own. Across 15 SLM–LRM pairs and 3 domains (math reasoning, general knowledge, and commonsense reasoning), our method improves performance in 12 settings, with average gains of 3.0% and up to 8.0%, while adopting only having 18.4% tokens generated by the expensive mentor model on average. We find that short segments and selective probing are sufficient for effective collaboration. Our results show that selective inference-time guidance restores large-model reasoning ability without substantial inference overhead.

💡 Research Summary

MentorCollab tackles the longstanding trade‑off between the strong reasoning abilities of large language models (LLMs) and the efficiency of small language models (SLMs). While large reasoning models (LRMs) excel at chain‑of‑thought (CoT) generation, they are costly to run and often produce redundant or overly verbose reasoning traces. Small models, on the other hand, are cheap and fast but typically fail on multi‑step problems such as mathematics, graduate‑level knowledge questions, or complex commonsense scenarios. Prior attempts at model collaboration have largely focused on imitation: the large model either takes over generation entirely or injects tokens whenever the small model is uncertain. This approach forces the SLM to mimic the LRM’s long CoT, inflating token usage without reliably correcting the SLM’s mistakes.

MentorCollab proposes a fundamentally different paradigm: selective, sparse, inference‑time guidance. The method proceeds in three stages—decision, consultation, and verification. At each decoding step the system samples a Bernoulli variable Bₜ with probability ρ (set to 0.25 in the main experiments). If Bₜ = 1, the system queries the mentor model M and obtains its next token xᴹₜ; the generator G also produces its own token xᴳₜ. A simple word‑level comparison yields a divergence indicator Dₜ (1 if the tokens differ). Only when Dₜ = 1 does the system move to the consultation phase: both models are prompted to generate a short future segment of length L (16 tokens in the paper). These two candidate segments, sᴳ and sᴹ, are then passed to a lightweight verifier V, which decides which segment to adopt.

Two verifier designs are explored. MentorCollab‑FREE treats the generator G itself as the verifier, using a prompt that asks “Which of the following continuations (A or B) is more likely to lead to the correct answer?” without revealing the source model. This requires no extra training but relies on the generator’s own judgment. MentorCollab‑MLP, in contrast, trains a tiny multi‑layer perceptron on a binary classification task: given the generator’s last‑layer hidden state at a verification point, predict whether the mentor’s segment would be the correct one. Training data are collected by running MentorCollab‑FREE on a development split and recording cases where the mentor’s segment leads to a correct answer while the generator’s does not. The MLP is trained with binary cross‑entropy and then reused across all test pairs, eliminating the need for per‑pair fine‑tuning.

The authors evaluate MentorCollab on three domains: (1) MA TH, a benchmark of competition‑level math problems; (2) SuperGPQA, a graduate‑level general‑knowledge dataset covering 285 disciplines; and (3) Com2‑hard‑Intervention, a challenging commonsense reasoning set involving causal interventions. They test fifteen generator–mentor pairs, mixing three SLM families (Llama 3.1‑8B, Gemma‑3‑4B‑PT, Qwen‑3‑8B‑Base) and two instruct‑tuned SLMs (Llama 3.2‑3B‑Instruct, Qwen‑3‑1.7B) with three LRMs (Qwen‑3‑14B, Qwen‑3‑32B, DeepSeek‑R1‑Distilled‑Llama‑70B). Baselines include Average Decoding (averaging top‑5 token distributions), Nudging (switching to mentor when the generator’s next‑token probability falls below a threshold), CoSD (rule‑based verification based on probability ratios), R‑Stitch (entropy‑based switching), and Co‑LLM (a supervised token‑level classifier requiring tokenizer compatibility).

Results show that MentorCollab improves accuracy in 12 of the 15 settings, with average gains of 3 percentage points and peaks up to 8 pp. Importantly, the mentor contributes only about 18.4 % of the total tokens, dramatically reducing inference cost compared to baselines that either fully hand over to the mentor or frequently inject mentor tokens. MentorCollab‑MLP matches or exceeds the performance of the supervised Co‑LLM baseline while avoiding its heavy training requirements and tokenizer constraints. Ablation studies reveal that short mentor segments (4–8 tokens) are sufficient, and that probing frequency around ρ = 0.25 offers the best trade‑off between cost and benefit; higher probing rates increase token usage without proportional gains.

The key insight is that effective collaboration does not require the small model to imitate the large model’s entire reasoning chain. Instead, by detecting divergences at sparse points and allowing the mentor to provide concise, targeted look‑ahead guidance, the system preserves the SLM’s autonomy, corrects its critical errors, and keeps computational overhead low. This makes MentorCollab a practical solution for real‑world deployments where API‑based large‑model calls are expensive, such as mobile assistants, low‑latency chatbots, or large‑scale inference pipelines. The method is lightweight, training‑free (FREE variant) or requires only a one‑time MLP training (MLP variant), and works across heterogeneous model families without needing shared tokenizers or joint fine‑tuning.

In summary, MentorCollab demonstrates that selective, inference‑time mentorship can recover much of the reasoning power of large models while retaining the efficiency of small models, opening a promising avenue for cost‑effective, high‑quality language‑model reasoning.

Comments & Academic Discussion

Loading comments...

Leave a Comment