Fast-SAM3D: 3Dfy Anything in Images but Faster

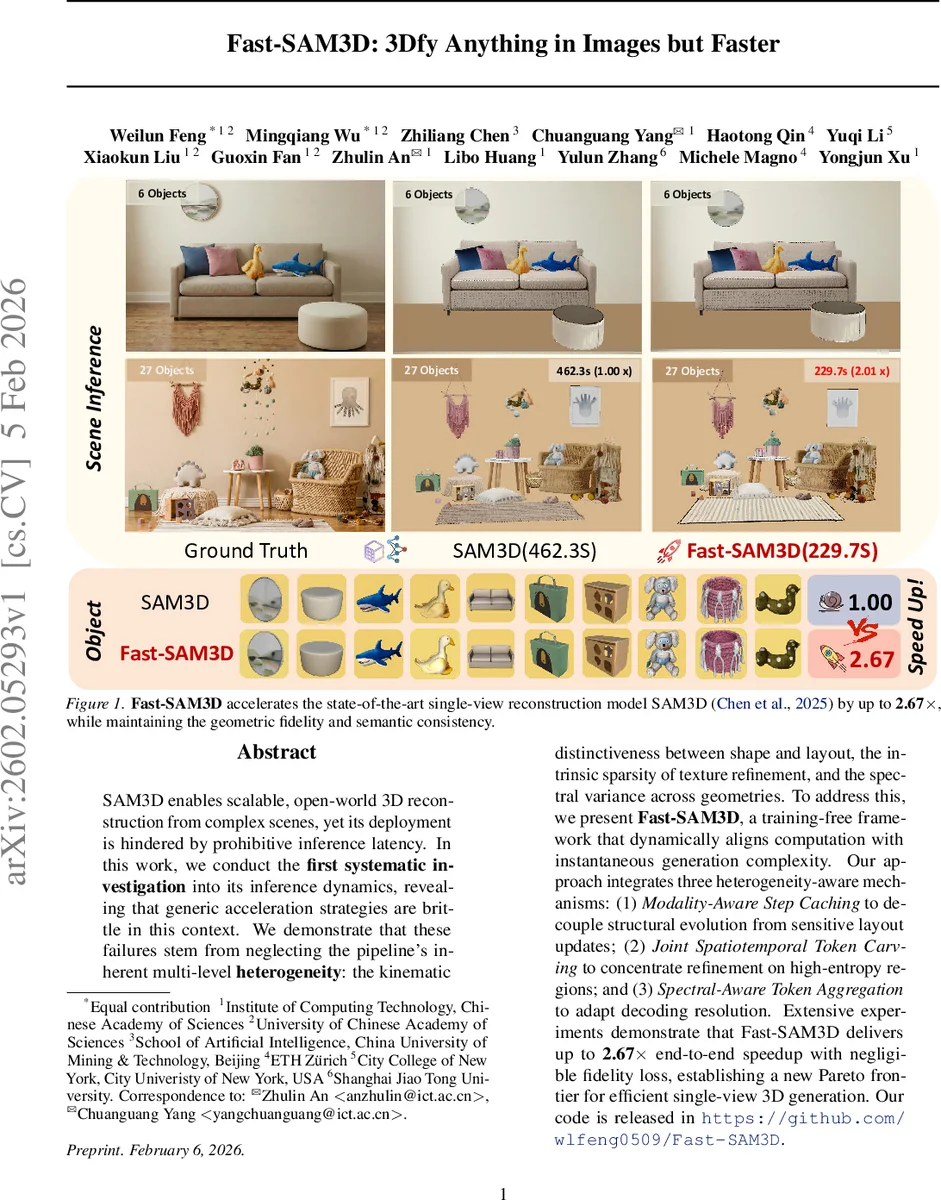

SAM3D enables scalable, open-world 3D reconstruction from complex scenes, yet its deployment is hindered by prohibitive inference latency. In this work, we conduct the \textbf{first systematic investigation} into its inference dynamics, revealing that generic acceleration strategies are brittle in this context. We demonstrate that these failures stem from neglecting the pipeline’s inherent multi-level \textbf{heterogeneity}: the kinematic distinctiveness between shape and layout, the intrinsic sparsity of texture refinement, and the spectral variance across geometries. To address this, we present \textbf{Fast-SAM3D}, a training-free framework that dynamically aligns computation with instantaneous generation complexity. Our approach integrates three heterogeneity-aware mechanisms: (1) \textit{Modality-Aware Step Caching} to decouple structural evolution from sensitive layout updates; (2) \textit{Joint Spatiotemporal Token Carving} to concentrate refinement on high-entropy regions; and (3) \textit{Spectral-Aware Token Aggregation} to adapt decoding resolution. Extensive experiments demonstrate that Fast-SAM3D delivers up to \textbf{2.67$\times$} end-to-end speedup with negligible fidelity loss, establishing a new Pareto frontier for efficient single-view 3D generation. Our code is released in https://github.com/wlfeng0509/Fast-SAM3D.

💡 Research Summary

The paper addresses the prohibitive inference latency of SAM3D, a state‑of‑the‑art single‑view 3D reconstruction model that produces high‑quality, open‑world meshes and textures from an image and a mask. By profiling SAM3D, the authors discover that latency is concentrated in three coupled components: the dual‑stage iterative denoising (structure and texture) and the combinatorial cost of decoding long token sequences in the mesh decoder. Moreover, they find that generic acceleration techniques such as uniform step skipping or random token pruning fail because they ignore three forms of heterogeneity inherent to the pipeline: (i) kinematic distinctiveness between smooth shape evolution and volatile layout updates, (ii) intrinsic sparsity of texture refinement where only a few spatial regions require intensive updates, and (iii) spectral variance across geometries, meaning that simple objects can tolerate aggressive token compression while complex objects cannot.

To exploit these observations, the authors propose Fast‑SAM3D, a training‑free framework that dynamically aligns computation with the instantaneous generation complexity. Fast‑SAM3D consists of three plug‑and‑play modules:

-

Modality‑Aware Step Caching for the Sparse Structure generator. Shape tokens, which evolve smoothly, are extrapolated using a first‑order finite‑difference (Δ) and a Taylor expansion, allowing several diffusion steps to be skipped. Layout tokens, which control global pose, are updated via a momentum‑anchored smoothing that blends a linear extrapolation with the most recent full‑compute anchor, preventing pose drift.

-

Joint Spatiotemporal Token Carving for the Sparse Latent generator. The method computes per‑token temporal magnitude (M) and abruptness (A) together with a frequency‑based complexity score (S_freq) derived from a lightweight FFT. These cues are combined into a unified importance score J_i(t). Only the top‑K tokens with highest J are actively updated at each step; the rest reuse cached tangent updates. An error‑bounded switching criterion (based on instantaneous relative change ε and accumulated error E) decides when a full backbone evaluation is required.

-

Spectral‑Aware Token Aggregation for the mesh decoder. By measuring geometric spectral entropy for each instance, the system decides how aggressively to compress token grids: simple objects receive radical aggregation (large compression), while complex objects receive conservative aggregation (minimal compression). This adapts decoding resolution to the object’s intrinsic frequency content.

Extensive experiments on six objects and 27 scenes demonstrate up to 2.67× end‑to‑end speedup (average 2.3×) with negligible fidelity loss: Chamfer distance increases by less than 3 %, PSNR drops by <0.5 dB, and pose accuracy remains stable thanks to the layout‑aware caching. Ablation studies confirm that each module contributes additively, and the combination yields the best trade‑off. The framework requires no additional training; it can be inserted as a set of wrappers around the existing SAM3D codebase.

The paper’s contribution lies in introducing a heterogeneity‑aware acceleration paradigm for 3D diffusion models, moving beyond uniform optimizations that work in 2D domains. Limitations include the need to manually set hyper‑parameters such as the cache stride, top‑K proportion, and spectral thresholds, and the current focus on single‑view inputs. Future work could extend the approach to multi‑view or video streams, automate hyper‑parameter selection, and integrate with quantization or distillation techniques for further gains.

In summary, Fast‑SAM3D offers a practical, training‑free solution that dramatically reduces inference time while preserving the high‑fidelity, open‑world capabilities of SAM3D, paving the way for real‑time or interactive 3D generation applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment