Affordance-Aware Interactive Decision-Making and Execution for Ambiguous Instructions

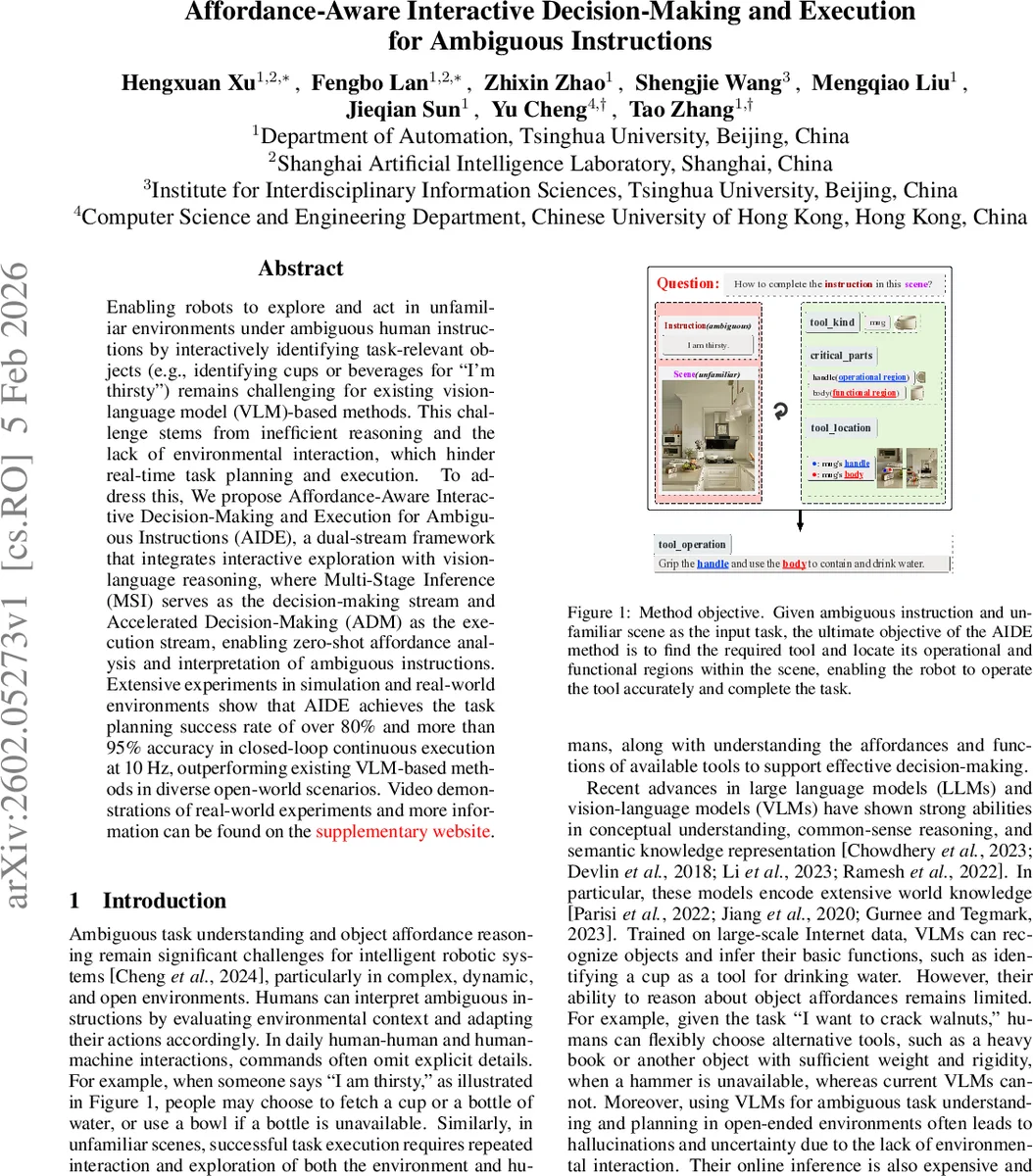

Enabling robots to explore and act in unfamiliar environments under ambiguous human instructions by interactively identifying task-relevant objects (e.g., identifying cups or beverages for “I’m thirsty”) remains challenging for existing vision-language model (VLM)-based methods. This challenge stems from inefficient reasoning and the lack of environmental interaction, which hinder real-time task planning and execution. To address this, We propose Affordance-Aware Interactive Decision-Making and Execution for Ambiguous Instructions (AIDE), a dual-stream framework that integrates interactive exploration with vision-language reasoning, where Multi-Stage Inference (MSI) serves as the decision-making stream and Accelerated Decision-Making (ADM) as the execution stream, enabling zero-shot affordance analysis and interpretation of ambiguous instructions. Extensive experiments in simulation and real-world environments show that AIDE achieves the task planning success rate of over 80% and more than 95% accuracy in closed-loop continuous execution at 10 Hz, outperforming existing VLM-based methods in diverse open-world scenarios.

💡 Research Summary

The paper addresses a fundamental limitation of current vision‑language model (VLM) based robotic planners: they struggle with ambiguous human instructions because they lack efficient reasoning and direct environmental interaction. To overcome this, the authors propose AIDE (Affordance‑Aware Interactive Decision‑Making and Execution), a dual‑stream framework that couples high‑level decision making with low‑latency execution.

AIDE consists of two complementary streams. The Multi‑Stage Inference (MSI) stream is invoked only when a novel situation arises or when the execution stream cannot locate a suitable tool. MSI employs a Multimodal Chain‑of‑Thought (MM‑CoT) module that leverages GPT‑5’s multimodal reasoning together with state‑of‑the‑art visual models (YOLO‑World for detection and SAM2 for segmentation). The MM‑CoT first predicts the target tool type and its critical parts (e.g., handle, body), then the visual models locate candidate objects in the scene, and finally GPT‑5 selects the most appropriate instance. The resulting task‑grounding output (tool label, location, operational and functional regions) is stored in an Instruction‑Tool Relationship Space.

The Relationship Space is built by pairing each ambiguous instruction with three types of scene images (synthetic, real‑world, and augmented) and generating a task‑planning entry via MM‑CoT. For each entry, GPT‑5 scores affordances along X dimensions, producing an affordance vector that quantifies how well a tool can satisfy the instruction. These vectors are clustered (k‑means) to capture category‑level affordances, which naturally induce many‑to‑many links among instructions, tools, and even between tools themselves. This structure mitigates hallucinations and provides a robust cross‑modal representation for rapid retrieval.

Retrieval is handled by the Efficient Retrieval Scheme (ERS), which uses depth‑first search and vector similarity to fetch the most relevant planning results from the Relationship Space.

The Accelerated Decision‑Making (ADM) stream runs continuously at 10 Hz, forming a closed‑loop controller. ADM repeatedly queries ERS for candidate plans, applies an Interactive Exploration Policy, and decides among three motion strategies: (1) visible exploration – approach a region where the target tool is in view, (2) in‑visible exploration – when the tool is nearby but not directly reachable, the robot formulates an intermediate sub‑instruction to enter the region, and (3) no exploration – directly manipulate the operational and functional parts of the identified tool. Each action updates the scene perception, feeding new data back into MM‑CoT and ERS, thus allowing the robot to adapt on the fly.

The authors constructed a comprehensive dataset comprising ambiguous instructions, three scene variants per instruction, and validated task‑grounding results. They evaluated AIDE in both simulation and on a real robot platform across 400 test scenarios involving ambiguous commands such as “I’m thirsty.” Results show that AIDE achieves over 80 % task‑planning success and more than 95 % accuracy in continuous execution, while maintaining a real‑time decision frequency of 10 Hz. Compared to prior VLM‑based planners, AIDE demonstrates superior robustness to instruction ambiguity, reduced hallucination, and the ability to handle open‑world environments with unseen objects.

Key contributions include:

- A dual‑stream architecture that separates expensive reasoning (MSI) from fast execution (ADM).

- A multimodal chain‑of‑thought module that integrates large‑scale language reasoning with precise visual grounding.

- An affordance‑driven Instruction‑Tool Relationship Space that encodes many‑to‑many associations and supports efficient retrieval.

- An interactive exploration policy that enables the robot to actively gather missing information and adapt its motion strategy in real time.

The paper concludes that integrating affordance reasoning with interactive exploration bridges the gap between high‑level language understanding and low‑level robot control, enabling robots to interpret and act upon ambiguous human instructions in unfamiliar settings. Future work will explore richer human‑robot dialogue for clarification, scaling to multi‑step tasks such as cooking or assembly, and continual learning of affordance representations to further improve generalization.

Comments & Academic Discussion

Loading comments...

Leave a Comment